VRGaussianAvatar: Integrating 3D Gaussian Avatars into VR

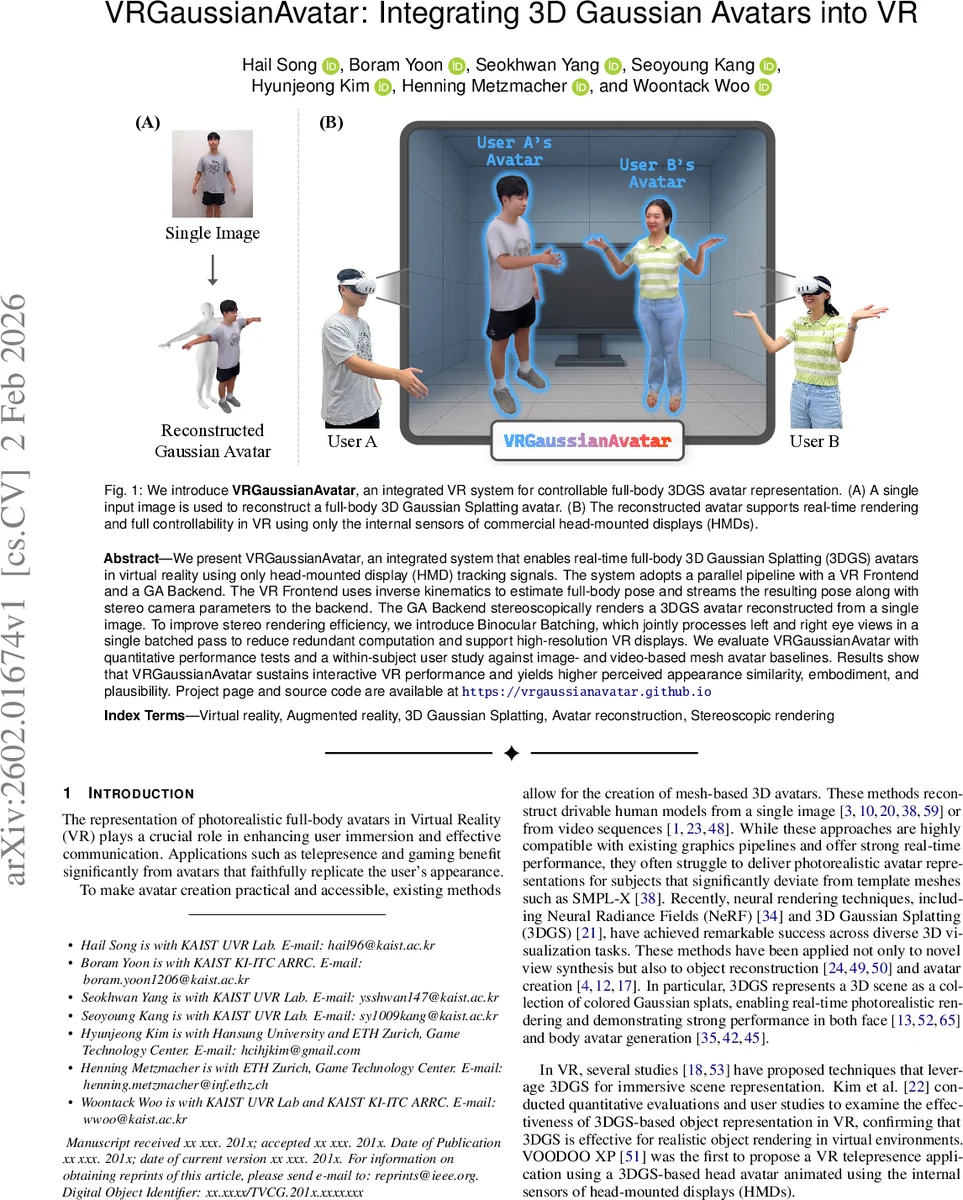

We present VRGaussianAvatar, an integrated system that enables real-time full-body 3D Gaussian Splatting (3DGS) avatars in virtual reality using only head-mounted display (HMD) tracking signals. The system adopts a parallel pipeline with a VR Frontend and a GA Backend. The VR Frontend uses inverse kinematics to estimate full-body pose and streams the resulting pose along with stereo camera parameters to the backend. The GA Backend stereoscopically renders a 3DGS avatar reconstructed from a single image. To improve stereo rendering efficiency, we introduce Binocular Batching, which jointly processes left and right eye views in a single batched pass to reduce redundant computation and support high-resolution VR displays. We evaluate VRGaussianAvatar with quantitative performance tests and a within-subject user study against image- and video-based mesh avatar baselines. Results show that VRGaussianAvatar sustains interactive VR performance and yields higher perceived appearance similarity, embodiment, and plausibility. Project page and source code are available at https://vrgaussianavatar.github.io.

💡 Research Summary

VRGaussianAvatar presents an end‑to‑end system that brings photorealistic full‑body 3D Gaussian Splatting (3DGS) avatars into immersive virtual reality using only the internal sensors of a commercial head‑mounted display (HMD). The architecture is split into a VR Frontend (implemented in Unity) and a Gaussian Avatar (GA) Backend (implemented in Python). The Frontend receives 6‑DoF head pose and hand tracking from the HMD, runs an off‑the‑shelf inverse‑kinematics (IK) solver (Final IK) to estimate a SMPL‑X‑compatible full‑body pose θ, and constructs per‑eye world‑to‑camera transforms (T_CW,L and T_CW,R) together with shared intrinsics K. These data, together with a monotonically increasing frame ID, are streamed to the Backend each frame.

The Backend holds a pre‑computed 3DGS representation of the user’s avatar, which is obtained offline from a single portrait image using the Large Animatable Human Model (LHM). LHM extracts body and head features, tokenises them, and feeds them into a Multimodal Body‑Head Transformer (MBHT). The decoder outputs a canonical set of Gaussians χ (centroids, anisotropic scales, orientations, opacities, and spherical‑harmonic appearance), SMPL‑X rig weights w_ik, and per‑bone rigid transforms T_k(·). Because the Gaussians are rigged, applying the online pose θ simply re‑positions each Gaussian via the corresponding bone transform, enabling real‑time animation.

A key contribution is “Binocular Batching”, a stereoscopic rendering strategy that processes the left‑ and right‑eye views in a single GPU batch. Instead of rendering each eye sequentially (duplicating memory transfers, kernel launches, and cache warm‑up), the method uploads the static Gaussian attributes once, then evaluates eye‑specific projection and visibility for both eyes within the same pass. This halves the fixed overhead, improves cache coherence, and yields a 30 % reduction in per‑frame rendering time on a consumer‑grade GPU, allowing 90 fps at 2160 × 1200 per‑eye resolution.

Communication between Frontend and Backend is performed over localhost with a lightweight protocol; the per‑frame payload is under a kilobyte, resulting in sub‑millisecond network latency. The system was benchmarked against two baselines: an image‑based mesh avatar and a video‑based mesh avatar, both built on SMPL‑X and driven by the same HMD‑only tracking. Quantitative metrics (frame rate, latency, GPU memory) show that VRGaussianAvatar consistently outperforms the baselines.

A within‑subject user study with 24 participants evaluated perceived appearance similarity, virtual embodiment, and plausibility. Participants controlled avatars of themselves and of others using the same HMD inputs. The 3DGS avatars received significantly higher scores across all dimensions, with embodiment improving by roughly 18 % over mesh baselines. Subjective comments highlighted the natural look of the Gaussian splats and the smooth motion despite the limited tracking data.

In summary, the paper delivers a practical pipeline that integrates single‑image 3DGS avatar reconstruction, HMD‑only pose estimation, and an efficient binocular rendering scheme to achieve real‑time, high‑fidelity full‑body avatars in VR. The approach is compatible with existing VR hardware, requires no external capture rigs, and opens the door for more immersive telepresence, social VR, and gaming experiences where photorealistic self‑representation is essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment