HuPER: A Human-Inspired Framework for Phonetic Perception

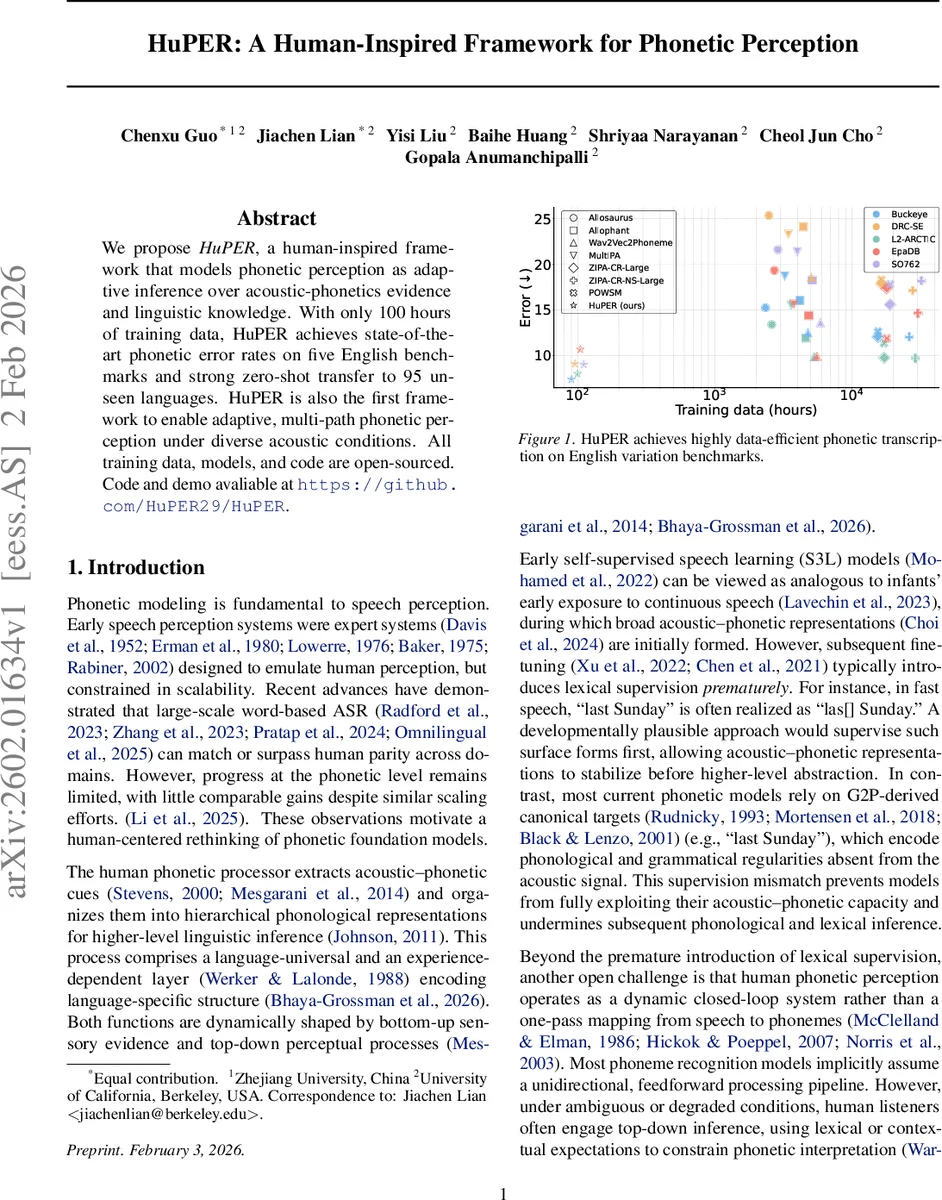

We propose HuPER, a human-inspired framework that models phonetic perception as adaptive inference over acoustic-phonetics evidence and linguistic knowledge. With only 100 hours of training data, HuPER achieves state-of-the-art phonetic error rates on five English benchmarks and strong zero-shot transfer to 95 unseen languages. HuPER is also the first framework to enable adaptive, multi-path phonetic perception under diverse acoustic conditions. All training data, models, and code are open-sourced. Code and demo avaliable at https://github.com/HuPER29/HuPER.

💡 Research Summary

HuPER (Human‑Perceptual Phonetic Encoder) is a novel speech‑perception framework that explicitly models the two‑stage, closed‑loop nature of human phonetic processing. The authors argue that existing automatic speech recognition (ASR) systems treat phoneme recognition as a single feed‑forward mapping from acoustic signal to phoneme labels, whereas human listeners continuously integrate bottom‑up acoustic‑phonetic evidence with top‑down linguistic expectations, dynamically selecting the most reliable pathway depending on signal quality, context, and task demands.

The architecture consists of four main components:

-

HuPER‑Recognizer – a WaveLM‑Large model fine‑tuned on a small human‑annotated phone corpus (TIMIT) using CTC loss. To overcome the scarcity of phone‑level labels, the authors introduce a self‑training pipeline (Algorithm 1) that generates pseudo‑phone labels on a large transcript‑only corpus (LibriSpeech). A “Corrector” network learns edit operations (keep, delete, substitute, insert) that transform canonical G2P‑derived phoneme sequences into acoustically grounded phone proxies. The corrected pseudo‑labels are then used to re‑train the recognizer, yielding a language‑general acoustic‑phonetic encoder.

-

Doubly Robust Risk Correction (DRRC) – The self‑training process is formalized as missing‑label learning. The true risk R(θ) depends on latent true phone labels Y, which are observed only in the small labeled set. By introducing a propensity function g*(z, ŷ) that models the probability of observing Y given the auxiliary G2P transcription Z and the teacher prediction ŷ, the authors derive a corrected loss C_g(W) that unbiasedly estimates R(θ) provided either the propensity model is correct or the proxy labels are accurate. Theorem 3.1 (informal) proves consistency of the empirical estimator under either condition, offering a solid statistical guarantee for the self‑training scheme.

-

HuPER‑Perceiver – Instead of using only the 1‑best phone sequence, the recognizer outputs a weighted phone lattice Π_θ(X). This lattice preserves uncertainty and is composed with a phone‑to‑word lexicon L and a word‑level language model G via weighted finite‑state transducer (WFST) composition. Decoding is performed by extracting the shortest path in Π_θ(X) ∘ L ∘ G, yielding a word transcript. This modular design allows swapping lexicons or language models without retraining the acoustic encoder.

-

Dysfluent WFST – To handle non‑fluent speech (insertions, deletions, substitutions), a constraint WFST H(U) is compiled from an external reference text U (either a provided transcript or the Perceiver’s own 1‑best hypothesis). H(U) encodes a bounded set of plausible phone realizations around the canonical pronunciation while permitting dysfluent edits, enabling top‑down guidance in challenging conditions.

-

HuPER‑Scheduler – The central planner monitors frame‑level posterior margins and normalized entropy to compute a distortion score d_t per frame, which is aggregated into an utterance‑level evidence score s(X) (Equation 8). If s(X) ≤ τ (a predefined threshold), the system follows a pure bottom‑up path, directly outputting the shortest path in the phone lattice. If s(X) > τ, the Scheduler activates the Dysfluent WFST route, either using an external reference R or the Perceiver’s own hypothesis as the constraint. This dynamic routing mirrors human listeners who rely on acoustic evidence when it is clear and fall back on lexical expectations when the signal is degraded.

Experimental Evaluation

Three tasks are evaluated: (1) standard speech‑only transcription with strong acoustic evidence, (2) transcription under varying signal quality where the Scheduler must choose between bottom‑up only and combined bottom‑up/top‑down paths, and (3) transcription with an explicit reference text. HuPER achieves an average phoneme error rate (PFER) of 8.82 across five English benchmarks, surpassing prior self‑supervised learning (S3L) baselines despite using only 100 hours of labeled data. Zero‑shot transfer to 95 unseen languages is reported as “strong,” demonstrating that the language‑universal acoustic‑phonetic encoder generalizes well. Ablation studies confirm that the DRRC‑based self‑training and the adaptive Scheduler each contribute significantly to robustness, especially under noisy or dysfluent conditions where the system automatically switches to the top‑down constrained path, reducing error rates dramatically.

All code, pretrained models, and training data are released under an open‑source license on GitHub (https://github.com/HuPER29/HuPER), facilitating reproducibility and further research.

Key Contributions

- First unified computational framework that explicitly models human‑inspired, multi‑path phonetic perception.

- Introduction of a theoretically grounded DRRC self‑training pipeline that mitigates label bias and missing‑label issues.

- Adaptive Scheduler that quantifies evidence distortion and dynamically selects between bottom‑up and top‑down inference routes.

- Demonstration of data‑efficient state‑of‑the‑art phoneme recognition (100 h) and strong multilingual zero‑shot transfer.

Overall, HuPER bridges the gap between human cognitive models of speech perception and modern deep learning ASR systems, offering a flexible, robust, and theoretically sound approach that could influence future directions in speech technology.

Comments & Academic Discussion

Loading comments...

Leave a Comment