What Do Agents Learn from Trajectory-SFT: Semantics or Interfaces?

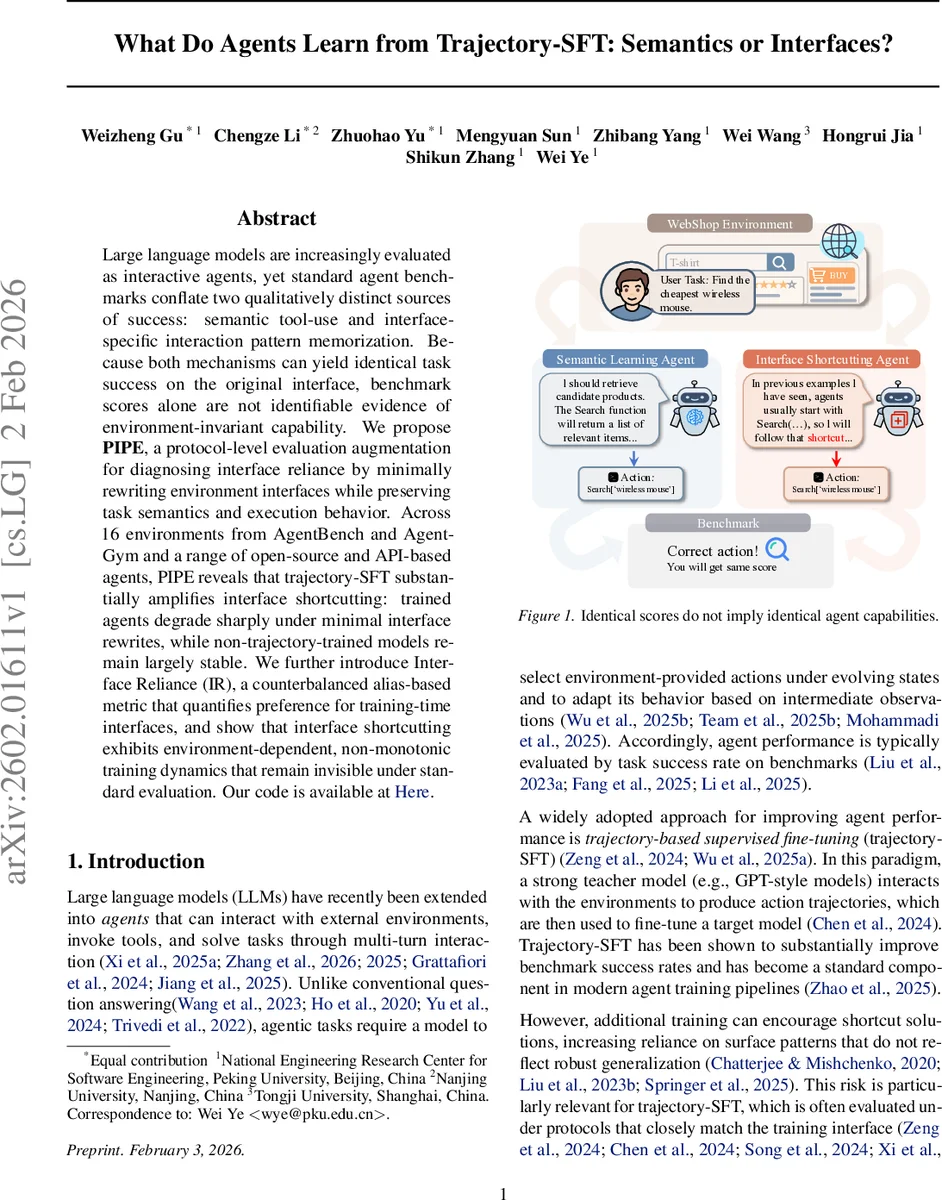

Large language models are increasingly evaluated as interactive agents, yet standard agent benchmarks conflate two qualitatively distinct sources of success: semantic tool-use and interface-specific interaction pattern memorization. Because both mechanisms can yield identical task success on the original interface, benchmark scores alone are not identifiable evidence of environment-invariant capability. We propose PIPE, a protocol-level evaluation augmentation for diagnosing interface reliance by minimally rewriting environment interfaces while preserving task semantics and execution behavior. Across 16 environments from AgentBench and AgentGym and a range of open-source and API-based agents, PIPE reveals that trajectory-SFT substantially amplifies interface shortcutting: trained agents degrade sharply under minimal interface rewrites, while non-trajectory-trained models remain largely stable. We further introduce Interface Reliance (IR), a counterbalanced alias-based metric that quantifies preference for training-time interfaces, and show that interface shortcutting exhibits environment-dependent, non-monotonic training dynamics that remain invisible under standard evaluation. Our code is available at https://anonymous.4open.science/r/What-Do-Agents-Learn-from-Trajectory-SFT-Semantics-or-Interfaces--0831/.

💡 Research Summary

The paper tackles a subtle but critical problem in the evaluation of large‑language‑model (LLM) agents: current benchmark scores conflate two fundamentally different sources of success—semantic learning of tool functionality and memorization of the exact interface surface forms used during training (interface shortcuts). Trajectory‑based supervised fine‑tuning (trajectory‑SFT) has become a standard way to boost agent performance, yet it is unclear whether the observed gains stem from genuine understanding of tool semantics or from over‑fitting to the specific action names and invocation patterns present in the training trajectories.

To disentangle these effects, the authors introduce PIPE (Perturb Interface Protocol for Evaluation). PIPE works by minimally rewriting only the interface specification of an environment while leaving the underlying actions, their functional descriptions, and the environment dynamics untouched. Two families of perturbations are defined: (1) synonym‑based, where action names are replaced by semantically equivalent alternatives, and (2) symbol‑based, where names become meaningless tokens. Because the functional description remains unchanged, any performance drop under these perturbations can be attributed to reliance on the original surface form rather than to increased task difficulty.

The paper formalizes a performance gap Δ = Score(original) – Score(perturbed) and proposes a counter‑balanced metric called Interface Reliance (IR) that quantifies an agent’s dependence on the training‑time interface. A large Δ or high IR indicates strong shortcut behavior; a small Δ suggests the agent has learned to use tools based on their semantics and can adapt to renamed interfaces.

The authors conduct extensive experiments on 16 environments drawn from AgentBench and AgentGym, covering both open‑source models (LLaMA‑2‑based AgentLM, Qwen‑3, Gemma) and commercial API models (GPT‑3.5, GPT‑4o‑mini, GPT‑5‑mini). Each model is evaluated under three conditions: the original interface, synonym‑perturbed, and symbol‑perturbed. They first verify that PIPE does not uniformly increase intrinsic task difficulty: untrained agents show negligible average Δ, sometimes even improving on certain environments, indicating that the perturbations preserve the core problem.

When trajectory‑SFT is applied, the results change dramatically. Trained agents experience substantial performance degradation under both synonym and symbol perturbations, with average Δ ranging from 0.25 to 0.40 (i.e., a 25‑40 % drop in success rate). Symbol‑based changes tend to cause the largest drops, confirming that agents have learned to rely on exact action names rather than on the functional meaning conveyed in the description. This effect is consistent across both open‑source and proprietary models, suggesting that interface shortcutting is a general phenomenon of trajectory‑SFT, not limited to any particular architecture.

Further analysis shows that the severity of shortcutting varies with environment complexity and is non‑monotonic across training epochs. Importantly, the authors demonstrate that the shortcut behavior is not inevitable: by augmenting the training data with diverse interface aliases, the IR metric can be reduced, indicating that agents can be nudged toward more semantic learning.

The contributions are threefold: (1) exposing the ambiguity in standard benchmark evaluation where success rates alone cannot distinguish semantic competence from interface memorization; (2) providing the PIPE protocol and IR metric as practical tools for diagnosing interface reliance without altering task difficulty; (3) empirically showing that trajectory‑SFT amplifies interface shortcutting, with the effect modulated by environment characteristics and training design.

In conclusion, the paper argues that future agent evaluation must go beyond raw success rates and incorporate diagnostics like PIPE to ensure that reported improvements reflect genuine, environment‑invariant capabilities. The work opens avenues for training strategies that explicitly discourage interface over‑fitting, such as interface‑diverse data augmentation, meta‑learning for interface generalization, and the design of new benchmarks that systematically vary interface specifications.

Comments & Academic Discussion

Loading comments...

Leave a Comment