UV-M3TL: A Unified and Versatile Multimodal Multi-Task Learning Framework for Assistive Driving Perception

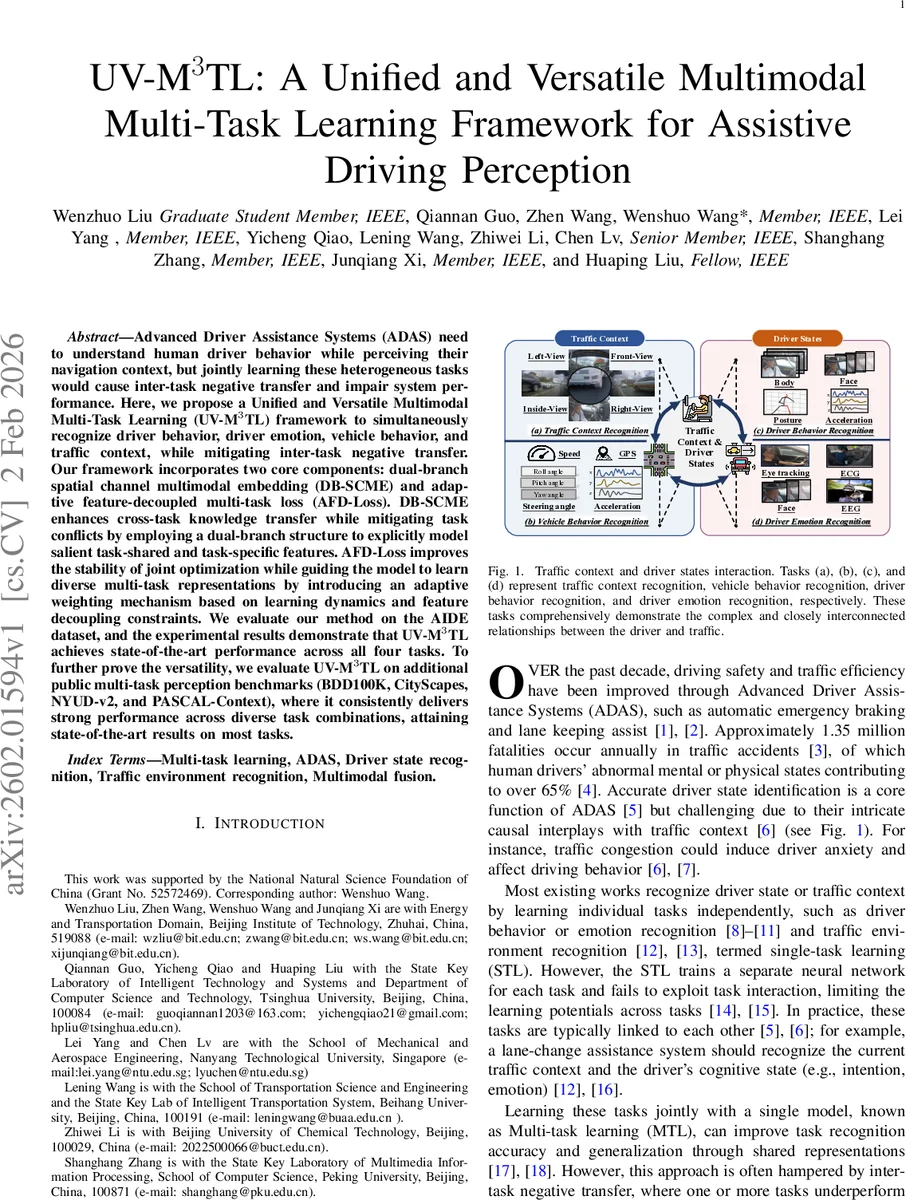

Advanced Driver Assistance Systems (ADAS) need to understand human driver behavior while perceiving their navigation context, but jointly learning these heterogeneous tasks would cause inter-task negative transfer and impair system performance. Here, we propose a Unified and Versatile Multimodal Multi-Task Learning (UV-M3TL) framework to simultaneously recognize driver behavior, driver emotion, vehicle behavior, and traffic context, while mitigating inter-task negative transfer. Our framework incorporates two core components: dual-branch spatial channel multimodal embedding (DB-SCME) and adaptive feature-decoupled multi-task loss (AFD-Loss). DB-SCME enhances cross-task knowledge transfer while mitigating task conflicts by employing a dual-branch structure to explicitly model salient task-shared and task-specific features. AFD-Loss improves the stability of joint optimization while guiding the model to learn diverse multi-task representations by introducing an adaptive weighting mechanism based on learning dynamics and feature decoupling constraints. We evaluate our method on the AIDE dataset, and the experimental results demonstrate that UV-M3TL achieves state-of-the-art performance across all four tasks. To further prove the versatility, we evaluate UV-M3TL on additional public multi-task perception benchmarks (BDD100K, CityScapes, NYUD-v2, and PASCAL-Context), where it consistently delivers strong performance across diverse task combinations, attaining state-of-the-art results on most tasks.

💡 Research Summary

The paper introduces UV‑M³TL, a unified multimodal multi‑task learning framework designed for advanced driver assistance systems (ADAS) that must simultaneously understand driver behavior, driver emotion, vehicle dynamics, and traffic context. The authors identify two major challenges in existing multi‑task approaches: (1) negative transfer caused by heterogeneous tasks with divergent objectives and data distributions, and (2) optimization imbalance due to differing loss scales and convergence speeds. To address these, UV‑M³TL incorporates two novel components.

First, the Dual‑Branch Spatial‑Channel Multimodal Embedding (DB‑SCME) processes multimodal inputs (multi‑view exterior cameras, cabin cameras, facial images, physiological signals, GPS, speed, steering angle, LiDAR, etc.) through a spatial‑channel attention mechanism that highlights salient regions and channels. The embedding then splits into a shared branch that extracts common semantic cues useful across tasks (e.g., road geometry, general motion patterns) and a task‑specific branch that preserves distinctive cues (e.g., facial expressions, eye gaze, vehicle acceleration). By explicitly separating shared and exclusive representations across both spatial and channel dimensions, DB‑SCME mitigates feature conflicts while still enabling positive knowledge transfer.

Second, the Adaptive Feature‑Decoupled Multi‑Task Loss (AFD‑Loss) introduces a dynamic weighting scheme based on learning dynamics (loss reduction rate, gradient variance) and a feature‑decoupling regularizer that penalizes similarity between shared and task‑specific feature vectors (implemented as a cosine similarity term). The dynamic weighting prevents any single task from dominating the gradient flow, especially early in training when loss magnitudes differ widely, and reallocates emphasis toward slower‑converging tasks later on. The decoupling term forces the two branches to learn orthogonal representations, reducing redundancy and encouraging each task to benefit from distinct information.

The authors evaluate UV‑M³TL on five benchmark datasets: the newly released AIDE dataset (which contains synchronized multimodal recordings and annotations for driver behavior, driver emotion, vehicle behavior, and traffic context) and four public multi‑task perception suites—BDD100K, CityScapes, NYUD‑v2, and PASCAL‑Context. On AIDE, UV‑M³TL achieves state‑of‑the‑art performance on all four tasks, delivering average accuracy improvements ranging from 1.4 % to 13.5 % over prior methods. An ablation study shows that removing either DB‑SCME or AFD‑Loss degrades performance by 3–5 %, confirming the complementary nature of the two modules. Across the four additional datasets, which involve dense prediction tasks such as semantic segmentation, depth estimation, surface normal prediction, and panoptic segmentation, UV‑M³TL consistently outperforms recent multi‑task baselines (e.g., MMTL‑UniAD, AdaMT‑Net, InvPT) on most metrics (mIoU, mAcc, RMSE, etc.).

The paper’s contributions are fourfold: (1) a unified multimodal MTL framework that jointly tackles driver‑centric and environment‑centric tasks, (2) the DB‑SCME module that adaptively balances shared and task‑specific feature extraction across spatial and channel axes, (3) the AFD‑Loss that leverages learning‑progress‑aware weighting together with a feature‑decoupling regularizer to stabilize joint optimization, and (4) extensive empirical validation demonstrating both effectiveness and versatility across diverse scenarios.

In summary, UV‑M³TL offers a practical solution for real‑world ADAS where heterogeneous sensor streams and multiple perception objectives must be processed jointly. The approach advances the state of the art by explicitly addressing negative transfer and optimization imbalance, and it opens avenues for future work on real‑time deployment, model compression, and incorporation of additional physiological modalities such as EEG or galvanic skin response.

Comments & Academic Discussion

Loading comments...

Leave a Comment