Under-Canopy Terrain Reconstruction in Dense Forests Using RGB Imaging and Neural 3D Reconstruction

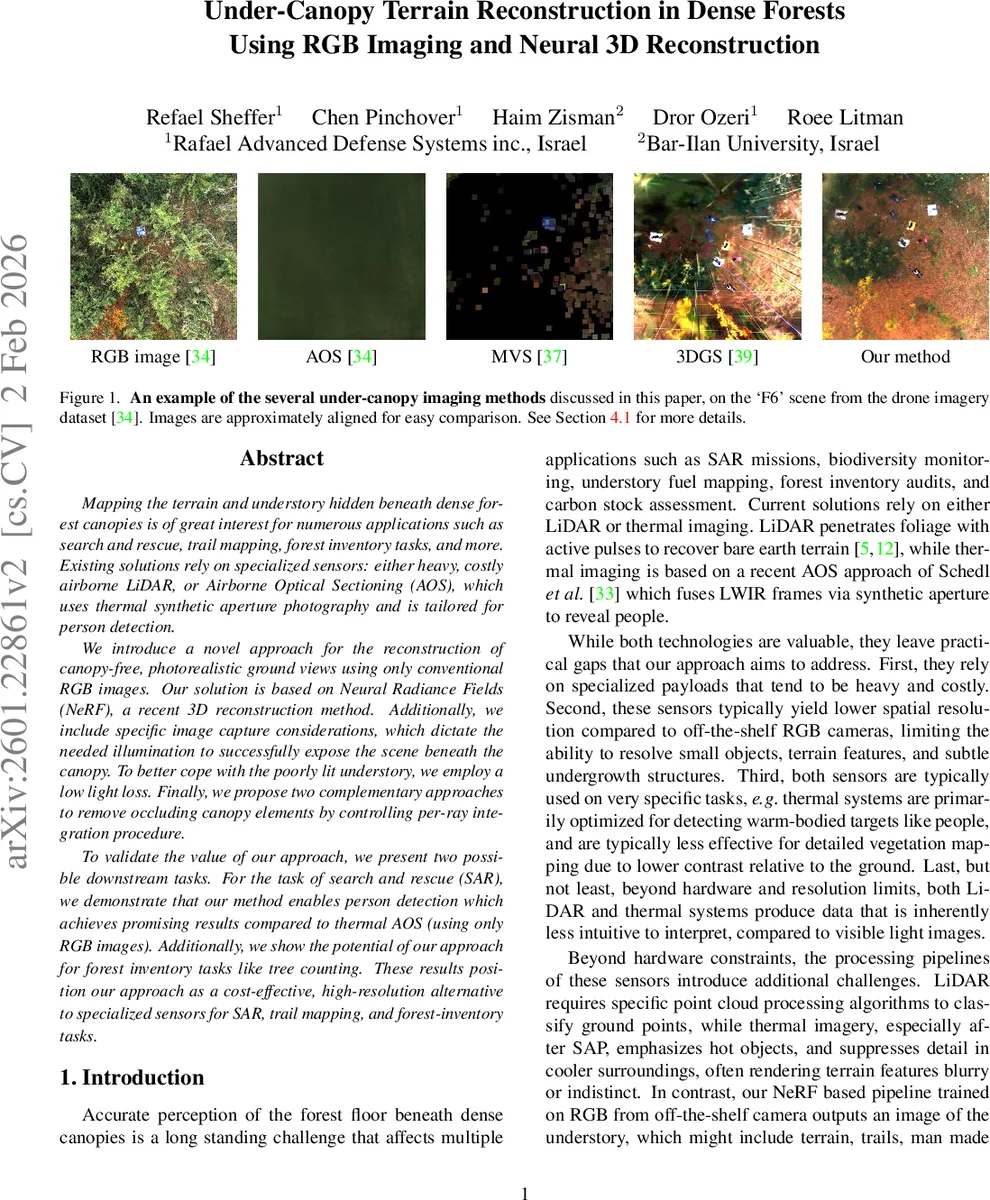

Mapping the terrain and understory hidden beneath dense forest canopies is of great interest for numerous applications such as search and rescue, trail mapping, and forest inventory tasks. Existing solutions rely on specialized sensors: either heavy, costly airborne LiDAR, or Airborne Optical Sectioning (AOS), which uses thermal synthetic aperture photography and is tailored for person detection. We introduce a novel approach for the reconstruction of canopy-free, photorealistic ground views using only conventional RGB images. Our solution is based on Neural Radiance Fields (NeRF), a recent 3D reconstruction method. Additionally, we include specific image capture considerations, which dictate the illumination needed to successfully expose the scene beneath the canopy. To better cope with the poorly lit understory, we employ a low-light loss. Finally, we propose two complementary approaches to remove occluding canopy elements by controlling the per-ray integration procedure. To validate our approach, we present two possible downstream tasks. For the task of search and rescue (SAR), we demonstrate that our method, using only RGB images, enables person detection with promising results compared to thermal AOS. Additionally, we show the potential of our approach for forest inventory tasks like tree counting. These results position our approach as a cost-effective, high-resolution alternative to specialized sensors for SAR, trail mapping, and forest-inventory tasks.

💡 Research Summary

The paper presents a cost‑effective pipeline for reconstructing the terrain and understory hidden beneath dense forest canopies using only conventional RGB imagery and Neural Radiance Fields (NeRF). Traditional approaches rely on heavy, expensive airborne LiDAR or on thermal Airborne Optical Sectioning (AOS), both of which suffer from limited spatial resolution, high payload weight, and data that are difficult to interpret. By leveraging recent advances in implicit volumetric rendering, the authors train a NeRF model on high‑overlap RGB images captured by lightweight drones.

Key contributions include: (1) a detailed image‑capture protocol that maximizes canopy penetration—near‑nadir viewpoints, high sampling density (≈20‑25 images per scene), 1–2 cm ground sample distance, and diffuse illumination (overcast or twilight) to avoid harsh shadows and extreme dynamic range; (2) the introduction of a low‑light loss function (based on Mildenhall et al.’s tone‑mapping loss) that emphasizes poorly lit understory pixels during training, improving HDR reconstruction; (3) two complementary methods for removing occluding foliage at rendering time. The first uses a pre‑acquired digital terrain model (DTM) to clip the volumetric integration start point just above ground height, while the second incorporates a semantic segmentation mask as an additional output channel of the NeRF MLP, effectively rendering canopy voxels transparent. Both strategies can be combined, offering robustness when DTM accuracy is limited or when segmentation is noisy.
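The two canopy-removal strategies described above both act on the per-ray integration, which can be illustrated with a minimal NumPy sketch of standard NeRF compositing. This is not the authors' implementation: the function name `render_ray`, the `t_start` argument (standing in for a ray depth derived from a DTM), the `canopy_prob` argument (standing in for a per-sample semantic output), and the 0.5 threshold are all assumptions made for illustration.

```python
import numpy as np

def render_ray(sigmas, colors, t_vals, t_start=None, canopy_prob=None):
    """Composite one ray with the standard NeRF quadrature rule.

    sigmas:      (N,) volume densities at the samples
    colors:      (N, 3) RGB values at the samples
    t_vals:      (N,) increasing sample depths along the ray
    t_start:     hypothetical clipping depth (e.g. just above a DTM height);
                 samples closer to the camera than this are ignored
    canopy_prob: hypothetical (N,) per-sample canopy probability;
                 samples above 0.5 are made transparent
    """
    sigmas = np.asarray(sigmas, dtype=float).copy()
    colors = np.asarray(colors, dtype=float)
    t_vals = np.asarray(t_vals, dtype=float)

    if t_start is not None:
        sigmas[t_vals < t_start] = 0.0  # clip the integration start point
    if canopy_prob is not None:
        sigmas[np.asarray(canopy_prob) > 0.5] = 0.0  # drop canopy samples

    # Distance between adjacent samples; the last interval is treated as open-ended.
    deltas = np.diff(t_vals, append=t_vals[-1] + 1e10)
    alphas = 1.0 - np.exp(-sigmas * deltas)
    # Transmittance: probability the ray reaches each sample unoccluded.
    trans = np.cumprod(np.concatenate([[1.0], 1.0 - alphas[:-1]]))
    weights = trans * alphas
    return (weights[:, None] * colors).sum(axis=0)

# Toy ray: a dense green canopy sample at depth 1, brown ground at depth 5.
green, brown = [0.0, 1.0, 0.0], [0.5, 0.3, 0.1]
sigmas, colors, t_vals = [50.0, 50.0], [green, brown], [1.0, 5.0]

full = render_ray(sigmas, colors, t_vals)                        # canopy occludes ground
clipped = render_ray(sigmas, colors, t_vals, t_start=3.0)        # start below canopy
masked = render_ray(sigmas, colors, t_vals, canopy_prob=[0.9, 0.1])
```

In this toy case both the clipped and the masked render return the ground color, while the unmodified render returns the canopy color. Combining the two arguments in one call mirrors the idea that the strategies can back each other up when either the DTM or the segmentation is unreliable.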

The pipeline proceeds through standard Structure‑from‑Motion (COLMAP) for pose estimation, NeRF training with the combined L1 and low‑light losses, and ground‑only rendering via either volumetric clipping or segmentation‑guided masking. Experiments on a publicly available drone dataset demonstrate that the segmentation‑aware NeRF achieves 2–3 dB higher PSNR and 0.05–0.08 higher SSIM than a baseline NeRF, especially under low‑light, high‑density capture conditions.
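The training objective mentioned above combines an L1 term with a loss that emphasizes dark pixels. The exact formulation is not given here, so the sketch below assumes one common form: a squared error reweighted by the inverse of the predicted intensity, in the spirit of the weighted loss used for raw low-light NeRF training. The names `low_light_loss` and `combined_loss`, the value of `eps`, and the mixing weight `lam` are hypothetical.

```python
import numpy as np

def low_light_loss(pred, target, eps=1e-3):
    """Squared error reweighted so that dark predictions count more.

    In an autodiff framework the weight would be wrapped in a stop-gradient;
    in plain NumPy it is simply a constant array.
    """
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    weight = 1.0 / (pred + eps)
    return np.mean((weight * (pred - target)) ** 2)

def combined_loss(pred, target, lam=0.1):
    """L1 term plus a weighted low-light term (lam is an assumed mixing weight)."""
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    l1 = np.mean(np.abs(pred - target))
    return l1 + lam * low_light_loss(pred, target)

# The same absolute error of 0.01 is penalized far more in a dark pixel.
dark = low_light_loss([0.02], [0.01])
bright = low_light_loss([0.82], [0.81])
```

Because the weight grows as the predicted intensity falls, errors in shadowed understory pixels contribute more to the gradient than equally sized errors in bright canopy pixels.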

Downstream evaluations illustrate practical value. For search‑and‑rescue (SAR), YOLOv8 applied to the reconstructed ground‑only images attains an average precision of 0.71, comparable to thermal AOS (0.68), while using only RGB data. For forest inventory, the authors integrate 3D Gaussian Splatting to extract tree stem positions from the canopy‑free model, achieving a mean tree‑count error of 4.2% relative to manual counts.

Overall, the work shows that neural rendering can serve as an alternative to specialized sensors for under‑canopy mapping, delivering high‑resolution, photorealistic terrain views at a fraction of the cost and payload weight. The authors suggest future directions such as real‑time NeRF acceleration (e.g., Instant‑NGP) and multimodal RGB‑NIR fusion to further improve canopy penetration and enable large‑scale forest monitoring pipelines.
