OS-Marathon: Benchmarking Computer-Use Agents on Long-Horizon Repetitive Tasks

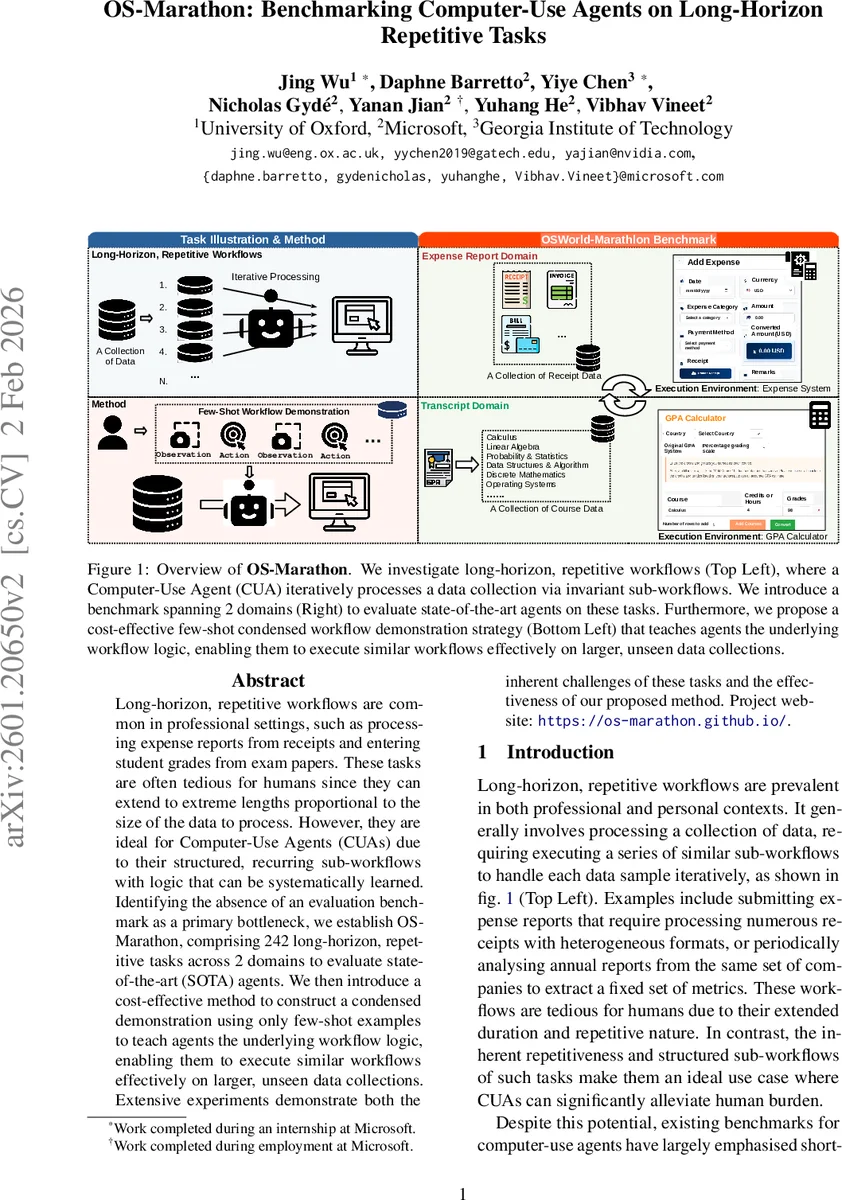

Long-horizon, repetitive workflows are common in professional settings, such as processing expense reports from receipts and entering student grades from exam papers. These tasks are often tedious for humans since they can extend to extreme lengths proportional to the size of the data to process. However, they are ideal for Computer-Use Agents (CUAs) due to their structured, recurring sub-workflows with logic that can be systematically learned. Identifying the absence of an evaluation benchmark as a primary bottleneck, we establish OS-Marathon, comprising 242 long-horizon, repetitive tasks across 2 domains to evaluate state-of-the-art (SOTA) agents. We then introduce a cost-effective method to construct a condensed demonstration using only few-shot examples to teach agents the underlying workflow logic, enabling them to execute similar workflows effectively on larger, unseen data collections. Extensive experiments demonstrate both the inherent challenges of these tasks and the effectiveness of our proposed method. Project website: https://os-marathon.github.io/.

💡 Research Summary

The paper addresses a critical gap in the evaluation of Computer‑Use Agents (CUAs): existing benchmarks focus on short‑horizon tasks, leaving long‑horizon, repetitive workflows—common in real‑world settings such as expense reporting and academic transcript processing—largely unstudied. To fill this void, the authors introduce OS‑Marathon, a benchmark consisting of 242 tasks spread across two domains (Expense Report and Transcript) and seven distinct execution environments (web‑based systems, spreadsheet applications, etc.). Each task is formally defined as a sequence of N sub‑workflows that share identical logic but operate on different data instances, modeled as a Partially Observable Markov Decision Process (POMDP).

Data for the benchmark combines real‑world receipts and transcripts with a large synthetic corpus generated via a two‑stage pipeline: (1) LLM‑driven profile creation (using GPT‑5) and (2) rendering through 37 HTML templates that emulate diverse visual layouts, currencies, and document structures. This hybrid approach ensures both visual realism and logical consistency, while allowing scalable generation of hundreds of unique samples.

Difficulty is stratified along two axes—volume of instances and per‑instance complexity (page count, column layout, etc.)—yielding seven difficulty levels. For example, Expense Report Level 4 requires processing ~30 multi‑page PDFs, and Transcript Level 3 involves multi‑page, multi‑column academic records.

Baseline evaluations with state‑of‑the‑art CUAs (GPT‑4‑based agents, ReAct, Voyager, etc.) reveal three dominant failure modes: (1) logical incoherence (agents execute steps out of order), (2) hallucination during action planning (agents fill fields without extracting required information), and (3) premature termination of the repetitive loop after only a few iterations. Performance degrades sharply as task horizon and document complexity increase.

To mitigate these issues, the authors propose Few‑shot Condensed Workflow Demonstration (FCWD). Instead of feeding the full, lengthy workflow, FCWD abstracts the process into a compact set of few‑shot examples that capture (a) a global plan for orchestrating the repetition and (b) a sub‑workflow template that maps inputs to actions for a single data instance. This dual‑level instruction fits within current context windows while still conveying the essential procedural logic.

Experiments show that integrating FCWD markedly improves agent performance across all difficulty levels, with average success‑rate gains of ~27 percentage points and especially notable improvements in the hardest tiers (Level 3–4). The approach reduces logical errors, curbs hallucination, and enables agents to maintain consistency over longer horizons.

In summary, OS‑Marathon provides the first standardized benchmark for long‑horizon, repetitive desktop tasks, and FCWD offers a lightweight, cost‑effective method to teach CUAs complex workflows using only a handful of demonstrations. The work paves the way for future research on memory‑augmented reasoning, robust planning, and real‑world automation with CUAs.

Comments & Academic Discussion

Loading comments...

Leave a Comment