Code over Words: Overcoming Semantic Inertia via Code-Grounded Reasoning

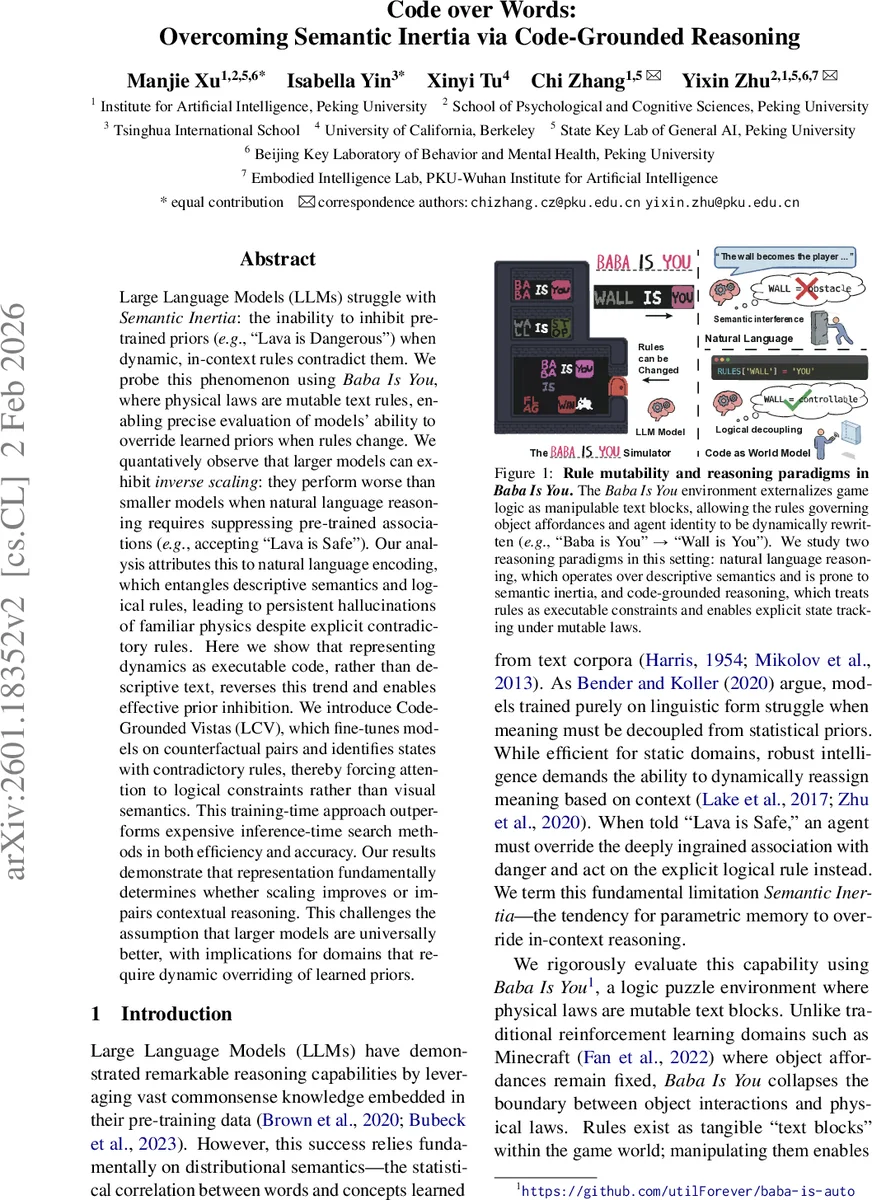

LLMs struggle with Semantic Inertia: the inability to inhibit pre-trained priors (e.g., “Lava is Dangerous”) when dynamic, in-context rules contradict them. We probe this phenomenon using Baba Is You, where physical laws are mutable text rules, enabling precise evaluation of models’ ability to override learned priors when rules change. We quantatively observe that larger models can exhibit inverse scaling: they perform worse than smaller models when natural language reasoning requires suppressing pre-trained associations (e.g., accepting “Lava is Safe”). Our analysis attributes this to natural language encoding, which entangles descriptive semantics and logical rules, leading to persistent hallucinations of familiar physics despite explicit contradictory rules. Here we show that representing dynamics as executable code, rather than descriptive text, reverses this trend and enables effective prior inhibition. We introduce Code-Grounded Vistas (LCV), which fine-tunes models on counterfactual pairs and identifies states with contradictory rules, thereby forcing attention to logical constraints rather than visual semantics. This training-time approach outperforms expensive inference-time search methods in both efficiency and accuracy. Our results demonstrate that representation fundamentally determines whether scaling improves or impairs contextual reasoning. This challenges the assumption that larger models are universally better, with implications for domains that require dynamic overriding of learned priors.

💡 Research Summary

**

The paper tackles a fundamental limitation of large language models (LLMs) called Semantic Inertia: the tendency to cling to pre‑trained semantic associations (e.g., “lava is dangerous”) even when the current context explicitly contradicts them. To probe this, the authors use the puzzle game Baba Is You, where physical laws are expressed as mutable text blocks that can be rearranged to create rules such as “Lava is Safe” or “Wall is You”. This environment provides a clean testbed for measuring whether a model can suppress entrenched priors and follow dynamically changing, counter‑intuitive rules.

First, the authors conduct a systematic scaling study across three model families (Llama‑3, Pythia, Qwen2.5) ranging from 160 M to 70 B parameters. They evaluate two prompting modalities: (a) natural‑language prompts that describe the rule in plain text, and (b) code‑grounded prompts that ask the model to generate an executable Python transition function parameterized by the current rule set. For each scenario they compute ΔP = P(logic‑driven | state) − P(prior‑driven | state). Negative ΔP indicates that the model defaults to its prior knowledge, i.e., exhibits semantic inertia.

The results reveal a striking inverse scaling phenomenon under natural‑language prompting: larger models show larger negative ΔP, meaning they are worse at overriding their priors. This aligns with recent observations that bigger models, having absorbed more human‑centric data, become more resistant to contextual updates. In contrast, when the same tasks are expressed as executable code, the scaling trend reverses. Code grounding decouples symbols from their semantic baggage; the model now predicts a program rather than a token sequence, and larger models achieve higher (positive) ΔP, demonstrating that they can leverage their capacity for logical inference when the representation suppresses linguistic priors.

Motivated by this, the authors introduce Code‑Grounded Vistas (LCV), a training‑time framework that forces the model to synthesize correct world dynamics as Python code. LCV consists of three key steps: (1) constructing a counterfactual contrast dataset where identical visual states are paired with mutually contradictory rule sets; (2) fine‑tuning the model to map (state, rule‑set) → a deterministic transition function def transition(state, rules): …; and (3) using a classical planner that consumes the generated transition function to search for actions. Because the theory induction is amortized during fine‑tuning, inference requires only a single forward pass, eliminating the costly generate‑test‑debug loops used by prior methods such as TheoryCoder.

Empirically, LCV outperforms strong inference‑time baselines both in accuracy and latency. It reduces inference time by roughly a factor of four while achieving higher ΔP scores and overall success rates on the Baba Bench benchmark, which includes three tiers of semantic difficulty (aligned, conflict, and dynamic plasticity). Notably, the 70 B Llama‑3 model, which suffered the most semantic inertia under natural‑language prompts, attains the highest performance when operating under LCV, effectively turning inverse scaling into normal positive scaling.

The paper’s key insights are: (1) representation matters—natural language entangles descriptive semantics with logical constraints, making it hard for models to suppress priors; code provides a clean, executable abstraction that isolates logic from semantics. (2) Scaling does not guarantee better reasoning in contexts requiring dynamic ontological restructuring; larger models may amplify prior bias unless the representation is appropriately aligned. (3) Amortized theory induction via counterfactual contrast is a scalable alternative to expensive inference‑time search, enabling real‑time deployment in environments with mutable rules.

Overall, the work demonstrates that by grounding reasoning in executable code, we can overcome semantic inertia, restore beneficial scaling properties, and open new avenues for deploying LLMs in domains where rules change on the fly—such as robotics, adaptive game AI, and dynamic policy management.

Comments & Academic Discussion

Loading comments...

Leave a Comment