MemBuilder: Reinforcing LLMs for Long-Term Memory Construction via Attributed Dense Rewards

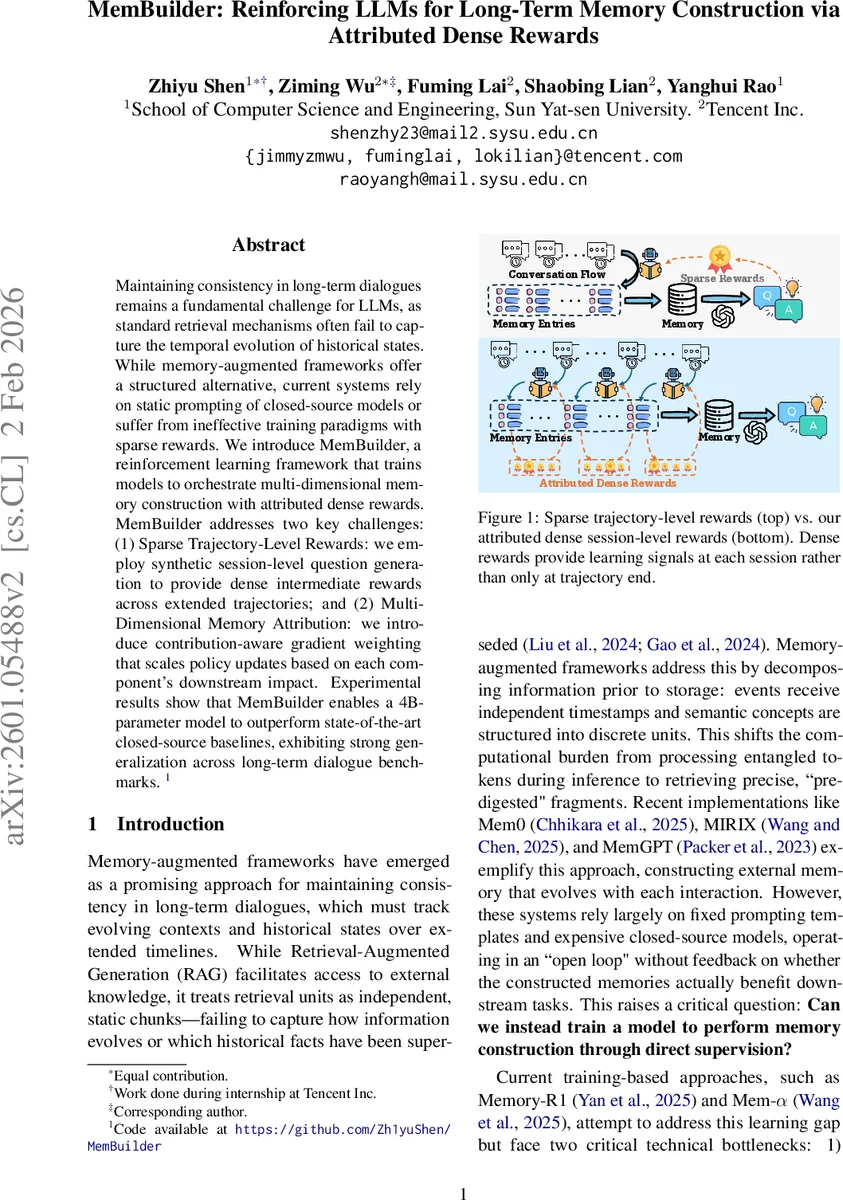

Maintaining consistency in long-term dialogues remains a fundamental challenge for LLMs, as standard retrieval mechanisms often fail to capture the temporal evolution of historical states. While memory-augmented frameworks offer a structured alternative, current systems rely on static prompting of closed-source models or suffer from ineffective training paradigms with sparse rewards. We introduce MemBuilder, a reinforcement learning framework that trains models to orchestrate multi-dimensional memory construction with attributed dense rewards. MemBuilder addresses two key challenges: (1) Sparse Trajectory-Level Rewards: we employ synthetic session-level question generation to provide dense intermediate rewards across extended trajectories; and (2) Multi-Dimensional Memory Attribution: we introduce contribution-aware gradient weighting that scales policy updates based on each component’s downstream impact. Experimental results show that MemBuilder enables a 4B-parameter model to outperform state-of-the-art closed-source baselines, exhibiting strong generalization across long-term dialogue benchmarks.

💡 Research Summary

MemBuilder tackles the persistent problem of maintaining coherence in long‑term dialogues by teaching a lightweight (4‑billion‑parameter) language model to construct and manage a structured external memory bank through reinforcement learning. Traditional retrieval‑augmented generation (RAG) treats retrieved chunks as independent, static units, which fails to capture the temporal evolution of conversational context. Recent memory‑augmented systems such as Mem0, MIRIX, and MemGPT decompose dialogue into multiple memory types (Core, Episodic, Semantic, Procedural) but rely on fixed prompting templates and closed‑source models, offering no feedback on whether the constructed memories actually benefit downstream tasks. Moreover, prior learning‑based approaches (Memory‑R1, Memα) suffer from two critical bottlenecks: (1) sparse trajectory‑level rewards that are only given after an entire multi‑session dialogue, making it impossible for the model to attribute success to specific memory actions; and (2) a global reward shared across all memory dimensions, which obscures the distinct contribution of each memory type during question answering.

MemBuilder introduces Attributed Dense Rewards Policy Optimization (ADRPO), a two‑pronged solution. First, it generates dense session‑level rewards by synthesizing a set of question‑answer pairs for each dialogue session. An expert LLM (Claude 4.5 Sonnet) creates J questions that probe single‑session retention, cross‑session aggregation, and temporal reasoning. During RL training, the policy model performs memory operations for the current session, producing a candidate memory bank. The same synthetic questions are then answered using this candidate memory, and an LLM judge evaluates correctness. The task reward is the average QA accuracy across the J questions, providing immediate feedback after every session rather than only at the end of the trajectory. A validity gate discards malformed outputs, and a length‑penalty term λ·ℓ discourages excessive memory growth.

Second, MemBuilder addresses multi‑dimensional memory attribution by weighting gradient updates according to downstream usage. While all memory types share the same session reward, the algorithm records how often each memory component (Episodic, Semantic, Procedural) is retrieved during the synthetic QA phase. The most frequently accessed type receives an amplification factor α > 1, while the others keep a weight of 1; Core memory, which is always included in the prompt, retains a fixed weight of 1. These weights multiply the importance‑ratio term in the PPO‑style loss, ensuring that memory actions that directly contribute to successful answers receive stronger reinforcement.

The memory architecture itself is deliberately simple yet expressive. Core memory stores a fixed‑size block of persistent user profile information, automatically compressed when capacity is exceeded. Episodic memory records timestamped events in a “YYYY‑MM‑DD: summary | details” format, enabling precise temporal reasoning. Semantic memory holds user‑specific factual knowledge, deliberately excluding generic world knowledge that resides in the model parameters. Procedural memory captures step‑by‑step routines. For each session, the model selects an action for each memory type from a predefined action space (e.g., APPEND/REPLACE/REWRITE for Core; ADD/UPDATE/MERGE for Episodic). Notably, UPDATE creates a new entry that references the previous one, preserving history, and MERGE synthesizes multiple related events into a concise conclusion while retaining evidence links.

Training proceeds in two stages. First, Supervised Fine‑Tuning (SFT) collects expert trajectories from Claude 4.5 Sonnet to teach the model the correct JSON‑style output format and basic memory operations, mitigating the cold‑start problem where a small model might produce invalid actions. Second, ADRPO samples N rollouts per session, computes the dense reward and attribution weights, and optimizes a loss that combines a clipped policy‑gradient term, the contribution‑aware scaling, and a KL‑divergence regularizer to keep the policy close to the expert reference.

Empirical evaluation spans three long‑term dialogue benchmarks: LoCoMo (multi‑session QA), Long‑MemEval (temporal reasoning), and PerL‑TQA (personalized QA). MemBuilder achieves 84.23 % accuracy on LoCoMo, surpassing the closed‑source Claude 4.5 Sonnet baseline (≈81 %) and other state‑of‑the‑art models. Ablation studies reveal that removing dense rewards drops performance by 4–6 percentage points, while omitting contribution‑aware weighting reduces the usage of Episodic memory and degrades overall accuracy by about 2 points. The length‑penalty mechanism successfully controls memory growth, keeping token usage within practical limits even when the allowed memory size is doubled, with only modest inference latency increase.

The paper acknowledges limitations: synthetic question generation depends on an expensive expert LLM, though the questions can be reused across many training iterations; the current implementation is tuned for a 4B model, and scaling to larger architectures may require redesigning the action space and improving sample efficiency; and the static amplification factor α could bias learning toward a single memory type, suggesting future work on dynamic scheduling.

In summary, MemBuilder demonstrates that dense, session‑level reinforcement signals combined with downstream‑usage‑aware gradient weighting enable a modest‑size LLM to learn effective, multi‑dimensional memory construction, achieving performance on par with or exceeding large proprietary systems. This work opens a path toward affordable, open‑source conversational agents capable of long‑term, coherent interaction through learned memory management. Future directions include automated question generation, adaptive attribution weighting, and extending the framework to multimodal memory representations.

Comments & Academic Discussion

Loading comments...

Leave a Comment