VL-JEPA: Joint Embedding Predictive Architecture for Vision-language

We introduce VL-JEPA, a vision-language model built on a Joint Embedding Predictive Architecture (JEPA). Instead of autoregressively generating tokens as in classical VLMs, VL-JEPA predicts continuous embeddings of the target texts. By learning in an abstract representation space, the model focuses on task-relevant semantics while abstracting away surface-level linguistic variability. In a strictly controlled comparison against standard token-space VLM training with the same vision encoder and training data, VL-JEPA achieves stronger performance while having 50% fewer trainable parameters. At inference time, a lightweight text decoder is invoked only when needed to translate VL-JEPA predicted embeddings into text. We show that VL-JEPA natively supports selective decoding that reduces the number of decoding operations by 2.85x while maintaining similar performance compared to non-adaptive uniform decoding. Beyond generation, the VL-JEPA’s embedding space naturally supports open-vocabulary classification, text-to-video retrieval, and discriminative VQA without any architecture modification. On eight video classification and eight video retrieval datasets, the average performance VL-JEPA surpasses that of CLIP, SigLIP2, and Perception Encoder. At the same time, the model achieves comparable performance as classical VLMs (InstructBLIP, QwenVL) on four VQA datasets: GQA, TallyQA, POPE and POPEv2, despite only having 1.6B parameters.

💡 Research Summary

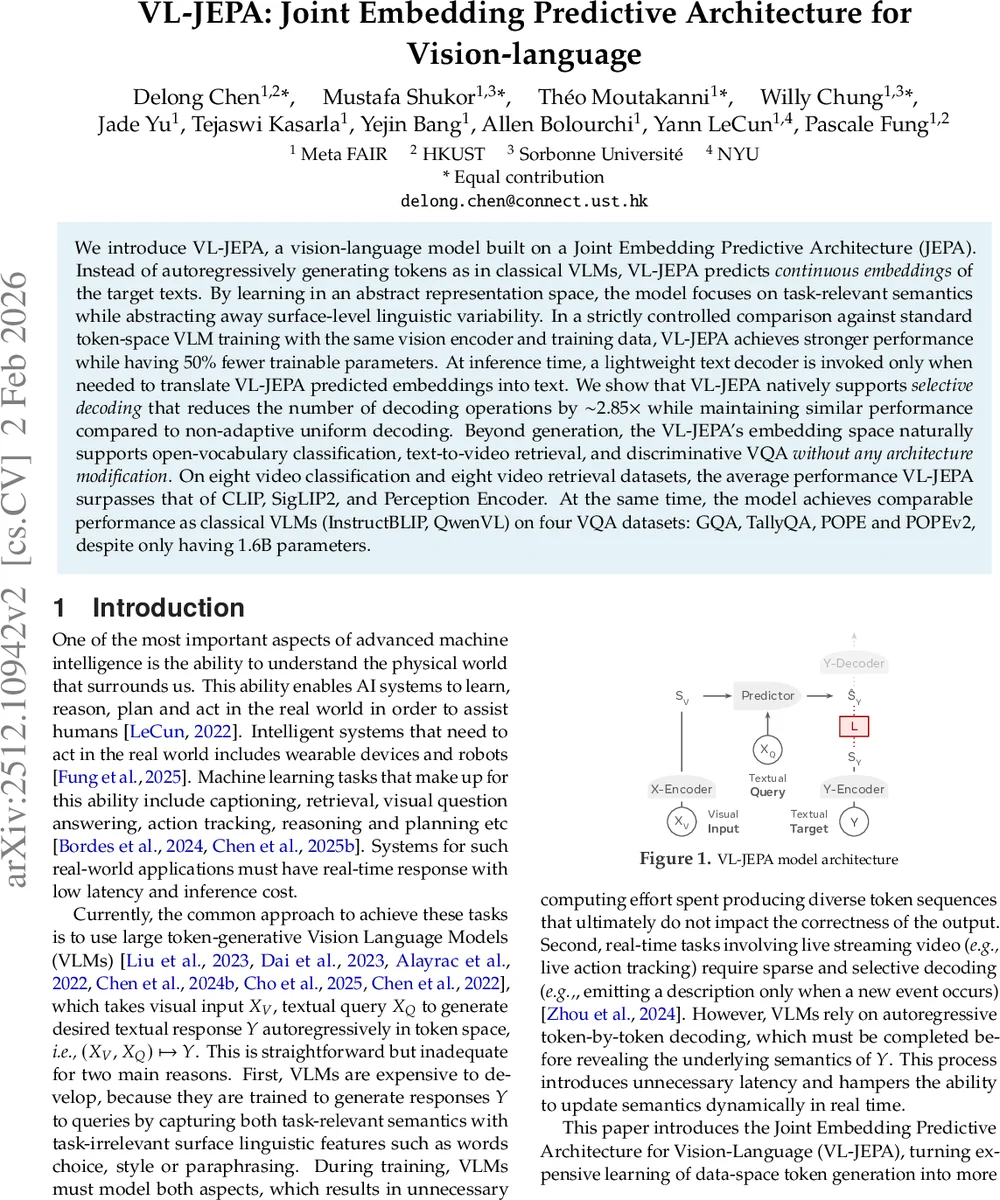

VL‑JEPA introduces a novel Joint Embedding Predictive Architecture for vision‑language tasks that departs from the conventional token‑based generative paradigm of large vision‑language models (VLMs). Instead of autoregressively producing a sequence of tokens, VL‑JEPA learns to predict a continuous embedding of the target text directly from visual inputs and a textual query. The architecture consists of four components: (1) an X‑Encoder (a frozen V‑JEPA2 ViT‑L) that compresses images or video frames into a sequence of visual embeddings S_V; (2) a Predictor built from the last eight layers of Llama‑3.2‑1B, which jointly attends to S_V and the tokenized query X_Q to output a predicted target embedding Ŝ_Y; (3) a Y‑Encoder (EmbeddingGemma‑300M) that maps the ground‑truth text Y into a 1,536‑dimensional embedding S_Y; and (4) a lightweight Y‑Decoder that is only invoked at inference time to translate Ŝ_Y back into human‑readable text.

Training is performed entirely in the embedding space using a bidirectional InfoNCE loss that combines an alignment term (minimizing cosine distance between Ŝ_Y and S_Y) with a uniformity term (pushing embeddings apart to avoid collapse). By operating on embeddings, VL‑JEPA sidesteps the combinatorial explosion of token‑space targets: multiple valid textual answers that are orthogonal in one‑hot space become nearby points in the embedding space, simplifying the learning problem.

The model is trained in two stages. The first stage is large‑scale, query‑free pre‑training on massive image‑text (Datacomp, YFCC‑100M) and video‑text (Action100M) corpora. After 100 k iterations on image data (2 B samples) the model reaches 61.6 % ImageNet zero‑shot accuracy. Video pre‑training follows with 8‑frame and 32‑frame inputs for a total of 70 k iterations, consuming four weeks on a 24‑node cluster equipped with eight NVIDIA H200 GPUs per node. The second stage is supervised fine‑tuning (SFT) with query‑conditioned data (25 M VQA samples, 2.8 M captioning samples, 1.8 M classification samples) to endow the model with VQA capabilities while preserving the alignment learned in pre‑training.

Empirically, the VL‑JEPA BASE model outperforms CLIP, SigLIP2, and Perception Encoder on eight video classification and eight video retrieval benchmarks, achieving higher average top‑1 recall and classification accuracy despite using roughly half the trainable parameters of comparable token‑space VLMs. After SFT, VL‑JEPA SFT matches the performance of state‑of‑the‑art VLM families such as InstructBLIP and Qwen‑VL on four VQA datasets (GQA, TallyQA, POPE, POPEv2) while maintaining a modest 1.6 B parameter budget.

A key contribution is the native support for selective decoding. Because the model predicts a semantic embedding stream non‑autoregressively, it can monitor the variance of Ŝ_Y in real time and trigger the Y‑Decoder only when a significant semantic shift is detected (e.g., variance exceeding a predefined threshold). This reduces the number of decoding operations by approximately 2.85×, with negligible impact on CIDEr scores for captioning tasks, thereby offering substantial latency and compute savings for real‑time video streaming applications.

The paper also discusses limitations and future directions. The current reliance on InfoNCE and simple uniformity regularization may be insufficient to prevent representation collapse in more diverse settings; more sophisticated non‑sample‑contrastive regularizers (VICReg, SIGReg) or EMA‑based freezing of the Y‑Encoder could improve stability. Additionally, the threshold for selective decoding may need domain‑specific tuning, suggesting a need for adaptive mechanisms.

In summary, VL‑JEPA demonstrates that predicting embeddings rather than tokens, combined with a lightweight on‑demand decoder, yields a more efficient and versatile vision‑language model. It achieves superior or comparable performance across classification, retrieval, and VQA tasks while cutting training parameters by half and enabling real‑time, low‑latency inference—opening a promising new direction for large‑scale multimodal AI.

Comments & Academic Discussion

Loading comments...

Leave a Comment