CrossCheck-Bench: Diagnosing Compositional Failures in Multimodal Conflict Resolution

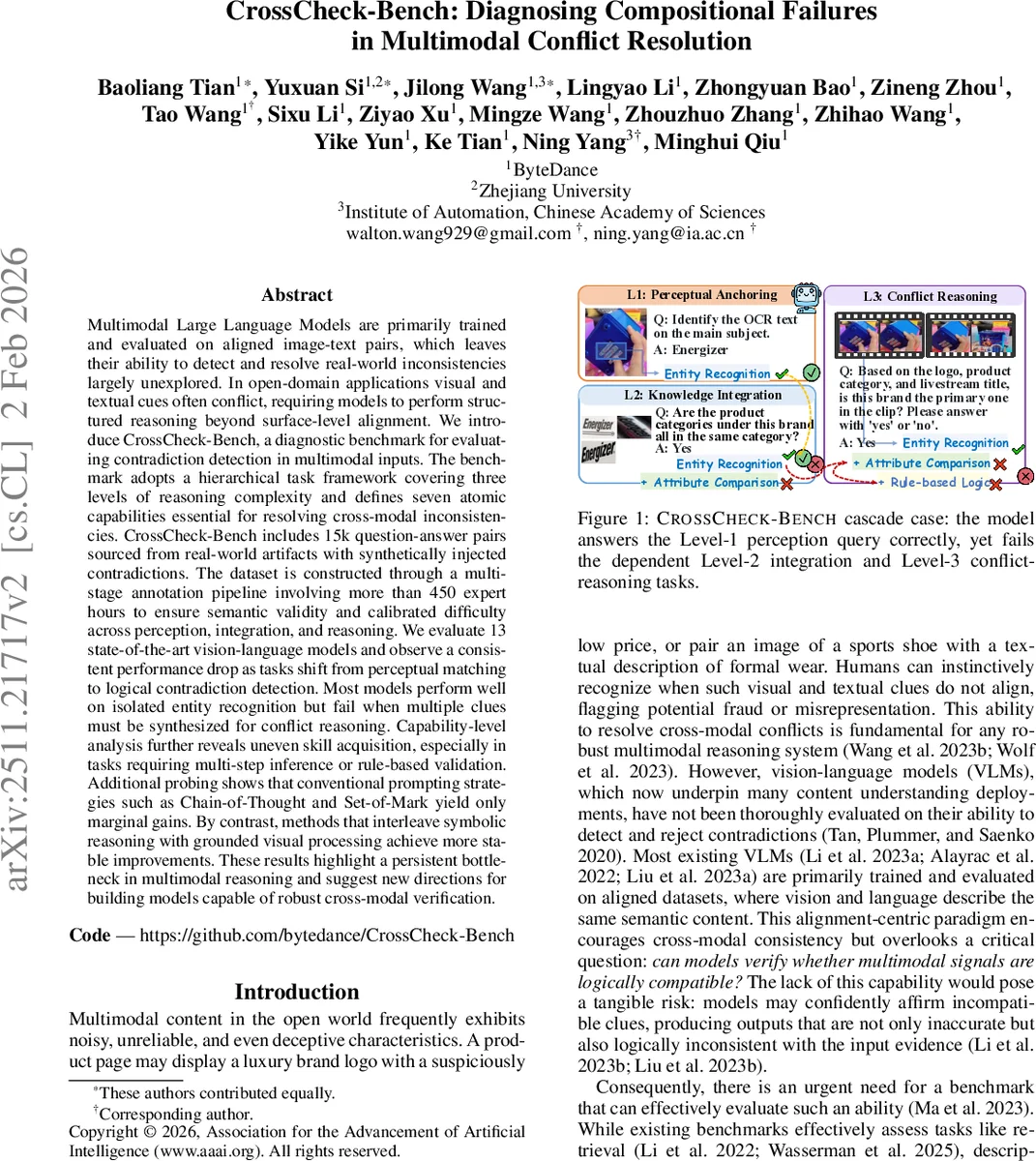

Multimodal Large Language Models are primarily trained and evaluated on aligned image-text pairs, which leaves their ability to detect and resolve real-world inconsistencies largely unexplored. In open-domain applications visual and textual cues often conflict, requiring models to perform structured reasoning beyond surface-level alignment. We introduce CrossCheck-Bench, a diagnostic benchmark for evaluating contradiction detection in multimodal inputs. The benchmark adopts a hierarchical task framework covering three levels of reasoning complexity and defines seven atomic capabilities essential for resolving cross-modal inconsistencies. CrossCheck-Bench includes 15k question-answer pairs sourced from real-world artifacts with synthetically injected contradictions. The dataset is constructed through a multi-stage annotation pipeline involving more than 450 expert hours to ensure semantic validity and calibrated difficulty across perception, integration, and reasoning. We evaluate 13 state-of-the-art vision-language models and observe a consistent performance drop as tasks shift from perceptual matching to logical contradiction detection. Most models perform well on isolated entity recognition but fail when multiple clues must be synthesized for conflict reasoning. Capability-level analysis further reveals uneven skill acquisition, especially in tasks requiring multi-step inference or rule-based validation. Additional probing shows that conventional prompting strategies such as Chain-of-Thought and Set-of-Mark yield only marginal gains. By contrast, methods that interleave symbolic reasoning with grounded visual processing achieve more stable improvements. These results highlight a persistent bottleneck in multimodal reasoning and suggest new directions for building models capable of robust cross-modal verification.

💡 Research Summary

CrossCheck‑Bench is a newly introduced diagnostic benchmark that evaluates how well multimodal large language models (MLLMs) can detect and resolve contradictions between visual and textual inputs—an ability that has been largely overlooked in existing vision‑language model (VLM) evaluations, which typically focus on aligned image‑text pairs. The authors construct a large‑scale dataset of 15 000 question‑answer (QA) pairs derived from real‑world artifacts such as e‑commerce listings, advertisements, and social‑media posts. Each sample is built on a “Multimodal Cue Graph” (MCG) that encodes entities, modalities, attributes, and values; contradictions are synthetically injected in a controlled manner. The data collection pipeline involves three stages: (1) multimodal clue extraction using ensembles of state‑of‑the‑art detectors (YOLOv8‑L, GroundingDINO, OCR, and language models), (2) attribute extraction and cross‑validation with rule‑based templates and GPT‑4o, and (3) expert review amounting to more than 450 hours to ensure semantic validity and calibrated difficulty.

The benchmark is organized into a three‑tier hierarchy reflecting increasing reasoning complexity: L1 Perception (visual grounding and entity recognition), L2 Integration (attribute comparison, multi‑frame extraction, numerical reasoning, region‑constrained OCR), and L3 Reasoning (rule‑based logical inference across modalities). Seven atomic capabilities (A1‑A7) are defined, and each QA task is generated by combining a specific subset of these capabilities, allowing fine‑grained diagnosis of where a model fails. For example, a Level‑1 task may ask whether a logo appears in the image; a Level‑2 task may require comparing the brand name in the image with the brand mentioned in the description; a Level‑3 task may demand reasoning about whether the price implied by the brand and the visual cues is plausible, possibly invoking external knowledge about typical price ranges.

Thirteen leading VLMs—including GPT‑4.1, Gemini‑2.5, Qwen2.5‑VL, InternVL3, MiMo‑VL, and several open‑source vision‑language transformers—are evaluated on the full benchmark. Overall accuracy drops dramatically as the task moves up the hierarchy: models achieve ~78 % on L1, ~52 % on L2, and only ~31 % on L3, far below the human upper bound of ~94 %. The performance gap is especially pronounced for capabilities A3 (Attribute Comparison) and A7 (Rule‑based Logic), indicating that current models struggle to synthesize multiple clues and apply explicit logical rules.

Prompting experiments explore four strategies: (a) vanilla prompting, (b) Chain‑of‑Thought (CoT), (c) Set‑of‑Mark (SoM), and (d) visual grounding with annotated regions. CoT and SoM yield modest gains of 3–5 percentage points, while simple grounding does not significantly help. In contrast, an “Interleaved Symbolic Reasoning” approach—where visual and textual inputs are processed sequentially and intermediate representations are fed into a lightweight symbolic engine—improves L3 accuracy by an average of 9 pp. This suggests that explicit, step‑wise reasoning and rule application are more effective than end‑to‑end black‑box inference for contradiction detection.

A detailed capability‑wise error analysis reveals that low‑level perception (A1, A2) is relatively robust, whereas higher‑level skills (A4 Multi‑frame Extraction, A5 Numerical Reasoning, A6 Region‑Constrained OCR) exhibit high variance and frequent failures, especially when visual quality is poor or when numeric information is implicit. The authors argue that the observed bottleneck stems from the fact that most VLMs are trained on data where visual and textual modalities are already aligned, so they lack exposure to contradictory evidence and the training signal needed to learn cross‑modal logical consistency.

To address these shortcomings, the paper proposes three research directions: (1) modular architectures that explicitly separate perception, integration, and reasoning stages, allowing iterative refinement and symbolic manipulation; (2) incorporation of external knowledge graphs or attribute‑relation databases to provide priors for rule‑based checks (e.g., typical price ranges for a brand); and (3) a feedback loop where human‑in‑the‑loop error analysis is used to fine‑tune models on targeted failure cases, especially for A3 and A7. The authors also release the benchmark code and data under an open‑source license, encouraging the community to extend the dataset to more languages, domains, and modalities.

In summary, CrossCheck‑Bench fills a critical gap in multimodal evaluation by providing a systematic, hierarchical, and capability‑driven framework for diagnosing cross‑modal contradiction resolution. The extensive experiments demonstrate that state‑of‑the‑art VLMs, while competent at basic perception, are far from reliable in logical conflict reasoning. The benchmark and accompanying analyses offer a clear roadmap for future work aimed at building multimodal systems that can robustly verify the consistency of heterogeneous information—a prerequisite for trustworthy AI applications in e‑commerce, content moderation, and beyond.

Comments & Academic Discussion

Loading comments...

Leave a Comment