Data as a Lever: A Neighbouring Datasets Perspective on Predictive Multiplicity

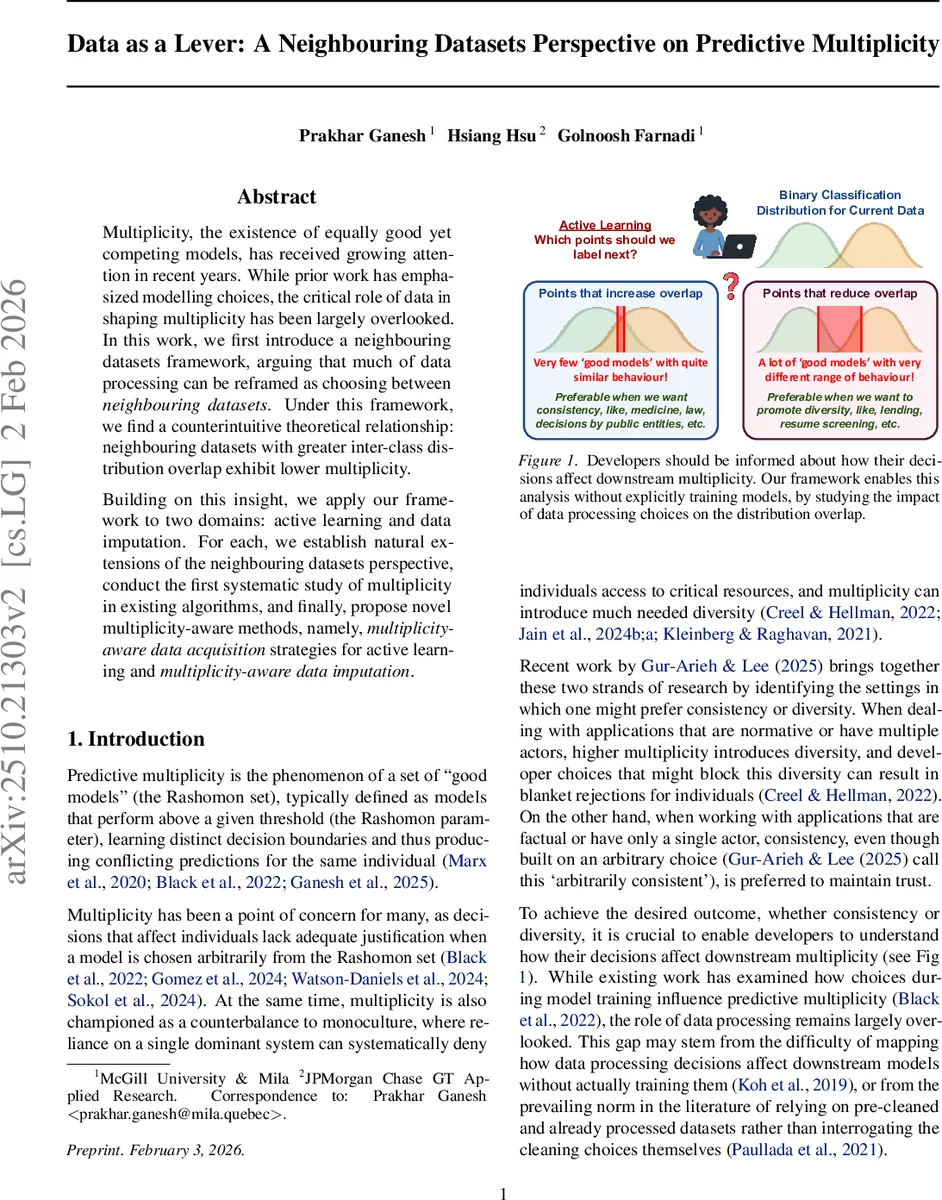

Multiplicity, the existence of equally good yet competing models, has received growing attention in recent years. While prior work has emphasized modelling choices, the critical role of data in shaping multiplicity has been largely overlooked. In this work, we first introduce a neighbouring datasets framework, arguing that much of data processing can be reframed as choosing between neighbouring datasets. Under this framework, we find a counterintuitive theoretical relationship: neighbouring datasets with greater inter-class distribution overlap exhibit lower multiplicity. Building on this insight, we apply our framework to two domains: active learning and data imputation. For each, we establish natural extensions of the neighbouring datasets perspective, conduct the first systematic study of multiplicity in existing algorithms, and finally, propose novel multiplicity-aware methods, namely, multiplicity-aware data acquisition strategies for active learning and multiplicity-aware data imputation.

💡 Research Summary

The paper introduces a “neighbouring datasets” framework to study predictive multiplicity – the existence of many equally good models (the Rashomon set) that disagree on individual predictions. Multiplicity is quantified by an ambiguity metric that measures the proportion of test points receiving conflicting predictions across the Rashomon set. While prior work has focused on modeling choices or overall dataset noise, this work reframes common data‑processing steps (active learning, imputation, outlier handling, etc.) as selections among k‑neighbouring datasets – two datasets of the same size that differ in only k ≪ n records.

The central theoretical contribution is a counter‑intuitive result: when the Rashomon parameter ε (the loss threshold defining “good” models) is held constant across neighbouring datasets, greater inter‑class distribution overlap leads to a smaller Rashomon set and thus lower ambiguity. This reverses the trend reported in earlier studies that compared distinct tasks, where higher overlap (lower separability) was associated with higher multiplicity. The reversal stems from fixing ε across neighbours rather than allowing it to adapt to each task’s difficulty. The authors prove monotonicity of ambiguity within the Rashomon set and argue that higher overlap excludes more models from the set under a shared ε.

To validate the theory, the authors apply the framework to two widely used data‑processing domains.

-

Active Learning – Traditional acquisition strategies (uncertainty, diversity, etc.) implicitly choose among neighbouring datasets but do not consider downstream multiplicity. The authors conduct the first systematic empirical study of multiplicity across several acquisition heuristics, confirming that lower separability indeed yields lower multiplicity even beyond the theoretical assumptions. Building on this insight, they propose multiplicity‑aware acquisition methods that deliberately select points which, when labeled, produce a neighbour dataset with minimal ambiguity while preserving predictive accuracy. Experiments on benchmark binary classification tasks show up to a 15 % reduction in ambiguity compared with standard baselines, with negligible loss in test accuracy.

-

Data Imputation – Different imputation techniques (mean, k‑NN, matrix factorization, etc.) generate distinct neighbours differing only at the missing entries. The authors quantify how each technique reshapes the Rashomon set and consequently the ambiguity. They find that higher missingness amplifies the “steerability” of the dataset, giving the practitioner stronger control over multiplicity. Their multiplicity‑aware imputation algorithm selects an imputation method that minimizes ambiguity for a given missingness level, achieving up to a 10 % absolute drop in ambiguity relative to naïve imputation, again without sacrificing downstream performance.

Overall, the paper contributes: (i) a unified neighbouring‑datasets formalism for studying data‑processing impacts on multiplicity; (ii) a theoretical reversal of the overlap‑multiplicity relationship under a shared Rashomon parameter; (iii) the first empirical multiplicity analyses for active learning and imputation; and (iv) practical algorithms that let developers steer multiplicity toward consistency (e.g., medical decision‑making) or diversity (e.g., lending, hiring) as desired.

The work highlights that data‑centric decisions can be as consequential as model‑centric ones for the fairness, robustness, and interpretability of deployed systems. Limitations include the focus on binary classification, reliance on a specific loss‑based Rashomon definition, and the need for scalable approximations of the Rashomon set in large‑scale settings. Future research directions suggested are extending the theory to multi‑class and regression tasks, integrating privacy‑preserving mechanisms, and exploring real‑time multiplicity monitoring in production pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment