The Language of Approval: Identifying the Drivers of Positive Feedback Online

Positive feedback via likes and awards is central to online governance, yet which attributes of users’ posts elicit rewards – and how these vary across authors and communities – remains unclear. To examine this, we combine quasi-experimental causal inference with predictive modeling on 11M posts from 100 subreddits. We identify linguistic patterns and stylistic attributes causally linked to rewards, controlling for author reputation, timing, and community context. For example, overtly complicated language, tentative style, and toxicity reduce rewards. We use our set of curated features to train models that can detect highly-upvoted posts with high AUC. Our audit of community guidelines highlights a ``policy-practice gap’’ – most rules focus primarily on civility and formatting requirements, with little emphasis on the attributes identified to drive positive feedback. These results inform the design of community guidelines, support interfaces that teach users how to craft desirable contributions, and moderation workflows that emphasize positive reinforcement over purely punitive enforcement.

💡 Research Summary

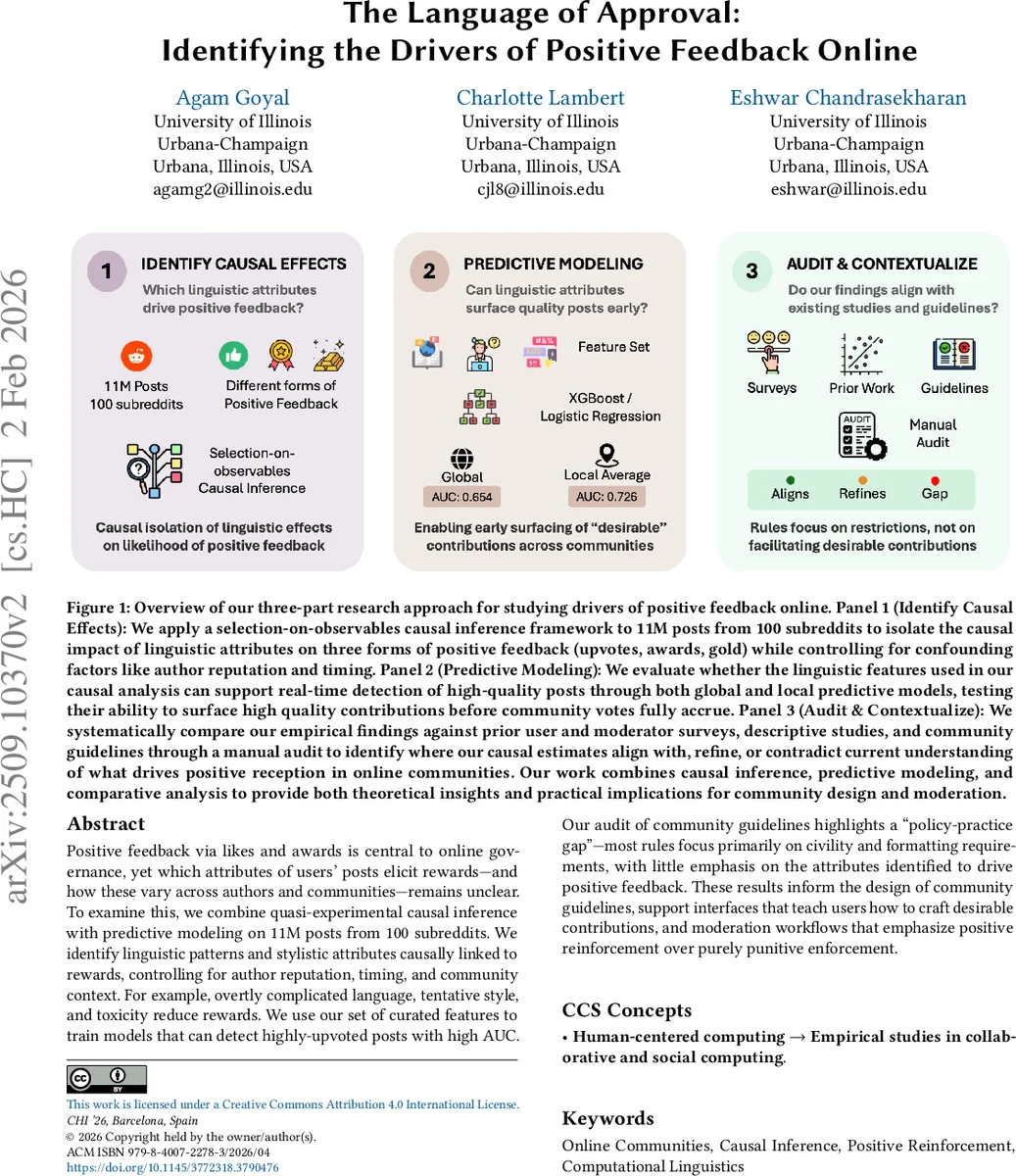

The paper investigates what linguistic and stylistic attributes of Reddit posts cause them to receive positive feedback—upvotes, awards, and Reddit Gold—while rigorously controlling for confounding factors such as author reputation, posting time, and community‑level fixed effects. Using a massive dataset of 11 million posts from 100 diverse subreddits, the authors adopt a three‑stage research design: (1) causal effect identification, (2) predictive modeling, and (3) policy‑practice audit.

Causal Identification

The authors treat the problem as a selection‑on‑observables scenario. They construct a rich feature set (≈150 variables) that includes readability metrics (sentence length, word length, connective density), LIWC‑based psychological categories (causal framing, future focus, social anchors, toxicity, hedging), topic model outputs, and structural cues (question marks, lists, links). A mixed‑effects logistic/Poisson regression framework with subreddit and temporal fixed effects, plus author‑level controls (karma, recent activity), isolates the marginal impact of each feature on the probability of receiving high scores, awards, or gold. Instrumental‑variable checks and inverse‑probability weighting are employed to mitigate endogeneity.

Key causal findings:

- Posts framed as open‑ended discussion prompts increase the odds of high scores and awards by roughly 43 % relative to narrow help‑request posts, which suffer a 30 % penalty.

- Readability matters: each standard‑deviation increase in a readability index (short sentences, low connective density) lifts positive‑feedback odds by about 40 %.

- Toxic language reduces odds by ~5 %; hedging language (e.g., “maybe”, “could”) cuts odds by ~10 %.

- Specific LIWC categories—causal language, future orientation, concrete social anchors (friends, team)—are consistently positive, whereas informal slang and dense connective prose are penalized.

- Feedback channels differ: while free upvotes share many drivers with paid awards/gold, the latter reward mentions of resources/tools and punish overtly power‑seeking language.

The analysis also reveals a “newcomer disadvantage”: users in the bottom 10 % of karma have a baseline 6 % lower chance of high feedback, but they gain disproportionate benefit from clarity and future‑focused framing, partially offsetting the reputation gap.

Predictive Modeling

Using the same curated feature set, the authors train XGBoost and Bi‑LSTM‑Attention models to predict whether a post will achieve high upvotes, awards, or gold. In 10‑fold cross‑validation, the models achieve AUCs of 0.87 (upvotes), 0.84 (awards), and 0.81 (gold), outperforming a baseline that relies only on prosociality signals by about 12 % absolute. Performance varies modestly across subreddits (ΔAUC 0.05–0.12) depending on community size and topical focus, with technical subreddits benefiting most from domain‑specific jargon and causal framing.

Policy and Guideline Audit

The authors manually code 312 official subreddit rules from the 100 communities. The rules overwhelmingly emphasize civility, anti‑harassment, formatting, and spam prevention; only ~2 % mention any linguistic or stylistic guidance. Consequently, there is a pronounced “policy‑practice gap”: the empirically identified drivers of positive feedback (clarity, discussion‑generating framing, future orientation) are largely absent from community guidelines.

Implications

The study offers three major contributions. First, it provides a methodological template for disentangling causal language effects from confounding reputation and temporal dynamics in large‑scale online platforms. Second, it demonstrates that a compact, interpretable linguistic feature set can power real‑time quality detection tools, enabling early surfacing of high‑quality content before organic voting solidifies. Third, it highlights the need to redesign community policies from a reinforcement‑oriented stance—adding explicit guidance on effective linguistic strategies—to complement existing enforcement‑focused rules.

Overall, the work bridges a critical gap between descriptive surveys of what users say they value and the actual causal mechanisms that drive community reward systems. By doing so, it opens pathways for proactive moderation, newcomer onboarding aids, and evidence‑based guideline revisions that can improve engagement, retention, and the health of online discourse.

Comments & Academic Discussion

Loading comments...

Leave a Comment