Ebisu: Benchmarking Large Language Models in Japanese Finance

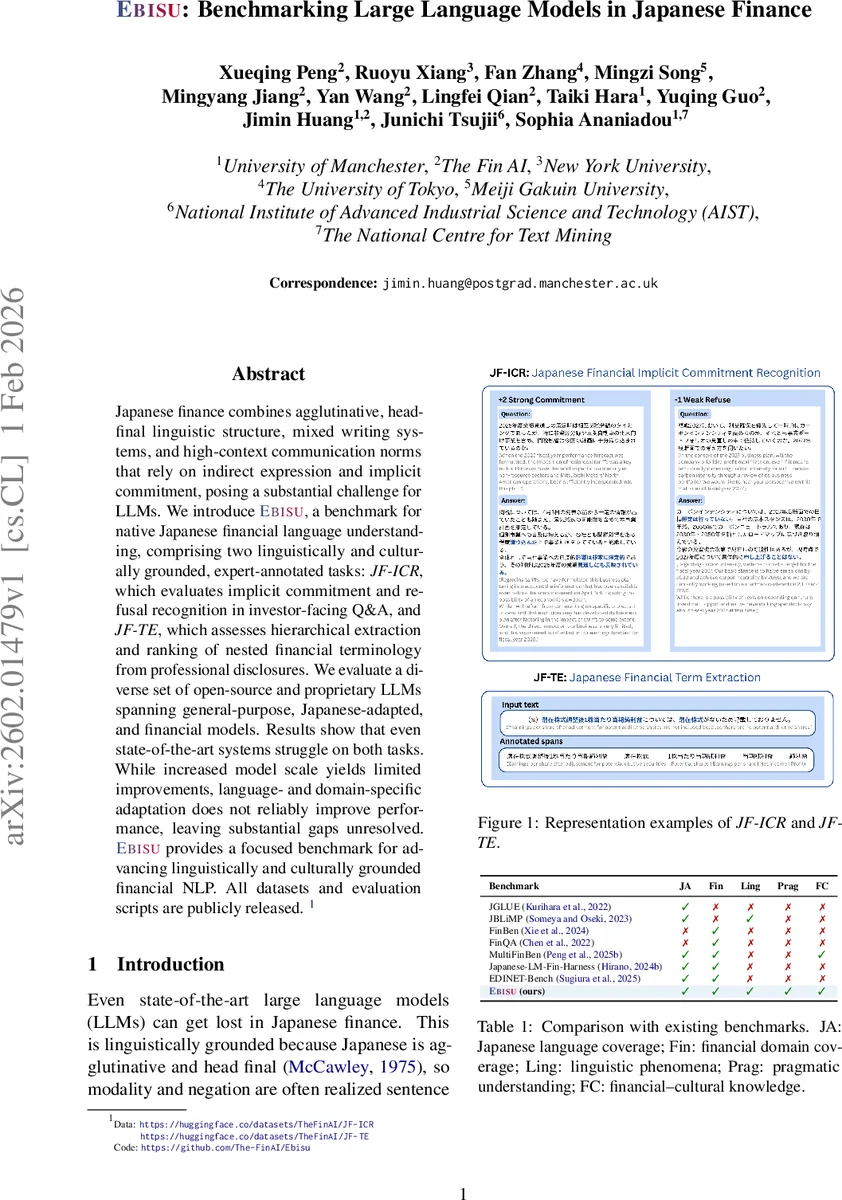

Japanese finance combines agglutinative, head-final linguistic structure, mixed writing systems, and high-context communication norms that rely on indirect expression and implicit commitment, posing a substantial challenge for LLMs. We introduce Ebisu, a benchmark for native Japanese financial language understanding, comprising two linguistically and culturally grounded, expert-annotated tasks: JF-ICR, which evaluates implicit commitment and refusal recognition in investor-facing Q&A, and JF-TE, which assesses hierarchical extraction and ranking of nested financial terminology from professional disclosures. We evaluate a diverse set of open-source and proprietary LLMs spanning general-purpose, Japanese-adapted, and financial models. Results show that even state-of-the-art systems struggle on both tasks. While increased model scale yields limited improvements, language- and domain-specific adaptation does not reliably improve performance, leaving substantial gaps unresolved. Ebisu provides a focused benchmark for advancing linguistically and culturally grounded financial NLP. All datasets and evaluation scripts are publicly released.

💡 Research Summary

The paper introduces Ebisu, a novel benchmark specifically designed to evaluate large language models (LLMs) on native Japanese financial language understanding. Japanese finance presents a unique combination of linguistic challenges: an agglutinative, head‑final syntax, mixed writing systems (kanji, hiragana, katakana), and high‑context communication that often conveys intent indirectly through pragmatic cues such as sentence‑final auxiliaries and nuanced negation. Existing multilingual or finance‑focused benchmarks either lack deep linguistic coverage or are tailored to English‑centric corpora, making them ill‑suited for assessing these subtle phenomena.

Ebisu comprises two expert‑annotated tasks:

-

JF‑ICR (Japanese Financial Implicit Commitment Recognition) – a classification task over 94 single‑turn investor‑facing Q&A pairs drawn from earnings calls, shareholder meetings, and briefing sessions of four Japanese companies (2023‑2026). Each answer is labeled on a five‑point scale ranging from strong commitment (+2) to strong refusal (‑2). Annotation involved two native‑level financial experts, double‑annotation, adjudication, and achieved high inter‑annotator agreement (Macro‑F1 0.9215, Cohen’s κ 0.8769, Krippendorff’s α 0.8768).

-

JF‑TE (Japanese Financial Term Extraction) – a hierarchical extraction and ranking task built from 202 note‑level excerpts of professional disclosures (annual securities reports). Annotators identified 2,412 term mentions covering 777 unique financial concepts, handling nested nominal compounds and script‑variant loanwords. Evaluation uses exact‑match F1 and HitRate@K (K = 1, 5, 10) to assess both boundary detection and ranking quality.

The authors evaluate 22 LLMs, spanning open‑weight models (e.g., LLaMA, GPT‑NeoX), Japanese‑adapted variants, and proprietary finance‑specialized systems. Results reveal that even state‑of‑the‑art models perform poorly: accuracy on JF‑ICR rarely exceeds 30 %, and F1 on JF‑TE stays below 0.4. Scaling up model size yields modest gains (+0.33 accuracy, +0.38 F1) but does not close the gap. Crucially, language‑specific adaptation (Japanese‑pretrained models) does not consistently outperform English‑centric counterparts at comparable scales, and further domain‑specific pretraining sometimes harms performance (e.g., a 0.12 F1 drop on JF‑TE).

Error analysis shows that failures concentrate on linguistically and pragmatically defined phenomena rather than missing domain knowledge. On JF‑ICR, models misinterpret clause‑final auxiliaries, layered negation, and indirect refusal strategies, often confusing weak refusals with neutral or hedged intents. On JF‑TE, performance deteriorates as compound depth and script variability increase; the gap between HitRate@1 and HitRate@10 indicates difficulty in precise term‑boundary resolution and handling of katakana loanwords whose meanings have drifted from source languages.

The paper’s contributions are fourfold: (1) the Ebisu benchmark itself, filling a gap for Japanese‑specific financial NLP; (2) two richly annotated datasets that capture real‑world corporate communication; (3) a comprehensive evaluation with diagnostic analyses that isolate the limited impact of scaling and adaptation; (4) public release of data, annotation guidelines, and evaluation scripts to foster further research.

Overall, the study demonstrates that current LLM scaling and adaptation strategies are insufficient for mastering the morphosyntactic and pragmatic mechanisms central to Japanese financial discourse. Ebisu provides a focused, high‑quality testbed for future work aiming to bridge this gap and develop models capable of nuanced intent inference and term grounding in Japanese finance.

Comments & Academic Discussion

Loading comments...

Leave a Comment