When Domains Interact: Asymmetric and Order-Sensitive Cross-Domain Effects in Reinforcement Learning for Reasoning

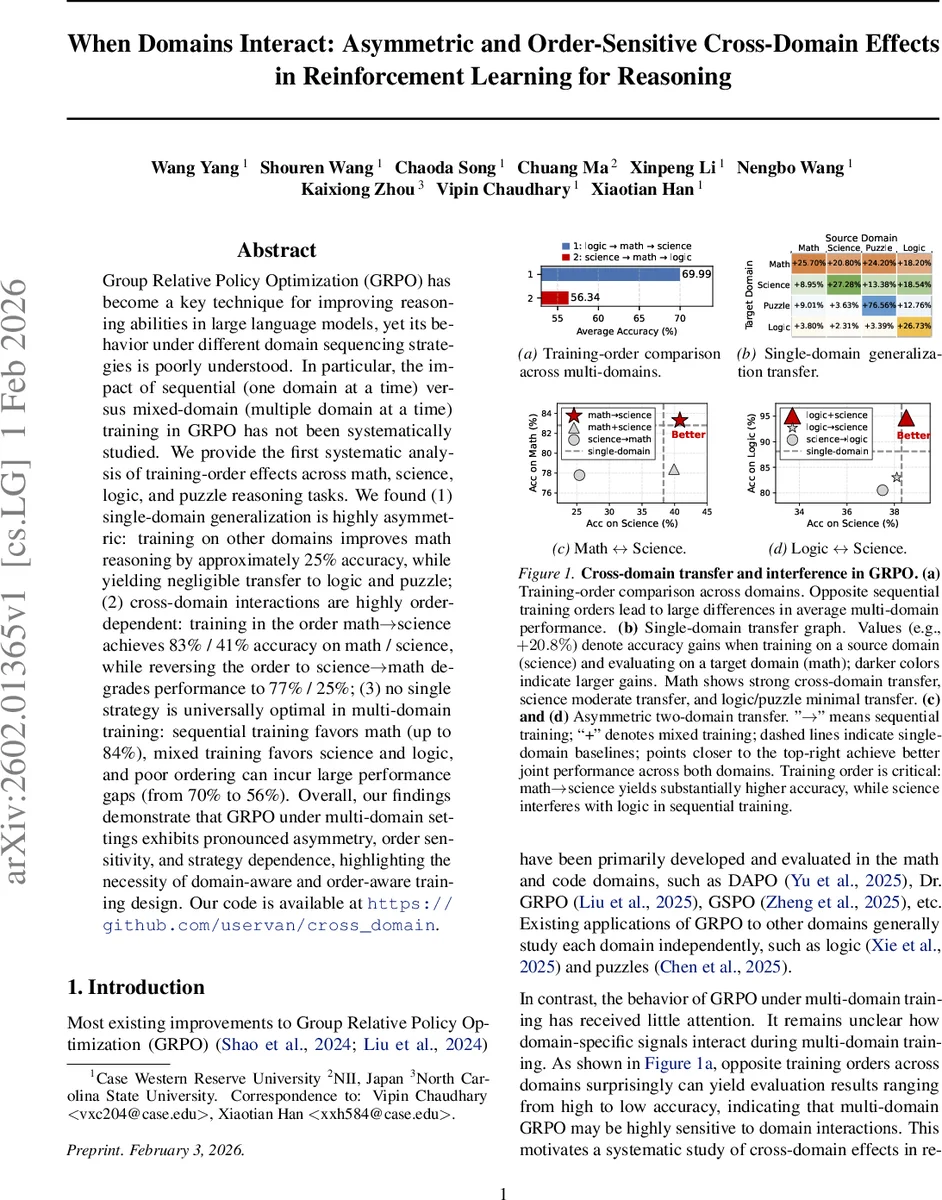

Group Relative Policy Optimization (GRPO) has become a key technique for improving reasoning abilities in large language models, yet its behavior under different domain sequencing strategies is poorly understood. In particular, the impact of sequential (one domain at a time) versus mixed-domain (multiple domain at a time) training in GRPO has not been systematically studied. We provide the first systematic analysis of training-order effects across math, science, logic, and puzzle reasoning tasks. We found (1) single-domain generalization is highly asymmetric: training on other domains improves math reasoning by approximately 25% accuracy, while yielding negligible transfer to logic and puzzle; (2) cross-domain interactions are highly order-dependent: training in the order math$\rightarrow$science achieves 83% / 41% accuracy on math / science, while reversing the order to science$\rightarrow$math degrades performance to 77% / 25%; (3) no single strategy is universally optimal in multi-domain training: sequential training favors math (up to 84%), mixed training favors science and logic, and poor ordering can incur large performance gaps (from 70% to 56%). Overall, our findings demonstrate that GRPO under multi-domain settings exhibits pronounced asymmetry, order sensitivity, and strategy dependence, highlighting the necessity of domain-aware and order-aware training design.

💡 Research Summary

The paper “When Domains Interact: Asymmetric and Order‑Sensitive Cross‑Domain Effects in Reinforcement Learning for Reasoning” investigates how the Group Relative Policy Optimization (GRPO) algorithm behaves when applied to multiple reasoning domains simultaneously. The authors focus on four representative domains—mathematics, science, logic, and puzzles—and ask three central questions: (1) How does training on a single domain affect performance on the other domains? (2) How do two‑domain sequential training orders influence each other’s performance? (3) What are the best strategies for training on three or more domains at once?

Methodology

The experiments use the Qwen‑3‑4B‑Base model, a 4‑billion‑parameter language model, fine‑tuned with GRPO. Training data consist of 5 K examples per domain, drawn from publicly available benchmarks (Skywork‑OR1‑RL‑Data for math, OpenScienceReasoning‑2 for science, knights‑and‑knaves for logic, and Enigmata‑Data for puzzles). The model is trained for 15 epochs with a batch size of 256 and a learning rate of 1e‑6. For each domain, a held‑out test set is used: MA TH500 for math, GPQA for science, a subset of the original logic test, and a puzzle test derived from Enigmata.

Single‑Domain Generalization

When the model is trained on a single domain and evaluated on the others, mathematics shows the strongest cross‑domain transfer. Training on any of the four domains improves math accuracy on MA TH500 by roughly 20–25 percentage points, with the largest gain (+25.7 pp) coming from puzzle training. Science also benefits from cross‑domain training, but to a lesser extent (+20.8 pp from math). Logic and puzzles exhibit minimal transfer; only in‑domain training yields high performance (logic reaches 97 % accuracy only when trained on logic data). The authors attribute the strong math transfer to the model’s pre‑training exposure to mathematical content, which makes the policy more receptive to additional signals.

Two‑Domain Sequential Interaction

The authors then examine ordered pairs of domains. The order “math → science” yields the best joint performance (83 % math, 41 % science). Reversing the order to “science → math” degrades both (77 % math, 25 % science), demonstrating a clear order‑sensitivity. Similar asymmetric effects appear between science and logic: training science first harms subsequent logic performance, while logic first harms science. Mixed (simultaneous) training of the two domains mitigates interference and improves both, though it still falls short of the optimal sequential order for the math–science pair. These findings suggest that GRPO’s shared policy parameters can be overwritten by later domains, leading to catastrophic forgetting when the later domain’s reward structure conflicts with the earlier one.

Multi‑Domain Training Strategies

Three families of multi‑domain strategies are compared: (a) the best sequential order (e.g., logic → math → science), (b) the worst sequential order (science → math → logic), and (c) mixed training where all three domains are presented together in each batch. Table 1 shows dramatic variance: the best sequential order reaches 84 % on math, 36.7 % on science, and 87.3 % on logic; the worst sequential order drops to 78.2 % math, 26.9 % science, and 63.8 % logic. Mixed training (math + science + logic) yields a more balanced profile (80.4 % math, 40 % science, 95 % logic). The results indicate that different domains have distinct preferences: math benefits most from a carefully chosen sequential curriculum, while science and logic achieve higher scores under mixed exposure.

Key Insights

- Asymmetric Transfer – Cross‑domain benefits are not reciprocal; math is a “donor” domain, while logic and puzzles are “receivers” only of their own data.

- Order Sensitivity – The sequence in which domains are presented can cause up to a 14 % absolute drop in average accuracy, highlighting catastrophic forgetting within a shared GRPO policy.

- Strategy Dependence – No single training regimen dominates across all domains; optimal curricula must be tailored to the target mix of tasks.

Contributions and Implications

The paper provides the first systematic empirical study of GRPO under multi‑domain conditions, establishing that domain interactions are highly asymmetric and order‑dependent. It offers practical guidance: when a target application prioritizes math, a sequential curriculum ending with math (or placing math first) is advisable; when balanced performance across diverse reasoning tasks is required, mixed training is preferable. Ignoring these dynamics can lead to substantial performance gaps (e.g., 70 % vs. 56 % average accuracy).

Limitations and Future Work

All experiments are conducted on a 4 B‑parameter model; scaling to larger models (e.g., 70 B) may alter transfer dynamics. The study also uses a fixed GRPO hyper‑parameter set; adaptive learning rates or domain‑specific adapters could further alleviate forgetting. Future research could explore meta‑learning approaches to automatically discover optimal curricula, or investigate hierarchical policy architectures that maintain domain‑specific sub‑policies while sharing a common backbone.

In summary, the work demonstrates that multi‑domain reinforcement learning for reasoning is far from a “one‑size‑fits‑all” problem. Effective training requires careful consideration of domain asymmetries, ordering effects, and the trade‑off between specialization and balance. These findings lay a foundation for building more versatile, multi‑task reasoning agents based on GRPO and similar policy‑gradient methods.

Comments & Academic Discussion

Loading comments...

Leave a Comment