A-MapReduce: Executing Wide Search via Agentic MapReduce

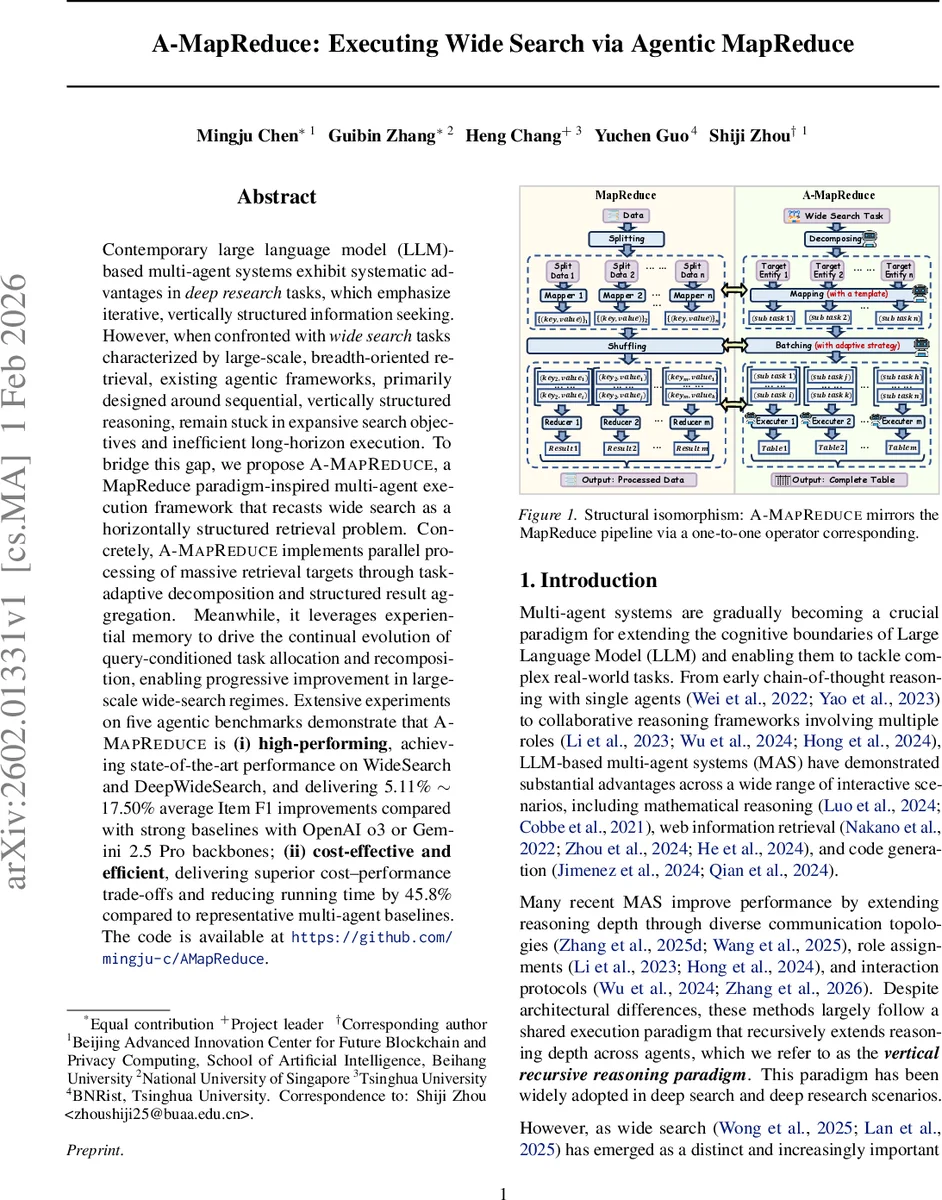

Contemporary large language model (LLM)-based multi-agent systems exhibit systematic advantages in deep research tasks, which emphasize iterative, vertically structured information seeking. However, when confronted with wide search tasks characterized by large-scale, breadth-oriented retrieval, existing agentic frameworks, primarily designed around sequential, vertically structured reasoning, remain stuck in expansive search objectives and inefficient long-horizon execution. To bridge this gap, we propose A-MapReduce, a MapReduce paradigm-inspired multi-agent execution framework that recasts wide search as a horizontally structured retrieval problem. Concretely, A-MapReduce implements parallel processing of massive retrieval targets through task-adaptive decomposition and structured result aggregation. Meanwhile, it leverages experiential memory to drive the continual evolution of query-conditioned task allocation and recomposition, enabling progressive improvement in large-scale wide-search regimes. Extensive experiments on five agentic benchmarks demonstrate that A-MapReduce is (i) high-performing, achieving state-of-the-art performance on WideSearch and DeepWideSearch, and delivering 5.11% - 17.50% average Item F1 improvements compared with strong baselines with OpenAI o3 or Gemini 2.5 Pro backbones; (ii) cost-effective and efficient, delivering superior cost-performance trade-offs and reducing running time by 45.8% compared to representative multi-agent baselines. The code is available at https://github.com/mingju-c/AMapReduce.

💡 Research Summary

The paper addresses a critical gap in large‑language‑model (LLM)‑driven multi‑agent systems (MAS): while existing frameworks excel at “deep search” tasks that require recursive, vertically‑structured reasoning, they struggle with “wide search” problems that involve massive, breadth‑oriented retrieval of thousands to millions of entities. To bridge this gap, the authors introduce A‑MapReduce, a novel agentic execution framework inspired by the classic MapReduce paradigm.

Core Concept

A‑MapReduce reconceptualizes a wide‑search query q as a three‑part execution decision Θ = (M_q, P_q, B_q).

- M_q (task matrix) encodes a set of N target entities with a subset of known attributes (K′ < K).

- P_q (task template) is a string containing placeholders aligned to the columns of M_q; filling the template with a row of M_q yields an atomic retrieval prompt.

- B_q (batching strategy) determines how the atomic prompts are grouped for parallel execution (per‑atom, attribute‑wise, or adaptive batching).

The Mapping stage first performs a lightweight sequential analysis to discover implicit target entities, then samples Θ from a query‑conditioned distribution p(Θ|q, observations). Using M_q and P_q, the system generates a set of atomic tasks T(q) = {t_i}. B_q partitions T(q) into m disjoint batches {B_at^k}.

During the Reducing stage, each batch is dispatched to an independent search agent A_search^k, which executes the prompts in parallel and returns a partial table ˆY_k. The manager agent A_manage aggregates all partial tables, validates them against the schema S, and, if completeness is violated, triggers a lightweight delta‑patch round by resampling a repair decision Θ_rep.

Experience‑based Evolution

A‑MapReduce maintains an experiential memory M = {D, H, F_ψ}. D stores execution records (query, decision, trace, utility). H is a hint pool containing distilled patterns such as optimal batch sizes, effective templates, or matrix initializations, each with a score w_h and a provenance set of supporting tasks. The distillation operator F_ψ aggregates hints, allowing the system to condition future Θ sampling on historically high‑utility patterns. Consequently, the decision distribution p(Θ|q) progressively shifts toward regions that maximize a utility function u = Q(Y; q) − λ_c C(τ) − λ_t D(τ), where Q measures output quality, C is cost, and D is delay. In practice, only quality feedback is used for updates to ensure reproducibility.

Experimental Evaluation

Using OpenAI o3 and Gemini 2.5 Pro as backbone LLMs, the authors evaluate A‑MapReduce on five agentic benchmarks, notably WideSearch and DeepWideSearch. Results show:

- Item‑F1 improvements of 5.11 % – 17.50 % over strong baselines (up to 27.62 % on specific tasks).

- Runtime reduction of 45.8 % compared with representative multi‑agent baselines.

- Cost savings of approximately $1.10 per task on average.

- The experiential memory mechanism yields consistent utility gains after a few iterations, confirming the benefit of experience‑driven decision refinement.

Significance and Limitations

A‑MapReduce demonstrates that wide‑search tasks—requiring massive coverage and structured aggregation—can be efficiently handled by a horizontally‑structured, parallel execution model coupled with continual learning from past executions. The framework is open‑source, facilitating reproducibility and future extensions. However, current experiments focus on text‑based retrieval; extending to multimodal data (images, audio) and scaling hint management for very large memory footprints remain open research directions.

In summary, the paper provides a well‑motivated, technically solid, and empirically validated approach that transforms the way LLM‑based multi‑agent systems tackle breadth‑oriented information retrieval, achieving superior quality, speed, and cost efficiency through a MapReduce‑style architecture and experience‑driven evolution.

Comments & Academic Discussion

Loading comments...

Leave a Comment