PARSE: An Open-Domain Reasoning Question Answering Benchmark for Persian

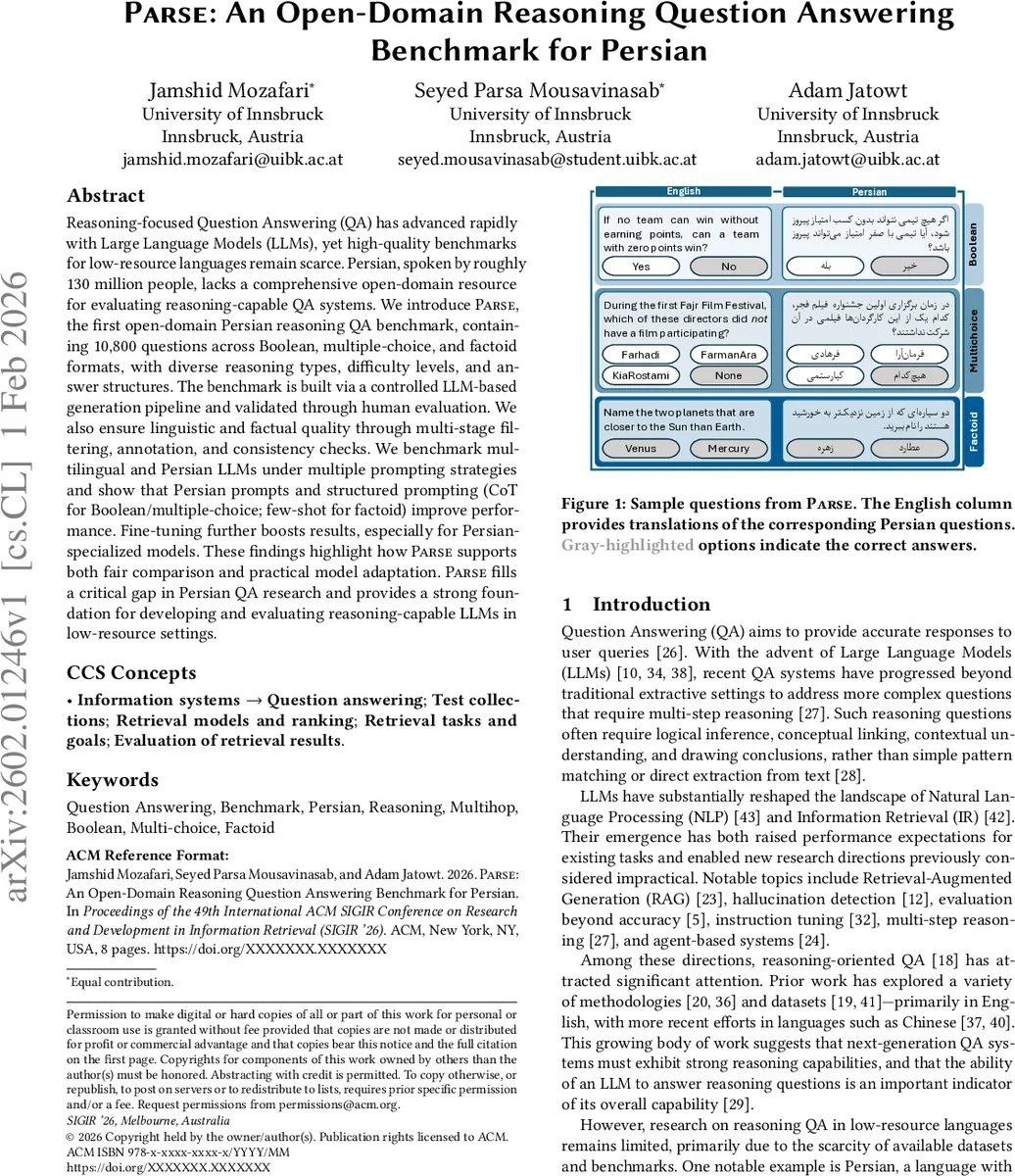

Reasoning-focused Question Answering (QA) has advanced rapidly with Large Language Models (LLMs), yet high-quality benchmarks for low-resource languages remain scarce. Persian, spoken by roughly 130 million people, lacks a comprehensive open-domain resource for evaluating reasoning-capable QA systems. We introduce PARSE, the first open-domain Persian reasoning QA benchmark, containing 10,800 questions across Boolean, multiple-choice, and factoid formats, with diverse reasoning types, difficulty levels, and answer structures. The benchmark is built via a controlled LLM-based generation pipeline and validated through human evaluation. We also ensure linguistic and factual quality through multi-stage filtering, annotation, and consistency checks. We benchmark multilingual and Persian LLMs under multiple prompting strategies and show that Persian prompts and structured prompting (CoT for Boolean/multiple-choice; few-shot for factoid) improve performance. Fine-tuning further boosts results, especially for Persian-specialized models. These findings highlight how PARSE supports both fair comparison and practical model adaptation. PARSE fills a critical gap in Persian QA research and provides a strong foundation for developing and evaluating reasoning-capable LLMs in low-resource settings.

💡 Research Summary

The paper introduces PARSE, the first open‑domain reasoning question‑answering (QA) benchmark for Persian, addressing a critical gap in resources for low‑resource languages. PARSE comprises 10,800 QA pairs evenly distributed across three primary formats—Boolean (yes/no), Multiple‑choice (four options), and Factoid (short answer)—and further subdivided by reasoning dimensions (multihop, complex inference, negation, comparison, list‑based, and unanswerable). Each sub‑type contains 600 questions, split equally into easy, medium, and hard difficulty levels, yielding a total of 18 configurations. This taxonomy mirrors the breadth of English benchmarks such as SQuAD, HotpotQA, and MultiRC, but is the first to bring such comprehensive coverage to Persian.

Data creation follows a two‑stage pipeline. First, the authors employ GPT‑4o with carefully engineered prompts that encode (i) the role of the model, (ii) the target QA type and sub‑type, (iii) concrete constraints (answer format, number of options, use of Persian, realism, topical diversity), and (iv) CSV‑style output instructions. Prompts are configuration‑specific, and batches of 30 questions (10 per difficulty) are generated repeatedly until the target count is reached. Throughout generation, the authors monitor linguistic fidelity, correct handling of negation/comparison, balanced option ordering, and genuine multihop evidence chains. Second, all outputs are normalized into a JSON schema, then subjected to multi‑pass quality control: structural validation, duplicate removal, semantic checks (e.g., Boolean must be answerable with “Yes/No”, multiple‑choice must have exactly one correct option unless it is a non‑answerable item), and difficulty calibration. The pipeline yields a uniformly balanced dataset with high linguistic naturalness.

Human validation is performed to guard against systematic LLM errors. Twelve native Persian speakers evaluate a 10 % sample (1,080 items) on three dimensions—Ambiguity, Readability, and Correctness—using a 1‑5 Likert scale. Average scores exceed 4.3 across all dimensions, confirming overall quality. A separate difficulty assessment shows that human accuracy declines from easy to hard items, indicating that the difficulty labels reflect genuine cognitive load rather than superficial factors.

The benchmark is used to evaluate a suite of multilingual and Persian‑specialized LLMs (Qwen, LLaMA, Mistral, Gemma, etc.) under three prompting strategies: Zero‑shot, Few‑shot, and Chain‑of‑Thought (CoT). Key findings include: (1) Persian‑language prompts consistently outperform English prompts for the same model, highlighting the importance of language‑specific instruction; (2) CoT prompting yields the largest gains for Boolean and Multiple‑choice questions, especially on sub‑types requiring multihop reasoning; (3) Few‑shot prompting is most effective for Factoid questions; (4) larger models (e.g., LLaMA‑70B, Qwen‑72B) achieve higher scores, yet fine‑tuning Persian‑focused models narrows or even eliminates the gap, with the biggest improvements observed on multihop and complex inference items. These experiments demonstrate that both prompt engineering and domain‑adapted fine‑tuning are crucial for unlocking reasoning capabilities in Persian LLMs.

The authors claim four main contributions: (i) the creation of PARSE, a publicly released benchmark covering a full spectrum of QA phenomena in Persian; (ii) a reproducible LLM‑driven generation pipeline with multi‑stage human validation; (iii) extensive empirical analysis of prompting strategies and fine‑tuning effects on multilingual and Persian models; and (iv) evidence that Persian‑specific prompts and fine‑tuning substantially boost performance, supporting fair comparison and practical model adaptation.

Limitations are acknowledged. The current question pool focuses on general knowledge and cultural topics, leaving domain‑specific areas (medical, legal, technical) unexplored. Residual factual inaccuracies persist in the LLM‑generated content, suggesting a need for automated fact‑checking mechanisms. Future work is proposed to expand the benchmark to specialized domains, integrate cross‑checking pipelines, and explore human‑LLM collaborative generation to further improve quality.

In conclusion, PARSE provides a rigorously constructed, linguistically sound, and richly annotated resource for evaluating reasoning‑capable Persian QA systems. By demonstrating the impact of language‑aware prompting and fine‑tuning, the paper offers actionable insights for researchers aiming to develop robust, reasoning‑enabled LLMs in low‑resource languages.

Comments & Academic Discussion

Loading comments...

Leave a Comment