Chronos: Learning Temporal Dynamics of Reasoning Chains for Test-Time Scaling

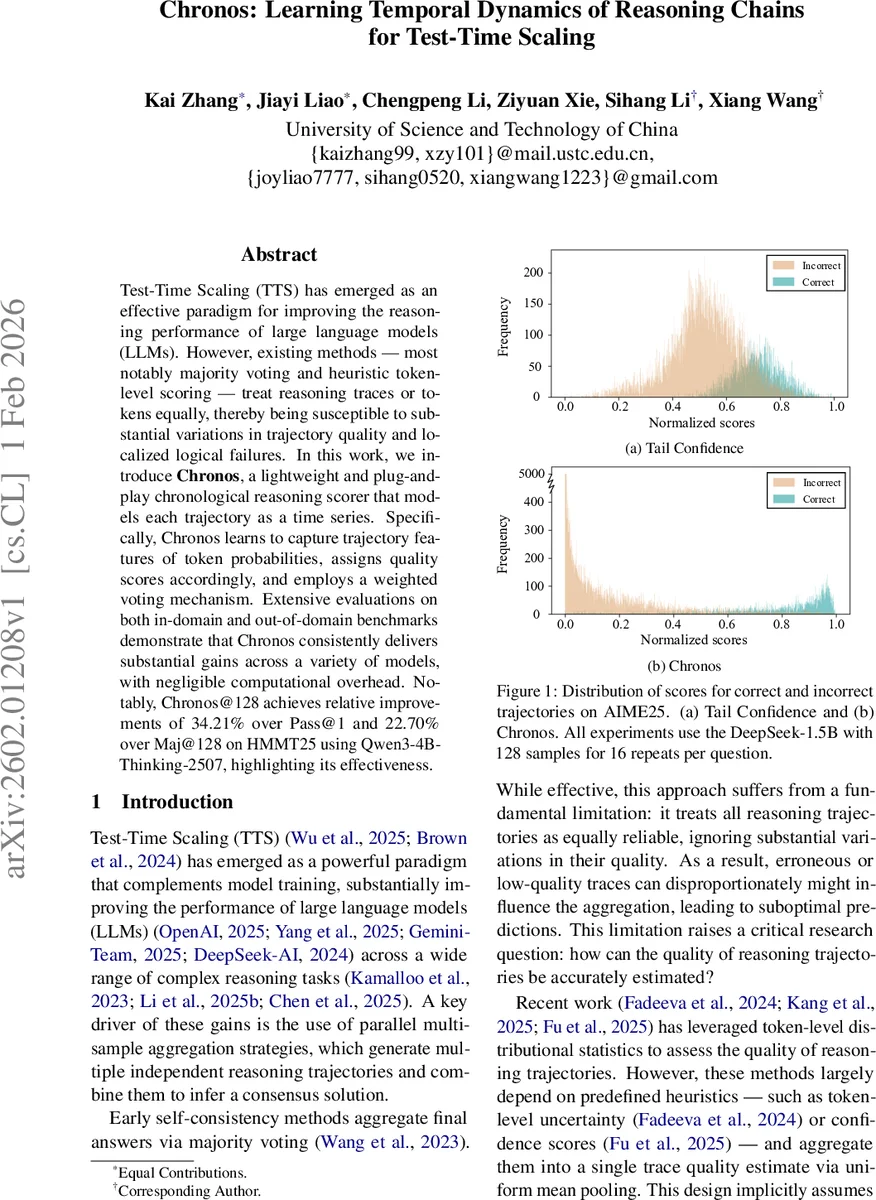

Test-Time Scaling (TTS) has emerged as an effective paradigm for improving the reasoning performance of large language models (LLMs). However, existing methods, most notably majority voting and heuristic token-level scoring, treat all reasoning traces or tokens equally, which makes them vulnerable to large variations in trajectory quality and to localized logical failures. In this work, we introduce **Chronos**, a lightweight, plug-and-play chronological reasoning scorer that models each trajectory as a time series. Specifically, Chronos learns temporal features from the sequence of token probabilities along a trajectory, assigns each trajectory a quality score, and aggregates answers with a score-weighted voting mechanism. Extensive evaluations on both in-domain and out-of-domain benchmarks show that Chronos consistently delivers substantial gains across a variety of models with negligible computational overhead. Notably, with Qwen3-4B-Thinking-2507 on HMMT25, Chronos@128 achieves relative improvements of 34.21% over Pass@1 and 22.70% over Maj@128.
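The difference between majority voting and score-weighted voting can be sketched in a few lines of Python. The abstract does not specify how Chronos turns quality scores into votes, so this sketch assumes the simplest scheme: each trajectory adds its score to the total of its final answer, and the answer with the largest total wins. The function names, answers, and scores are hypothetical placeholders.

```python
from collections import Counter, defaultdict


def majority_vote(answers):
    """Every trajectory gets one equal vote."""
    return Counter(answers).most_common(1)[0][0]


def weighted_vote(answers, scores):
    """Each trajectory votes with its quality score (assumed weighting scheme)."""
    totals = defaultdict(float)
    for answer, score in zip(answers, scores):
        totals[answer] += score
    return max(totals, key=totals.get)


answers = ["12", "12", "12", "7", "7"]
scores = [0.10, 0.20, 0.15, 0.90, 0.80]  # hypothetical scorer outputs in (0, 1)
print(majority_vote(answers))          # 12
print(weighted_vote(answers, scores))  # 7
```

Here three low-scored traces outvote two high-scored ones under majority voting, whereas the weighted vote follows the traces the scorer trusts.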

💡 Research Summary

Chronos tackles a core limitation of current Test-Time Scaling (TTS) methods for large language models (LLMs): the assumption that every reasoning trajectory, or even every token within a trajectory, contributes equally to the final answer. Simple majority voting gives each trace one equal vote regardless of its quality, while token-level confidence heuristics collapse the temporal information in a chain of thought into a single scalar by uniform averaging. This homogenization masks localized failures, especially in intermediate steps, and can let low-quality or hallucinated traces dominate the aggregation.
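A toy illustration of why uniform averaging masks localized failures (this is not part of Chronos): two hypothetical per-step confidence traces share the same mean, so a plain average cannot tell them apart, while even a crude local statistic, here the lowest mean over any two consecutive steps, chosen only for contrast, exposes the late drop. Chronos replaces such hand-crafted statistics with learned convolutional features.

```python
import numpy as np

# Two hypothetical per-step confidence traces with the same mean (2.0).
steady = np.full(8, 2.0)
dipping = np.array([2.5, 2.5, 2.5, 2.5, 2.5, 2.5, 0.5, 0.5])  # sharp drop near the end


def uniform_score(s: np.ndarray) -> float:
    """Collapse the whole trace into its plain average."""
    return float(s.mean())


def worst_window(s: np.ndarray, w: int) -> float:
    """Lowest mean over any window of w consecutive steps."""
    return float(np.convolve(s, np.ones(w) / w, mode="valid").min())


print(uniform_score(steady), uniform_score(dipping))      # 2.0 2.0
print(worst_window(steady, 2), worst_window(dipping, 2))  # 2.0 0.5
```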

The authors propose Chronos, a lightweight, plug-and-play scorer that treats each generated reasoning chain as a time series of token-level probability signals. At each decoding step $t$, they compute the negative mean log-probability of the top-$k$ candidate tokens,

$$s_t = -\frac{1}{k}\sum_{j=1}^{k} \log p_t^{(j)},$$

where $p_t^{(j)}$ is the probability of the $j$-th most likely token at step $t$. The resulting sequence $s = (s_1, \ldots, s_{L_{\text{tail}}})$ preserves the chronological evolution of model confidence, allowing the scorer to detect localized spikes of uncertainty (sudden drops in confidence) that may indicate logical errors.
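The per-step signal $s_t$ can be computed from a matrix of log-probabilities with NumPy, as in the minimal sketch below. It assumes full-vocabulary log-probabilities are available for every step; `step_confidence` and `tail_signal` are hypothetical names, and reading $L_{\text{tail}}$ as "the last $L_{\text{tail}}$ steps of the chain" is an assumption, not something the summary states.

```python
import numpy as np


def step_confidence(logprobs: np.ndarray, k: int) -> np.ndarray:
    """s_t = negative mean log-probability of the top-k tokens at each step.

    logprobs: (T, V) array of per-step log-probabilities over the vocabulary.
    Returns a (T,) array with one s_t per decoding step.
    """
    # np.partition moves the k largest entries of each row into the last k slots (unordered).
    topk = np.partition(logprobs, -k, axis=-1)[:, -k:]
    return -topk.mean(axis=-1)


def tail_signal(s: np.ndarray, l_tail: int) -> np.ndarray:
    """Hypothetical helper: keep the last l_tail steps (assumed meaning of L_tail)."""
    return s[-l_tail:]


probs = np.array([[0.7, 0.2, 0.1],
                  [0.4, 0.4, 0.2],
                  [0.9, 0.05, 0.05]])
s = step_confidence(np.log(probs), k=2)
s_tail = tail_signal(s, l_tail=2)
```

With $k > 1$ the runner-up tokens influence the value: in this toy input the sharply peaked third step gets the largest $s_t$ (about 1.55), because the very unlikely second token dominates the average.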

Chronos’ architecture is inspired by Inception-style multi-scale 1-D convolutional networks. First, a 1×1 convolution projects the single-channel signal into $N_{\text{Proj}}$ channels. Parallel 1-D convolutions with different kernel lengths (e.g., 10, 20, and 40 tokens) then process this representation: short kernels capture fine-grained fluctuations, while long kernels capture broader trends across the chain. Their outputs are concatenated and passed through three residual blocks, each consisting of multi-scale convolutional layers followed by a ReLU. Finally, an MLP with a sigmoid output maps the resulting temporal representation to a scalar quality score $\hat{y} \in (0, 1)$.
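The pipeline just described can be sketched in PyTorch as follows. This is an illustration under stated assumptions, not the authors' implementation: the class names, channel widths, residual wiring, and the global average pooling before the MLP are all assumptions, and the odd kernel lengths 9/19/39 were chosen near the quoted 10/20/40 so that `padding="same"` stays symmetric.

```python
import torch
import torch.nn as nn


class MultiScaleConv(nn.Module):
    """Parallel 1-D convolutions with different kernel lengths, concatenated on channels."""

    def __init__(self, in_ch: int, branch_ch: int, kernel_sizes=(9, 19, 39)):
        super().__init__()
        # Odd kernel lengths keep padding="same" symmetric (assumed; the text quotes 10/20/40).
        self.branches = nn.ModuleList(
            [nn.Conv1d(in_ch, branch_ch, k, padding="same") for k in kernel_sizes]
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # x: (batch, channels, time); every branch preserves the time length.
        return torch.cat([branch(x) for branch in self.branches], dim=1)


class ResidualBlock(nn.Module):
    """Multi-scale convolutions followed by ReLU, plus an identity skip connection."""

    def __init__(self, width: int, branch_ch: int, kernel_sizes):
        super().__init__()
        self.conv = MultiScaleConv(width, branch_ch, kernel_sizes)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return x + torch.relu(self.conv(x))


class ChronosScorerSketch(nn.Module):
    def __init__(self, n_proj=16, branch_ch=16, kernel_sizes=(9, 19, 39), n_blocks=3, hidden=32):
        super().__init__()
        width = branch_ch * len(kernel_sizes)  # channels after concatenating the branches
        self.proj = nn.Conv1d(1, n_proj, kernel_size=1)  # 1x1 conv: 1 -> N_Proj channels
        self.stem = MultiScaleConv(n_proj, branch_ch, kernel_sizes)
        self.blocks = nn.Sequential(
            *[ResidualBlock(width, branch_ch, kernel_sizes) for _ in range(n_blocks)]
        )
        self.head = nn.Sequential(nn.Linear(width, hidden), nn.ReLU(), nn.Linear(hidden, 1))

    def forward(self, s: torch.Tensor) -> torch.Tensor:
        # s: (batch, L_tail) per-step confidence signals.
        x = self.proj(s.unsqueeze(1))  # (batch, N_Proj, L_tail)
        x = torch.relu(self.stem(x))   # (batch, width, L_tail)
        x = self.blocks(x)             # (batch, width, L_tail)
        x = x.mean(dim=-1)             # global average pooling over time (assumption)
        return torch.sigmoid(self.head(x)).squeeze(-1)  # (batch,) quality scores


torch.manual_seed(0)
model = ChronosScorerSketch()
scores = model(torch.randn(4, 256))
print(scores.shape)  # torch.Size([4])
```

Because every convolution preserves the time length and pooling happens only at the end, the same module accepts any signal length, which is convenient when experimenting with different tail windows.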
