Refining Context-Entangled Content Segmentation via Curriculum Selection and Anti-Curriculum Promotion

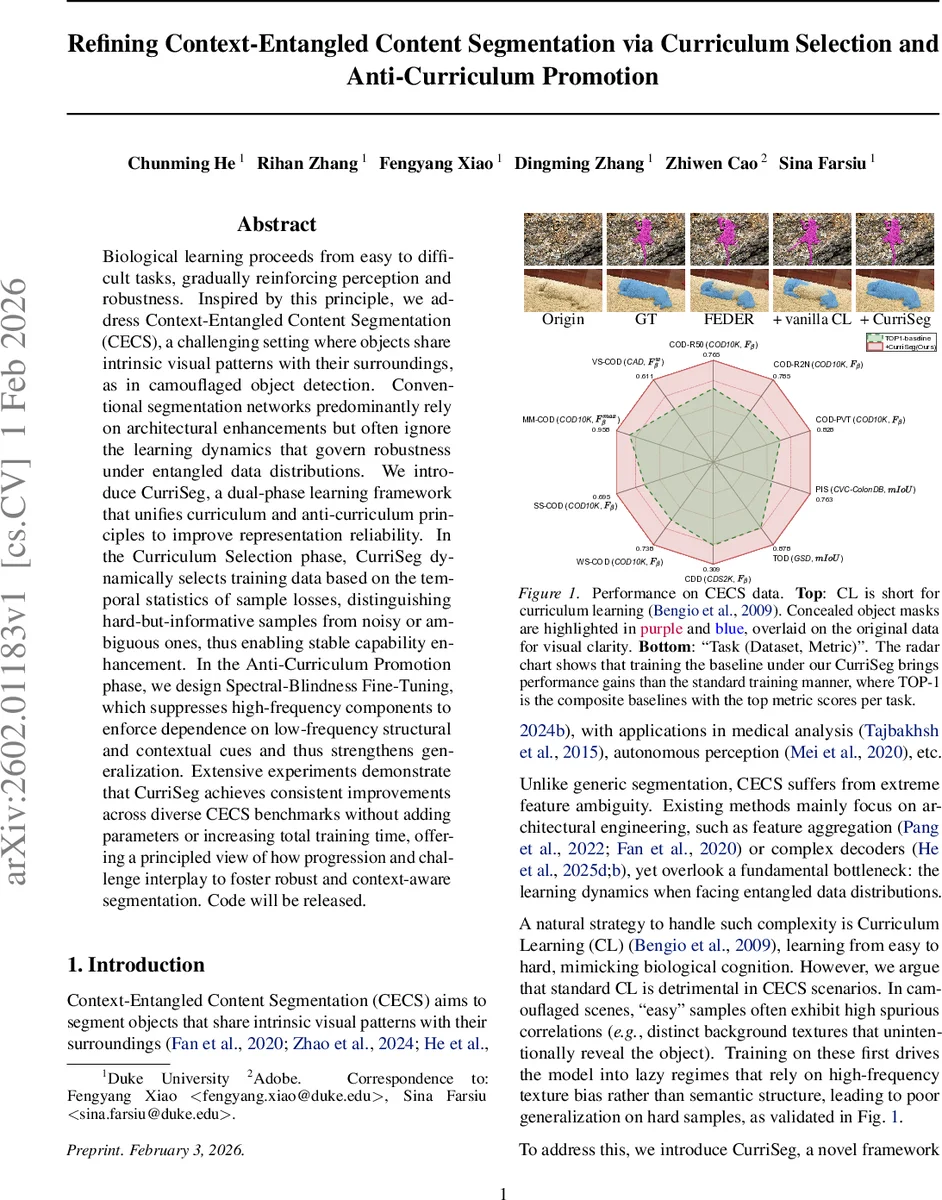

Biological learning proceeds from easy to difficult tasks, gradually reinforcing perception and robustness. Inspired by this principle, we address Context-Entangled Content Segmentation (CECS), a challenging setting where objects share intrinsic visual patterns with their surroundings, as in camouflaged object detection. Conventional segmentation networks predominantly rely on architectural enhancements but often ignore the learning dynamics that govern robustness under entangled data distributions. We introduce CurriSeg, a dual-phase learning framework that unifies curriculum and anti-curriculum principles to improve representation reliability. In the Curriculum Selection phase, CurriSeg dynamically selects training data based on the temporal statistics of sample losses, distinguishing hard-but-informative samples from noisy or ambiguous ones, thus enabling stable capability enhancement. In the Anti-Curriculum Promotion phase, we design Spectral-Blindness Fine-Tuning, which suppresses high-frequency components to enforce dependence on low-frequency structural and contextual cues and thus strengthens generalization. Extensive experiments demonstrate that CurriSeg achieves consistent improvements across diverse CECS benchmarks without adding parameters or increasing total training time, offering a principled view of how progression and challenge interplay to foster robust and context-aware segmentation. Code will be released.

💡 Research Summary

The paper tackles Context‑Entangled Content Segmentation (CECS), a setting where objects share visual patterns with their surroundings (e.g., camouflaged object detection). In such scenarios, conventional segmentation networks often fall into high‑frequency texture shortcuts, leading to poor generalization. Inspired by biological learning, the authors propose CurriSeg, a two‑phase training framework that combines curriculum learning (CL) with an anti‑curriculum stage, without altering the network architecture or adding parameters.

Phase 1 – Robust Curriculum Selection (RCS).

Instead of static difficulty measures, CurriSeg monitors the temporal evolution of each sample’s loss. At every epoch a checkpoint model evaluates all training images, computing a difficulty score dᵢ = 1 − IoU(pred,gt). A circular buffer stores the last K = 10 scores, from which the mean µᵢ and variance σᵢ² are derived. These statistics guide a sample‑level weight ωᵢ: high mean + low variance indicates a likely outlier (down‑weighted), while high mean + high variance marks “hard‑but‑informative” samples (up‑weighted). The final weight combines a lower bound Ws_min = 0.1, the mean‑based term, the variance‑based term, and an outlier penalty (Eq. 5).

Pixel‑level uncertainty is also incorporated. For each logit ˆyₕ,𝑤, the sigmoid probability pₕ,𝑤 yields an entropy Hₕ,𝑤. A curriculum‑aware coefficient β(t) = 1 − t/T_c gradually reduces the influence of high‑entropy pixels. The resulting pixel‑wise mask Wₕ,𝑤(t) (Eq. 7) attenuates noisy boundary regions early on while restoring full supervision later.

The overall loss for the curriculum phase is a weighted sum of binary cross‑entropy and IoU, each multiplied by the sample weight ωᵢ and the pixel mask Wᵢ (Eq. 8). This joint image‑ and pixel‑level curriculum stabilizes optimization, prevents noisy samples from dominating gradients, and lets the model first learn reliable low‑level cues.

Phase 2 – Anti‑Curriculum Promotion (ACP).

After the model reaches a stable regime, CurriSeg deliberately raises difficulty by suppressing high‑frequency information. Spectral‑Blindness Fine‑Tuning (SBFT) applies a low‑pass filter in the Fourier domain: the image is transformed, multiplied by a circular mask M_r that retains frequencies within radius r = 0.95 of the Nyquist limit, and inverse‑transformed back to obtain a blurred version ˜x. Training on ˜x forces the network to rely on low‑frequency structural cues (shape, context) rather than texture shortcuts. The loss in this phase (Eq. 11) is the same weighted BCE + IoU but computed on predictions from ˜x.

Implementation and Results.

CurriSeg requires no extra parameters and adds negligible overhead because the curriculum schedule is a bookkeeping operation and SBFT is only applied in the latter epochs. Experiments on multiple CECS benchmarks (COD10K, CHAMELEON, NC4K, etc.) and backbones (ResNet‑50, Transformers) show consistent gains of 2–3 percentage points over strong baselines, while training time remains comparable. Ablation studies confirm that (i) temporal loss statistics effectively separate noisy from informative hard samples, (ii) pixel‑level uncertainty weighting stabilizes early training, and (iii) SBFT mitigates texture bias and improves performance on the hardest cases.

Strengths and Limitations.

The main contributions are: (1) a dynamic, statistics‑driven curriculum that is robust to label noise and ambiguous samples, (2) seamless integration of pixel‑level uncertainty into the curriculum, (3) an anti‑curriculum mechanism that explicitly combats high‑frequency shortcuts, and (4) a plug‑and‑play framework that does not alter model capacity. Potential drawbacks include the fixed epoch split between phases, which may not be optimal for every dataset, and the risk that aggressive high‑frequency suppression could hurt tasks requiring fine details (e.g., medical imaging). Future work could explore adaptive phase transition criteria, domain‑specific spectral masks, and multi‑scale spectral attenuation.

In summary, CurriSeg offers a principled way to orchestrate learning progression for context‑entangled segmentation, demonstrating that carefully timed curriculum and anti‑curriculum interventions can substantially boost robustness without extra computational cost.

Comments & Academic Discussion

Loading comments...

Leave a Comment