EEmo-Logic: A Unified Dataset and Multi-Stage Framework for Comprehensive Image-Evoked Emotion Assessment

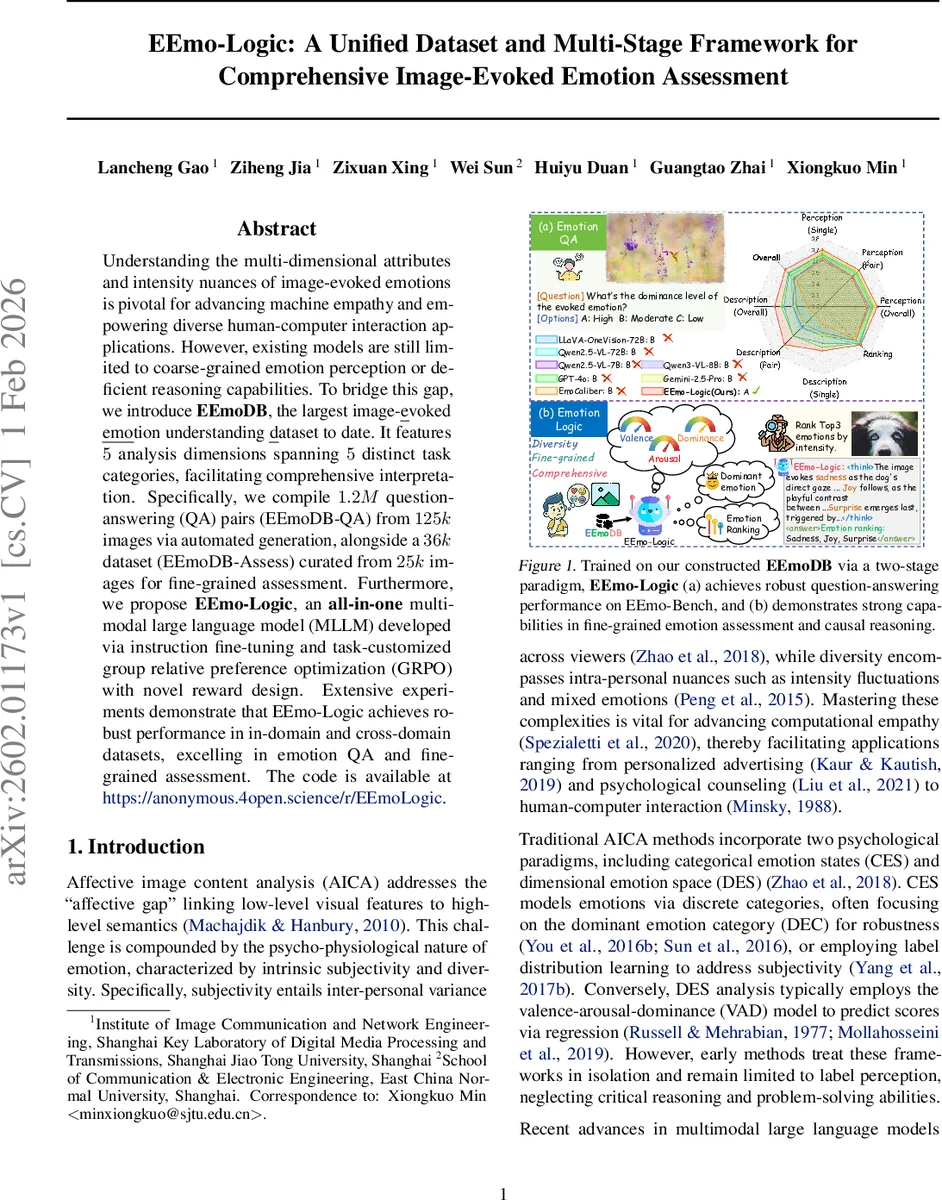

Understanding the multi-dimensional attributes and intensity nuances of image-evoked emotions is pivotal for advancing machine empathy and empowering diverse human-computer interaction applications. However, existing models are still limited to coarse-grained emotion perception or deficient reasoning capabilities. To bridge this gap, we introduce EEmoDB, the largest image-evoked emotion understanding dataset to date. It features $5$ analysis dimensions spanning $5$ distinct task categories, facilitating comprehensive interpretation. Specifically, we compile $1.2M$ question-answering (QA) pairs (EEmoDB-QA) from $125k$ images via automated generation, alongside a $36k$ dataset (EEmoDB-Assess) curated from $25k$ images for fine-grained assessment. Furthermore, we propose EEmo-Logic, an all-in-one multimodal large language model (MLLM) developed via instruction fine-tuning and task-customized group relative preference optimization (GRPO) with novel reward design. Extensive experiments demonstrate that EEmo-Logic achieves robust performance in in-domain and cross-domain datasets, excelling in emotion QA and fine-grained assessment. The code is available at https://anonymous.4open.science/r/EEmoLogic.

💡 Research Summary

EEmo-Logic addresses the longstanding gap in affective image content analysis (AICA) by providing a unified dataset and a two‑stage multimodal large language model (MLLM) framework that simultaneously handles categorical emotion states (CES) and dimensional emotion space (DES). The authors first construct EEmoDB, the largest instruction‑style dataset for image‑evoked emotion understanding to date. EEmoDB aggregates seven public AICA datasets (e.g., EMOTIC, GAPED, OASIS) and processes them through a four‑step pipeline: (1) collection of source images and raw labels, (2) refinement of emotion labels via synonym mapping to Ekman’s six basic emotions plus neutral and VAD‑based clustering for fine‑grained categories, (3) generation of continuous Valence‑Arousal‑Dominance (VAD) scores by extracting affective keywords from user comments and normalizing them, and (4) creation of description and reasoning texts using Qwen2.5‑VL for visual captioning followed by DeepSeek for structured reasoning grounded in expert comments. This pipeline yields 1.23 million multimodal instructions, split into two subsets: EEmoDB‑QA (≈1.2 M QA pairs covering perception, ranking, and description tasks) and EEmoDB‑Assess (≈36 k high‑quality instructions focused on fine‑grained assessment of VAD, dominance, dominant emotion, and emotion ranking).

The model, EEmo‑Logic, is trained in two stages. Stage 1 applies Low‑Rank Adaptation (LoRA) supervised fine‑tuning (SFT) on the massive QA subset to endow the model with basic emotion perception and question‑answering capabilities. Stage 2 introduces Group Relative Preference Optimization (GRPO), a novel preference‑learning algorithm tailored for the assessment subset. GRPO employs three hierarchical reward components: (i) answer correctness, (ii) label distribution uniformity, and (iii) semantic alignment, encouraging the model to capture subtle intensity differences and to produce coherent chain‑of‑thought explanations. The resulting MLLM can output not only the dominant emotion but also continuous VAD scores and a ranked list of top‑3 emotions, thereby unifying CES and DES analyses.

Extensive experiments demonstrate that EEmo‑Logic achieves state‑of‑the‑art performance on both in‑domain benchmarks (EEmo‑Bench) and cross‑domain datasets such as EMOTIC, ArtEmis, and AesBench. In emotion QA, it surpasses strong baselines (Qwen2.5‑VL, LLaVA‑OneVision, GPT‑4o) by 3–5 % in accuracy and F1. For VAD regression, it reduces RMSE by approximately 0.07 compared to the best prior methods. The emotion ranking task shows a Top‑3 accuracy of 78 %, indicating robust handling of mixed‑emotion scenarios. Qualitative analysis reveals that the model’s chain‑of‑thought reasoning aligns closely with human expert explanations, receiving high plausibility scores from human evaluators.

The paper’s contributions are threefold: (1) the release of EEmoDB, a massive, multi‑dimensional instruction dataset for affective image analysis; (2) the design of a two‑stage training pipeline combining LoRA SFT and GRPO to bridge coarse QA and fine‑grained assessment; (3) comprehensive empirical validation confirming both high accuracy and strong generalization across domains. Nonetheless, limitations include potential bias introduced by automated label generation, limited human validation beyond sampled subsets, and the complexity of the GRPO reward design which may hinder reproducibility without full code release. Future work should explore Bayesian uncertainty modeling for emotion labels, larger-scale human‑in‑the‑loop evaluations, and deployment in real‑world HCI applications such as personalized advertising and psychological counseling.

Comments & Academic Discussion

Loading comments...

Leave a Comment