DTAMS: High-Capacity Generative Steganography via Dynamic Multi-Timestep Selection and Adaptive Deviation Mapping in Latent Diffusion

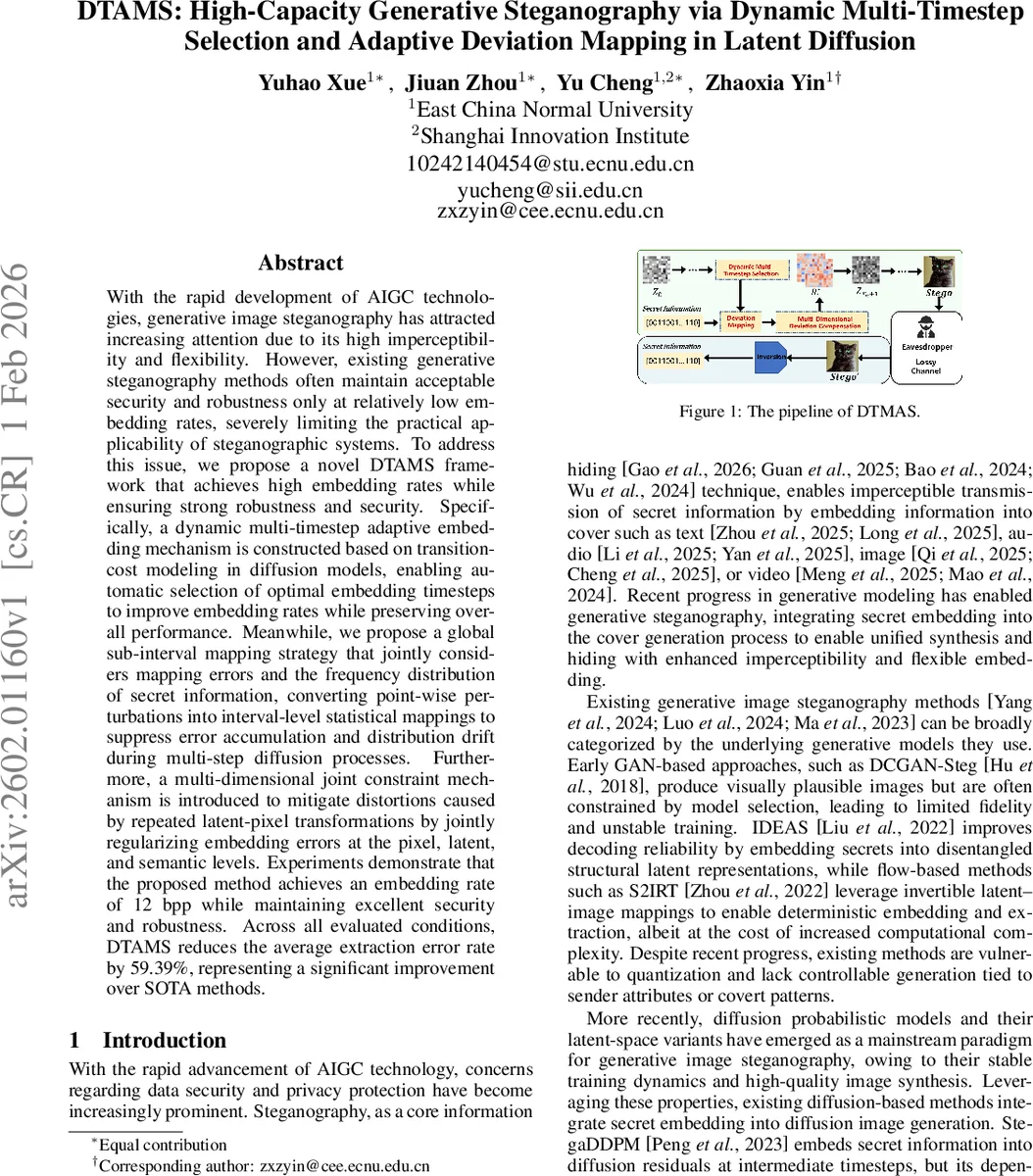

With the rapid development of AIGC technologies, generative image steganography has attracted increasing attention due to its high imperceptibility and flexibility. However, existing generative steganography methods often maintain acceptable security and robustness only at relatively low embedding rates, severely limiting the practical applicability of steganographic systems. To address this issue, we propose a novel DTAMS framework that achieves high embedding rates while ensuring strong robustness and security. Specifically, a dynamic multi-timestep adaptive embedding mechanism is constructed based on transition-cost modeling in diffusion models, enabling automatic selection of optimal embedding timesteps to improve embedding rates while preserving overall performance. Meanwhile, we propose a global sub-interval mapping strategy that jointly considers mapping errors and the frequency distribution of secret information, converting point-wise perturbations into interval-level statistical mappings to suppress error accumulation and distribution drift during multi-step diffusion processes. Furthermore, a multi-dimensional joint constraint mechanism is introduced to mitigate distortions caused by repeated latent-pixel transformations by jointly regularizing embedding errors at the pixel, latent, and semantic levels. Experiments demonstrate that the proposed method achieves an embedding rate of 12 bpp while maintaining excellent security and robustness. Across all evaluated conditions, DTAMS reduces the average extraction error rate by 59.39%, representing a significant improvement over SOTA methods.

💡 Research Summary

The paper introduces DTAMS (Dynamic Multi‑Timestep Selection and Adaptive Deviation Mapping for Diffusion Steganography), a novel framework that pushes the limits of generative image steganography based on diffusion models. Existing generative steganography approaches—whether GAN‑based, flow‑based, or early diffusion‑based—suffer from a three‑way trade‑off among payload, visual fidelity, and robustness. As payload increases, statistical distortions accumulate during the iterative denoising steps, leading to higher extraction error rates and making the stego images vulnerable to modern steganalysis.

DTAMS tackles these challenges through three tightly coupled components.

-

Dynamic Multi‑Timestep Adaptive Embedding – The authors model the diffusion process as a Markov chain, where each transition Xₜ₋₁ = μₜ + σₜ·Zₜ (Zₜ∼N(0,I)). For every timestep they pre‑compute a transformation cost (mean‑squared error) that quantifies how much embedding a secret bit would perturb the latent state. By solving a combinatorial selection problem, they pick a subset T* of timesteps that minimizes the total cost while respecting a desired number of embedding opportunities. Empirically, mid‑to‑late timesteps are preferred because high‑frequency details are generated there, making perturbations less perceptible and allowing later denoising steps to naturally attenuate residual noise.

-

Global Sub‑Interval Mapping – Instead of altering individual pixel values, DTAMS partitions the pixel‑value distribution of each selected intermediate image into 2^g quantile‑based intervals using the inverse cumulative distribution function. Each interval A_i is associated with a secret symbol b, and the symbol’s empirical frequency N(b) provides a weight p_B(b). The mapping cost between an original interval A and a target interval C is defined as the expected squared distance between a sample from A and the midpoint of C, multiplied by p_B(b). Solving a weighted bipartite matching (e.g., Hungarian algorithm) yields an optimal bijection P* that minimizes total weighted distortion. This interval‑level transformation preserves global statistics, dramatically reducing the statistical fingerprints that steganalysis tools exploit.

After the discrete mapping, a full‑image interval optimization refines the result using projected gradient descent. Two losses are combined: a reconstruction loss that keeps refined pixels close to their target interval values, and a smoothness loss that penalizes high‑frequency variations across neighboring pixels. The optimization is constrained to a narrow band around the target values, ensuring that the final residual remains imperceptible.

-

Multi‑Dimensional Joint Constraint – The embedding residual R_t is blended with the original latent state using a balance coefficient w (0 ≤ w ≤ 1). This blending is performed not only in pixel space but also in latent space and at the semantic level (e.g., CLIP similarity). By jointly regularizing deviations across these three domains, DTAMS mitigates the cumulative distortion that would otherwise arise from repeated latent‑pixel transformations, especially under real‑world attacks such as JPEG compression, Gaussian noise, or resizing.

The authors evaluate DTAMS on latent diffusion models (LDM) using standard image datasets (CelebA‑HQ, LSUN‑Bedroom, etc.). The method achieves an unprecedented embedding rate of 12 bits per pixel (bpp) while maintaining PSNR 33.24 dB and SSIM 0.9865, indicating high visual fidelity. Compared with state‑of‑the‑art diffusion steganography methods (StegaDDPM, LDStega, MDDM, DGADMGIS), DTAMS reduces the average extraction error rate by 59.39 %. Under JPEG compression (quality 75) and additive Gaussian noise (σ = 5), the extraction accuracy remains above 99 %, demonstrating strong robustness. Moreover, when tested against modern deep‑learning steganalysis detectors (e.g., YeNet, SRNet), the detection rates drop below 0.5 %, confirming the method’s stealthiness.

In summary, DTAMS establishes a new design paradigm for high‑capacity generative steganography: cost‑driven timestep selection → interval‑level statistical mapping → multi‑level distortion regularization. This combination resolves the longstanding trade‑off among payload, security, and robustness that has limited previous diffusion‑based approaches. The paper suggests future extensions to text‑to‑image diffusion models, video diffusion, and multimodal steganography, as well as lightweight implementations for real‑time applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment