From Invisible to Actionable: Augmented Reality Interactions with Indoor CO2

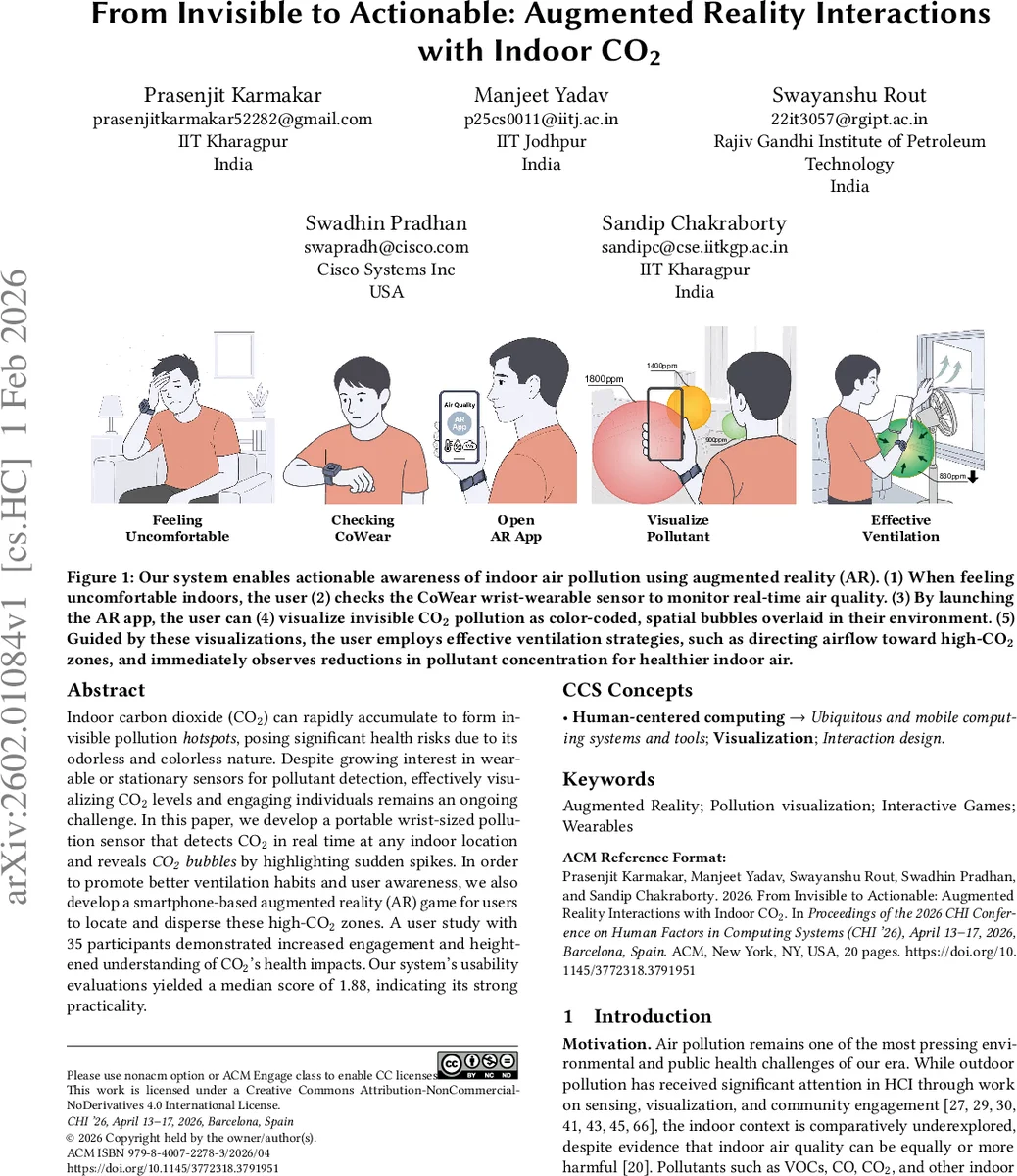

Indoor carbon dioxide (CO2) can rapidly accumulate to form invisible pollution hotspots, posing significant health risks due to its odorless and colorless nature. Despite growing interest in wearable or stationary sensors for pollutant detection, effectively visualizing CO2 levels and engaging individuals remains an ongoing challenge. In this paper, we develop a portable wrist-sized pollution sensor that detects CO2 in real time at any indoor location and reveals CO2 bubbles by highlighting sudden spikes. In order to promote better ventilation habits and user awareness, we also develop a smartphone-based augmented reality (AR) game for users to locate and disperse these high-CO2 zones. A user study with 35 participants demonstrated increased engagement and heightened understanding of CO2’s health impacts. Our system’s usability evaluations yielded a median score of 1.88, indicating its strong practicality.

💡 Research Summary

The paper presents an integrated system that makes invisible indoor carbon‑dioxide (CO₂) concentrations perceivable and actionable through a combination of a wrist‑worn sensor and a smartphone‑based augmented‑reality (AR) application. The authors first motivate the problem by noting that indoor CO₂ can quickly reach levels that impair cognition (≈1 000 ppm) and cause headaches or nausea (≈2 000 ppm), yet most occupants are unaware of their exposure because CO₂ is odorless, colorless, and typically measured only by static monitors placed in fixed locations. To bridge this gap, the authors develop “CoWear,” a low‑cost, battery‑operated NDIR CO₂ sensor that samples at >5 Hz and streams data via Bluetooth Low Energy to a mobile device. The sensor is calibrated to cover 400–5 000 ppm with an error of ±50 ppm and can operate for roughly 24 hours on a single charge.
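The abstract says the sensor reveals CO₂ bubbles by highlighting sudden spikes, but the summary does not say how a spike is detected. One plausible approach, sketched below, compares each reading against the median of a short rolling window. The class name `SpikeDetector`, the 25-sample window (about 5 seconds at 5 Hz), and the 150 ppm threshold (three times the stated ±50 ppm error) are illustrative assumptions, not values from the paper.

```python
from collections import deque
from statistics import median


class SpikeDetector:
    """Flag readings that jump well above a rolling-median baseline.

    Hypothetical sketch: window size and threshold are assumptions,
    not parameters reported for CoWear.
    """

    def __init__(self, window: int = 25, threshold_ppm: float = 150.0):
        self.history = deque(maxlen=window)  # most recent readings only
        self.threshold_ppm = threshold_ppm

    def update(self, ppm: float) -> bool:
        """Feed one reading; return True if it counts as a spike."""
        # Only judge once the window is full, so the baseline is stable.
        is_spike = (
            len(self.history) == self.history.maxlen
            and ppm - median(self.history) >= self.threshold_ppm
        )
        self.history.append(ppm)
        return is_spike


if __name__ == "__main__":
    detector = SpikeDetector(window=5, threshold_ppm=150.0)
    for reading in [600, 610, 605, 600, 608, 612, 900]:
        print(reading, detector.update(reading))
```

A median baseline is used here because a single noisy sample shifts it less than it would shift a mean; a real implementation might instead use the rate of change or a sensor-specific filter.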

The AR component runs on Android using Google’s ARCore. Real‑time CO₂ readings are mapped to semi‑transparent “bubbles” that are overlaid onto the user’s physical environment. Three visual channels encode concentration: color (green → yellow → orange → red → dark‑red), size (larger bubbles for higher ppm), and opacity (higher concentration = more opaque). A fourth dimension, spatial anchoring, encodes location: bubbles are attached to walls or floor surfaces identified by ARCore’s plane detection. The summary states that the mapping follows WHO‑recommended thresholds, allowing users to locate hotspots at a glance.
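The concentration-to-bubble mapping described above can be sketched as a small pure function. This is a minimal illustration, not the authors' implementation: the summary gives only the color order, the 400 to 5 000 ppm sensor range, and the approximate 1 000 and 2 000 ppm effect levels. The other band edges, the radius range, the opacity range, and all names (`BANDS`, `BubbleStyle`, `bubble_style`) are assumptions.

```python
from dataclasses import dataclass

# Upper band edges in ppm. Only ~1000 and ~2000 ppm appear in the summary;
# 800 and 1500 are hypothetical fill-ins for illustration.
BANDS = [
    (800.0, "green"),
    (1000.0, "yellow"),
    (1500.0, "orange"),
    (2000.0, "red"),
]
TOP_COLOR = "dark-red"            # anything at or above the last edge
PPM_MIN, PPM_MAX = 400.0, 5000.0  # calibrated sensor range from the summary


@dataclass(frozen=True)
class BubbleStyle:
    color: str
    radius_m: float  # assumed rendering radius in meters
    opacity: float   # 0.0 = invisible, 1.0 = fully opaque


def bubble_style(ppm: float) -> BubbleStyle:
    """Map a CO2 reading to color, size, and opacity."""
    clamped = min(max(ppm, PPM_MIN), PPM_MAX)
    t = (clamped - PPM_MIN) / (PPM_MAX - PPM_MIN)  # normalized to 0..1

    color = TOP_COLOR
    for upper_edge, name in BANDS:
        if clamped < upper_edge:
            color = name
            break

    # Linear ramps: higher concentration gives a larger, more opaque bubble.
    return BubbleStyle(color=color, radius_m=0.10 + 0.40 * t, opacity=0.20 + 0.70 * t)


if __name__ == "__main__":
    for reading in (450, 900, 1200, 1800, 3000):
        print(reading, bubble_style(reading))
```

Keeping the mapping free of rendering code means the thresholds can be tuned or tested without an AR session; the ARCore side would only consume the resulting `BubbleStyle`.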

Beyond passive visualization, the authors embed a single‑player game mechanic. Users are instructed to “find and disperse” CO₂ bubbles by performing real‑world ventilation actions such as opening windows, turning on fans, or adjusting HVAC settings. The app detects these actions through a combination of manual input (tap to confirm window opened) and sensor feedback (a drop in measured CO₂). When a successful action is registered, the corresponding bubble gradually shrinks and fades, providing immediate visual feedback that the user’s behavior has a measurable effect. This creates a closed perception‑action‑feedback loop that is central to the system’s design philosophy.
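The dispersal mechanic can be illustrated with a small state update. The sketch below assumes that a bubble shrinks only when both signals are present, that is, the user has confirmed a ventilation action and the measured CO₂ has dropped. The summary says the two are combined but not how, so the AND rule, the 100 ppm minimum drop (twice the stated ±50 ppm error), the shrink step, and the names `Bubble` and `update_bubble` are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Bubble:
    peak_ppm: float          # reading when the bubble was spawned
    scale: float = 1.0       # 1.0 = full size, 0.0 = fully dispersed
    confirmed: bool = False  # user tapped to confirm a ventilation action


def update_bubble(
    bubble: Bubble,
    current_ppm: float,
    min_drop_ppm: float = 100.0,  # assumed: must exceed sensor noise
    shrink_step: float = 0.25,    # assumed: fraction removed per update
) -> bool:
    """Advance the bubble by one update; return True once it is gone."""
    dropped = (bubble.peak_ppm - current_ppm) >= min_drop_ppm
    if bubble.confirmed and dropped:
        # Shrink gradually so the user sees the bubble fade, not vanish.
        bubble.scale = max(0.0, bubble.scale - shrink_step)
    return bubble.scale <= 0.0


if __name__ == "__main__":
    bubble = Bubble(peak_ppm=1800.0)
    print(update_bubble(bubble, 1500.0), bubble.scale)  # no tap yet
    bubble.confirmed = True
    while not update_bubble(bubble, 1500.0):
        print("shrinking:", bubble.scale)
    print("dispersed")
```

Requiring the sensor drop as well as the tap keeps the feedback tied to a measurable effect, which matches the perception-action-feedback loop the summary describes.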

To evaluate the concept, a mixed‑methods user study with 35 participants (gender‑balanced, ages 22‑45) was conducted in semi‑controlled (lab) and in‑the‑wild (office, classroom, kitchen) settings. Prior to the experiment, a pre‑survey of 140 respondents revealed low personal awareness of CO₂ risks and a desire for more intuitive visualizations. During the study, participants used CoWear and the AR app for 30‑minute sessions while performing typical indoor activities. Post‑session questionnaires measured knowledge gain and perceived usability, the latter reportedly using the System Usability Scale (SUS). The median usability score was 1.88 on a 5‑point scale, which the authors interpret as strong practicality; this figure is evidently not a composite SUS score, which ranges from 0 to 100. Knowledge about safe CO₂ thresholds increased by an average of 2.3 points on a 7‑point Likert scale. More importantly, participants reported a 1.7‑fold increase in ventilation actions (e.g., opening windows more frequently), and sensor data showed an average 15 % reduction in CO₂ concentration after the interventions. Qualitative feedback highlighted that the visual cue of a disappearing bubble made the abstract data feel concrete and motivated users to experiment with airflow.

The authors discuss several limitations. Sensor accuracy (±50 ppm) is sufficient for awareness but not for precise health monitoring; future work could incorporate sensor fusion or calibration algorithms. AR rendering can be affected by harsh lighting or reflective surfaces, suggesting a need for light‑invariant shaders or adaptive rendering. The current prototype focuses solely on CO₂; extending the platform to other indoor pollutants (PM₂.₅, VOCs) would increase its utility.

In conclusion, the paper contributes a novel HCI pipeline that couples personal environmental sensing with situated AR visualization and gamified interaction, thereby turning an invisible health hazard into a tangible, actionable experience. The empirical results demonstrate that such an approach can simultaneously raise awareness, improve knowledge, and stimulate concrete ventilation behaviors, offering a promising direction for future smart‑environment and health‑focused AR applications.
