AutoHealth: An Uncertainty-Aware Multi-Agent System for Autonomous Health Data Modeling

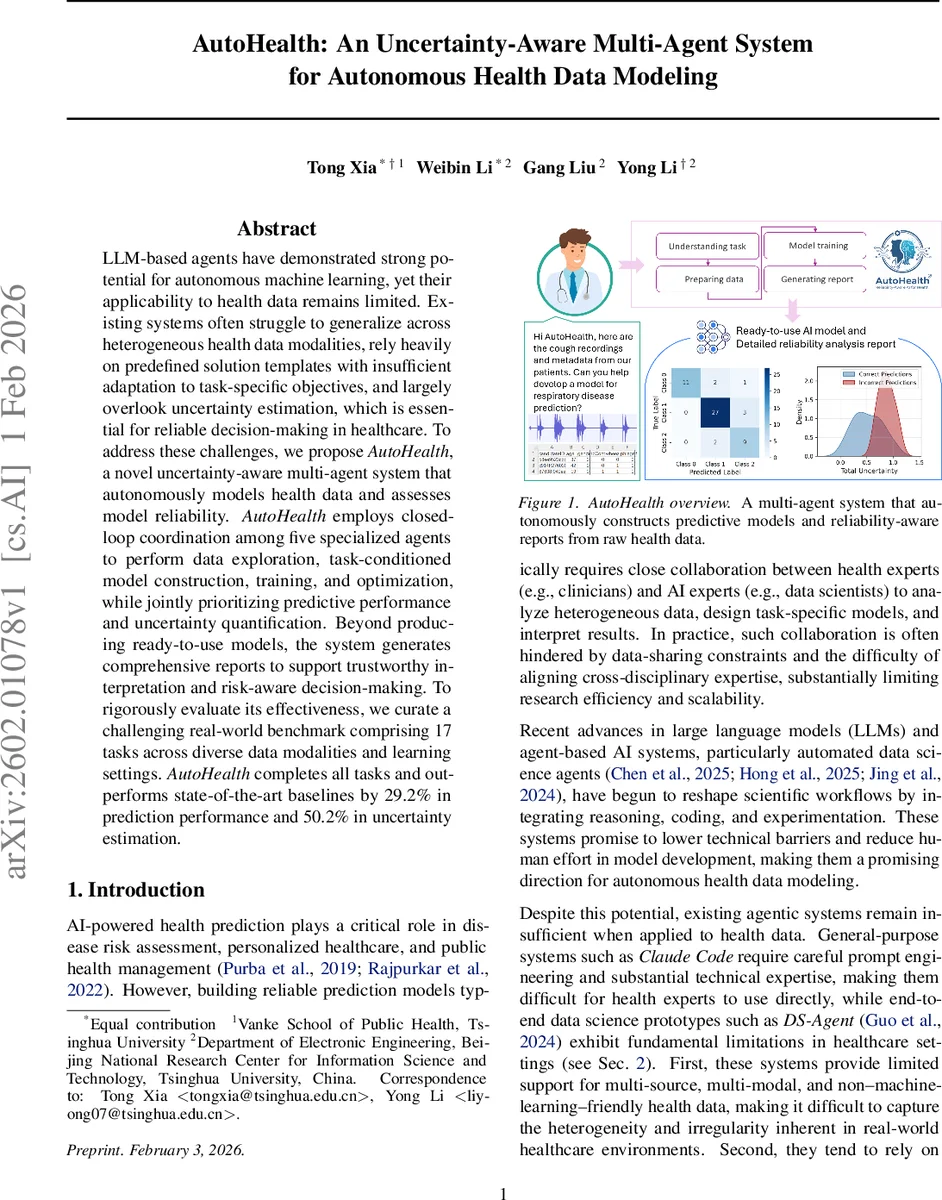

LLM-based agents have demonstrated strong potential for autonomous machine learning, yet their applicability to health data remains limited. Existing systems often struggle to generalize across heterogeneous health data modalities, rely heavily on predefined solution templates with insufficient adaptation to task-specific objectives, and largely overlook uncertainty estimation, which is essential for reliable decision-making in healthcare. To address these challenges, we propose *AutoHealth*, a novel uncertainty-aware multi-agent system that autonomously models health data and assesses model reliability. *AutoHealth* employs closed-loop coordination among five specialized agents to perform data exploration, task-conditioned model construction, training, and optimization, while jointly prioritizing predictive performance and uncertainty quantification. Beyond producing ready-to-use models, the system generates comprehensive reports to support trustworthy interpretation and risk-aware decision-making. To rigorously evaluate its effectiveness, we curate a challenging real-world benchmark comprising 17 tasks across diverse data modalities and learning settings. *AutoHealth* completes all tasks and outperforms state-of-the-art baselines by 29.2% in prediction performance and 50.2% in uncertainty estimation.

💡 Research Summary

AutoHealth is an uncertainty-aware multi-agent framework that autonomously builds predictive models from heterogeneous health data while also delivering calibrated uncertainty estimates and comprehensive reports. A central Meta-Agent coordinates specialized agents across five stages:

1. Data-Agent performs code-driven exploration of raw datasets and automatically generates structured data profiles that capture modality-specific characteristics (tabular, time-series, image, audio, free-text, graph) together with data-quality diagnostics.
2. Design-Agent translates these profiles and high-level task objectives into task-conditioned experimental plans, proposing model architectures, feature configurations, loss functions, and training strategies, which are refined iteratively using feedback from previous rounds.
3. Coding-Agent converts the experimental plan into executable Python code, implementing the chosen models and integrating uncertainty quantification techniques such as Bayesian neural networks, deep ensembles, and test-time data augmentation.
4. Execution: the generated code trains and evaluates the models, producing performance metrics and uncertainty scores that are returned to the agents as structured feedback.
5. Report-Agent synthesizes all experimental decisions, results, calibration curves, confidence intervals, and limitations into a readable, risk-aware report for clinicians and stakeholders.
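The summary names deep ensembles and test-time augmentation but does not say how their outputs are aggregated. As a minimal sketch, assuming a classifier whose ensemble members (or several augmented copies of one input) each return class probabilities, the standard decomposition below splits the entropy of the averaged prediction into an aleatoric part (mean member entropy) and an epistemic part (mutual information, i.e. member disagreement). The function names are hypothetical and not taken from the AutoHealth codebase.

```python
import math
from typing import List, Sequence


def entropy(p: Sequence[float]) -> float:
    """Shannon entropy (in nats) of a categorical distribution; 0*log(0) is treated as 0."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)


def ensemble_uncertainty(member_probs: List[Sequence[float]]) -> dict:
    """Aggregate the class probabilities of M members (or M augmentations) for ONE input.

    member_probs[m][k] is member m's probability for class k.
    """
    m = len(member_probs)
    k = len(member_probs[0])
    mean = [sum(p[c] for p in member_probs) / m for c in range(k)]  # ensemble prediction
    total = entropy(mean)                                   # predictive (total) uncertainty
    aleatoric = sum(entropy(p) for p in member_probs) / m   # expected per-member entropy
    epistemic = total - aleatoric                           # mutual information: disagreement
    return {"mean": mean, "total": total, "aleatoric": aleatoric, "epistemic": epistemic}


agree = ensemble_uncertainty([[0.9, 0.1], [0.9, 0.1]])
disagree = ensemble_uncertainty([[1.0, 0.0], [0.0, 1.0]])
print(round(agree["epistemic"], 3), round(disagree["epistemic"], 3))  # prints: 0.0 0.693
```

Members that agree yield zero epistemic uncertainty even when each is individually unsure, whereas confident members that contradict each other yield high epistemic uncertainty; the same function applies unchanged to predictions collected over test-time augmentations.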

The workflow is closed-loop: after each iteration, the Meta-Agent updates the global plan based on the latest feedback, so the system can revise its design and code until convergence.

To evaluate the approach, the authors curated a benchmark of 17 real-world health prediction tasks covering six data modalities and six learning settings (classification, regression, segmentation, survival analysis, forecasting, and link prediction). Compared with strong baselines, including AutoML-GPT, HuggingGPT, DS-Agent, and OpenLens AI, AutoHealth completed all 17 tasks (a 100% success rate) and achieved average improvements of 29.2% in predictive performance (e.g., AUC, RMSE) and 50.2% in uncertainty estimation quality (e.g., lower Expected Calibration Error and Negative Log-Likelihood).
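Uncertainty quality is reported through Expected Calibration Error (ECE) and Negative Log-Likelihood (NLL), but the exact evaluation protocol is not given here. A minimal sketch of both metrics for classification follows, assuming top-label confidence and 10 equal-width confidence bins; this is a common convention and may differ from the paper's own evaluation code.

```python
import math
from typing import List, Sequence


def expected_calibration_error(probs: List[Sequence[float]], labels: List[int],
                               n_bins: int = 10) -> float:
    """Top-label ECE with equal-width confidence bins over [0, 1]."""
    counts = [0] * n_bins
    conf_sums = [0.0] * n_bins
    correct_sums = [0.0] * n_bins
    for p, y in zip(probs, labels):
        pred = max(range(len(p)), key=lambda c: p[c])  # predicted class
        conf = p[pred]                                  # confidence = its probability
        b = min(int(conf * n_bins), n_bins - 1)         # conf == 1.0 falls in the last bin
        counts[b] += 1
        conf_sums[b] += conf
        correct_sums[b] += 1.0 if pred == y else 0.0
    n = len(labels)
    # Bin-size-weighted average of |accuracy - mean confidence| over non-empty bins
    return sum(
        (counts[b] / n) * abs(correct_sums[b] / counts[b] - conf_sums[b] / counts[b])
        for b in range(n_bins) if counts[b] > 0
    )


def negative_log_likelihood(probs: List[Sequence[float]], labels: List[int],
                            eps: float = 1e-12) -> float:
    """Mean negative log-probability of the true class; eps guards against log(0)."""
    return -sum(math.log(max(p[y], eps)) for p, y in zip(probs, labels)) / len(labels)


# Four predictions at 80% confidence, of which only three are correct (75% accuracy).
probs = [[0.8, 0.2]] * 4
labels = [0, 0, 0, 1]
print(round(expected_calibration_error(probs, labels), 3))  # 0.05
print(round(negative_log_likelihood(probs, labels), 3))     # 0.57
```

Lower is better for both: ECE measures the gap between stated confidence and observed accuracy, while NLL additionally penalizes confident mistakes heavily.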

Key contributions are: (i) a closed-loop, uncertainty-first optimization paradigm that treats reliability as a first-class objective; (ii) a multi-agent coordination scheme that enables task-conditioned reasoning beyond rigid template pipelines; and (iii) a publicly released benchmark and codebase, with experiments demonstrating substantial gains across diverse health data scenarios.

Limitations include reliance on LLM API calls, the computational overhead of large ensembles, and the need to scale further to massive electronic health record repositories. Future work will explore lightweight LLMs, distributed execution, and domain-specific prompt engineering to improve efficiency and broaden applicability. In sum, AutoHealth advances autonomous health AI by integrating heterogeneous data handling, adaptive model design, and rigorous uncertainty quantification into a single end-to-end system.
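To make the "uncertainty-first" idea concrete, here is a hypothetical sketch of a closed-loop search in which each round's result is fed back to the planner and candidates are ranked by a single score that rewards AUC and penalizes ECE. The `propose` and `evaluate` callables stand in for the design/coding agents and the execution stage; `joint_score` and its `weight` are an assumed scalarization, not AutoHealth's documented objective. The candidate names and metric values are made-up toy numbers, not results from the paper.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Result:
    config: str
    auc: float  # discrimination (higher is better)
    ece: float  # calibration error (lower is better)


def joint_score(r: Result, weight: float = 1.0) -> float:
    """Hypothetical scalarization: reward AUC, penalize miscalibration."""
    return r.auc - weight * r.ece


def closed_loop(propose: Callable[[List[Result]], Optional[str]],
                evaluate: Callable[[str], Result],
                max_rounds: int = 5,
                weight: float = 1.0) -> Result:
    """Propose -> evaluate -> feed back, until the planner stops or rounds run out."""
    history: List[Result] = []
    for _ in range(max_rounds):
        config = propose(history)  # planner sees all feedback gathered so far
        if config is None:         # planner signals convergence
            break
        history.append(evaluate(config))
    if not history:
        raise ValueError("no candidate was evaluated")
    return max(history, key=lambda r: joint_score(r, weight))


# Toy stand-ins for the agents (made-up numbers, for illustration only).
CANDIDATES = {"gbm": (0.86, 0.12), "gbm+ensemble": (0.85, 0.03), "mlp": (0.84, 0.08)}


def propose(history: List[Result]) -> Optional[str]:
    tried = {r.config for r in history}
    return next((name for name in CANDIDATES if name not in tried), None)


def evaluate(name: str) -> Result:
    auc, ece = CANDIDATES[name]
    return Result(name, auc, ece)


print(closed_loop(propose, evaluate).config)              # gbm+ensemble
print(closed_loop(propose, evaluate, weight=0.0).config)  # gbm
```

With `weight=0.0` the loop reduces to pure AUC selection and picks `gbm`; a positive weight lets a slightly less discriminative but better-calibrated candidate win, which is the trade-off an uncertainty-first objective is meant to expose.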
