Superposition unifies power-law training dynamics

We investigate the role of feature superposition in the emergence of power-law training dynamics using a teacher-student framework. We first derive an analytic theory for training without superposition, establishing that the power-law training exponent depends on both the input data statistics and channel importance. Remarkably, we discover that a superposition bottleneck induces a transition to a universal power-law exponent of $\sim 1$, independent of data and channel statistics. This one over time training with superposition represents an up to tenfold acceleration compared to the purely sequential learning that takes place in the absence of superposition. Our finding that superposition leads to rapid training with a data-independent power law exponent may have important implications for a wide range of neural networks that employ superposition, including production-scale large language models.

💡 Research Summary

The paper investigates how feature superposition influences the emergence of power‑law training dynamics, using a carefully designed teacher‑student framework that abstracts key aspects of large language models (LLMs). The authors first derive an analytic theory for the regime without superposition (i.e., when the student’s latent dimension K equals the input dimension N). In this setting, the input features are generated with a power‑law frequency decay p_i ∝ i^‑a, and the teacher’s channel importance decays as A_ii ∝ i^‑b. Assuming a small random initialization, the student weight matrix remains diagonal throughout training, and each diagonal entry follows a simple linear differential equation ds_i/dt = η λ_i (a_i – s_i), where λ_i ∝ i^‑a reflects the data covariance spectrum and a_i ∝ i^‑b encodes the teacher’s channel strength. Solving this ODE yields a closed‑form expression for the loss: L(t) = ½ Σ_i i^{‑(a+2b)} exp(‑2η t i^{‑a). By approximating the sum with an integral for large N and focusing on the mid‑training regime (where many modes are still unlearned), the loss decays as a power law L(t) ∝ t^{‑α} with exponent

α = (a + 2b – 1) / a (when a + 2b > 1).

Thus, in the absence of superposition the training speed is tightly coupled to both the sparsity of the data (parameter a) and the decay of channel importance (parameter b). Larger a or b lead to faster learning because the model has fewer informative modes to acquire.

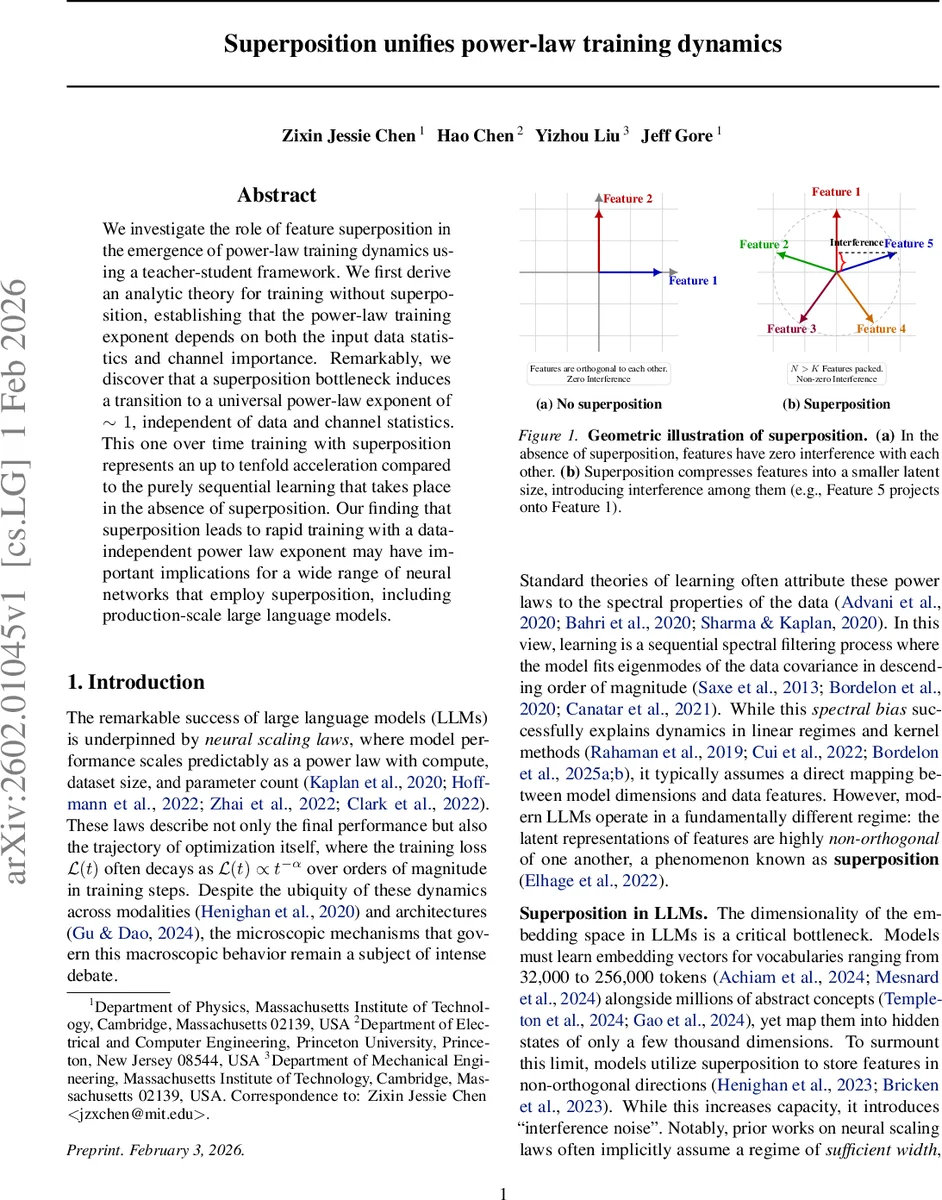

The second part of the study introduces a bottleneck: the student’s latent dimension K is strictly smaller than N, forcing the model to store N features in a K‑dimensional space. This creates superposition: features become non‑orthogonal, and interference (“noise”) arises. The authors model this by a fixed random projection W ∈ ℝ^{K×N} (column‑normalized) that compresses the sparse input, a trainable matrix B ∈ ℝ^{K×K} that processes the latent representation, and a transpose projection W^⊤ that reconstructs the output. To mitigate negative interference, a learnable bias and a ReLU nonlinearity are added at the output. In this regime, the dynamics no longer decompose into independent modes; instead, the random projection mixes all features across all channels, effectively equalising their learning behavior.

Because of this mixing, the authors find empirically that the loss follows a universal power‑law decay with exponent α ≈ 1, regardless of the values of a and b or the compression ratio N/K (tested for K = 128, 256, 512 with N = 1024). The fitted exponent remains close to 1 across a wide range of data sparsities (a ∈

Comments & Academic Discussion

Loading comments...

Leave a Comment