MedSpeak: A Knowledge Graph-Aided ASR Error Correction Framework for Spoken Medical QA

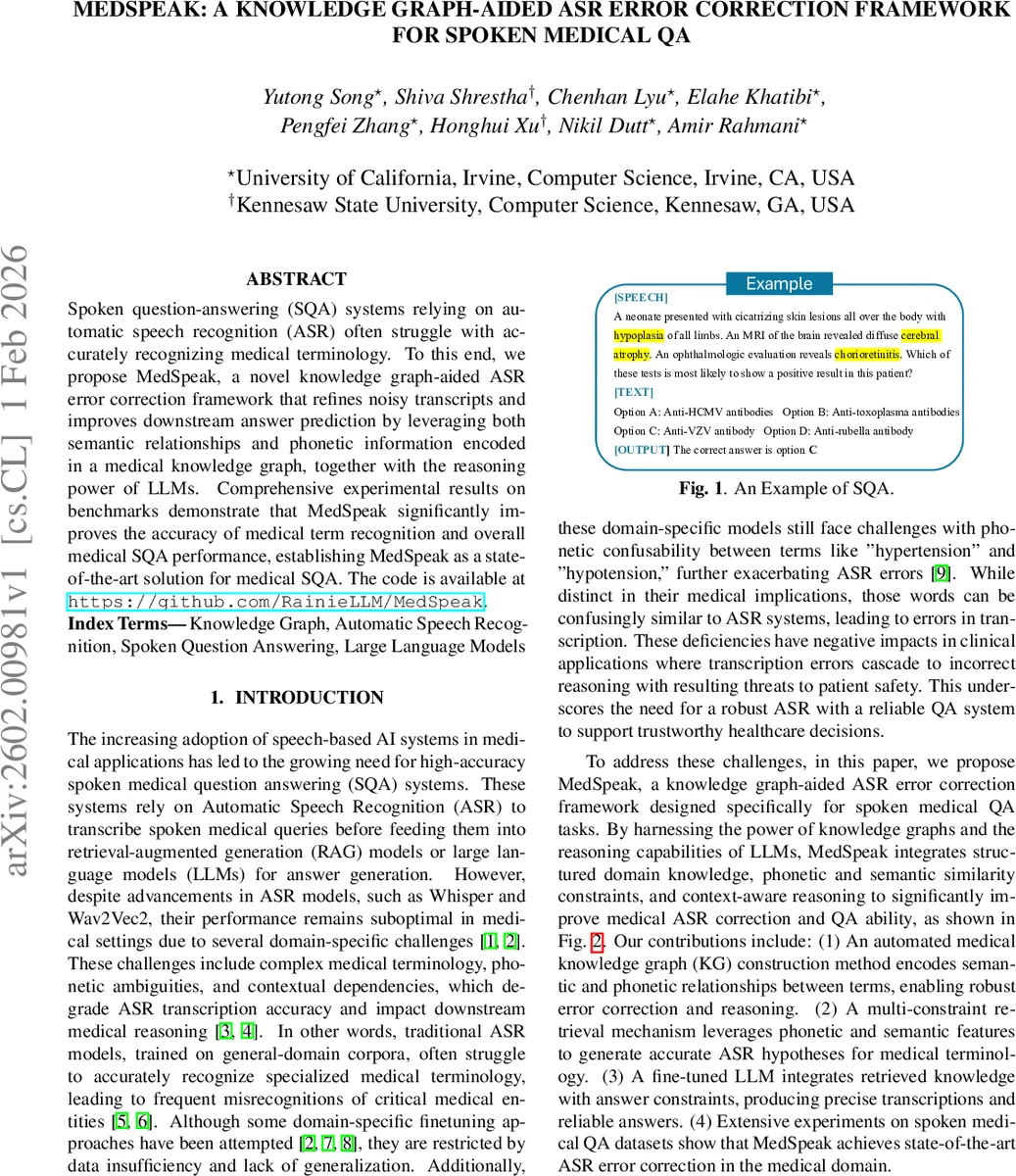

Spoken question-answering (SQA) systems relying on automatic speech recognition (ASR) often struggle with accurately recognizing medical terminology. To this end, we propose MedSpeak, a novel knowledge graph-aided ASR error correction framework that refines noisy transcripts and improves downstream answer prediction by leveraging both semantic relationships and phonetic information encoded in a medical knowledge graph, together with the reasoning power of LLMs. Comprehensive experimental results on benchmarks demonstrate that MedSpeak significantly improves the accuracy of medical term recognition and overall medical SQA performance, establishing MedSpeak as a state-of-the-art solution for medical SQA. The code is available at https://github.com/RainieLLM/MedSpeak.

💡 Research Summary

The paper introduces MedSpeak, a novel framework that tackles the persistent problem of automatic speech recognition (ASR) errors in spoken medical question‑answering (SQA) systems. Conventional ASR models, trained on general‑domain corpora, often misrecognize specialized medical terminology and struggle with phonetic ambiguities such as “hypertension” versus “hypotension.” These transcription errors cascade into downstream reasoning errors, jeopardizing clinical decision‑making.

MedSpeak addresses this challenge by integrating a domain‑specific medical knowledge graph (KG) with a large language model (LLM). The KG is automatically constructed from the Unified Medical Language System (UMLS) MRREL table, capturing semantic relationships (e.g., “classifies,” “constitutes”) between diseases, symptoms, pathogens, and tests. To encode phonetic similarity, the authors augment the KG with entries derived from the CMU Pronouncing Dictionary, applying Double Metaphone together with Levenshtein distance to filter false positives. Each node stores a unique identifier and term string; each edge carries a relationship tag, including a dedicated “phonetic” tag that signals potential ASR confusion.

The LLM component is based on Llama‑3.1‑8B‑Instruct, fine‑tuned end‑to‑end on a dialogue‑style dataset that combines (i) noisy transcripts generated by Whisper Small, (ii) the four‑choice answer options, and (iii) a budgeted KG context (600 tokens for semantic edges, 300 tokens for phonetic edges). A fixed system prompt forces the model to produce a strict two‑line output: “Corrected Text: …” and “Correct Option: …”. Training optimizes the standard causal language‑modeling loss, thereby jointly learning to correct the transcript (t*) and to select the correct answer (o*). This unified formulation enables the model to exploit KG information while reasoning about the question, effectively bridging transcription correction and answer inference.

For evaluation, the authors synthesize spoken versions of three established medical QA benchmarks—MMLU‑Medical (1,089 questions), MedQA (1,273 USMLE‑style questions), and MedMCQA (4,183 Indian medical exam items)—using a pyttsx3 text‑to‑speech engine at 16 kHz. The resulting corpus comprises over 47 hours of audio (≈6.79 M tokens). Baselines include: (1) Zero‑shot ASR (raw Whisper output with an un‑fine‑tuned LLM), (2) Zero‑shot GT (LLM with ground‑truth transcripts only), (3) FT+Whisp (fine‑tuned LLM receiving Whisper transcripts but no KG), and (4) FT‑LLM (fine‑tuned LLM with perfect transcripts).

Two metrics are reported: QA accuracy (percentage of correctly chosen options) and word error rate (WER) on the corrected transcripts. MedSpeak achieves an average QA accuracy of 93.4 %, closely matching the FT‑LLM upper bound of 92.5 % while operating on noisy speech. Notably, in the Virology and MedQA subsets, MedSpeak improves accuracy by more than four percentage points over FT‑LLM, highlighting the benefit of KG‑driven context for terminology‑heavy domains. The overall WER drops to 29.9 %, a dramatic reduction from 77.2 % for the zero‑shot ASR baseline and from 35.8 % for FT+Whisp. Error reductions are especially pronounced in Anatomy and Virology, where phonetic confusion is frequent.

Key contributions of the work are: (1) an automated pipeline for constructing a medical KG that encodes both semantic and phonetic relations, (2) a multi‑constraint retrieval mechanism that supplies the LLM with relevant KG subgraphs during inference, and (3) a unified two‑line supervision format that merges ASR correction and multiple‑choice reasoning into a single generation task. The experiments demonstrate that combining structured domain knowledge with LLM reasoning yields state‑of‑the‑art performance in spoken medical QA, both in terms of answer correctness and transcription quality.

The authors suggest future directions such as real‑time deployment in clinical settings, extension to other specialized domains (legal, finance), and dynamic KG updating to incorporate emerging medical terminology. Overall, MedSpeak showcases a powerful synergy between knowledge graphs and large language models for robust, domain‑aware speech understanding.

Comments & Academic Discussion

Loading comments...

Leave a Comment