FreeChunker: A Cross-Granularity Chunking Framework

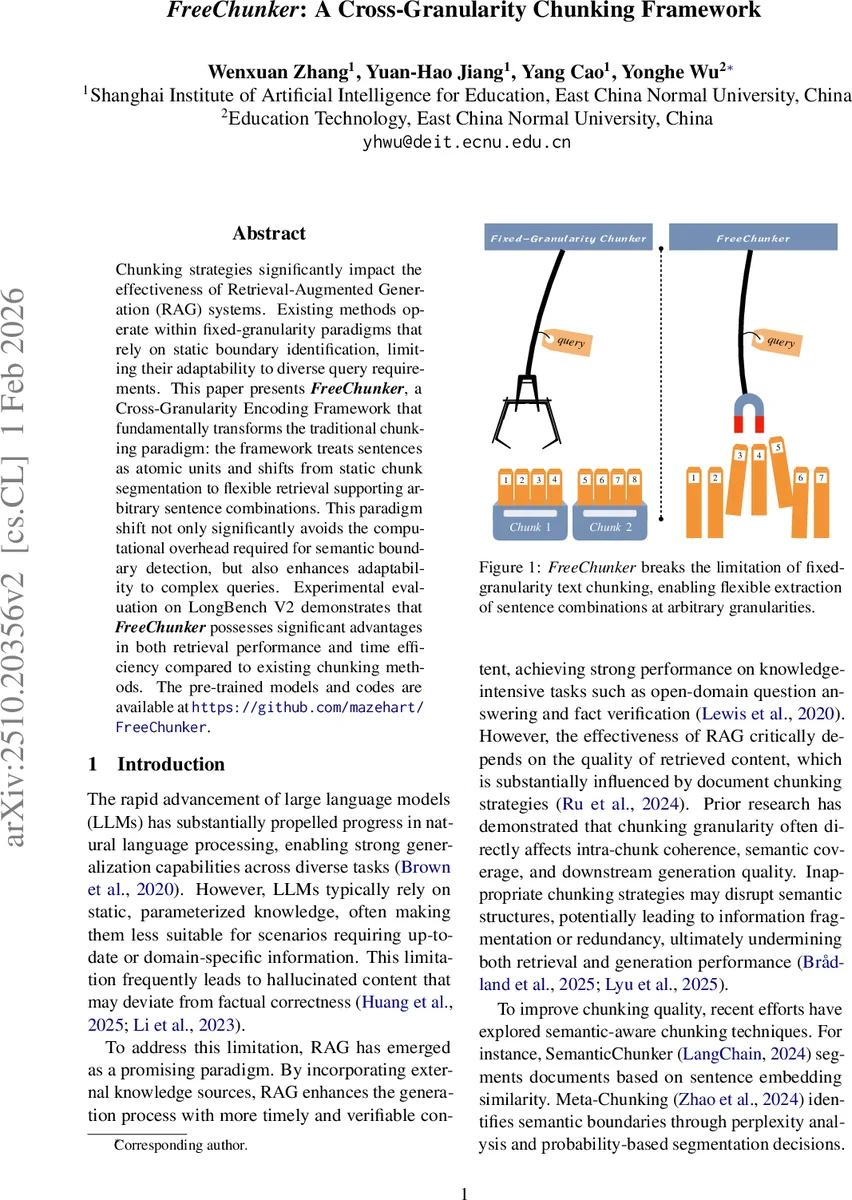

Chunking strategies significantly impact the effectiveness of Retrieval-Augmented Generation (RAG) systems. Existing methods operate within fixed-granularity paradigms that rely on static boundary identification, limiting their adaptability to diverse query requirements. This paper presents FreeChunker, a Cross-Granularity Encoding Framework that fundamentally transforms the traditional chunking paradigm: the framework treats sentences as atomic units and shifts from static chunk segmentation to flexible retrieval supporting arbitrary sentence combinations. This paradigm shift not only significantly avoids the computational overhead required for semantic boundary detection, but also enhances adaptability to complex queries. Experimental evaluation on LongBench V2 demonstrates that FreeChunker possesses significant advantages in both retrieval performance and time efficiency compared to existing chunking methods. The pre-trained models and codes are available at https://github.com/mazehart/FreeChunker.

💡 Research Summary

The paper “FreeChunker: A Cross‑Granularity Chunking Framework” addresses a core bottleneck in Retrieval‑Augmented Generation (RAG) pipelines: the way documents are split into chunks before retrieval. Existing approaches fall into two families. Fixed‑granularity chunkers divide a document into equally sized pieces (e.g., 512‑token windows). While simple, they cannot simultaneously provide fine‑grained details and broader context, leading to information fragmentation or redundancy. Semantic‑aware chunkers (SemanticChunker, Meta‑Chunking, LumberChunker) try to locate natural boundaries using sentence‑level embedding similarity, perplexity changes, or large‑language‑model predictions. Although they improve intra‑chunk coherence, they still produce a single granularity per document and require expensive model inference for boundary detection, which hurts latency at scale.

FreeChunker proposes a fundamentally different paradigm. It treats each sentence as an atomic unit and eliminates the need for explicit boundary detection. Instead, it builds a “Chunk Pattern Mask” that encodes multiple granularity patterns (e.g., 1‑sentence, 2‑sentence, …, k‑sentence chunks) in a single matrix. By feeding this mask together with sentence embeddings into a modified transformer encoder, the model can compute embeddings for all desired chunk sizes in one forward pass. The mask contains zeros for positions that belong to a particular chunk and –∞ for all others; the softmax attention therefore only aggregates the selected sentences. This design reuses the same sentence‑level embeddings across all granularity levels, reducing the computational cost from O(m·n) (encoding each chunk separately) to O(n) (encoding each sentence once).

The pipeline consists of four stages:

- Sentenizer – splits a document into sentences and encodes each sentence with a pretrained token‑level model (the authors experiment with three models ranging from 33 M to 570 M parameters). The result is a matrix E ∈ ℝⁿˣᵈ.

- Chunk Pattern Mask Construction – creates a mask P ∈ {0, −∞}^{m×n} where each row corresponds to a specific granularity g and start position s. The mask can represent any combination of contiguous sentences, enabling simultaneous generation of many chunk sizes.

- Cross‑Granularity Encoder – introduces a learnable chunk token h_chk that is replicated m times to form H. Queries Q, K, V are computed as linear projections of H and E, and attention is performed as softmax(QKᵀ/√d + P) · V. The transformer layers then produce the final chunk embeddings. Because the mask blocks irrelevant sentences, each chunk embedding is effectively a weighted sum of only its constituent sentences.

- Deduplicate‑and‑Concatenate – after retrieval, overlapping chunks are de‑duplicated by keeping the earliest start position and concatenated in order to reconstruct a coherent context for downstream generation.

Training uses a contrastive cosine loss: for each granularity (2, 4, 8, 16, 32 sentences) the model’s output v̂ is forced to match a “ground‑truth” embedding ê obtained by feeding the concatenated sentences through the same base encoder. The loss is L = 1 − cos(ê, v̂). The authors train for two epochs with AdamW (lr = 1e‑4) on 100 K documents from The Pile (each truncated to 8 k tokens). Validation shows smooth convergence; the smallest model (33 M parameters) reaches a cosine loss around 0.01–0.02 for virtually all samples, while larger models exhibit slightly higher variance, likely because a fixed 330 M‑parameter sentence encoder is shared across all experiments.

A theoretical analysis quantifies how the approximation error between v̂ and ê propagates to retrieval quality. Defining ρ = cos(ê, v̂) (embedding similarity) and s = cos(q̂, ê) (query‑to‑true‑chunk similarity), the authors derive an upper bound on the deviation of cosine similarity when the approximate embedding is used. Leveraging concentration of measure in high‑dimensional spaces (d ≥ 768), they show that the probability of a random query aligning with the error direction decays exponentially, implying that a high ρ (≈ 1) guarantees negligible impact on ranking.

Empirical evaluation on the LongBench V2 benchmark (covering open‑domain QA, fact verification, and other knowledge‑intensive tasks) demonstrates that FreeChunker consistently outperforms both fixed‑granularity baselines and semantic‑aware chunkers. Gains include a 4‑7 % increase in average R‑Recall@10 and a 30‑50 % reduction in encoding time. Importantly, the framework allows the retrieval system to select the most appropriate granularity on‑the‑fly: fine‑grained chunks for detail‑heavy queries and coarse‑grained chunks for context‑rich queries, all without additional inference passes.

In summary, FreeChunker introduces a cross‑granularity encoding paradigm that (1) removes the need for costly boundary detection, (2) enables simultaneous generation of multiple chunk sizes in a single forward pass, and (3) improves both retrieval effectiveness and efficiency in RAG pipelines. The authors release code and pretrained models, facilitating reproducibility and future extensions.

Comments & Academic Discussion

Loading comments...

Leave a Comment