WUGNECTIVES: Novel Entity Inferences of Language Models from Discourse Connectives

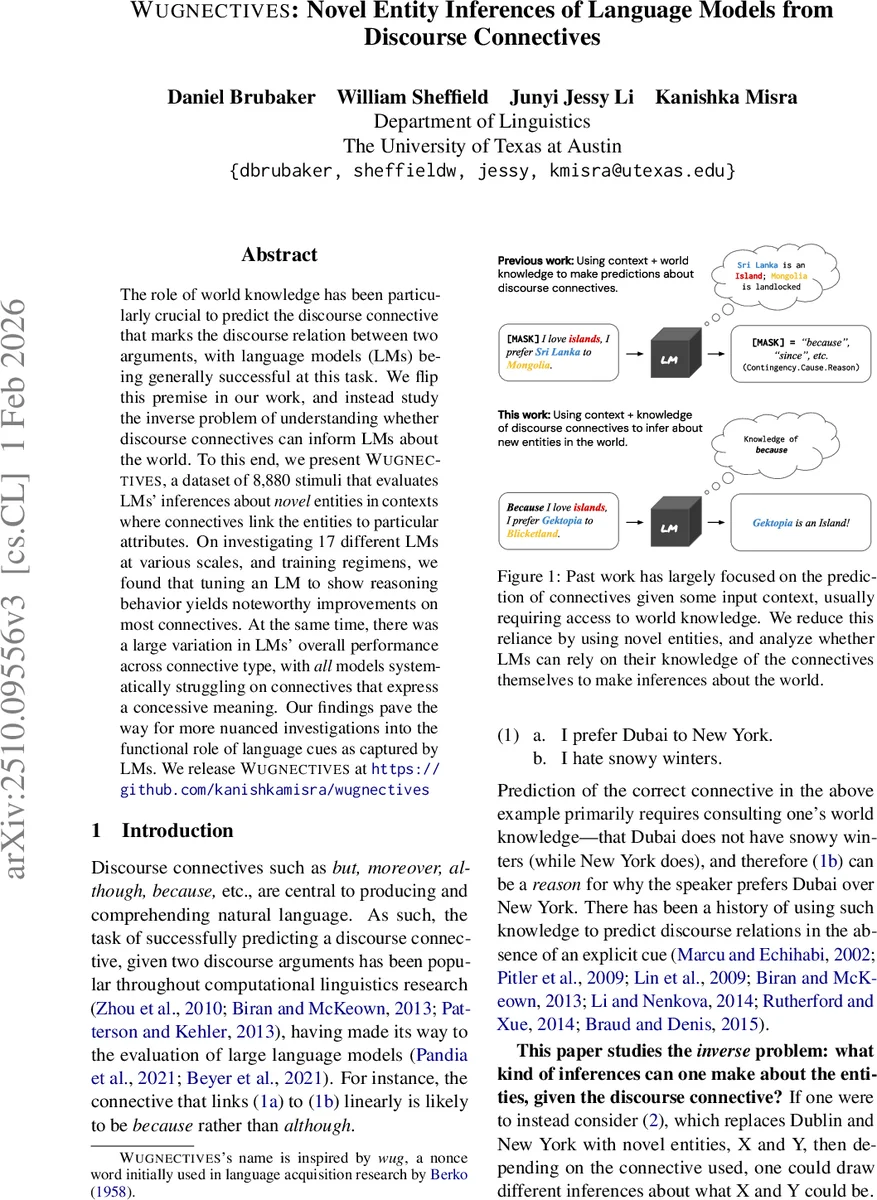

World knowledge has been shown to be crucial for predicting the discourse connective that marks the discourse relation between two arguments, and language models (LMs) are generally successful at this task. We flip this premise in our work, and instead study the inverse problem of understanding whether discourse connectives can inform LMs about the world. To this end, we present WUGNECTIVES, a dataset of 8,880 stimuli that evaluates LMs’ inferences about novel entities in contexts where connectives link the entities to particular attributes. Investigating 17 different LMs at various scales and with different training regimens, we found that tuning an LM to show reasoning behavior yields noteworthy improvements on most connectives. At the same time, there was large variation in LMs’ overall performance across connective types, with all models systematically struggling on connectives that express a concessive meaning. Our findings pave the way for more nuanced investigations into the functional role of language cues as captured by LMs. We release WUGNECTIVES at https://github.com/kanishkamisra/wugnectives

💡 Research Summary

The paper “WUGNECTIVES: Novel Entity Inferences of Language Models from Discourse Connectives” presents a novel investigation into the ability of large language models (LMs) to understand the functional meaning of discourse connectives (e.g., “because,” “although”) and use them to make inferences about novel entities. The study flips the traditional paradigm in discourse analysis, which typically evaluates how world knowledge helps predict the correct connective between two arguments. Instead, it asks: given a specific connective, what can an LM infer about the world, specifically about novel entities whose properties are unknown?

To answer this, the authors introduce the WUGNECTIVES dataset, comprising 8,880 evaluation stimuli. The core design uses nonce words (like “wug” and “dax”) as novel entities to minimize the influence of pre-existing world knowledge stored in LMs. Each stimulus consists of a premise sentence that uses a discourse connective to establish a relation between two nonce words, followed by an inference statement. The LM’s task is to judge whether the inference is entailed or contradicted by the premise. The stimuli are categorized into three families based on the type of inference licensed:

- Instantiation: Testing whether an entity is a type of another (e.g., using “for example”).

- Preference: Testing causal (“because”) and concessive (“although”) relations between a speaker’s preference and an entity’s property.

- Temporal: Testing precedence/succession relations between events (e.g., “before”, “after”).
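As a rough illustration of the premise/inference design, a preference-family stimulus could be represented as below. This is only a sketch under assumptions: the `Stimulus` fields, the sentence templates, the speaker name "Sam", and the `make_preference_stimulus` helper are all hypothetical and are not taken from the released dataset. The labelling rule reflects one reading of the summary: a causal connective supports the speaker liking the property, while a concessive one implies the opposite.

```python
from dataclasses import dataclass

# Hypothetical schema: field names are illustrative, not the dataset's format.
@dataclass(frozen=True)
class Stimulus:
    family: str      # "instantiation", "preference", or "temporal"
    connective: str  # e.g. "because", "although"
    premise: str     # sentence containing the connective and nonce words
    inference: str   # statement judged against the premise
    label: str       # "entailed" or "contradicted"


# Assumed grouping of connectives, based only on the examples in the summary.
CAUSAL = {"because"}
CONCESSIVE = {"although", "even though"}


def make_preference_stimulus(connective: str, entity: str, attribute: str) -> Stimulus:
    """Build one preference stimulus from an illustrative (made-up) template."""
    premise = f"Sam likes {entity} {connective} they are {attribute}."
    inference = f"Sam likes things that are {attribute}."
    if connective in CAUSAL:
        label = "entailed"        # the property is the reason for liking
    elif connective in CONCESSIVE:
        label = "contradicted"    # liking holds in spite of the property
    else:
        raise ValueError(f"unsupported connective: {connective!r}")
    return Stimulus("preference", connective, premise, inference, label)


causal = make_preference_stimulus("because", "wugs", "daxy")
concessive = make_preference_stimulus("although", "wugs", "daxy")
print(causal.premise, "->", causal.label)
print(concessive.premise, "->", concessive.label)
```

Because the entity and attribute are nonce words, the only cue that separates the two labels here is the connective itself, which is the contrast the benchmark is designed to isolate.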

The study evaluates 17 open-source LMs of varying scales (from 1B to 70B parameters) and training regimens (base models, instruction-tuned models, and reasoning-tuned models). The key findings are:

- Overall Performance: LMs generally performed above chance on stimuli involving temporal relations and causal preference, indicating some grasp of these connective meanings.

- The Concession Challenge: A striking and systematic failure was observed across all models on connectives expressing concessive relations (e.g., “although,” “even though,” “despite that”). Performance on these was near random, suggesting a fundamental difficulty in processing the complex pragmatic expectations and negated causal implications inherent in concession.

- Impact of Training: While model scale and standard instruction-tuning showed no clear benefit, models specifically fine-tuned for reasoning behavior (following Guo et al., 2025) achieved the best overall performance across most connective senses, though they still struggled with concession.

- Role of World Knowledge: A follow-up experiment replacing nonce words with real-world entities (e.g., “Dubai,” “New York”) showed a significant performance boost, confirming that world knowledge aids connective-based inference. However, the improvement was weakest for concessive connectives, reinforcing the conclusion that their meaning is particularly difficult for LMs to capture abstractly.
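The per-connective comparisons above amount to grouping judgments by connective sense and comparing each group's accuracy with chance. A minimal sketch of that bookkeeping follows, assuming a two-way entailed/contradicted judgment with balanced labels (so chance is 0.5). The `accuracy_by_group` helper and the record layout are hypothetical, and the toy records are placeholders that exist only to exercise the function; they are not results from the paper.

```python
from collections import defaultdict

# Assumption: binary judgment with balanced labels, so chance accuracy is 0.5.
CHANCE = 0.5


def accuracy_by_group(records):
    """Return accuracy per group for (group, gold_label, predicted_label) triples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, gold, predicted in records:
        total[group] += 1
        correct[group] += int(gold == predicted)
    return {group: correct[group] / total[group] for group in total}


# Placeholder records with neutral group names; NOT data from the paper.
toy_records = [
    ("sense_a", "entailed", "entailed"),
    ("sense_a", "contradicted", "contradicted"),
    ("sense_b", "contradicted", "entailed"),
    ("sense_b", "entailed", "entailed"),
]

scores = accuracy_by_group(toy_records)
for group, acc in sorted(scores.items()):
    print(f"{group}: accuracy={acc:.2f}, margin over chance={acc - CHANCE:+.2f}")
```

Reporting the margin over chance per sense, rather than a single pooled accuracy, keeps a weak sense from being hidden by strong ones.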

The paper concludes that while LMs can leverage some functional meanings of connectives, their understanding remains incomplete, especially for logically complex relations like concession. The WUGNECTIVES benchmark provides a valuable tool for disentangling world knowledge from knowledge of linguistic form, enabling more nuanced investigations into how LMs acquire and use semantic knowledge from language exposure alone. The findings highlight a specific weakness in current LMs’ discourse-level reasoning and pave the way for future work aimed at improving their comprehension of nuanced discourse cues.
