Effective and Efficient Cross-City Traffic Knowledge Transfer: A Privacy-Preserving Perspective

Traffic prediction aims to forecast future traffic conditions using historical traffic data, playing a crucial role in urban computing and transportation management. While transfer learning and federated learning have been employed to address the scarcity of traffic data by transferring traffic knowledge from data-rich to data-scarce cities without exchanging traffic data, existing approaches in Federated Traffic Knowledge Transfer (FTT) still face several critical challenges, such as potential privacy leakage, cross-city data distribution discrepancies, and low data quality, which hinder their practical application in real-world scenarios. To this end, we present FedTT, a novel privacy-aware and efficient federated learning framework for cross-city traffic knowledge transfer. Specifically, our proposed framework includes three key innovations: (i) a traffic view imputation method that completes missing traffic data to enhance data quality, (ii) a traffic domain adapter that uniformly transforms traffic data to address data distribution discrepancies, and (iii) a traffic secret aggregation protocol that securely aggregates traffic data to safeguard data privacy. Extensive experiments on 4 real-world datasets demonstrate that the proposed FedTT framework outperforms 14 state-of-the-art baselines.

💡 Research Summary

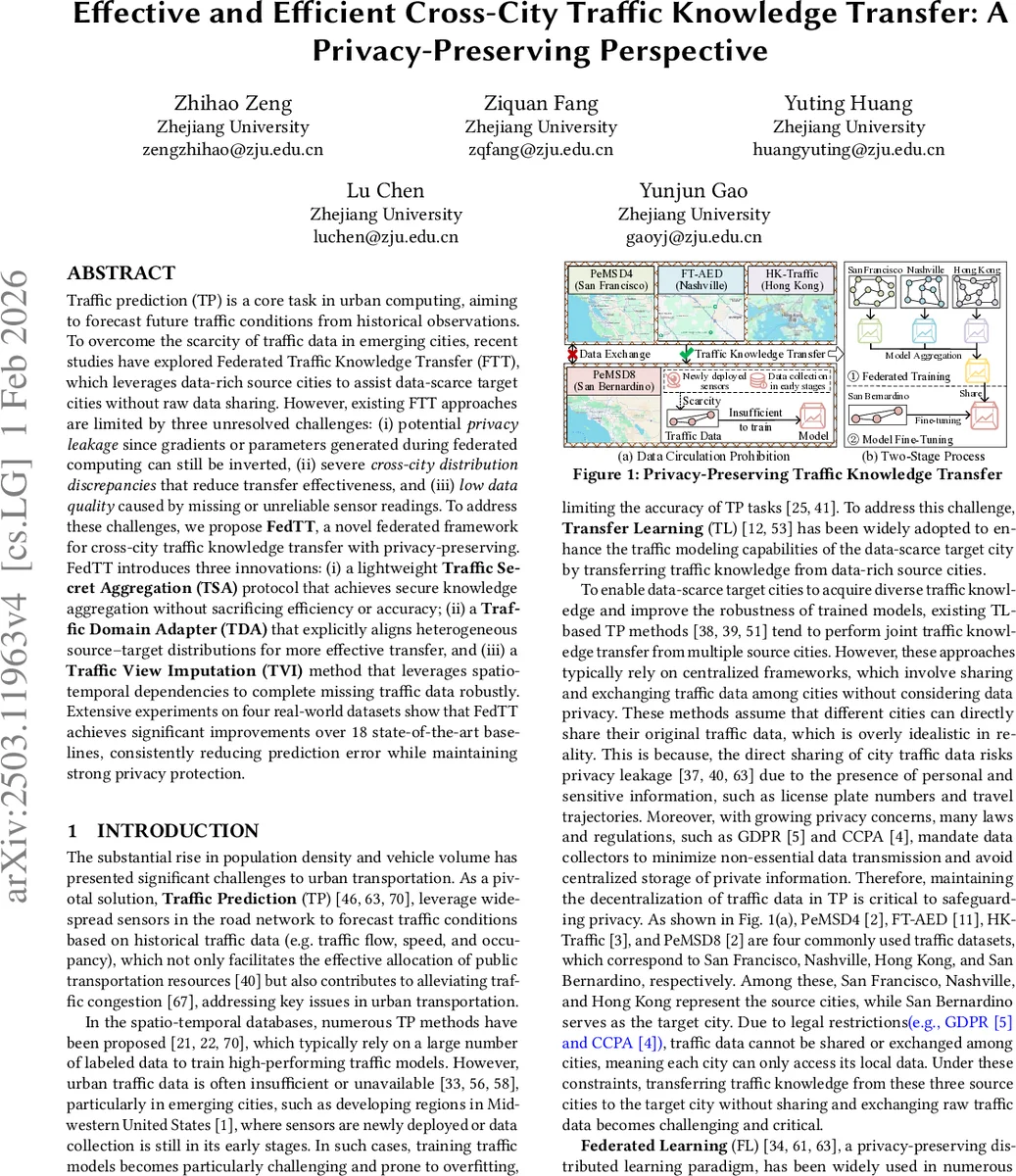

The paper introduces FedTT, a novel federated learning framework designed to transfer traffic knowledge from data-rich source cities to data-scarce target cities while rigorously preserving privacy. Existing Federated Traffic Knowledge Transfer (FTT) approaches rely on a two-stage pipeline (federated source model training with global model aggregation, followed by fine-tuning in the target city) that suffers from four major drawbacks: (1) privacy leakage through gradient or parameter inversion attacks, (2) severe cross-city distribution mismatches that degrade transfer effectiveness, (3) low data quality due to missing sensor readings, and (4) high computational and communication overhead caused by complex models and serial processing.

FedTT tackles these challenges with four tightly integrated modules:

- Traffic Secret Transmission (TST) and Traffic Secret Aggregation (TSA): Instead of sending raw gradients, each client secret-shares its transformed traffic data. The server aggregates the secret shares and reconstructs the global statistic without ever seeing any individual client's plaintext data. This lightweight secret-sharing protocol avoids the heavy cost of homomorphic encryption and the utility loss of differential privacy, delivering strong privacy guarantees with minimal overhead.

- Traffic Domain Adapter (TDA): To bridge the statistical gap between source and target traffic domains, TDA performs three steps: (a) domain transformation that learns a mapping from the source feature space to the target's distribution, (b) adversarial alignment that minimizes a domain discriminator loss, and (c) classification re-mapping that aligns transformed data with the target's label schema. By normalizing the domains, TDA enables the transferred knowledge to be directly applicable to the target city.

- Traffic View Imputation (TVI): Real-world traffic sensors frequently produce missing values due to failures or maintenance. TVI fills these gaps using a dual-view strategy: spatial view extension leverages graph neural networks over neighboring sensors, while temporal view enhancement employs a variational auto-encoder to predict values at missing timestamps. The result is a high-fidelity, complete dataset that feeds the TDA and TSA modules, substantially improving model robustness.

- Federated Parallel Training (FPT): FedTT replaces the sequential two-stage process with a parallelized workflow. Split learning separates the heavy feature extraction (performed locally) from the lightweight aggregation (performed centrally). TVI and TDA run concurrently on each client, and only the intermediate representations are transmitted. Consequently, communication rounds drop by more than an order of magnitude, and total training time is reduced by a factor of three compared with non-parallel baselines.

The authors evaluate FedTT on four real-world traffic datasets (PeMSD4 and PeMSD8 from California, HK-Traffic from Hong Kong, and Nashville) covering diverse geographic and temporal patterns. FedTT is benchmarked against 14 state-of-the-art baselines, including recent federated traffic transfer methods (T-ISTGNN, pFedCTP) and classic transfer learning approaches. Results show consistent improvements: mean absolute error (MAE) reductions ranging from 5.43% to 22.78%, lower root-mean-square error (RMSE) and mean absolute percentage error (MAPE), and privacy risk scores that are effectively zero under simulated inversion attacks. Ablation studies confirm that each component contributes meaningfully: removing TDA inflates MAE by about 12%, while omitting TVI leads to an 18% error increase when missing-sensor rates exceed 30%.
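The evaluation reports MAE, RMSE, and MAPE, along with relative MAE reductions against baselines. The sketch below uses the standard definitions of these metrics. Skipping zero ground-truth values in `mape` is an assumption (conventions for zero readings vary across traffic studies), and the numbers in the usage note are made up for illustration, not results from the paper.

```python
from math import sqrt


def mae(y: list[float], yhat: list[float]) -> float:
    """Mean absolute error."""
    return sum(abs(a - b) for a, b in zip(y, yhat)) / len(y)


def rmse(y: list[float], yhat: list[float]) -> float:
    """Root-mean-square error."""
    return sqrt(sum((a - b) ** 2 for a, b in zip(y, yhat)) / len(y))


def mape(y: list[float], yhat: list[float]) -> float:
    """Mean absolute percentage error in %, skipping zero ground truth
    (an assumed convention; other works mask or clip differently)."""
    pairs = [(a, b) for a, b in zip(y, yhat) if a != 0]
    if not pairs:
        raise ValueError("MAPE undefined: all ground-truth values are zero")
    return 100 * sum(abs((a - b) / a) for a, b in pairs) / len(pairs)


def relative_reduction(baseline: float, ours: float) -> float:
    """Percentage by which `ours` lowers the baseline error."""
    return 100 * (baseline - ours) / baseline
```

For example, `mae([100.0, 200.0, 300.0, 400.0], [110.0, 190.0, 330.0, 400.0])` is 12.5, and a model that lowers a baseline MAE of 20.0 to 15.0 gives `relative_reduction(20.0, 15.0) == 25.0`, that is, a 25% MAE reduction in the sense used above.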

The paper also discusses limitations: scalability of the secret‑sharing scheme with many clients, potential overhead of key exchange in large federations, and the need for broader validation of TVI on heterogeneous road networks (e.g., mixed highway‑urban settings). Future work will explore multi‑source extensions, more lightweight cryptographic primitives, and domain‑specific imputation models.

In summary, FedTT delivers an effective, efficient, and privacy‑preserving solution for cross‑city traffic knowledge transfer. By jointly addressing privacy, distribution discrepancy, data quality, and efficiency, it sets a new benchmark for federated traffic prediction and offers a template that could be adapted to other spatio‑temporal transfer learning scenarios.
