Achieving Time Series Reasoning Requires Rethinking Model Design, Tasks Formulation, and Evaluation

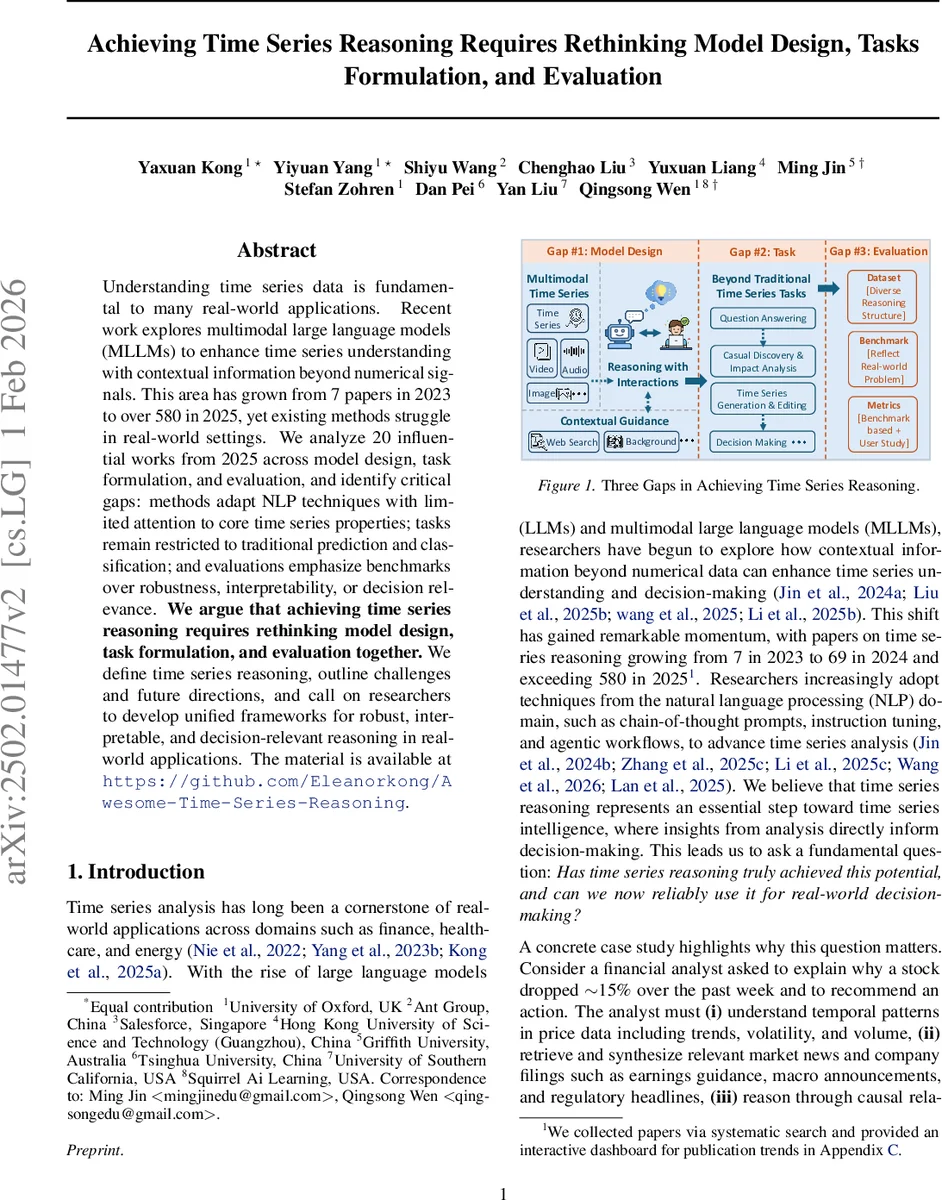

Understanding time series data is fundamental to many real-world applications. Recent work explores multimodal large language models (MLLMs) to enhance time series understanding with contextual information beyond numerical signals. This area has grown from 7 papers in 2023 to over 580 in 2025, yet existing methods struggle in real-world settings. We analyze 20 influential works from 2025 across model design, task formulation, and evaluation, and identify critical gaps: methods adapt NLP techniques with limited attention to core time series properties; tasks remain restricted to traditional prediction and classification; and evaluations emphasize benchmarks over robustness, interpretability, or decision relevance. We argue that achieving time series reasoning requires rethinking model design, task formulation, and evaluation together. We define time series reasoning, outline challenges and future directions, and call on researchers to develop unified frameworks for robust, interpretable, and decision-relevant reasoning in real-world applications. The material is available at https://github.com/Eleanorkong/Awesome-Time-Series-Reasoning.

💡 Research Summary

The paper “Achieving Time Series Reasoning Requires Rethinking Model Design, Tasks Formulation, and Evaluation” provides a critical meta‑analysis of the rapidly expanding field of time‑series reasoning, a sub‑area that seeks to move beyond simple forecasting or classification toward models that can understand, explain, and support decisions based on temporal data. The authors observe that the number of papers on the topic has exploded from 7 in 2023 to over 580 in 2025, yet most of these works still fall short when applied to real‑world decision‑making scenarios.

To pinpoint the shortcomings, the authors systematically review 20 influential 2025 papers across three dimensions: (1) Model Design, (2) Task Formulation, and (3) Evaluation. Their analysis uncovers three major gaps.

Gap 1 – Model Design: The majority of existing approaches simply transplant natural‑language‑processing (NLP) techniques—prompting, chain‑of‑thought (CoT), instruction tuning, preference optimization—into the time‑series domain. This leads to three problematic representation strategies: (i) raw numeric values serialized as text, which quickly hit token limits and miss high‑frequency patterns; (ii) tokenization/bucketing that improves interpretability but sacrifices precision and inflates vocabularies for multivariate series; (iii) learned encoders (patch‑based, frequency‑domain, graph‑based) that compress long histories but become black‑boxes, making reasoning opaque; and (iv) visual‑to‑vision‑LLM pipelines that capture macro trends but lose fine‑grained numeric detail. Consequently, there is no unified representation that simultaneously guarantees interpretability, computational efficiency, and precise temporal alignment.

Gap 2 – Task Formulation: Current benchmarks focus on next‑value prediction, pattern classification, or templated question‑answering. Real‑world problems, however, demand richer capabilities such as causal discovery, multimodal integration, counterfactual analysis, and decision‑making under uncertainty. The authors introduce a taxonomy that separates (a) reasoning structures—end‑to‑end, forward, backward, and forward‑backward hybrid—and (b) reasoning types—deductive, inductive, etiological, causal, analogical, counterfactual, mathematical, and abductive. Mapping these to task objectives reveals that many practical scenarios (e.g., explaining a stock crash, diagnosing a medical event, assessing energy‑policy impact) are not covered by existing task definitions.

Gap 3 – Evaluation: Most papers evaluate on public time‑series benchmarks (M4, UCR, etc.) and automatic text similarity metrics, which measure surface accuracy but not the quality of reasoning, evidence grounding, robustness to conflicting information, or relevance to downstream decisions. The authors argue for a four‑dimensional evaluation protocol: (1) multi‑step reasoning accuracy, (2) coherence and fidelity of natural‑language explanations, (3) robustness to contradictory or noisy external context, and (4) decision relevance assessed via user studies or domain‑expert validation.

Based on these observations, the paper proposes a forward‑looking research agenda. First, develop hybrid encoders that preserve temporal nuances while producing language‑compatible tokens, possibly combining tokenization with learned embeddings. Second, integrate dynamic external knowledge retrieval with proper time‑alignment mechanisms, enabling models to fetch and fuse news, reports, or sensor metadata on the fly. Third, embed iterative feedback loops where the model can self‑verify its conclusions, request clarification, or refine its answer—mirroring human reasoning cycles. Fourth, create standardized “time‑series reasoning” benchmarks that include multivariate, multimodal, and causal annotations, together with evaluation suites that test the four dimensions above.

In sum, the paper convincingly argues that achieving genuine time‑series reasoning—where models not only predict but also explain why patterns occur and how they should influence actions—requires a holistic redesign of model architectures, task specifications, and evaluation methodologies. By articulating clear gaps and offering concrete directions, the work lays a roadmap for the next generation of AI systems that can serve as trustworthy decision‑support partners across finance, healthcare, energy, and many other domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment