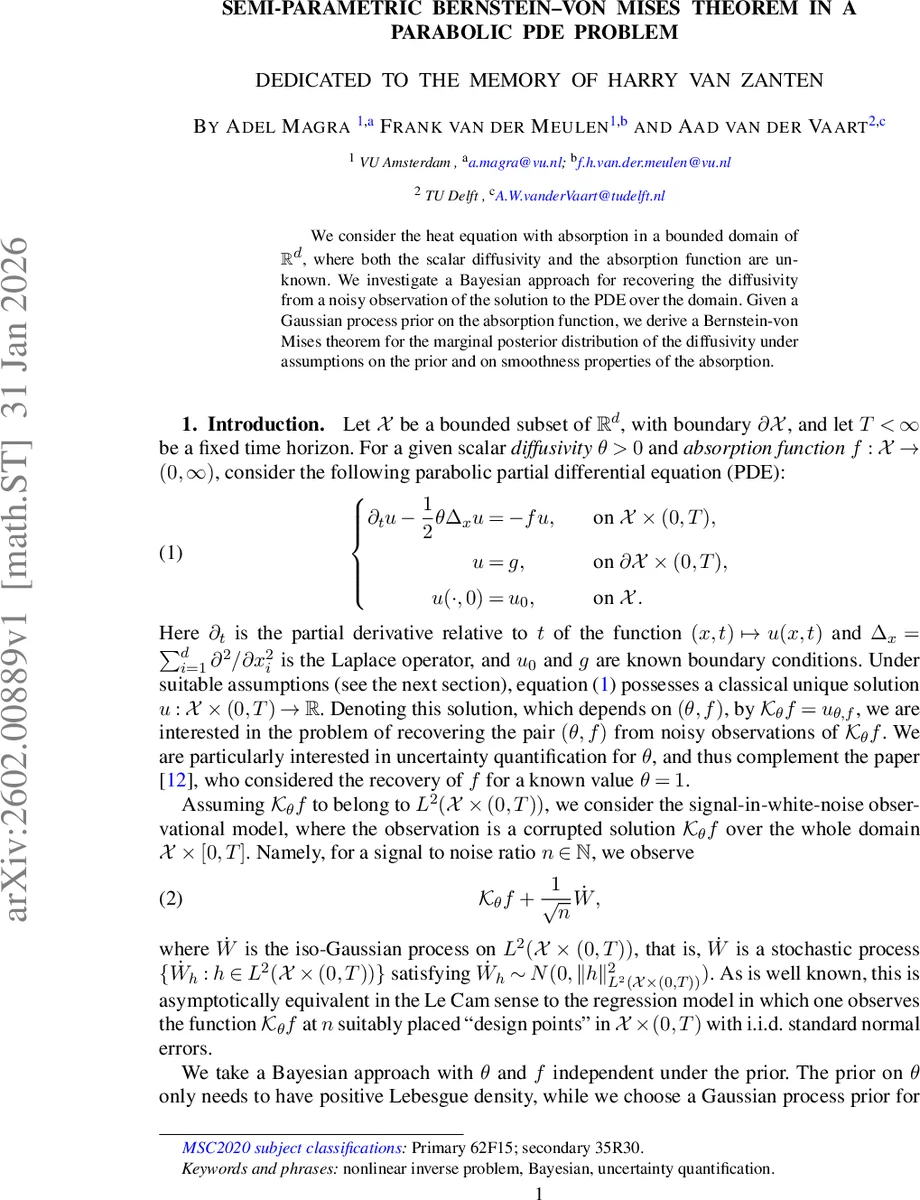

Semi-parametric Bernstein-von Mises Theorem in a Parabolic PDE Problem

We consider the heat equation with absorption in a bounded domain of $\mathbb{R}^d$, where both the scalar diffusivity and the absorption function are unknown. We investigate a Bayesian approach for recovering the diffusivity from a noisy observation of the solution to the PDE over the domain. Given a Gaussian process prior on the absorption function, we derive a Bernstein-von Mises theorem for the marginal posterior distribution of the diffusivity under assumptions on the prior and on smoothness properties of the absorption.

💡 Research Summary

This paper studies a Bayesian inverse problem for the heat equation with an absorption term on a bounded spatial domain. The forward model is the parabolic partial differential equation

∂ₜu – (θ/2)Δₓu = – f u, u|_{∂X×(0,T)} = g, u(·,0) = u₀,

where the diffusivity θ > 0 and the absorption function f : X → (0,∞) are both unknown. Observations consist of the whole space‑time solution corrupted by Gaussian white noise, i.e.

Yⁿ = u_{θ,f} + n^{–½}·Ẇ,

with Ẇ an isonormal Gaussian process on L²(X×(0,T)). This model is asymptotically equivalent, in Le Cam's sense, to a regression experiment with n design points and independent normal errors.
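To make the forward model and the observation scheme concrete, here is a minimal NumPy sketch for d = 1 with X = (0,1). It assumes time-constant Dirichlet data g and uses a backward-Euler finite-difference scheme of our own choosing; the paper works with the PDE analytically, and `solve_forward` and `observe` are hypothetical helpers. `observe` relies on the fact that testing the white noise against normalised cell indicators turns Yⁿ into cell averages of u plus independent N(0, 1/(n·cell volume)) errors; here grid-node values stand in for cell averages. With n cells in a unit-volume space-time domain the error variance is 1, which is the heuristic behind the regression equivalence above.

```python
import numpy as np

def solve_forward(theta, f, u0, g_left, g_right, T=1.0, nx=51, nt=200):
    """Hypothetical helper (not from the paper): backward-Euler / central-difference
    sketch on X = (0, 1) for u_t - (theta/2) u_xx = -f u, with time-constant
    Dirichlet data u(0, t) = g_left, u(1, t) = g_right and u(x, 0) = u0(x).
    Returns the grids x, t and U with U[k, i] ~ u(x_i, t_k)."""
    x = np.linspace(0.0, 1.0, nx)
    t = np.linspace(0.0, T, nt + 1)
    dx, dt = x[1] - x[0], T / nt
    m = nx - 2                        # number of interior nodes
    c = 0.5 * theta / dx**2           # (theta/2) / dx^2
    # A approximates (theta/2) d^2/dx^2 - f on the interior nodes
    A = (np.diag(-2.0 * c - f(x[1:-1]))
         + np.diag(c * np.ones(m - 1), 1)
         + np.diag(c * np.ones(m - 1), -1))
    b = np.zeros(m)                   # contribution of the boundary values
    b[0], b[-1] = c * g_left, c * g_right
    M = np.eye(m) - dt * A            # implicit step: (I - dt A) U^{k+1} = U^k + dt b
    U = np.empty((nt + 1, nx))
    U[0] = u0(x)
    U[:, 0], U[:, -1] = g_left, g_right
    for k in range(nt):
        U[k + 1, 1:-1] = np.linalg.solve(M, U[k, 1:-1] + dt * b)
    return x, t, U

def observe(U, cell_volume, n, rng):
    """Hypothetical helper: discretised Y^n = u + n^{-1/2} W-dot. Each value of U
    (treated as a cell average) gets independent N(0, 1/(n * cell_volume)) noise."""
    return U + rng.standard_normal(U.shape) / np.sqrt(n * cell_volume)
```

As a sanity check, with f ≡ 1, g = 0 and u₀ = sin(πx) the exact solution is exp(–(θπ²/2 + 1)t)·sin(πx), which the scheme reproduces up to discretisation error.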

The prior on (θ,f) is a product prior, so θ and f are independent a priori. The prior for θ can be any distribution with a positive Lebesgue density on a compact interval. For f, a Gaussian process prior is placed on a function F in the Sobolev space H^β(X), with β > 2 + d/2, and positivity is enforced through a smooth link function Φ : ℝ → (f_min,∞), so that f = Φ(F).
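A prior of this shape can be sampled as in the following sketch for d = 1 on X = (0,1). Everything specific here is an assumption for illustration, not the paper's choice: θ is uniform on a compact interval (a positive density there), F is a hypothetical truncated cosine series whose coefficients decay like (1 + k)^(–(β+1)), which keeps draws of the untruncated series in H^β, and the link Φ(x) = f_min + log(1 + eˣ) is one smooth, increasing map of ℝ onto (f_min,∞).

```python
import numpy as np

def softplus_link(F, f_min):
    """Hypothetical link: smooth, strictly increasing map from R onto (f_min, inf)."""
    return f_min + np.logaddexp(0.0, F)

def sample_prior(x, beta=3.0, K=50, theta_range=(0.5, 2.0), f_min=0.1, rng=None):
    """Hypothetical product prior on (theta, F) over X = (0, 1):
    theta ~ Uniform(theta_range), independent of
    F = sum_{k<K} (1 + k)^(-(beta + 1)) Z_k cos(k pi x), Z_k iid N(0, 1).
    Returns (theta, F, f) with f = Phi(F) evaluated on the grid x."""
    rng = np.random.default_rng() if rng is None else rng
    theta = rng.uniform(*theta_range)
    k = np.arange(K)
    # coefficient decay that keeps the (untruncated) series in H^beta(0, 1)
    coeffs = (1.0 + k) ** (-(beta + 1.0)) * rng.standard_normal(K)
    F = coeffs @ np.cos(np.pi * np.outer(k, x))
    return theta, F, softplus_link(F, f_min)
```

The default β = 3 satisfies β > 2 + d/2 = 2.5 for d = 1; every draw of f stays strictly above f_min, as the positivity constraint requires.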

The central contribution is a semi‑parametric Bernstein‑von Mises (BvM) theorem for the marginal posterior of θ. The authors first establish that the forward map K_θ(F) = u_{θ,Φ(F)} is Fréchet differentiable in both arguments, with derivatives

∂_θK_θ(F) = L_{θ,F}^{–1}(–½ΔₓK_θ(F)),

∂_F K_θ(F)·h = L_{θ,F}^{–1}(h Φ′(F) K_θ(F)),

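where L_{θ,F} = (θ/2)Δₓ – ∂ₜ – Φ(F), with Φ(F) acting by multiplication, and Q = X×(0,T) is the space-time cylinder. Using Fredholm theory, the authors prove that L_{θ,F} is an isomorphism from the parabolic Sobolev space H^{2,1}_{B,0}(Q) onto L²(Q), so that L_{θ,F}^{–1} exists and is continuous.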
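The identity ∂_θK_θ(F) = L_{θ,F}^{–1}(–½ΔₓK_θ(F)) says that the θ-derivative V solves the linearised problem ∂ₜV – (θ/2)ΔₓV + fV = ½ΔₓK_θ(F) with zero initial and boundary data. The sketch below illustrates this numerically for d = 1: it solves that linearised problem and compares the result with a central finite difference of the forward solver in θ. The helpers `forward` and `dtheta_K` and the backward-Euler scheme are our own assumptions, not the paper's construction.

```python
import numpy as np

def _spatial_ops(theta, f_grid):
    """D: second-difference matrix on the interior nodes of a uniform grid on [0, 1];
    A = (theta/2) D - diag(f): the spatial part of L_{theta,F} = (theta/2) Lap - d/dt - f."""
    nx = len(f_grid)
    dx = 1.0 / (nx - 1)
    m = nx - 2
    D = (np.diag(-2.0 * np.ones(m)) + np.diag(np.ones(m - 1), 1)
         + np.diag(np.ones(m - 1), -1)) / dx**2
    return D, 0.5 * theta * D - np.diag(f_grid[1:-1]), dx

def forward(theta, f_grid, u0_grid, g_left, g_right, T=0.1, nt=100):
    """Hypothetical helper: backward-Euler K_theta on interior nodes (rows = time levels)."""
    D, A, dx = _spatial_ops(theta, f_grid)
    bc = np.zeros(len(f_grid) - 2)            # boundary values as seen by D
    bc[0], bc[-1] = g_left / dx**2, g_right / dx**2
    dt = T / nt
    M = np.eye(len(bc)) - dt * A
    K = [u0_grid[1:-1]]
    for _ in range(nt):
        K.append(np.linalg.solve(M, K[-1] + dt * 0.5 * theta * bc))
    return np.array(K), D, bc, M, dt

def dtheta_K(theta, f_grid, u0_grid, g_left, g_right, T=0.1, nt=100):
    """Hypothetical helper: solve L_{theta,F} V = -(1/2) Lap K with zero initial and
    boundary data, i.e. V_t - (theta/2) V_xx + f V = (1/2) K_xx, with the same scheme."""
    K, D, bc, M, dt = forward(theta, f_grid, u0_grid, g_left, g_right, T, nt)
    V = [np.zeros_like(K[0])]
    for k in range(nt):
        lap_K = D @ K[k + 1] + bc             # discrete Lap K at the new time level
        V.append(np.linalg.solve(M, V[-1] + dt * 0.5 * lap_K))
    return np.array(V)
```

Because the linearised solve reuses the implicit matrix of the forward step, it is exactly the θ-derivative of the discrete scheme, so it agrees with a central difference up to O(ε²). The F-derivative can be checked the same way, with source –hΦ′(F)K_θ(F) in place of ½ΔₓK_θ(F).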

With these derivatives the log‑likelihood

ℓ_n(θ,F) = n⟨Yⁿ, K_θ(F)⟩₂ – (n/2)‖K_θ(F)‖₂²

admits a locally asymptotically normal (LAN) expansion along one‑dimensional sub‑models (θ+s, F+tG). The LAN structure yields an efficient Fisher information

I(θ₀) = E_{θ₀,F₀}
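In a discretised model with cell averages y_j of Yⁿ and cells of volume Δ, the log-likelihood ℓ_n(θ,F) = n⟨Yⁿ, K_θ(F)⟩₂ – (n/2)‖K_θ(F)‖₂² becomes nΔ·Σ_j (y_j K_j – K_j²/2), which equals –(nΔ/2)·Σ_j (y_j – K_j)² up to a term free of (θ,F). The sketch below evaluates it on a hypothetical toy case with f ≡ c known (so only θ varies, unlike the semi-parametric setting of the paper), g = 0 and u₀ = sin(πx), where K_θ(x,t) = exp(–(θπ²/2 + c)t)·sin(πx) in closed form; midpoint values stand in for cell averages.

```python
import numpy as np

def log_likelihood(y_cells, K_cells, cell_volume, n):
    """Riemann-sum version of l_n = n <Y^n, K> - (n/2) ||K||^2 (hypothetical helper)."""
    inner = cell_volume * np.sum(y_cells * K_cells)
    sq_norm = cell_volume * np.sum(K_cells ** 2)
    return n * inner - 0.5 * n * sq_norm

def toy_forward(theta, c, x, t):
    """Closed-form K_theta for f = c, g = 0, u0 = sin(pi x) on (0, 1); rows = times."""
    rate = 0.5 * theta * np.pi ** 2 + c
    return np.exp(-rate * t)[:, None] * np.sin(np.pi * x)[None, :]

# Profile l_n over a theta grid for data simulated at theta_0 = 1, c = 1.
xm = (np.arange(50) + 0.5) / 50                  # spatial cell midpoints
tm = (np.arange(50) + 0.5) / 50                  # temporal cell midpoints
vol, n = 1.0 / 2500, 10**6
rng = np.random.default_rng(1)
K0 = toy_forward(1.0, 1.0, xm, tm)
y = K0 + rng.standard_normal(K0.shape) / np.sqrt(n * vol)
grid = np.linspace(0.5, 1.5, 201)
ll = [log_likelihood(y, toy_forward(th, 1.0, xm, tm), vol, n) for th in grid]
theta_hat = grid[int(np.argmax(ll))]
```

With noise-free data (y = K0) the profile is maximised exactly at θ = 1, since ℓ_n then reduces to minus a sum of squared residuals.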
