Environment-Aware Adaptive Pruning with Interleaved Inference Orchestration for Vision-Language-Action Models

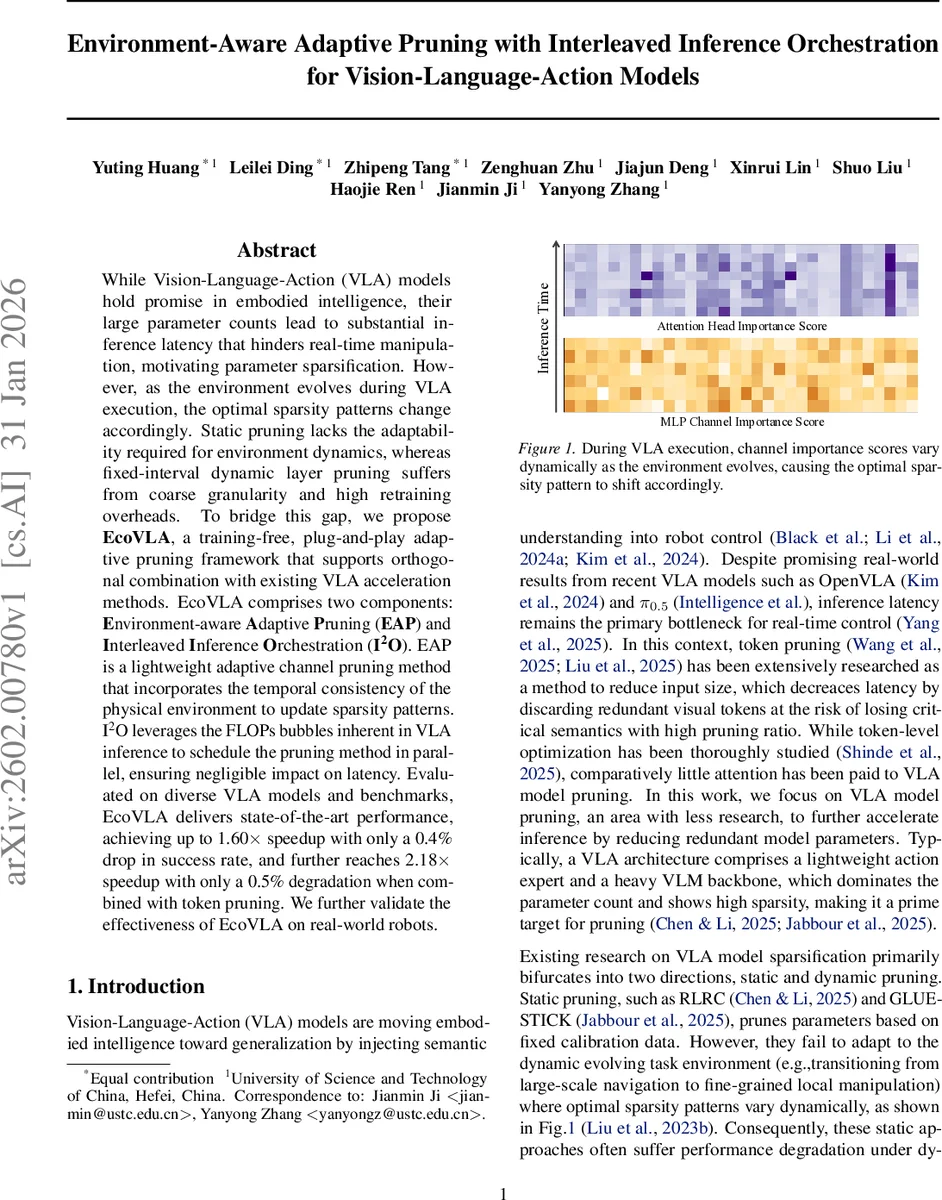

While Vision-Language-Action (VLA) models hold promise in embodied intelligence, their large parameter counts lead to substantial inference latency that hinders real-time manipulation, motivating parameter sparsification. However, as the environment evolves during VLA execution, the optimal sparsity patterns change accordingly. Static pruning lacks the adaptability required for environment dynamics, whereas fixed-interval dynamic layer pruning suffers from coarse granularity and high retraining overheads. To bridge this gap, we propose EcoVLA, a training-free, plug-and-play adaptive pruning framework that supports orthogonal combination with existing VLA acceleration methods. EcoVLA comprises two components: Environment-aware Adaptive Pruning (EAP) and Interleaved Inference Orchestration ($I^2O$). EAP is a lightweight adaptive channel pruning method that incorporates the temporal consistency of the physical environment to update sparsity patterns. $I^2O$ leverages the FLOPs bubbles inherent in VLA inference to schedule the pruning method in parallel, ensuring negligible impact on latency. Evaluated on diverse VLA models and benchmarks, EcoVLA delivers state-of-the-art performance, achieving up to 1.60$\times$ speedup with only a 0.4% drop in success rate, and further reaches 2.18$\times$ speedup with only a 0.5% degradation when combined with token pruning. We further validate the effectiveness of EcoVLA on real-world robots.

💡 Research Summary

Vision‑Language‑Action (VLA) models have shown great promise for embodied AI, but their large Vision‑Language Model (VLM) backbones cause prohibitive inference latency for real‑time robot control. Existing sparsification approaches fall into two categories. Static pruning (e.g., RLRC, GLUE‑STICK) fixes a sparsity mask based on offline calibration data; it cannot adapt to the ever‑changing physical environment and requires costly retraining. Dynamic pruning methods that select layers at fixed intervals (e.g., MoLe‑VLA, DeeR‑VLA) introduce auxiliary routers, incur extra training, and only adjust sparsity at a coarse layer level, missing fine‑grained intra‑layer redundancy. Consequently, a training‑free, fine‑grained, environment‑aware pruning technique is needed.

The paper introduces EcoVLA, a plug‑and‑play framework that consists of (1) Environment‑aware Adaptive Pruning (EAP) and (2) Interleaved Inference Orchestration (I²O). EcoVLA requires no additional training and can be combined orthogonally with existing VLA acceleration methods such as token pruning.

Environment‑aware Adaptive Pruning (EAP).

EAP performs structured channel pruning that reacts to visual changes in the robot’s surroundings. At each time step it extracts token features from the VLA visual encoder and computes an average cosine similarity sₜ between the current frame and the previous one. A sliding window Hₜ of the last T similarity scores is maintained; if sₜ falls below the p‑th quantile of Hₜ, a sparsity update is triggered. This dynamic threshold makes the system robust to distribution shifts: during rapid motion the quantile drops, suppressing unnecessary updates, while in stable phases the quantile rises, allowing fine‑grained adjustments.

When an update is triggered, EAP first runs a dense forward pass for the current frame to obtain intermediate hidden states Xₗ,int,τ. For each structured channel k in block l, an instantaneous feature εₗ,τ,k is computed by aggregating activations across the sequence dimension. To enforce temporal consistency, a historical feature Eₗ,τ‑1,k is kept and fused with the instantaneous feature using an inertia parameter α: Ẽₗ,τ,k = α·Eₗ,τ‑1,k + (1‑α)·εₗ,τ,k. The historical feature itself is updated via an exponential moving average with momentum λ, providing a stable prior across frames.

Channel importance scores are then calculated as

Sₗ,τ,k = ∑{i}|W{l,final}^{i,k}|²·Ẽₗ,τ,k,

where W_{l,final} is the final weight matrix of block l. Channels with the lowest scores are pruned, and because the pruning is structured, the corresponding input channels of the final linear projection, the output channels of the intermediate transformations Tₗ, and the associated attention heads are removed simultaneously. This maintains hardware‑friendly memory layouts and maximizes actual speed‑up.

Interleaved Inference Orchestration (I²O).

Even a lightweight pruning step adds computation, which is problematic for VLA models that process a single sample at a time. I²O addresses this by exploiting “FLOPs bubbles” – idle periods that naturally appear in the VLA pipeline due to memory stalls or synchronization points. The framework splits execution into two parallel streams: an Inference Stream that continuously generates actions, and a Pruning Stream that computes new sparsity masks when triggered. The Pruning Stream runs asynchronously within the FLOPs bubbles, so its latency is effectively hidden from the end‑to‑end response time. The updated mask is applied starting from the next frame, guaranteeing that pruning never blocks action generation.

Experimental Evaluation.

EcoVLA was tested on two simulators (LIBERO and SIMPLER) and three state‑of‑the‑art VLA models (OpenVLA‑OFT, π0.5, CogACT). Results show:

- Up to 1.60× speed‑up with only a 0.4 % drop in task success rate when using EcoVLA alone.

- When combined with token pruning (FastV with 50 % token removal), EcoVLA raises speed‑up to 2.18× while limiting performance loss to 0.5 %, effectively closing the gap introduced by token pruning alone.

- Real‑world validation on a 7‑DoF Kinova Gen3 robot confirms that the simulated gains translate to physical systems, with smooth, jitter‑free control.

Significance and Limitations.

EcoVLA demonstrates that a training‑free, environment‑aware pruning strategy can adapt sparsity patterns on the fly without sacrificing real‑time responsiveness. Its plug‑and‑play nature allows it to be stacked with other acceleration techniques, offering a modular path toward efficient embodied AI. The current work focuses on channel‑level pruning; extending the approach to block‑ or head‑level sparsity, and integrating multimodal sensors (depth, lidar) are promising directions for future research.

In summary, EcoVLA provides a practical solution for deploying large VLA models on resource‑constrained robotic platforms, achieving substantial latency reductions while preserving task performance, and paving the way for more capable, real‑time embodied agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment