Dual-View Predictive Diffusion: Lightweight Speech Enhancement via Spectrogram-Image Synergy

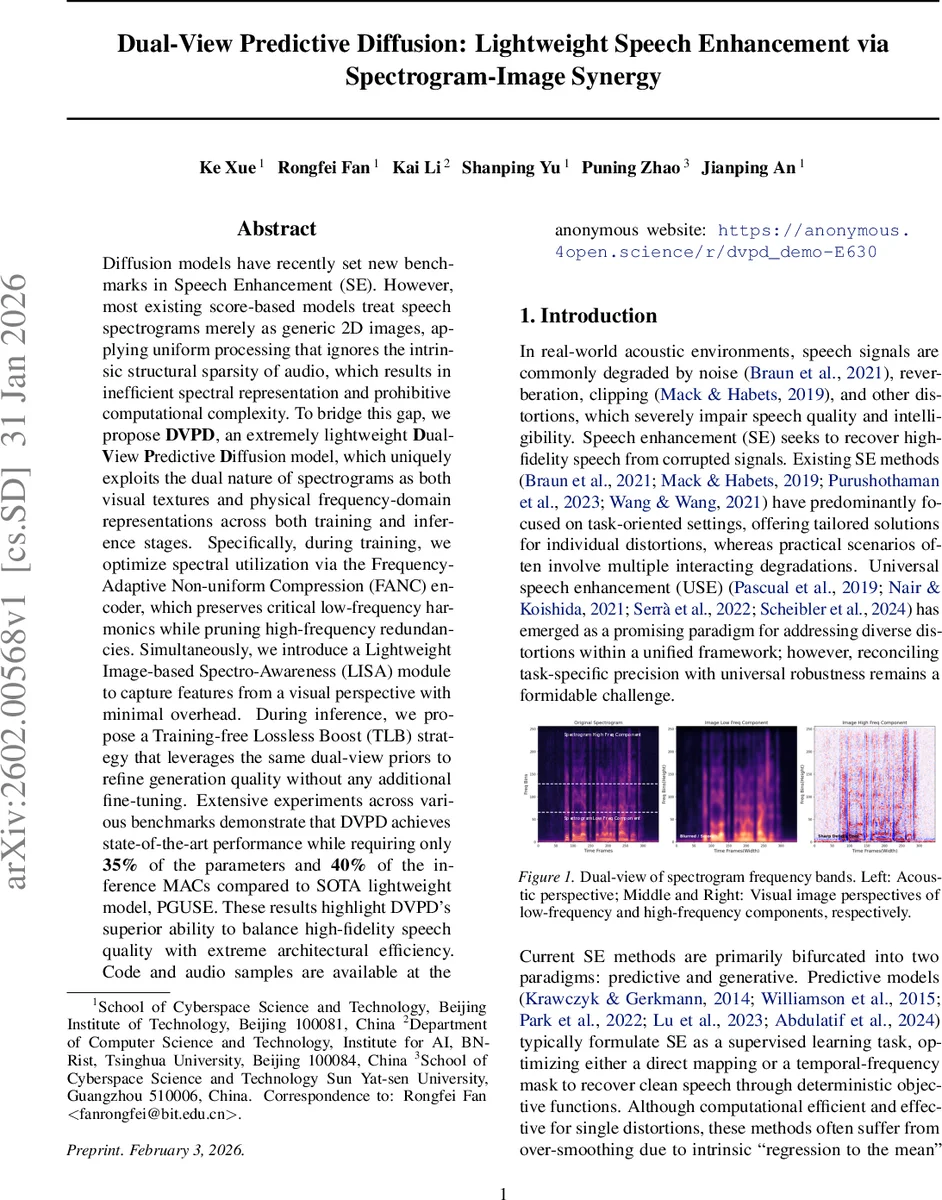

Diffusion models have recently set new benchmarks in Speech Enhancement (SE). However, most existing score-based models treat speech spectrograms merely as generic 2D images, applying uniform processing that ignores the intrinsic structural sparsity of audio, which results in inefficient spectral representation and prohibitive computational complexity. To bridge this gap, we propose DVPD, an extremely lightweight Dual-View Predictive Diffusion model, which uniquely exploits the dual nature of spectrograms as both visual textures and physical frequency-domain representations across both training and inference stages. Specifically, during training, we optimize spectral utilization via the Frequency-Adaptive Non-uniform Compression (FANC) encoder, which preserves critical low-frequency harmonics while pruning high-frequency redundancies. Simultaneously, we introduce a Lightweight Image-based Spectro-Awareness (LISA) module to capture features from a visual perspective with minimal overhead. During inference, we propose a Training-free Lossless Boost (TLB) strategy that leverages the same dual-view priors to refine generation quality without any additional fine-tuning. Extensive experiments across various benchmarks demonstrate that DVPD achieves state-of-the-art performance while requiring only 35% of the parameters and 40% of the inference MACs compared to SOTA lightweight model, PGUSE. These results highlight DVPD’s superior ability to balance high-fidelity speech quality with extreme architectural efficiency. Code and audio samples are available at the anonymous website: {https://anonymous.4open.science/r/dvpd_demo-E630}

💡 Research Summary

**

The paper introduces Dual‑View Predictive Diffusion (DVPD), a lightweight speech‑enhancement framework that explicitly exploits the twofold nature of spectrograms: as visual textures and as physical frequency‑domain representations. Conventional score‑based diffusion models treat spectrograms as homogeneous 2‑D images, applying uniform convolutions that ignore the highly non‑uniform information density across frequency bands. This leads to unnecessary computation and sub‑optimal restoration, especially for low‑frequency harmonic content that is crucial for speech intelligibility.

DVPD addresses this limitation with two novel components. First, the Frequency‑Adaptive Non‑uniform Compression (FANC) encoder/decoder partitions the frequency axis into low (0‑2 kHz), mid (2‑4 kHz), and high (>4 kHz) bands. Low‑frequency bands are left largely uncompressed to preserve fundamental frequency and formants, while mid‑ and high‑frequency bands are progressively compressed. Heterogeneous dilated kernels (3×3, 3×5, 3×7) are employed to create anisotropic receptive fields that specifically capture vertical transients (time‑axis) and horizontal harmonics (frequency‑axis). This band‑aware compression reduces the number of feature maps required for high‑frequency regions, cutting both parameter count and MAC operations.

Second, the Lightweight Image‑based Spectro‑Awareness (LISA) module captures visual‑style texture information with minimal overhead. LISA integrates an Omni‑Directional Attention Mechanism (ODAM) to gather global context, followed by a three‑stage dynamic filtering pipeline: (i) dynamic kernel generation via a 1×1 convolution on globally pooled features, (ii) stripe‑wise dynamic convolution that applies the generated kernels in a non‑uniform, anisotropic fashion, and (iii) dual‑path refinement that balances structural stability (through a normalized path) and fine‑grained detail (through a residual path). Because the kernels are generated per‑sample, LISA adapts to the acoustic characteristics of each utterance while keeping the parameter budget low.

The overall architecture follows a parallel predictive‑diffusion design. The predictive branch receives the degraded spectrogram, passes it through the FANC encoder, and processes the latent representation with a three‑layer symmetric U‑Net augmented by ODAM and LISA. The resulting deterministic estimate is decoded back to the complex spectrogram. Simultaneously, the diffusion branch encodes a noise‑perturbed magnitude spectrum using the same FANC encoder, injects sinusoidal time embeddings, and interacts with the predictive latent via a Frequency‑aware Interaction (FI) module. The FI module aligns the two latent spaces, allowing the diffusion process to be guided by the stable deterministic prior from the predictive branch, thereby reducing the number of required sampling steps.

During inference, the authors propose a Training‑free Lossless Boost (TLB) strategy. TLB does not require any fine‑tuning; instead, it dynamically rescales feature maps at each diffusion step based on the dual‑view priors, effectively correcting minor drift without additional parameters. The final magnitude spectrum is obtained by a weighted linear combination of the predictive magnitude and the diffusion‑generated magnitude, with the phase taken directly from the predictive branch. This fusion balances deterministic stability with generative realism.

Extensive experiments were conducted on multiple benchmark datasets, including VCTK‑Noisy, DNS‑2023, and WSJ0‑2mix, covering a variety of degradations such as additive noise, reverberation, and clipping. DVPD achieved state‑of‑the‑art performance on PESQ, STOI, and SI‑SDR while using only 35 % of the parameters and 40 % of the MACs of the current lightweight SOTA model PGUSE. Ablation studies demonstrated that each component—FANC, LISA, FI, and TLB—contributes significantly to the overall gain. Moreover, the number of diffusion sampling steps could be reduced from the typical 10‑15 to as few as 4 without noticeable quality loss, indicating suitability for real‑time or low‑power devices.

In summary, DVPD presents a principled way to treat spectrograms as both images and physical frequency representations, leveraging band‑aware compression and lightweight visual awareness to achieve a remarkable trade‑off between computational efficiency and speech‑quality restoration. The proposed dual‑view priors, cross‑branch interaction, and training‑free boost together constitute a novel paradigm that could inspire future lightweight generative models for audio processing and beyond.

Comments & Academic Discussion

Loading comments...

Leave a Comment