SRL Proxemics: Spatial Guidelines for Supernumerary Robotic Limbs in Near-Body Interactions

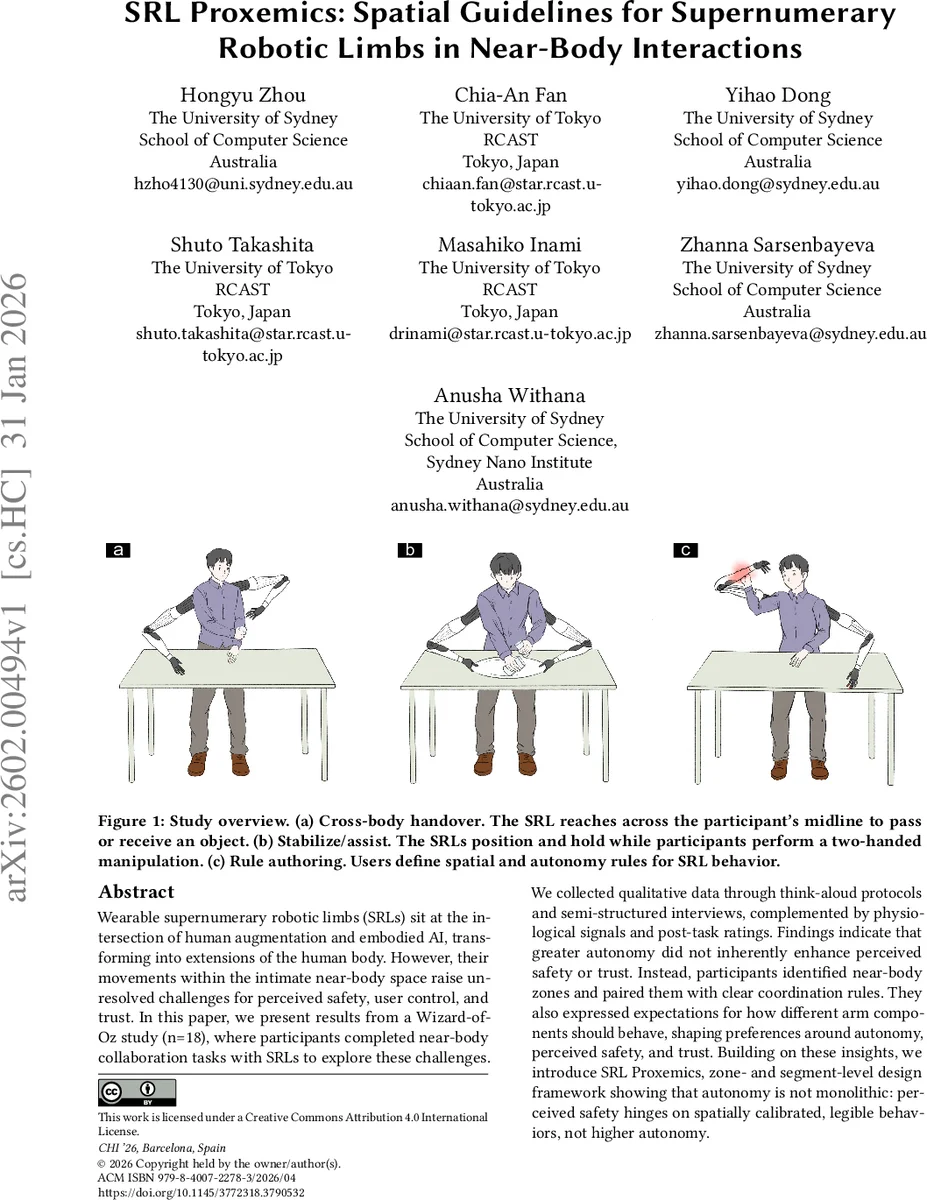

Wearable supernumerary robotic limbs (SRLs) sit at the intersection of human augmentation and embodied AI, transforming into extensions of the human body. However, their movements within the intimate near-body space raise unresolved challenges for perceived safety, user control, and trust. In this paper, we present results from a Wizard-of-Oz study (n=18), where participants completed near-body collaboration tasks with SRLs to explore these challenges. We collected qualitative data through think-aloud protocols and semi-structured interviews, complemented by physiological signals and post-task ratings. Findings indicate that greater autonomy did not inherently enhance perceived safety or trust. Instead, participants identified near-body zones and paired them with clear coordination rules. They also expressed expectations for how different arm components should behave, shaping preferences around autonomy, perceived safety, and trust. Building on these insights, we introduce SRL Proxemics, a zone- and segment-level design framework showing that autonomy is not monolithic: perceived safety hinges on spatially calibrated, legible behaviors, not higher autonomy.

💡 Research Summary

The paper investigates how wearable supernumerary robotic limbs (SRLs) should behave when operating in the intimate peripersonal space surrounding a human body. While prior work has demonstrated the mechanical feasibility of SRLs and their potential to augment industrial, medical, and everyday tasks, little attention has been paid to the user’s sense of assurance (perceived safety, predictability, trust, and embodiment), especially when the robot moves close to vulnerable body regions.

To fill this gap, the authors conducted a mixed‑methods Wizard‑of‑Oz study with 18 participants. Participants performed two near‑body collaboration tasks (cross‑body handover and stabilization/assist) while a hidden experimenter controlled a physical on‑body SRL prototype. The study combined think‑aloud protocols, semi‑structured interviews, post‑task questionnaires (measuring perceived safety, trust, and embodiment), and event‑locked skin‑conductance responses (SCR) to capture both subjective and physiological reactions.
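
The summary does not describe how the skin-conductance signal was processed, so the following is only a minimal sketch of what an "event-locked" SCR measure can look like: for each near-body entry event, the peak in a post-event window is compared with a short pre-event baseline. The function name, the 1 s baseline, and the 1 to 5 s response window are illustrative assumptions, not the authors' pipeline.

```python
def event_locked_scr(signal, fs, event_times, baseline_s=1.0, window_s=(1.0, 5.0)):
    """Return one baseline-corrected SCR amplitude per event (same units as signal).

    signal      : list of skin-conductance samples
    fs          : sampling rate in Hz
    event_times : event onsets in seconds (e.g. SRL entering a zone)
    baseline_s  : assumed pre-event baseline length in seconds
    window_s    : assumed (start, end) of the response window after the event
    Events without a complete baseline or response window yield None.
    """
    amplitudes = []
    for t in event_times:
        b0 = round((t - baseline_s) * fs)   # baseline start index
        b1 = round(t * fs)                  # event onset index
        w0 = round((t + window_s[0]) * fs)  # response window start
        w1 = round((t + window_s[1]) * fs)  # response window end
        if b0 < 0 or w1 > len(signal) or b1 <= b0 or w1 <= w0:
            amplitudes.append(None)
            continue
        baseline = sum(signal[b0:b1]) / (b1 - b0)
        peak = max(signal[w0:w1])
        # Clamp at zero: a window with no rise counts as no response.
        amplitudes.append(max(0.0, peak - baseline))
    return amplitudes
```

Keeping one entry per event, including `None` for incomplete windows, leaves the amplitudes aligned with the event list so they can be compared across autonomy conditions.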

Three research questions guided the work: (RQ1) What zone‑ and component‑specific boundaries, motion strategies, and cueing requirements do users define to feel assured in peripersonal space? (RQ2) How do high‑autonomy (fully autonomous baseline) versus participant‑defined rule conditions affect physiological arousal during standardized near‑body entries? (RQ3) How do these autonomy arrangements shape subjective assurance and embodiment?

Key findings:

- Participants spontaneously partitioned their bodies into “high‑sensitivity zones” (head, face, torso) and “low‑sensitivity zones” (forearms, hands, legs). In high‑sensitivity zones they demanded tighter control, explicit confirmation, and slower, more predictable motions. In low‑sensitivity zones they were comfortable granting the SRL greater autonomy.

- Greater autonomy did not automatically increase perceived safety or trust. When the SRL entered a high‑sensitivity zone autonomously, participants reported higher anxiety and lower trust, and they exhibited elevated SCR, indicating physiological stress.

- Expectations differed across SRL components. The base segment was expected to approach smoothly and at low speed; joints were to respect limited angular ranges; the wrist and gripper were required to provide clear multimodal cues (visual, auditory, haptic) before contact, effectively “warning” the user.

Based on these insights the authors propose SRL Proxemics, a design framework that treats autonomy as a spatially variable property rather than a monolithic setting. The framework consists of three intertwined layers:

- Zone‑specific autonomy gradients – autonomy levels adapt dynamically according to the body region currently being approached.

- Component‑level motion constraints – each limb segment has its own speed, acceleration, and trajectory limits that reflect its proximity to the user and its functional role.

- Adaptive cueing strategies – multimodal signals (motor hum attenuation, vibration, LEDs, spoken prompts) are triggered contextually to make the SRL’s intent legible.
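
The three layers above can be sketched as a single lookup that takes the body region being approached and the limb segment doing the approaching, and returns an autonomy setting, a speed cap, and the cues to emit. This is a hypothetical illustration, not the authors' implementation: the region-to-zone grouping follows the findings reported in this summary, while the names (`plan_behavior`, `Behavior`), the speed values, and the cue labels are assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Zone(Enum):
    HIGH_SENSITIVITY = "high"
    LOW_SENSITIVITY = "low"


class Segment(Enum):
    BASE = "base"
    JOINT = "joint"
    WRIST = "wrist"
    GRIPPER = "gripper"


# Region grouping as reported in the summary's key findings.
REGION_TO_ZONE = {
    "head": Zone.HIGH_SENSITIVITY,
    "face": Zone.HIGH_SENSITIVITY,
    "torso": Zone.HIGH_SENSITIVITY,
    "forearm": Zone.LOW_SENSITIVITY,
    "hand": Zone.LOW_SENSITIVITY,
    "leg": Zone.LOW_SENSITIVITY,
}

# Hypothetical speed caps in m/s; the summary gives no numeric limits.
SPEED_CAP = {
    Zone.HIGH_SENSITIVITY: 0.05,
    Zone.LOW_SENSITIVITY: 0.20,
}


@dataclass(frozen=True)
class Behavior:
    autonomous: bool   # may the SRL proceed without user confirmation?
    max_speed: float   # speed cap for this approach, in m/s
    cues: tuple        # cue labels to emit before moving or touching


def plan_behavior(region: str, segment: Segment) -> Behavior:
    """Combine zone-level autonomy, a speed cap, and cueing."""
    zone = REGION_TO_ZONE[region]
    # Layer 1: autonomy depends on the zone being approached.
    autonomous = zone is Zone.LOW_SENSITIVITY
    cues = []
    # Layer 3: wrist and gripper warn before contact.
    if segment in (Segment.WRIST, Segment.GRIPPER):
        cues.append("pre_contact_vibration")
    # High-sensitivity zones additionally ask for confirmation.
    if zone is Zone.HIGH_SENSITIVITY:
        cues.append("confirmation_prompt")
    # Layer 2: the speed cap here varies by zone only; a fuller
    # version would also vary it by segment.
    return Behavior(autonomous, SPEED_CAP[zone], tuple(cues))
```

For example, `plan_behavior("face", Segment.GRIPPER)` yields a non-autonomous, slow approach with both a pre-contact warning and a confirmation prompt, whereas `plan_behavior("forearm", Segment.BASE)` proceeds autonomously with no cues.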

Practical design recommendations derived from the study include:

- Embed a user‑defined “safety map” that continuously tracks body pose and determines the current zone.

- Require explicit confirmation (button press, voice command) and provide predictive visualizations when the SRL plans to enter a high‑sensitivity zone.

- Use pre‑contact warnings (e.g., a brief vibration or tone) for wrist and gripper actions to reduce surprise.

- Offer an intuitive rule‑authoring interface so users can personalize zone boundaries, autonomy levels, and cue preferences, accommodating individual and cultural differences.
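
As a rough illustration of the recommendations above, a user-defined safety map can be modelled as a table of per-region rules plus a gate that blocks entry until the user confirms. The class and method names (`SafetyMap`, `set_rule`, `may_enter`, `cue_for`) are hypothetical, pose tracking is left out, and defaulting unknown regions to "confirmation required" is an assumption chosen to be conservative.

```python
from dataclasses import dataclass, field


@dataclass
class ZoneRule:
    requires_confirmation: bool  # must the user approve entry first?
    cue: str                     # e.g. "vibration", "tone", "none"


@dataclass
class SafetyMap:
    """User-editable mapping from body region to an entry rule."""
    rules: dict = field(default_factory=dict)
    # Assumed conservative default for regions the user has not configured.
    default: ZoneRule = field(default_factory=lambda: ZoneRule(True, "tone"))

    def set_rule(self, region: str, requires_confirmation: bool, cue: str) -> None:
        """Rule authoring: the user personalizes one region."""
        self.rules[region] = ZoneRule(requires_confirmation, cue)

    def _rule(self, region: str) -> ZoneRule:
        return self.rules.get(region, self.default)

    def may_enter(self, region: str, confirmed: bool) -> bool:
        """Allow entry if confirmed, or if the region needs no confirmation."""
        return confirmed or not self._rule(region).requires_confirmation

    def cue_for(self, region: str) -> str:
        """Cue to play before the SRL acts in this region."""
        return self._rule(region).cue
```

A user who is comfortable with autonomous motion near the forearm but not near the face would call `set_rule("forearm", False, "none")` and `set_rule("face", True, "vibration")`; a controller would then check `may_enter` before each near-body entry.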

The authors argue that for SRLs to be accepted as true extensions of the body, designers must move beyond guaranteeing physical safety to engineering cognitive safety: the assurance that the robot will behave predictably and remain under the user’s ultimate control. Spatially calibrated autonomy, combined with legible multimodal feedback, is the key to achieving this.

In sum, the paper provides empirical evidence that perceived safety and trust in wearable robotic limbs are highly context‑dependent, proposes a concrete proxemic framework for spatially adaptive autonomy, and outlines actionable guidelines for future SRL hardware and control software. These contributions advance the field toward safe, trustworthy, and embodied human‑augmentation technologies.
