CHOIR: A Chatbot-mediated Organizational Memory Leveraging Communication in University Research Labs

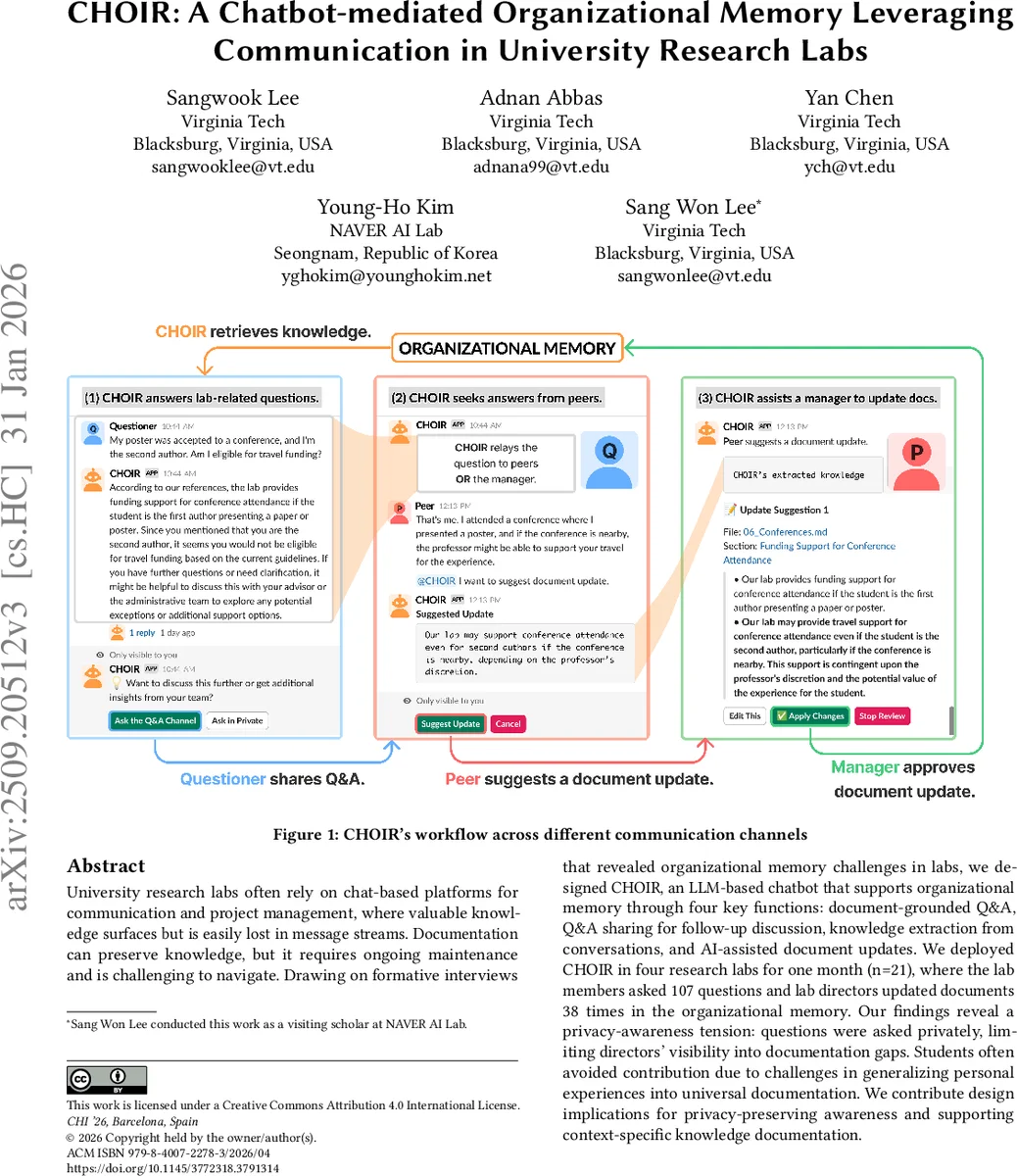

University research labs often rely on chat-based platforms for communication and project management, where valuable knowledge surfaces but is easily lost in message streams. Documentation can preserve knowledge, but it requires ongoing maintenance and is challenging to navigate. Drawing on formative interviews that revealed organizational memory challenges in labs, we designed CHOIR, an LLM-based chatbot that supports organizational memory through four key functions: document-grounded Q&A, Q&A sharing for follow-up discussion, knowledge extraction from conversations, and AI-assisted document updates. We deployed CHOIR in four research labs for one month (n=21), where the lab members asked 107 questions and lab directors updated documents 38 times in the organizational memory. Our findings reveal a privacy-awareness tension: questions were asked privately, limiting directors’ visibility into documentation gaps. Students often avoided contribution due to challenges in generalizing personal experiences into universal documentation. We contribute design implications for privacy-preserving awareness and supporting context-specific knowledge documentation.

💡 Research Summary

University research labs increasingly rely on instant‑messaging platforms such as Slack and Microsoft Teams for daily coordination, yet the knowledge that surfaces in these channels is often lost because messages are stored chronologically without semantic structure. Traditional documentation (handbooks, wikis, Google Docs) can preserve this knowledge, but maintaining such artifacts requires sustained effort and suffers from low adoption, especially among faculty who are already over‑burdened. To understand the specific challenges faced by labs, the authors first conducted formative interviews with 15 faculty members and graduate students. The interviews revealed three core pain points: (1) valuable tacit knowledge is embedded in chat streams but is hard to retrieve; (2) existing documentation is rarely updated because of time constraints and lack of incentives; and (3) there is a tension between privacy (students do not want to expose “basic” questions) and the need for organizational awareness of knowledge gaps.

Guided by these findings, the authors designed CHOIR (Chat‑based Helper for Organizational Intelligence Repository), an LLM‑powered chatbot that lives inside Slack. CHOIR implements four tightly coupled functions:

-

Document‑grounded Q&A – When a user asks a question, CHOIR performs Retrieval‑Augmented Generation (RAG) over a curated corpus of lab documents (handbooks, policy files, project reports). The large language model returns an answer that is explicitly grounded in cited passages, improving trust and reducing hallucination.

-

Q&A Sharing for Follow‑up Discussion – If the answer is insufficient, the user can broadcast the Q&A to a public channel, inviting peers to add context or alternative solutions. This mechanism transforms private queries into shared knowledge, making gaps visible to the whole lab.

-

Knowledge Extraction from Conversation – CHOIR continuously monitors Slack conversations, using the LLM to identify new facts, tips, or procedural steps that emerge organically. Extracted snippets are stored with metadata and placed in a review queue; lab directors approve them before they become part of the official organizational memory.

-

AI‑assisted Document Update – When a document needs revision, CHOIR helps locate the relevant section, suggests edits based on recent conversational extracts, and allows the director to edit, approve, or reject the changes. The LLM also ensures stylistic consistency across the corpus.

The system was deployed for one month in four distinct research labs, involving 21 participants (students, post‑docs, and faculty). During the field study, users asked a total of 107 questions, and lab directors performed 38 document updates. Interaction logs showed that most questions originated as private direct messages; only about 23 % of the Q&As were shared publicly. Post‑deployment interviews highlighted a “privacy‑awareness tension”: students preferred private queries to avoid embarrassment or perceived burden on peers, yet this secrecy prevented directors from recognizing recurring knowledge gaps. Moreover, students expressed reluctance to contribute to documentation because translating personal, context‑specific experiences into generalized, “universal” statements felt awkward and time‑consuming.

From these observations, the authors derive several design implications. First, systems should mediate the privacy‑awareness trade‑off by aggregating anonymized question statistics (topic frequency, repeat rates) and surfacing them only to administrators, thereby preserving individual anonymity while still revealing systemic gaps. Second, contribution incentives are crucial; visible acknowledgment (badges, citation in lab handbooks, or inclusion in annual reports) can motivate students to document their insights. Third, the boundary of what is considered “documentable” should be expanded through “context snapshots” that automatically capture code snippets, parameter settings, or data links mentioned in chat and embed them as inline references, reducing the cognitive load of manual abstraction.

In sum, CHOIR demonstrates that a conversational, LLM‑enhanced approach can lower the technical barriers to building and sustaining an organizational memory in research labs, but success hinges on addressing enduring social challenges—privacy concerns, incentive structures, and the difficulty of generalizing personal experience. The paper contributes (1) an empirical characterization of organizational memory challenges in academic labs, (2) a socio‑technical workflow that integrates conversational Q&A, knowledge extraction, and AI‑assisted documentation, and (3) real‑world usage data and design lessons that inform future AI‑mediated knowledge management systems. Future work should explore longitudinal effects on knowledge retention, scalability across larger, more heterogeneous organizations, and robust verification mechanisms to mitigate LLM hallucinations in critical scientific contexts.

Comments & Academic Discussion

Loading comments...

Leave a Comment