A Multi-Agent Framework for Mitigating Dialect Biases in Privacy Policy Question-Answering Systems

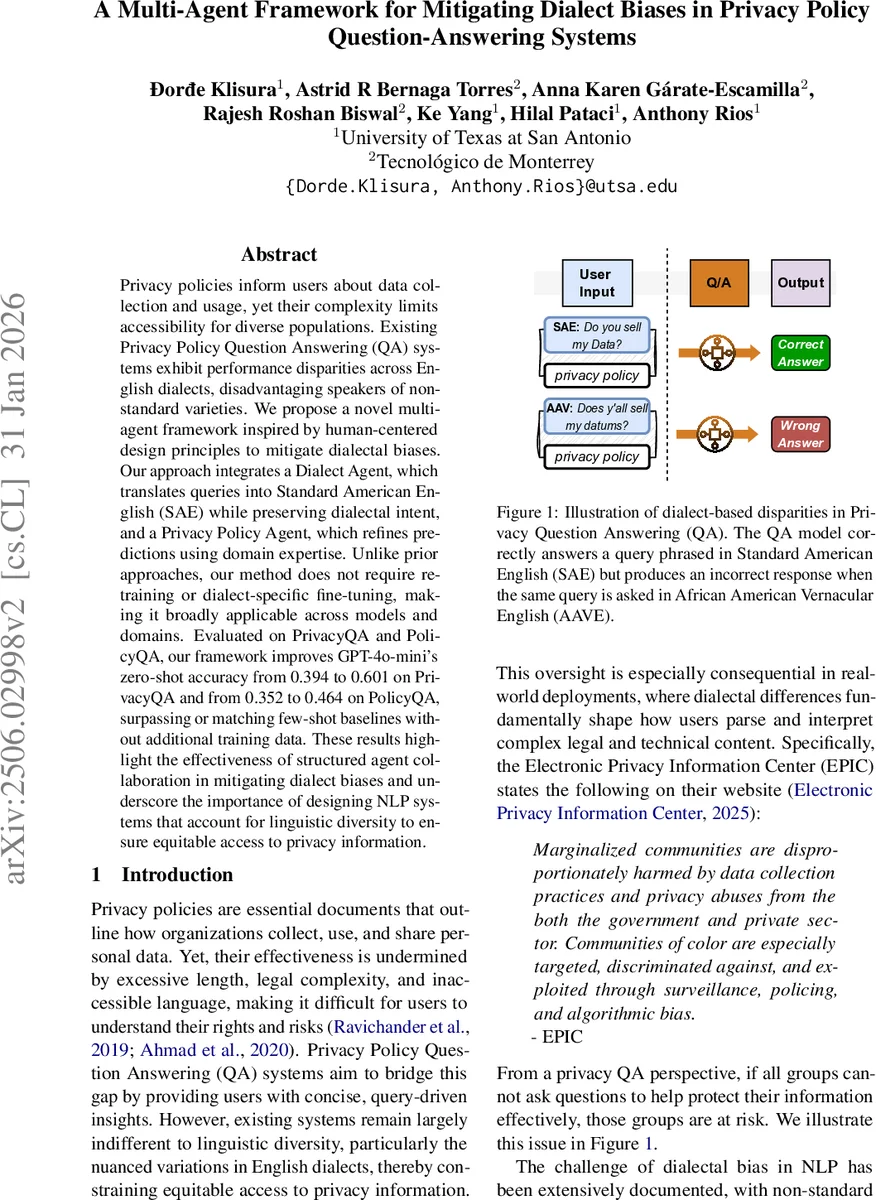

Privacy policies inform users about data collection and usage, yet their complexity limits accessibility for diverse populations. Existing Privacy Policy Question Answering (QA) systems exhibit performance disparities across English dialects, disadvantaging speakers of non-standard varieties. We propose a novel multi-agent framework inspired by human-centered design principles to mitigate dialectal biases. Our approach integrates a Dialect Agent, which translates queries into Standard American English (SAE) while preserving dialectal intent, and a Privacy Policy Agent, which refines predictions using domain expertise. Unlike prior approaches, our method does not require retraining or dialect-specific fine-tuning, making it broadly applicable across models and domains. Evaluated on PrivacyQA and PolicyQA, our framework improves GPT-4o-mini’s zero-shot accuracy from 0.394 to 0.601 on PrivacyQA and from 0.352 to 0.464 on PolicyQA, surpassing or matching few-shot baselines without additional training data. These results highlight the effectiveness of structured agent collaboration in mitigating dialect biases and underscore the importance of designing NLP systems that account for linguistic diversity to ensure equitable access to privacy information.

💡 Research Summary

Privacy policies are essential for informing users about data collection and usage, yet their length and legal language make them difficult to understand for many people. Recent Privacy Policy Question‑Answering (QA) systems aim to bridge this gap by providing concise answers to user queries. However, these systems have been shown to perform significantly worse when the queries are phrased in non‑standard English dialects such as African American Vernacular English (AAVE), Chicano English, or other regional varieties. This disparity creates an equity problem: speakers of marginalized dialects receive poorer assistance, potentially exposing them to greater privacy risks.

The paper proposes a lightweight, prompt‑based multi‑agent framework that mitigates dialect bias without any model retraining or large dialect‑specific datasets. The framework consists of two specialized large language model (LLM) agents that communicate through structured prompts:

-

Dialect Agent – receives the user’s original query together with a concise description of the relevant dialect’s phonological, grammatical, lexical, and cultural characteristics. Using a prompt that frames the model as an “expert linguist” for that dialect, the agent translates the query into Standard American English (SAE) while preserving the original intent and cultural nuance. The translation is also used later for validation.

-

Privacy Policy Agent – takes the SAE‑translated question and the relevant snippet of the privacy policy. Prompted as a “privacy policy expert,” it extracts a concise, fact‑based answer directly from the policy text and provides a brief rationale linking the answer to the policy excerpt.

After the Privacy Policy Agent produces its answer, the Dialect Agent is fed the original dialectal query, the policy snippet, and the generated answer. It evaluates whether the answer captures the user’s intent and flags any mismatch. If a mismatch is detected, the Dialect Agent signals disagreement and supplies concrete suggestions for refinement. The Privacy Policy Agent then revises its answer accordingly, and the Dialect Agent performs a final check before delivering the response to the user.

Formally, for a dialect (d) and a policy snippet (p), a QA model (f) yields an answer (A = f(p, q_d)). Accuracy on dialect (d) is denoted (\Phi_d(f)). The overall disparity is (\Delta(f) = \max_{i,j} |\Phi_{d_i}(f) - \Phi_{d_j}(f)|). The goal of the framework (F) is to minimize (\Delta) while maintaining or improving average accuracy, all without altering the underlying model parameters.

Experimental Setup

The authors evaluate on two benchmark datasets, PrivacyQA and PolicyQA, each augmented with dialectal variations generated via the Multi‑VALUE stress‑test framework covering 50 English dialects. The baseline is the zero‑shot GPT‑4o‑mini model, which exhibits notable performance gaps across dialects. The proposed multi‑agent pipeline is applied on top of the same base model.

Results

- On PrivacyQA, zero‑shot accuracy rises from 0.394 to 0.601 (a 0.207 absolute gain).

- On PolicyQA, accuracy improves from 0.352 to 0.464 (a 0.112 gain).

- The maximum F1 difference between the best‑ and worst‑performing dialects drops by up to 82 %, indicating a substantial reduction in bias.

- Compared with few‑shot prompting (e.g., 5‑shot), the multi‑agent approach matches or exceeds performance while requiring no additional training examples.

Contributions

- First systematic quantification of dialect bias in privacy‑policy QA.

- Introduction of a prompt‑driven, two‑agent architecture that injects dialect knowledge at inference time, eliminating the need for costly fine‑tuning.

- Comprehensive ablation studies and error analysis that clarify the distinct impact of translation quality, policy reasoning, and the feedback loop.

Limitations and Future Work

The Dialect Agent relies on manually crafted dialect descriptors, which may not scale to all existing or emerging varieties. The current pipeline handles a single turn (translation → answer → validation) and does not yet support multi‑turn dialogues or more complex user intents. Future research directions include automating dialect‑to‑SAE mappings, expanding the agent suite to include planners or critics for dynamic negotiation, and applying the framework to other high‑stakes domains such as legal or medical information retrieval.

Conclusion

By orchestrating two specialized LLM agents through carefully designed prompts, the paper demonstrates that dialect‑related fairness issues in privacy‑policy QA can be mitigated effectively without any model retraining. The approach yields sizable accuracy gains, dramatically narrows performance gaps across dialects, and offers a cost‑effective, easily deployable solution. Its success suggests that similar multi‑agent, prompt‑centric designs could be valuable for addressing linguistic bias across a wide range of domain‑specific NLP applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment