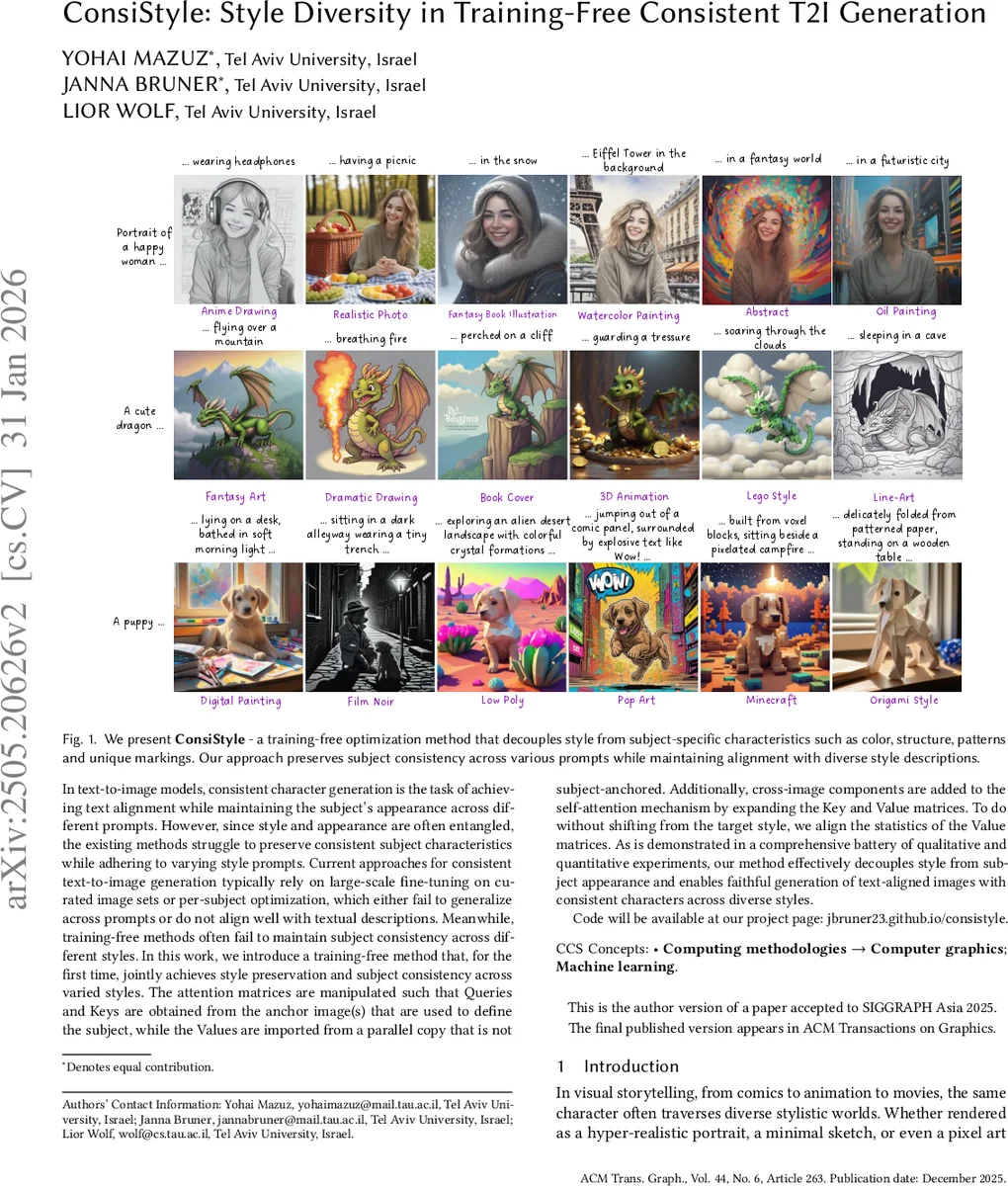

ConsiStyle: Style Diversity in Training-Free Consistent T2I Generation

In text-to-image models, consistent character generation is the task of achieving text alignment while maintaining the subject’s appearance across different prompts. However, since style and appearance are often entangled, existing methods struggle to preserve consistent subject characteristics while adhering to varying style prompts. Current approaches for consistent text-to-image generation typically rely on large-scale fine-tuning on curated image sets or per-subject optimization, which either fail to generalize across prompts or do not align well with textual descriptions. Meanwhile, training-free methods often fail to maintain subject consistency across different styles. In this work, we introduce a training-free method that, for the first time, jointly achieves style preservation and subject consistency across varied styles. The attention matrices are manipulated such that Queries and Keys are obtained from the anchor image(s) that are used to define the subject, while the Values are imported from a parallel copy that is not subject-anchored. Additionally, cross-image components are added to the self-attention mechanism by expanding the Key and Value matrices. To do so without deviating from the target style, we align the statistics of the Value matrices. As demonstrated in a comprehensive battery of qualitative and quantitative experiments, our method effectively decouples style from subject appearance and enables faithful generation of text-aligned images with consistent characters across diverse styles.

💡 Research Summary

ConsiStyle tackles a long‑standing problem in text‑to‑image diffusion: generating a set of images that follow different style prompts while keeping the underlying subject (e.g., a character or object) visually identical across all outputs. Existing solutions fall into two camps. Fine‑tuned personalization methods such as DreamBooth or textual inversion can lock in a subject’s appearance but struggle when the style description changes, often producing results that do not match the requested style. Training‑free approaches (Prompt‑to‑Prompt, Cross‑Image Attention, ConsiStory, etc.) can freely vary style but lack mechanisms to preserve subject identity across prompts.

The authors propose a novel, training‑free pipeline that manipulates the self‑attention matrices of a diffusion model (specifically SDXL) to separate style from identity. The method proceeds in three stages. First, a vanilla SDXL pass is performed on the desired prompts, and the Value matrices (V_sd) from each self‑attention layer are stored; these matrices encode fine‑grained texture, color, and lighting information that define the target style. Second, a correspondence map is computed using DIFT, which aligns patches belonging to the subject across the batch of images. This map provides the indices (Cα(sα)) needed to transfer subject‑specific queries and keys without mixing style information. Third, during the final diffusion run, three operations are applied:

- Query/Key Transfer – For each image i, the query and key entries corresponding to the subject region are replaced by those from an anchor image a, using the DIFT mapping (Q_i
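The query/key transfer step can be illustrated with a small NumPy sketch. This is not the authors' implementation: the function names, the `subject_idx` and `corr` index arrays (hypothetical stand-ins for the DIFT correspondence map), and the toy tensor shapes are all assumptions made for illustration. The sketch only shows the general idea that subject tokens take their Queries and Keys from the anchor image, while the Values still come from the vanilla, non-anchored pass.

```python
import numpy as np


def transfer_qk(q_i, k_i, q_a, k_a, subject_idx, corr):
    """Replace the subject-token queries/keys of image i with the anchor's.

    q_i, k_i, q_a, k_a: (tokens, dim) arrays for image i and anchor a.
    subject_idx: indices of subject tokens in image i (hypothetical input).
    corr: for each entry of subject_idx, the matched anchor token index
          (a stand-in for the DIFT correspondence map).
    """
    q_out, k_out = q_i.copy(), k_i.copy()
    q_out[subject_idx] = q_a[corr]
    k_out[subject_idx] = k_a[corr]
    return q_out, k_out


def attention(q, k, v):
    """Plain scaled dot-product attention with a numerically stable softmax."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v


# Toy example: 6 tokens, 4 channels (sizes chosen arbitrarily).
rng = np.random.default_rng(0)
q_i, k_i, q_a, k_a, v_sd = (rng.normal(size=(6, 4)) for _ in range(5))
subject_idx = np.array([1, 3])  # subject tokens in image i (assumed)
corr = np.array([4, 0])         # their matches in the anchor (assumed)

q_out, k_out = transfer_qk(q_i, k_i, q_a, k_a, subject_idx, corr)
# Values come from the vanilla pass (v_sd), so style is not taken from the anchor.
out = attention(q_out, k_out, v_sd)
```

Because only the rows listed in `subject_idx` are overwritten, background tokens keep their own Queries and Keys in this sketch; how the actual method treats the background and the remaining two operations is not recoverable from the truncated text above.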
