Instance Temperature Knowledge Distillation

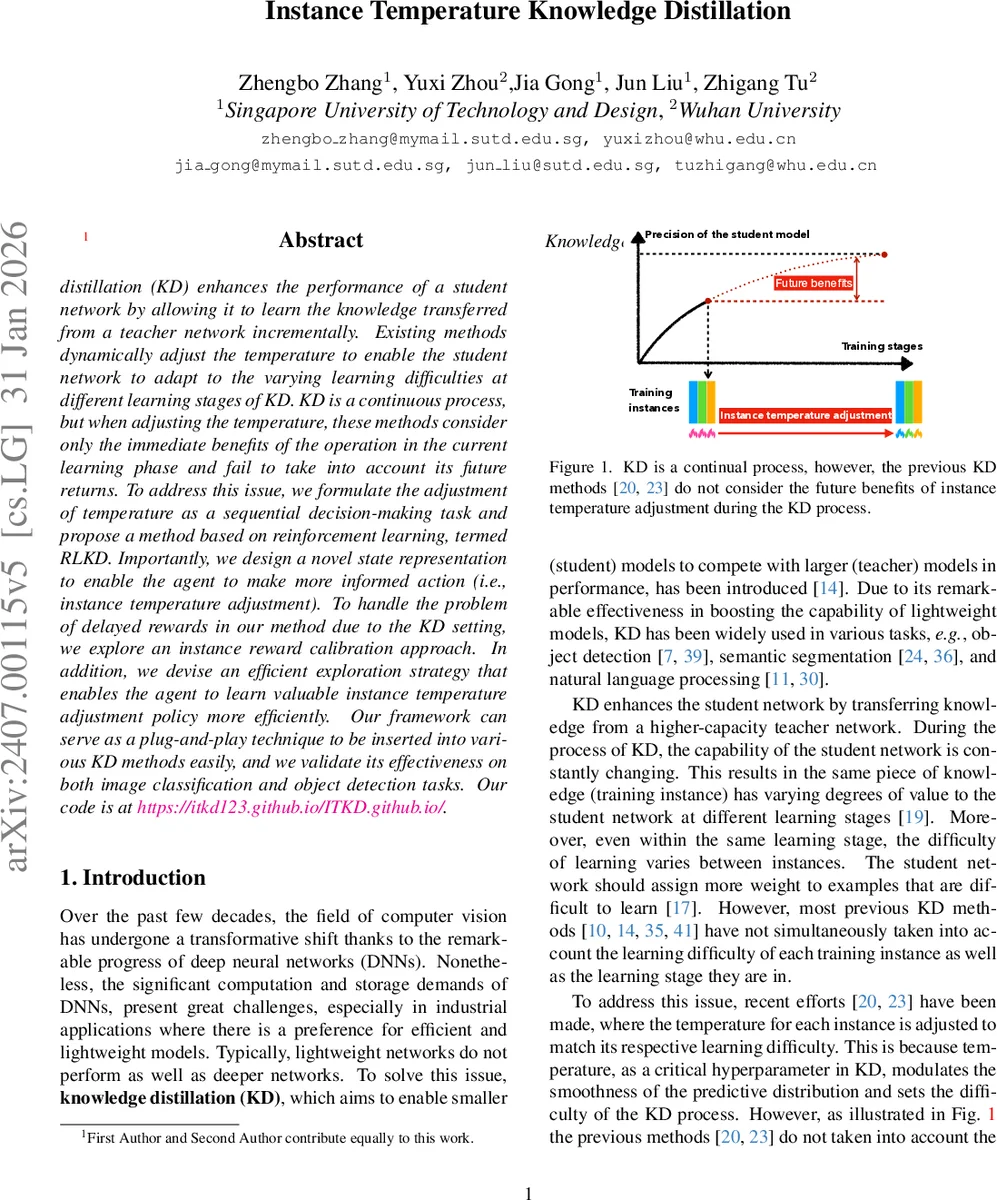

Knowledge distillation (KD) enhances the performance of a student network by allowing it to learn the knowledge transferred from a teacher network incrementally. Existing methods dynamically adjust the temperature to enable the student network to adapt to the varying learning difficulties at different learning stages of KD. KD is a continuous process, but when adjusting the temperature, these methods consider only the immediate benefits of the operation in the current learning phase and fail to take into account its future returns. To address this issue, we formulate the adjustment of temperature as a sequential decision-making task and propose a method based on reinforcement learning, termed RLKD. Importantly, we design a novel state representation to enable the agent to make more informed action (i.e. instance temperature adjustment). To handle the problem of delayed rewards in our method due to the KD setting, we explore an instance reward calibration approach. In addition,we devise an efficient exploration strategy that enables the agent to learn valuable instance temperature adjustment policy more efficiently. Our framework can serve as a plug-and-play technique to be inserted into various KD methods easily, and we validate its effectiveness on both image classification and object detection tasks. Our project is at https://itkd123.github.io/ITKD.github.io/.

💡 Research Summary

**

Knowledge distillation (KD) improves a compact student network by transferring the soft predictions of a larger teacher network. A crucial hyper‑parameter in KD is the temperature, which controls the smoothness of the teacher‑student probability distribution and thus the difficulty of the learning signal. Recent works such as CTKD and MKD have introduced instance‑wise or stage‑wise temperature adaptation, but they treat temperature adjustment as a myopic operation: the temperature is chosen to minimize the current loss without considering how this choice will affect the student’s future learning trajectory.

The authors of “Instance Temperature Knowledge Distillation” reconceptualize temperature adjustment as a sequential decision‑making problem and solve it with reinforcement learning (RL). In their framework, called RLKD, each training instance constitutes a decision step. The agent observes a state, selects an instance‑specific temperature (the action), and receives a reward that reflects the improvement of the student network after the batch update. By maximizing cumulative reward, the policy implicitly accounts for long‑term benefits of temperature choices.

State design – The state vector for an instance x consists of three components: (1) the teacher’s confidence on x (the probability of the teacher’s top‑predicted class), (2) the student’s confidence on x (the probability of the student’s top‑predicted class), and (3) an uncertainty score derived from the student’s output distribution. The uncertainty score is defined as 1 − (pₛ − max_i pₛ,i), where pₛ is the student’s softmax vector; it is high when the student is uncertain (multiple classes have similar probabilities) and low when the student is confident. This triad captures both the difficulty of the sample for the teacher, the current competence of the student, and the degree to which the student has already mastered the sample—information that previous KD methods ignore.

Action space – Temperature is treated as a continuous scalar. The policy network (the actor in PPO) outputs the mean μ and variance σ² of a Gaussian distribution; a temperature value is sampled from N(μ,σ²). Continuous actions enable fine‑grained adjustments and avoid the instability associated with discrete temperature bins.

Reward and delayed‑reward calibration – In standard KD the student updates its parameters only after processing an entire mini‑batch (typically 32 instances). Consequently, the reward for a single instance’s temperature choice is observed only after the batch update, leading to a severe delayed‑reward problem. The authors introduce an instance reward calibration technique: the batch‑level performance gain (e.g., change in validation accuracy or loss) is decomposed proportionally among the instances based on their state‑action values. This yields an approximate immediate reward for each instance, facilitating credit assignment and stabilizing policy gradients.

Efficient exploration – Random exploration in the early training phase would waste many steps because the temperature action space is large. To guide the agent toward informative experiences, the authors adopt a mix‑up based exploration strategy. They rank instances by a combination of teacher confidence, student confidence, and uncertainty, then select the top 10‑20 % (high‑value) and the bottom 40‑50 % (low‑value) samples. These subsets are mixed using the Mix‑up augmentation, producing synthetic instances that preserve the informative characteristics of both groups. The agent then focuses its early exploration on these curated samples, accelerating the acquisition of a useful temperature policy.

Learning framework – RLKD is built on Proximal Policy Optimization (PPO). The actor proposes temperatures; the critic estimates the state‑action value (advantage). After each batch, the student network is trained with the chosen temperatures, the calibrated rewards are computed, and both actor and critic are updated using PPO’s clipped objective. The student and the RL agent are trained online and jointly, allowing the policy to adapt as the student’s capabilities evolve.

Experiments – The method is evaluated on three benchmarks: CIFAR‑100, a subset of ImageNet, and COCO object detection. RLKD is applied as a plug‑in to several baseline KD methods (vanilla KD, CRD, DIST, etc.). Across all settings, RLKD consistently improves top‑1 accuracy by 1‑2 percentage points and yields higher mAP in detection. Ablation studies demonstrate that (a) removing the uncertainty component from the state degrades performance, (b) omitting reward calibration leads to unstable learning, and (c) disabling the mix‑up exploration slows convergence dramatically.

Key contributions

- Formulation – Recasting instance‑wise temperature adjustment as a sequential decision‑making problem, enabling the policy to optimize long‑term student performance.

- State representation – Introducing a compact three‑dimensional state that captures teacher confidence, student confidence, and student uncertainty.

- Reward calibration – Providing a principled solution to the delayed‑reward issue inherent in KD, allowing per‑instance credit assignment.

- Efficient exploration – Leveraging mix‑up on carefully selected high‑ and low‑value samples to guide early exploration.

- Plug‑and‑play – Demonstrating that RLKD can be attached to any existing KD pipeline without architectural changes, delivering state‑of‑the‑art results on classification and detection tasks.

In summary, “Instance Temperature Knowledge Distillation” presents a novel RL‑based framework that intelligently adapts the temperature for each training sample, taking into account both immediate and future learning benefits. By integrating teacher‑student performance cues, uncertainty estimation, reward calibration, and a smart exploration scheme, the proposed RLKD achieves consistent performance gains and establishes a new direction for adaptive hyper‑parameter control in knowledge distillation.

Comments & Academic Discussion

Loading comments...

Leave a Comment