Mitigating Error Accumulation in Continuous Navigation via Memory-Augmented Kalman Filtering

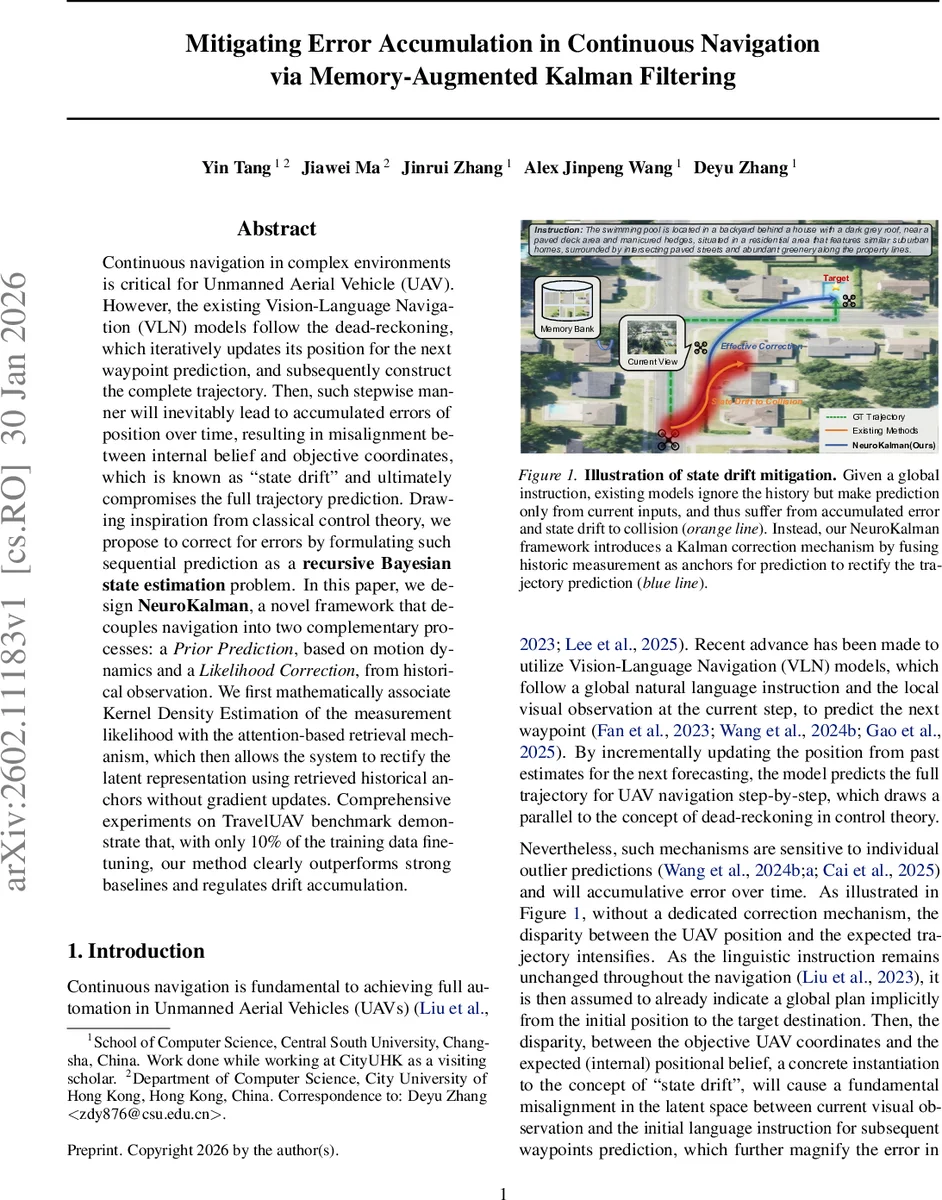

Continuous navigation in complex environments is critical for Unmanned Aerial Vehicles (UAVs). However, existing Vision-Language Navigation (VLN) models follow a dead-reckoning paradigm. They iteratively update the position used for the next waypoint prediction and then assemble the complete trajectory from these steps. This stepwise manner inevitably accumulates position errors over time. The result is a misalignment between the internal belief and the objective coordinates, known as “state drift”, which ultimately compromises the full trajectory prediction. Drawing inspiration from classical control theory, we propose to correct these errors by formulating such sequential prediction as a recursive Bayesian state estimation problem. In this paper, we design NeuroKalman, a novel framework that decouples navigation into two complementary processes: a Prior Prediction based on motion dynamics, and a Likelihood Correction from historical observations. We first mathematically associate Kernel Density Estimation of the measurement likelihood with the attention-based retrieval mechanism. This association then allows the system to rectify the latent representation using retrieved historical anchors without gradient updates. Comprehensive experiments on the TravelUAV benchmark demonstrate that, when fine-tuned on only 10% of the training data, our method clearly outperforms strong baselines and regulates drift accumulation.
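The prior-prediction / likelihood-correction split comes from the classical recursive Bayesian filter. The sketch below is a textbook one-dimensional linear Kalman filter, not NeuroKalman itself; NeuroKalman replaces these closed-form steps with learned modules. The names `Belief`, `predict` and `correct` are hypothetical illustrations. The sketch shows the key mechanism: prediction alone always grows the uncertainty, and a measurement is needed to shrink it again.

```python
from dataclasses import dataclass


@dataclass
class Belief:
    mean: float  # estimated position along one axis
    var: float   # variance (uncertainty) of that estimate


def predict(belief: Belief, displacement: float, process_var: float) -> Belief:
    """Prior prediction: move the belief by a motion step.

    Like dead reckoning, this shifts the mean and always increases the variance.
    """
    return Belief(belief.mean + displacement, belief.var + process_var)


def correct(prior: Belief, measurement: float, meas_var: float) -> Belief:
    """Likelihood correction: fuse the prior with a noisy measurement."""
    gain = prior.var / (prior.var + meas_var)  # Kalman gain, always in [0, 1]
    mean = prior.mean + gain * (measurement - prior.mean)
    var = (1.0 - gain) * prior.var             # posterior is tighter than prior
    return Belief(mean, var)


belief = Belief(mean=0.0, var=1.0)
prior = predict(belief, displacement=2.0, process_var=0.5)
posterior = correct(prior, measurement=3.0, meas_var=1.5)
print(prior)      # Belief(mean=2.0, var=1.5)
print(posterior)  # Belief(mean=2.5, var=0.75)
```

Here the prior and the measurement are equally uncertain, so the gain is 0.5. The corrected estimate lands halfway between them, with half the prior variance.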

💡 Research Summary

The paper addresses the persistent problem of state drift in continuous Vision‑Language Navigation (VLN) for Unmanned Aerial Vehicles (UAVs). Existing VLN models rely on a dead‑reckoning approach: at each step they predict the next waypoint using only the current visual observation and the global natural‑language instruction, then feed the predicted position forward. Small prediction errors accumulate over time, causing a growing mismatch between the UAV’s internal belief and its true coordinates, which the authors term “state drift.”
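The accumulation described above can be made concrete with a toy simulation, assuming the simplest possible error model: a constant lateral bias in every predicted step. The flight, the 0.25 m bias and the helper names `dead_reckon` and `distance` are hypothetical. Each position is built on the previous *estimated* position, so no step ever undoes earlier errors, and the gap to the true path grows every step.

```python
import math

Vec3 = tuple[float, float, float]


def dead_reckon(start: Vec3, steps: list[Vec3]) -> list[Vec3]:
    """Integrate per-step displacements into positions (dead reckoning).

    Every new position is computed from the previous estimate, so any error
    in one step is carried into all later positions.
    """
    positions = [start]
    for dx, dy, dz in steps:
        x, y, z = positions[-1]
        positions.append((x + dx, y + dy, z + dz))
    return positions


def distance(p: Vec3, q: Vec3) -> float:
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))


# Hypothetical flight: the UAV truly moves 1 m along x per step, but the
# predictor adds a small lateral bias of 0.25 m per step.
T = 5
true_steps = [(1.0, 0.0, 0.0)] * T
pred_steps = [(1.0, 0.25, 0.0)] * T

true_path = dead_reckon((0.0, 0.0, 0.0), true_steps)
pred_path = dead_reckon((0.0, 0.0, 0.0), pred_steps)
drift = [distance(p, q) for p, q in zip(pred_path, true_path)]
print([f"{d:.2f}" for d in drift])  # ['0.00', '0.25', '0.50', '0.75', '1.00', '1.25']
```

A constant bias gives linear drift. Zero-mean random step errors would instead grow roughly with the square root of the number of steps, but they would still never shrink without an external correction.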

To mitigate this, the authors reformulate navigation as a recursive Bayesian state‑estimation problem and introduce NeuroKalman, a framework that explicitly separates navigation into a predictive prior and a likelihood correction, mirroring the classic Kalman filter cycle. The predictive prior is modeled by a Gated Recurrent Unit (GRU)‑based recurrent neural network that learns a motion‑dynamics transition function P(zₜ|zₜ₋₁, wₜ₋₁), where zₜ is a high‑dimensional latent belief encoding both position and semantic context, and wₜ₋₁ is the previous waypoint displacement. This component provides a “blind” dead‑reckoning estimate ẑₜ.
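The GRU-based transition P(zₜ|zₜ₋₁, wₜ₋₁) can be pictured as a gated recurrent update. It takes the previous latent belief zₜ₋₁ and the previous displacement wₜ₋₁, and it uses no visual input. This summary does not give the paper's exact configuration (dimensions, gate layout, initialisation). The sketch below therefore assumes a standard GRU cell (the Cho et al. variant), with hypothetical sizes and random placeholder weights. It is meant only to show how the "blind" prior ẑₜ is rolled forward.

```python
import numpy as np


def sigmoid(x: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-x))


class GRUTransition:
    """Minimal GRU cell acting as a learned transition z_t = f(z_{t-1}, w_{t-1}).

    Weights are random placeholders; a trained model would learn them.
    """

    def __init__(self, input_dim: int, hidden_dim: int, seed: int = 0) -> None:
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(hidden_dim)
        shape = (hidden_dim, input_dim + hidden_dim)
        # One weight matrix per gate, each acting on the concatenation [w; z].
        self.W_r, self.W_u, self.W_n = (rng.uniform(-scale, scale, shape) for _ in range(3))
        self.b_r, self.b_u, self.b_n = (np.zeros(hidden_dim) for _ in range(3))

    def step(self, z_prev: np.ndarray, w_prev: np.ndarray) -> np.ndarray:
        xz = np.concatenate([w_prev, z_prev])
        r = sigmoid(self.W_r @ xz + self.b_r)  # reset gate
        u = sigmoid(self.W_u @ xz + self.b_u)  # update gate
        # Candidate state sees the reset-gated previous belief.
        n = np.tanh(self.W_n @ np.concatenate([w_prev, r * z_prev]) + self.b_n)
        return u * z_prev + (1.0 - u) * n      # prior belief for step t


transition = GRUTransition(input_dim=3, hidden_dim=8)
z = np.zeros(8)                                # initial latent belief z_0
displacements = [np.array([1.0, 0.0, 0.0]), np.array([0.5, 0.5, 0.0])]
for w in displacements:
    z = transition.step(z, w)                  # "blind" rollout: no observations used
print(z.shape)  # (8,)
```

Because the transition never sees an observation, any error in its learned dynamics compounds exactly as in plain dead reckoning. That is why the correction step described next is needed.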

The likelihood correction is realized through a multimodal large language model (MLLM) augmented with an episodic memory bank. The memory bank stores high‑confidence visual anchors (key‑value pairs of visual features) from previous steps. At time t, the current multi‑view visual input vₜ is combined with retrieved anchors via an attention mechanism. The authors mathematically equate this attention weighting with Kernel Density Estimation (KDE) of the measurement likelihood, thereby interpreting the retrieved memory as probabilistic evidence. The MLLM processes the attention‑augmented visual features together with the global instruction l and the UAV’s current 3‑D position pₜ, outputting a measurement representation rₜ and an uncertainty scalar σₜ∈
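The link between attention and Kernel Density Estimation can be checked numerically with a standard identity; the paper's own derivation may differ in detail. For unit-norm query and key vectors, ‖q − k‖² = 2 − 2 q·k. A Gaussian kernel with squared bandwidth h² = τ is then proportional to exp(q·k / τ). After normalisation, softmax attention weights with temperature τ therefore coincide with Gaussian KDE weights over the stored keys, and the attention readout is a kernel-weighted (Nadaraya–Watson) estimate over memory values. The memory contents, the sizes and the temperature below are hypothetical.

```python
import numpy as np


def normalize(x: np.ndarray) -> np.ndarray:
    return x / np.linalg.norm(x, axis=-1, keepdims=True)


def attention_weights(q: np.ndarray, K: np.ndarray, tau: float) -> np.ndarray:
    """Softmax over scaled dot products q . k_i / tau."""
    logits = K @ q / tau
    logits -= logits.max()  # numerical stability; does not change the softmax
    w = np.exp(logits)
    return w / w.sum()


def gaussian_kde_weights(q: np.ndarray, K: np.ndarray, bandwidth: float) -> np.ndarray:
    """Normalized Gaussian kernel weights exp(-||q - k_i||^2 / (2 h^2))."""
    sq_dist = np.sum((K - q) ** 2, axis=1)
    w = np.exp(-sq_dist / (2.0 * bandwidth**2))
    return w / w.sum()


rng = np.random.default_rng(0)
d, m = 16, 6                                  # feature dim, number of stored anchors
keys = normalize(rng.normal(size=(m, d)))     # hypothetical anchor keys
values = rng.normal(size=(m, d))              # hypothetical anchor values
query = normalize(rng.normal(size=d))         # stands in for the current feature v_t

tau = 0.5
a = attention_weights(query, keys, tau)
k = gaussian_kde_weights(query, keys, bandwidth=np.sqrt(tau))
print(np.allclose(a, k))  # True: for unit-norm vectors the two coincide

retrieved = a @ values    # kernel-weighted readout of the memory bank
```

The temperature τ plays the role of the kernel bandwidth. A smaller τ concentrates the weight on the closest stored anchors, and a larger τ averages more broadly over memory.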
