Auditing Sybil: Explaining Deep Lung Cancer Risk Prediction Through Generative Interventional Attributions

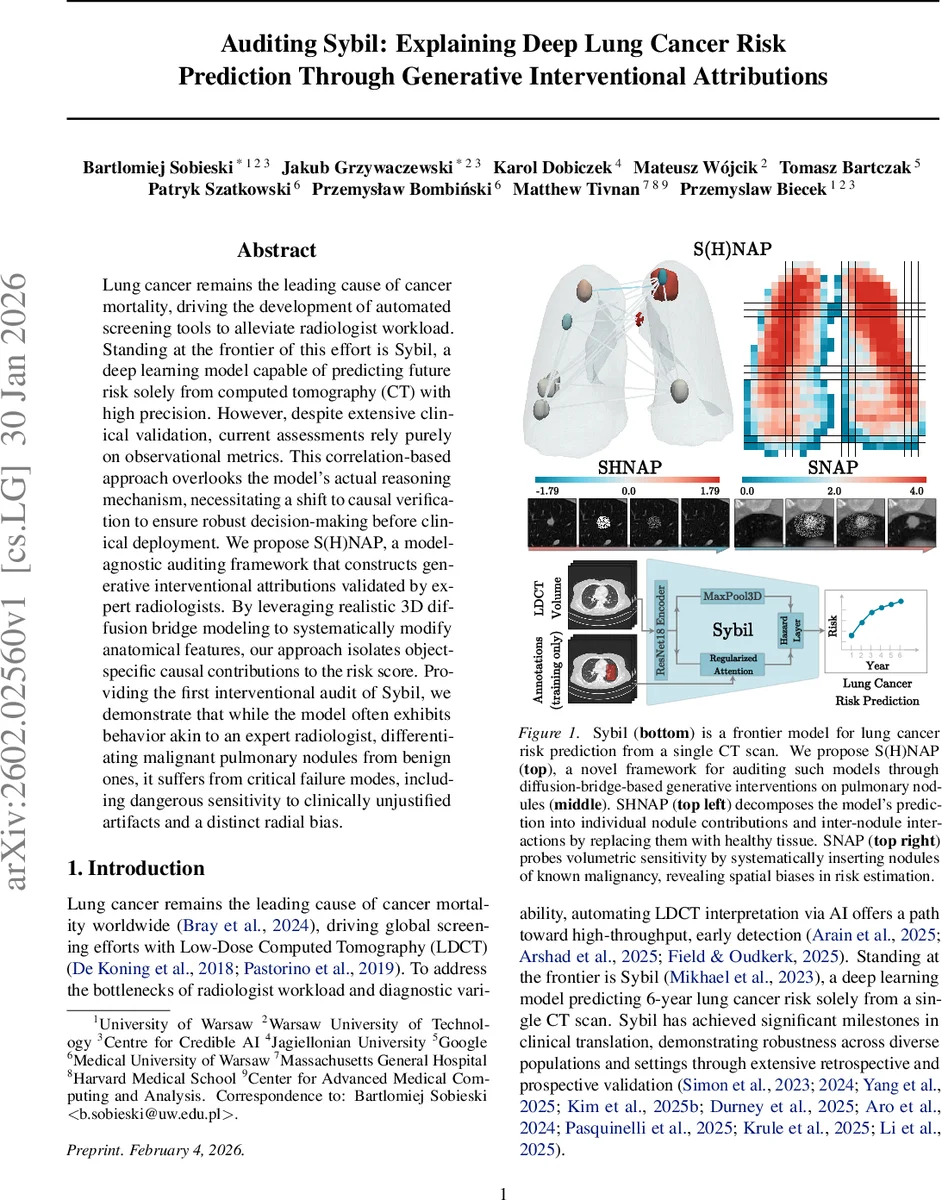

Lung cancer remains the leading cause of cancer mortality, driving the development of automated screening tools to alleviate radiologist workload. Standing at the frontier of this effort is Sybil, a deep learning model capable of predicting future risk solely from computed tomography (CT) with high precision. However, despite extensive clinical validation, current assessments rely purely on observational metrics. This correlation-based approach overlooks the model’s actual reasoning mechanism, necessitating a shift to causal verification to ensure robust decision-making before clinical deployment. We propose S(H)NAP, a model-agnostic auditing framework that constructs generative interventional attributions validated by expert radiologists. By leveraging realistic 3D diffusion bridge modeling to systematically modify anatomical features, our approach isolates object-specific causal contributions to the risk score. Providing the first interventional audit of Sybil, we demonstrate that while the model often exhibits behavior akin to an expert radiologist, differentiating malignant pulmonary nodules from benign ones, it suffers from critical failure modes, including dangerous sensitivity to clinically unjustified artifacts and a distinct radial bias.

💡 Research Summary

The paper addresses a critical gap in the evaluation of Sybil, a state‑of‑the‑art deep learning system that predicts six‑year lung‑cancer risk from a single low‑dose CT scan. While Sybil has demonstrated impressive performance across multiple retrospective and prospective studies, existing validation relies exclusively on observational metrics such as AUROC, AUPRC, and calibration curves. These metrics confirm that the model works on average but do not reveal why it works, nor do they expose the conditions under which it may fail—information that is essential before deploying a high‑stakes diagnostic aid in clinical practice.

To fill this gap, the authors propose S(H)NAP, a model‑agnostic auditing framework that combines generative diffusion‑bridge interventions with game‑theoretic attribution. The core technical contribution is the use of a System‑Embedded Diffusion Bridge (SDB), a variant of diffusion models that can inpaint or edit a masked region while leaving the rest of the image untouched. By leveraging a theoretical result from Verdu (2009) on the convergence of KL‑divergences under diffusion, the authors show that a counterfactual distribution (e.g., a scan where a nodule has been replaced by healthy tissue) becomes statistically indistinguishable from the original data distribution after a suitable diffusion time. This guarantees that the generated interventions remain on the data manifold, preserving realism and avoiding out‑of‑distribution artifacts.
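A toy illustration of the intuition behind this argument, not the theorem the authors rely on: for two equal-variance 1D Gaussians, adding the same independent Gaussian noise to both shrinks their KL divergence in closed form. The function names and the variance-exploding noise model are assumptions made for this sketch only.

```python
def gaussian_kl(mean_p, mean_q, var):
    """KL( N(mean_p, var) || N(mean_q, var) ) for two equal-variance Gaussians."""
    return (mean_p - mean_q) ** 2 / (2.0 * var)


def kl_after_noising(mean_p, mean_q, var, t):
    """KL divergence after adding independent N(0, t) noise to both distributions.

    Adding independent Gaussian noise leaves the means unchanged and
    increases each variance from `var` to `var + t`.
    """
    return gaussian_kl(mean_p, mean_q, var + t)


if __name__ == "__main__":
    # Hypothetical "original" and "counterfactual" distributions, one unit apart.
    for t in (0.0, 1.0, 10.0, 100.0):
        print(f"t={t:6.1f}  KL={kl_after_noising(0.0, 1.0, 1.0, t):.4f}")
```

The divergence decays as the noise level `t` grows, which is the sense in which a sufficiently noised counterfactual becomes hard to tell apart from noised real data. Image distributions are of course far from Gaussian, so this only conveys the direction of the effect.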

Two concrete auditing procedures are built on top of SDB:

- SHapley Nodule Attribution Profiles (SHNAP) – By systematically removing every possible subset of detected nodules (using SDB to replace them with healthy tissue), the authors construct a full lattice of 2ⁿ coalition samples, where n is the number of nodules in a scan (typically 5‑7). For each coalition they evaluate Sybil’s base‑risk logit and fit a Linear Model with Pairwise Interactions (LMPI) using n‑Shapley values of order 2 (2‑SV). The resulting coefficients capture (a) the main effect of each nodule and (b) pairwise interaction effects that reflect how the presence of one nodule modulates the contribution of another. The LMPI approximation achieves a local‑fidelity R² above 0.92, indicating that Sybil’s decision surface is well approximated by main effects and pairwise interactions of the binary nodule‑presence variables.

- Substitutive Nodule Attribution Probing (SNAP) – Here the authors insert a synthetic nodule of known volume, density, and shape at arbitrary coordinates within a scan, again using SDB to blend it seamlessly into the surrounding anatomy. The change in the model’s logit (Δlog‑odds) is recorded as an attribution score ψc for each location c. This experiment reveals spatial sensitivities: nodules placed near the central airways produce a substantially larger risk increase than identical nodules placed near the peripheral pleura, exposing a radial bias in the model. Moreover, inserting non‑clinical artifacts such as simulated metal implants leads to an unwarranted risk surge, demonstrating dangerous sensitivity to spurious cues.
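The SHNAP procedure above can be sketched on a toy problem. This is a minimal sketch under stated assumptions, not the authors' implementation: `toy_risk_logit` is a hypothetical stand-in for Sybil evaluated on SDB-edited scans, and plain inclusion-exclusion over the coalition lattice is swapped in for the 2-Shapley fit. The two agree here only because the toy function is exactly pairwise.

```python
from itertools import combinations, product


def toy_risk_logit(presence):
    """Hypothetical stand-in for the audited model's base-risk logit.

    presence[i] is 1 if nodule i is kept and 0 if it was removed
    (in the paper, removal is performed by the generative model).
    """
    main = (1.5, 0.3, -0.1)  # made-up main effects for three nodules
    logit = -2.0 + sum(w * z for w, z in zip(main, presence))
    return logit + 0.4 * presence[0] * presence[1]  # made-up interaction


def evaluate_lattice(risk_fn, n):
    """Evaluate risk_fn on all 2**n nodule coalitions."""
    return {c: risk_fn(c) for c in product((0, 1), repeat=n)}


def only(n, members):
    """Presence vector in which exactly the listed nodules are present."""
    return tuple(1 if i in members else 0 for i in range(n))


def pairwise_coefficients(values, n):
    """Intercept, main effects and pairwise terms via inclusion-exclusion."""
    mu = values[only(n, ())]
    phi = [values[only(n, (i,))] - mu for i in range(n)]
    phi_pair = {
        (i, j): values[only(n, (i, j))] - mu - phi[i] - phi[j]
        for i, j in combinations(range(n), 2)
    }
    return mu, phi, phi_pair


def lmpi_predict(presence, mu, phi, phi_pair):
    """Surrogate prediction: intercept + main effects + pairwise terms."""
    out = mu + sum(p * z for p, z in zip(phi, presence))
    return out + sum(
        w * presence[i] * presence[j] for (i, j), w in phi_pair.items()
    )


def r_squared(values, mu, phi, phi_pair):
    """Fidelity of the pairwise surrogate over the full coalition lattice."""
    ys = list(values.values())
    mean_y = sum(ys) / len(ys)
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    ss_res = sum(
        (y - lmpi_predict(c, mu, phi, phi_pair)) ** 2 for c, y in values.items()
    )
    return 1.0 - ss_res / ss_tot


if __name__ == "__main__":
    values = evaluate_lattice(toy_risk_logit, 3)
    mu, phi, phi_pair = pairwise_coefficients(values, 3)
    print(mu, phi, phi_pair, r_squared(values, mu, phi, phi_pair))
```

With a real model, higher-order interactions would make the surrogate imperfect, and the R² over the lattice is one way to quantify how much of the model's behavior the pairwise form captures.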

The methodology is validated on three datasets:

- NLST (≈28 k training, 6 k test scans) – used to train the diffusion bridge.

- LUNA25 (4 069 scans with biopsy‑confirmed malignancy or 2‑year stability) – serves as the primary testbed for SHNAP and SNAP.

- iLDCT (243 scans, out‑of‑distribution, higher prevalence of severe cases) – provides an external robustness check.

Key findings include:

- LMPI Validity – Sybil’s risk function can be expressed as μx + ∑i ϕi ni + ∑i<j ϕij ni nj, where ni indicates the presence of the i‑th nodule. Main‑effect coefficients ϕi correlate strongly with clinically assessed malignancy risk (larger, spiculated nodules receive higher positive weights). Interaction terms ϕij are positive for spatially proximate nodules, reflecting a synergistic risk increase when multiple lesions cluster.

- Radial Sensitivity – SNAP shows a systematic increase in ψc as the insertion point moves from the lung periphery toward the hilum, with up to an 18 % higher log‑odds contribution for central locations. This bias likely stems from the training data distribution, where malignant nodules are over‑represented near central airways.

- Artifact Vulnerability – Simulated metal artifacts cause the model to over‑predict risk by up to 0.22 in log‑odds, despite no pathological relevance. Radiologists confirmed that such artifacts are clinically irrelevant, highlighting a potential safety hazard.

- Clinical Alignment – When SHNAP attributions are compared with expert radiologist assessments, there is high concordance for large malignant nodules, but discrepancies appear for small benign nodules, suggesting that Sybil may sometimes over‑emphasize subtle texture cues.
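Because several of these findings are stated in log-odds, it can help to see how a SNAP-style attribution is computed and what a given shift means on the probability scale. The following is a small sketch; the baseline risks are hypothetical values chosen for illustration and do not come from the paper.

```python
import math


def logit(p):
    """Log-odds of a probability p in (0, 1)."""
    return math.log(p / (1.0 - p))


def sigmoid(x):
    """Inverse of logit: maps log-odds back to a probability."""
    return 1.0 / (1.0 + math.exp(-x))


def attribution(p_base, p_intervened):
    """Change in log-odds between the original and the edited scan."""
    return logit(p_intervened) - logit(p_base)


def shifted_probability(p_base, delta_logit):
    """Predicted risk after adding delta_logit to the baseline log-odds."""
    return sigmoid(logit(p_base) + delta_logit)


if __name__ == "__main__":
    # Hypothetical baseline risks; 0.22 is the artifact effect quoted above.
    for p_base in (0.01, 0.05, 0.20):
        p_new = shifted_probability(p_base, 0.22)
        print(f"baseline={p_base:.2f} -> after +0.22 log-odds: {p_new:.4f}")
```

The same log-odds shift translates into different absolute risk changes depending on the baseline, which is worth keeping in mind when judging the clinical weight of an artifact or location effect.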

Overall, the paper demonstrates that interventional, causal auditing can uncover both strengths (accurate nodule‑level reasoning) and weaknesses (spatial bias, artifact sensitivity) that are invisible to traditional observational validation. S(H)NAP is presented as a scalable, model‑agnostic toolkit that can be applied to any imaging‑based risk predictor, offering a pathway toward safer, more transparent AI deployment in radiology.
