DROGO: Default Representation Objective via Graph Optimization in Reinforcement Learning

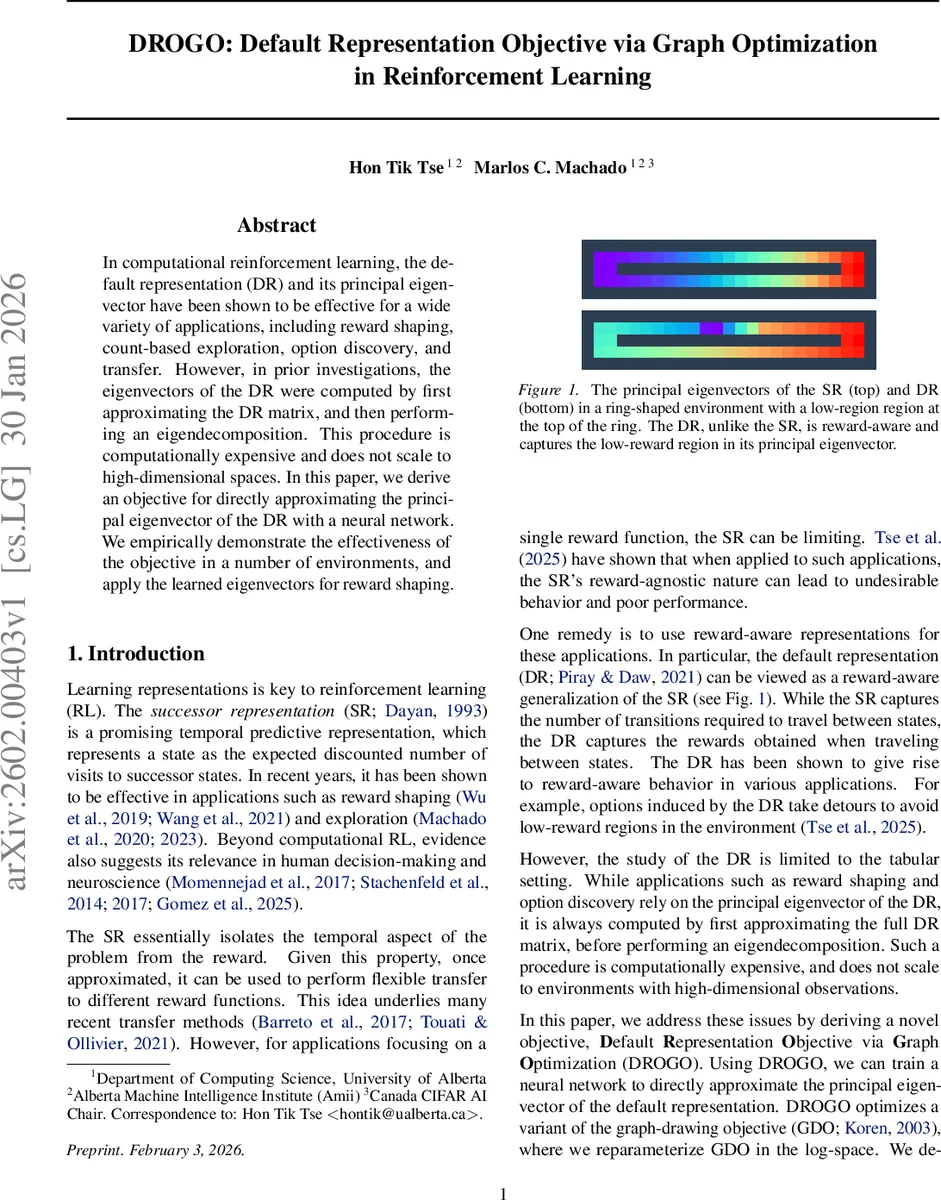

In computational reinforcement learning, the default representation (DR) and its principal eigenvector have been shown to be effective for a wide variety of applications, including reward shaping, count-based exploration, option discovery, and transfer. However, in prior investigations, the eigenvectors of the DR were computed by first approximating the DR matrix, and then performing an eigendecomposition. This procedure is computationally expensive and does not scale to high-dimensional spaces. In this paper, we derive an objective for directly approximating the principal eigenvector of the DR with a neural network. We empirically demonstrate the effectiveness of the objective in a number of environments, and apply the learned eigenvectors for reward shaping.

💡 Research Summary

The paper addresses a fundamental scalability bottleneck in using the Default Representation (DR) for reinforcement learning. The DR, a reward‑aware generalization of the Successor Representation, captures the expected discounted occupancy of states while weighting transitions by a reward‑dependent factor (R = \operatorname{diag}(\exp(-r/\lambda))). Its principal eigenvector (e) has proven useful for reward shaping, option discovery, and transfer learning. However, prior work obtains (e) by first estimating the full DR matrix and then performing an eigendecomposition, an approach that scales as (\mathcal{O}(|S|^3)) and is infeasible for high‑dimensional or continuous state spaces.

Core Contribution – DROGO

The authors propose DROGO (Default Representation Objective via Graph Optimization), a novel objective that directly learns the logarithm of the principal eigenvector, (\log e), with a neural network. The method proceeds in several logical steps:

- Graph‑Drawing Objective (GDO) Adaptation

By symmetrizing the transition matrix (\tilde P = (P+P^\top)/2) and noting that the principal eigenvector of (Z = (R-\tilde P)^{-1}) coincides with the smallest eigenvector of (I+R-\tilde P), the authors formulate a classic graph‑drawing problem:

\

Comments & Academic Discussion

Loading comments...

Leave a Comment