PLACID: Identity-Preserving Multi-Object Compositing via Video Diffusion with Synthetic Trajectories

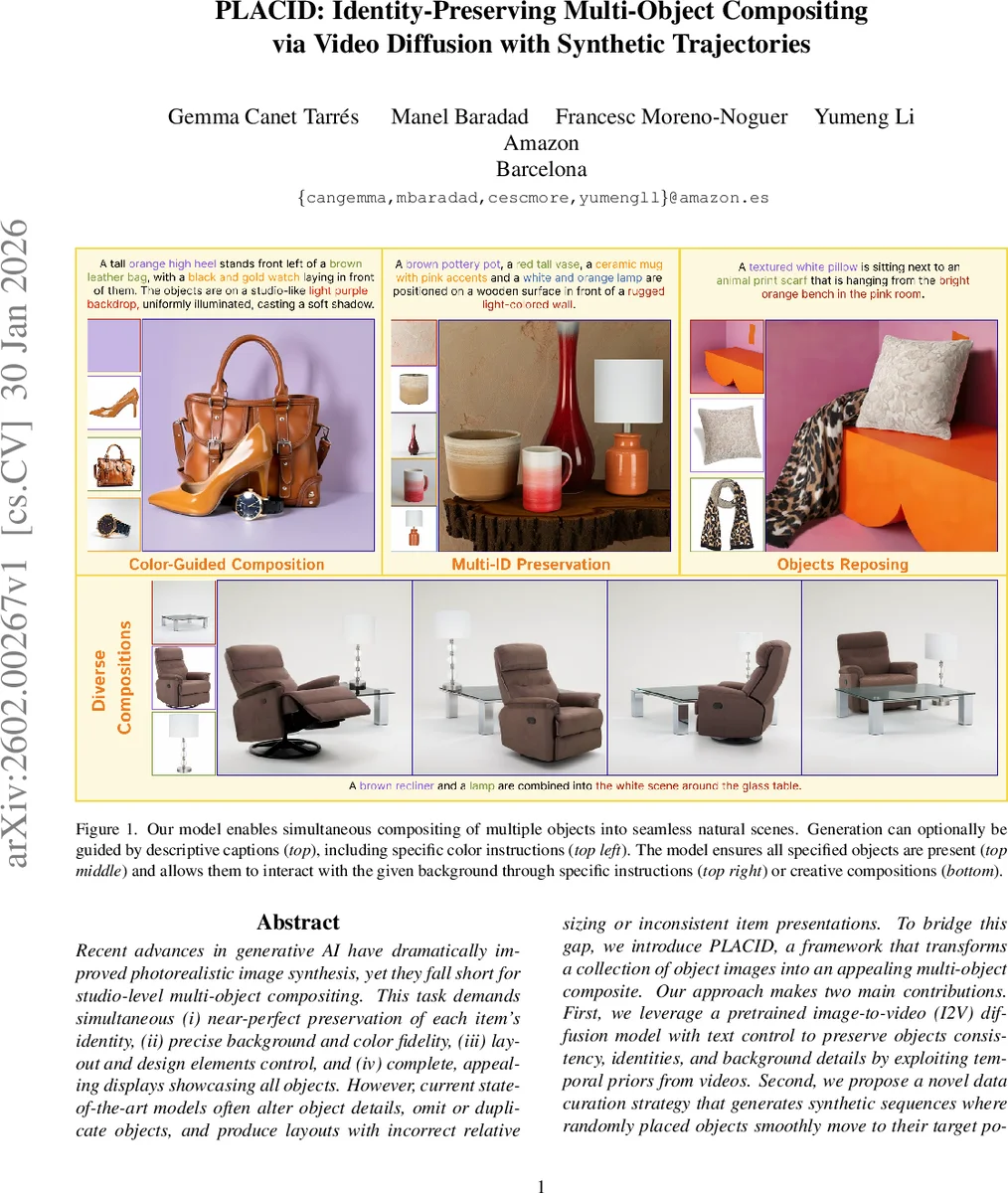

Recent advances in generative AI have dramatically improved photorealistic image synthesis, yet they fall short for studio-level multi-object compositing. This task demands simultaneous (i) near-perfect preservation of each item’s identity, (ii) precise background and color fidelity, (iii) layout and design elements control, and (iv) complete, appealing displays showcasing all objects. However, current state-of-the-art models often alter object details, omit or duplicate objects, and produce layouts with incorrect relative sizing or inconsistent item presentations. To bridge this gap, we introduce PLACID, a framework that transforms a collection of object images into an appealing multi-object composite. Our approach makes two main contributions. First, we leverage a pretrained image-to-video (I2V) diffusion model with text control to preserve objects consistency, identities, and background details by exploiting temporal priors from videos. Second, we propose a novel data curation strategy that generates synthetic sequences where randomly placed objects smoothly move to their target positions. This synthetic data aligns with the video model’s temporal priors during training. At inference, objects initialized at random positions consistently converge into coherent layouts guided by text, with the final frame serving as the composite image. Extensive quantitative evaluations and user studies demonstrate that PLACID surpasses state-of-the-art methods in multi-object compositing, achieving superior identity, background, and color preservation, with less omitted objects and visually appealing results.

💡 Research Summary

The paper introduces PLACID, a novel framework for studio‑grade multi‑object compositing that simultaneously preserves the identity of each object, maintains background and color fidelity, offers layout control, and produces visually appealing final images. The core insight is to exploit a pretrained image‑to‑video (I2V) diffusion model, which inherently learns strong temporal priors about object consistency and re‑posing across frames. By treating the compositing process as a short video generation task, PLACID can guide objects from random initial placements to their target positions while keeping their visual details intact.

To adapt the I2V model for this purpose, the authors make two architectural modifications: (1) they concatenate full‑resolution object images (and an optional background) to the initial composite frame and feed each through a CLIP encoder, allowing cross‑attention to preserve fine‑grained details that would otherwise be lost in a down‑sampled representation; (2) they introduce special textual tokens (

Training data is a key contribution. Real video datasets rarely contain static, inanimate objects moving independently, so the authors synthesize short videos from still images. Given a target multi‑object layout, they randomly scatter the same objects on the background to create an initial frame, then generate linear synthetic trajectories that move each object smoothly to its final position, producing temporally coherent intermediate frames. This synthetic video aligns with the video model’s priors, enabling effective fine‑tuning with a lightweight LoRA adapter and a Flow‑Matching loss that predicts the velocity field across the whole video.

Extensive experiments compare PLACID against state‑of‑the‑art methods such as AnyDoor, IMPRINT, and DSD. Quantitative metrics (identity preservation, LPIPS, CLIP‑Score) and user studies show that PLACID consistently outperforms baselines, achieving higher identity scores, better color accuracy, and far fewer omitted or duplicated objects. Visual examples demonstrate that background textures and object colors remain virtually unchanged, while the final frame respects the textual layout instructions.

Limitations include reliance on linear trajectories, which may not capture complex physical interactions, and increased memory/computation when handling many objects. Future work aims to incorporate non‑linear, physics‑based motion, more efficient scaling, and advanced lighting/shadow handling.

In summary, PLACID leverages video diffusion’s temporal knowledge and a clever synthetic data pipeline to deliver high‑fidelity, controllable multi‑object compositing, potentially replacing labor‑intensive studio workflows in e‑commerce, advertising, and graphic design.

Comments & Academic Discussion

Loading comments...

Leave a Comment