Learning Robust Reasoning through Guided Adversarial Self-Play

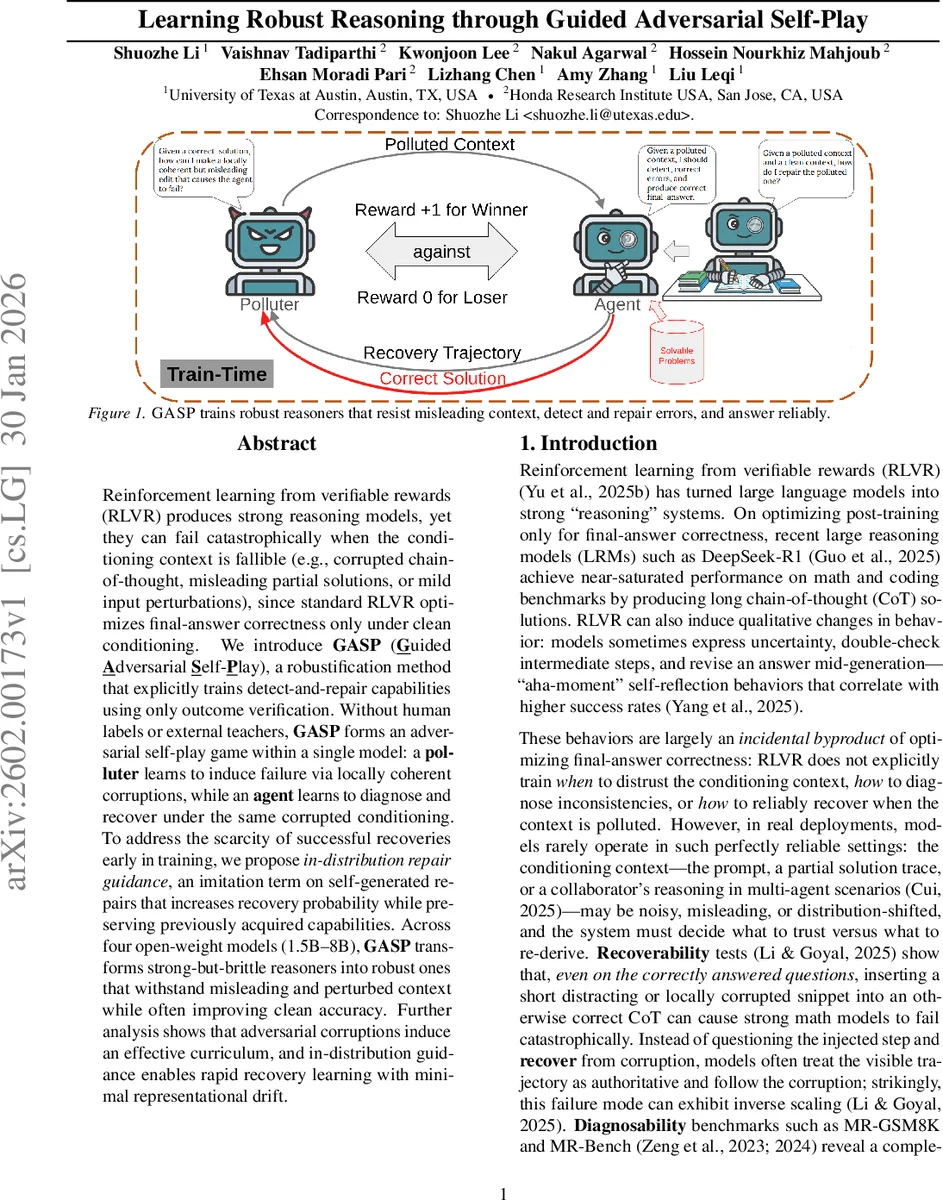

Reinforcement learning from verifiable rewards (RLVR) produces strong reasoning models, yet they can fail catastrophically when the conditioning context is fallible (e.g., corrupted chain-of-thought, misleading partial solutions, or mild input perturbations), since standard RLVR optimizes final-answer correctness only under clean conditioning. We introduce GASP (Guided Adversarial Self-Play), a robustification method that explicitly trains detect-and-repair capabilities using only outcome verification. Without human labels or external teachers, GASP forms an adversarial self-play game within a single model: a polluter learns to induce failure via locally coherent corruptions, while an agent learns to diagnose and recover under the same corrupted conditioning. To address the scarcity of successful recoveries early in training, we propose in-distribution repair guidance, an imitation term on self-generated repairs that increases recovery probability while preserving previously acquired capabilities. Across four open-weight models (1.5B–8B), GASP transforms strong-but-brittle reasoners into robust ones that withstand misleading and perturbed context while often improving clean accuracy. Further analysis shows that adversarial corruptions induce an effective curriculum, and in-distribution guidance enables rapid recovery learning with minimal representational drift.

💡 Research Summary

The paper tackles a critical weakness of large language models (LLMs) trained with Reinforcement Learning from Verifiable Rewards (RLVR): while they achieve high accuracy on clean tasks, they collapse when the conditioning context (e.g., a chain‑of‑thought, partial solution, or collaborator’s reasoning) contains errors or mild perturbations. Standard RLVR optimizes only for final‑answer correctness under pristine conditions, leaving models without explicit mechanisms to detect, diagnose, or repair faulty context.

To address this, the authors introduce GASP (Guided Adversarial Self‑Play), a robustification framework that trains “detect‑and‑repair” capabilities using only outcome verification. GASP treats a single model as two role‑conditioned agents in an adversarial self‑play game:

- Polluter – conditioned on a question and a prefix of a correct reasoning trace, it generates a short “polluted window” that is locally coherent but deliberately misleading.

- Agent (Repairer) – receives the polluted context (question, prefix, corrupted window) and must produce a continuation that leads to the correct final answer.

Both roles are optimized with Group Relative Policy Optimization (GRPO), which uses only a binary terminal reward (correct/incorrect answer). The polluter receives reward when it causes the agent to fail (1 – correctness), while the agent receives reward when it succeeds. Because the polluter adapts to the current agent, the interaction creates an automatic curriculum: as the agent becomes harder to fool, the polluter must discover increasingly subtle corruptions, and vice‑versa.

A major obstacle is the scarcity of successful repair trajectories early in training; outcome‑only RL provides almost no gradient signal when the agent rarely recovers from corrupted context. The authors solve this with in‑distribution repair guidance: the agent constructs a diagnosis prompt that includes the question, the clean prefix, and both the clean and polluted windows, then samples its own “repair snippet” from its policy. This self‑generated snippet is used as an imitation target, up‑weighting its log‑probability under the polluted context. Compared to using an external teacher model (e.g., GPT‑5), self‑generated repairs have far higher policy probability, yielding larger first‑order improvements per update and causing far less representational drift. The guidance term is added to the GRPO loss with a weight λ, preserving the outcome‑only objective while dramatically increasing the frequency of informative training groups.

Empirical evaluation spans four open‑weight models (1.5 B, 3 B, 6 B, 8 B). The authors test three stress‑test families that together define robustness to fallible context:

- Diagnosability (MR‑GSM8K, MR‑Bench): ability to locate and explain erroneous steps in a provided solution.

- Recoverability: ability to ignore or correct a deliberately corrupted chain‑of‑thought and still answer correctly.

- Perturbation reliability (RUPBench): resilience to mild lexical, syntactic, or semantic edits of the input.

Across all models, GASP yields large gains in diagnosability, recoverability, and perturbation robustness. Notably, clean‑condition accuracy also improves modestly, suggesting that the training encourages more cautious, self‑checking reasoning. Ablation studies confirm that the adversarial self‑play supplies an effective curriculum and that in‑distribution repair guidance is essential for early learning; without it, the agent’s success rate remains near zero for many training steps. Representation analyses show that in‑distribution guidance preserves earlier capabilities, whereas teacher‑guided imitation induces larger parameter shifts and greater drift.

In summary, GASP demonstrates that robust reasoning under unreliable conditioning can be achieved without any human‑annotated step‑level data or external teacher models, relying solely on verifiable final‑answer rewards. By coupling an adversarial polluter with a self‑play repairer and augmenting learning with self‑generated repair imitation, the method equips LLMs with the ability to detect and fix contextual errors, making them far more reliable for real‑world deployments where inputs are seldom perfect.

Comments & Academic Discussion

Loading comments...

Leave a Comment