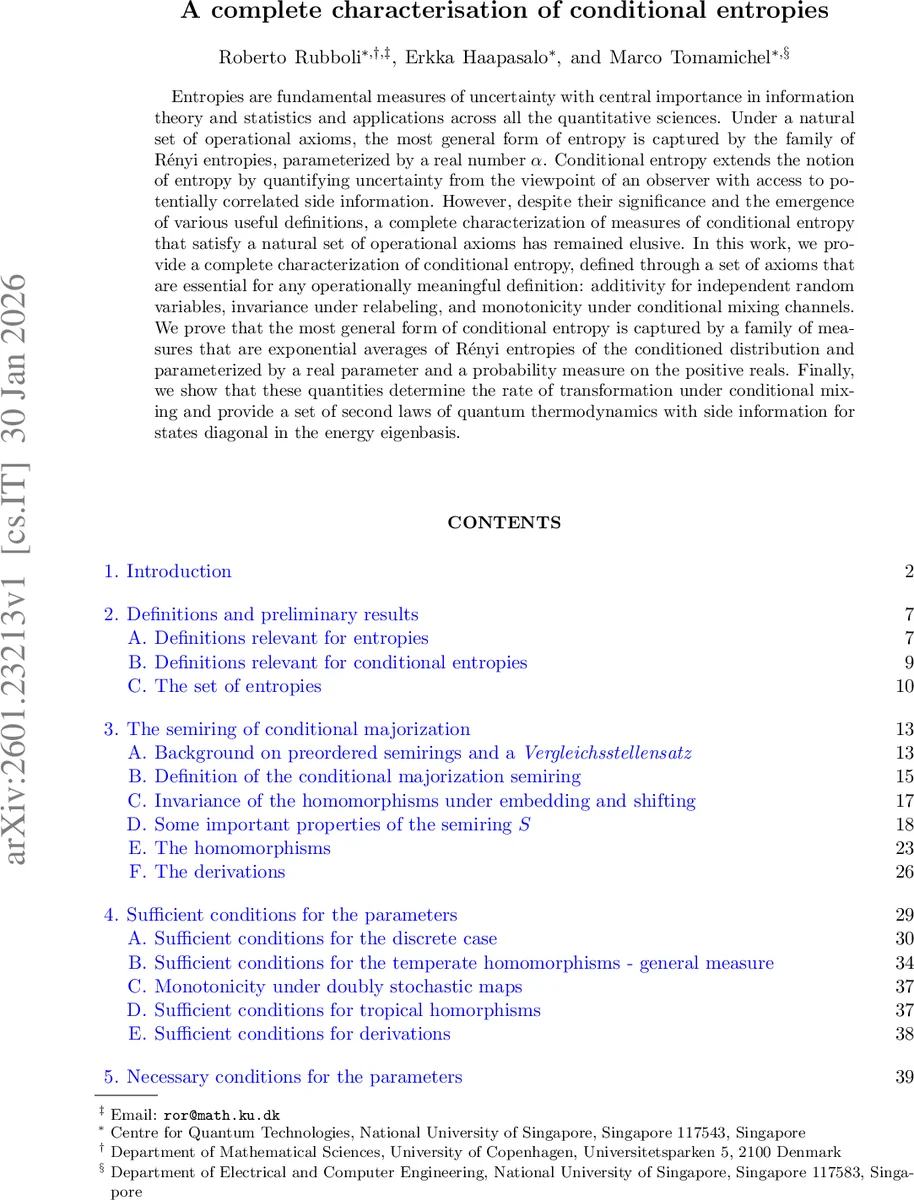

A complete characterisation of conditional entropies

Entropies are fundamental measures of uncertainty with central importance in information theory and statistics and applications across all the quantitative sciences. Under a natural set of operational axioms, the most general form of entropy is captured by the family of Rényi entropies, parameterized by a real number $α$. Conditional entropy extends the notion of entropy by quantifying uncertainty from the viewpoint of an observer with access to potentially correlated side information. However, despite their significance and the emergence of various useful definitions, a complete characterization of measures of conditional entropy that satisfy a natural set of operational axioms has remained elusive. In this work, we provide a complete characterization of conditional entropy, defined through a set of axioms that are essential for any operationally meaningful definition: additivity for independent random variables, invariance under relabeling, and monotonicity under conditional mixing channels. We prove that the most general form of conditional entropy is captured by a family of measures that are exponential averages of Rényi entropies of the conditioned distribution and parameterized by a real parameter and a probability measure on the positive reals. Finally, we show that these quantities determine the rate of transformation under conditional mixing and provide a set of second laws of quantum thermodynamics with side information for states diagonal in the energy eigenbasis.

💡 Research Summary


This paper delivers a complete axiomatic characterization of conditional entropy, a fundamental quantity that measures uncertainty of a random variable X when side information Y is available. Building on the operational framework of “conditionally mixing channels” – a broad class of transformations that includes doubly stochastic maps on X, stochastic maps on Y, and Y‑dependent stochastic maps on X – the authors formulate four natural axioms: (i) invariance under relabeling and embedding, (ii) monotonicity under conditional mixing, (iii) additivity for independent variables, and (iv) normalization (the entropy of a fair coin is one bit).
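The normalization and additivity axioms can be checked numerically for the unconditional Rényi entropies that the characterization builds on. The sketch below uses the standard definition $H_α(P) = \frac{1}{1-α}\log_2 \sum_x P(x)^α$; the function names `renyi_entropy` and `product` are illustrative choices and do not come from the paper.

```python
import math

def renyi_entropy(p, alpha):
    """Rényi entropy of order alpha, in bits, of a probability vector p."""
    support = [x for x in p if x > 0]  # zero-probability outcomes do not contribute
    if alpha == 1:  # Shannon entropy, the alpha -> 1 limit
        return -sum(x * math.log2(x) for x in support)
    if math.isinf(alpha):  # min-entropy, the alpha -> +infinity limit
        return -math.log2(max(support))
    return math.log2(sum(x ** alpha for x in support)) / (1 - alpha)

def product(p, q):
    """Joint distribution of two independent random variables."""
    return [a * b for a in p for b in q]

fair_coin = [0.5, 0.5]
p, q = [0.7, 0.3], [0.2, 0.5, 0.3]
for alpha in (0.5, 1, 2):
    # Normalization: a fair coin carries one bit for every order alpha.
    print(alpha, renyi_entropy(fair_coin, alpha))
    # Additivity: the entropy of an independent pair is the sum of the parts.
    print(alpha, renyi_entropy(product(p, q), alpha),
          renyi_entropy(p, alpha) + renyi_entropy(q, alpha))
```

For each order the two values on the additivity line should agree up to floating-point error, and the fair-coin line should be close to one bit.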

The central result is that any function satisfying these axioms must be an exponential average (a “log‑moment‑generating” functional) of convex combinations of Rényi entropies of the conditioned distribution. Concretely, each such conditional entropy is specified by two parameters: a probability measure τ on the positive reals, which weights the Rényi orders α, and a real parameter t, which controls the exponential average over the values of the side information Y.
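A functional of the kind described above can be sketched numerically. The closed form used here is an assumption reconstructed from the verbal description, not a formula quoted from the paper: $\frac{1}{t}\log_2 \sum_y P_Y(y)\, 2^{\,t \sum_i τ_i H_{α_i}(P_{X|Y=y})}$, with τ restricted to a finite list of `(alpha, weight)` pairs and the t = 0 case taken as the plain average over y. The names `mixed_renyi` and `conditional_entropy` are hypothetical.

```python
import math

def renyi_entropy(p, alpha):
    """Rényi entropy of order alpha, in bits, of a probability vector p."""
    support = [x for x in p if x > 0]
    if alpha == 1:  # Shannon limit
        return -sum(x * math.log2(x) for x in support)
    return math.log2(sum(x ** alpha for x in support)) / (1 - alpha)

def mixed_renyi(p, tau):
    """Convex combination of Rényi entropies; tau is a list of (alpha, weight)
    pairs whose weights sum to one (a discrete stand-in for the measure tau)."""
    return sum(w * renyi_entropy(p, a) for a, w in tau)

def conditional_entropy(joint, tau, t):
    """ASSUMED form: exponential average over y of mixed_renyi(P_{X|Y=y}).
    joint[y][x] holds P(X=x, Y=y)."""
    terms = []
    for row in joint:
        p_y = sum(row)
        if p_y > 0:  # skip values of Y that never occur
            conditioned = [v / p_y for v in row]
            terms.append((p_y, mixed_renyi(conditioned, tau)))
    if t == 0:  # limit t -> 0 of the exponential average is the ordinary mean
        return sum(p_y * g for p_y, g in terms)
    return math.log2(sum(p_y * 2 ** (t * g) for p_y, g in terms)) / t

tau = [(0.5, 0.4), (2, 0.6)]          # illustrative weights on two Rényi orders
p_x = [0.7, 0.3]
independent = [[0.5 * v for v in p_x], [0.5 * v for v in p_x]]  # X independent of Y
for t in (-1, 0, 2):
    # With useless side information the value reduces to the unconditional mix.
    print(t, conditional_entropy(independent, tau, t), mixed_renyi(p_x, tau))
```

Under this assumed form, side information that is independent of X leaves the value unchanged for every t, and a fair coin with trivial Y evaluates to approximately one bit, matching the normalization axiom.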
