Rethinking Transferable Adversarial Attacks on Point Clouds from a Compact Subspace Perspective

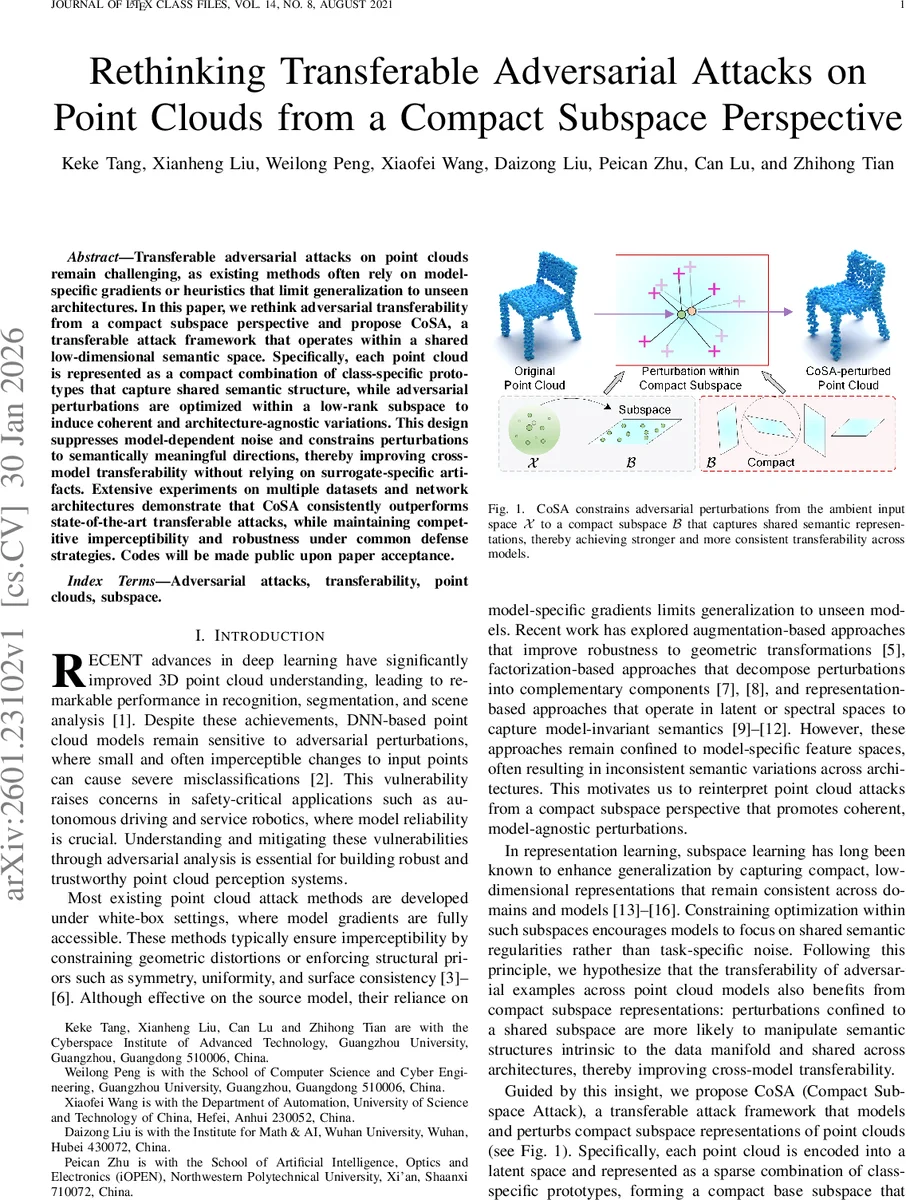

Transferable adversarial attacks on point clouds remain challenging, as existing methods often rely on model-specific gradients or heuristics that limit generalization to unseen architectures. In this paper, we rethink adversarial transferability from a compact subspace perspective and propose CoSA, a transferable attack framework that operates within a shared low-dimensional semantic space. Specifically, each point cloud is represented as a compact combination of class-specific prototypes that capture shared semantic structure, while adversarial perturbations are optimized within a low-rank subspace to induce coherent and architecture-agnostic variations. This design suppresses model-dependent noise and constrains perturbations to semantically meaningful directions, thereby improving cross-model transferability without relying on surrogate-specific artifacts. Extensive experiments on multiple datasets and network architectures demonstrate that CoSA consistently outperforms state-of-the-art transferable attacks, while maintaining competitive imperceptibility and robustness under common defense strategies. Code will be made public upon paper acceptance.

💡 Research Summary

This paper addresses the challenging problem of transferable adversarial attacks on 3‑D point clouds, where perturbations crafted against a surrogate model must also fool unseen target models. Existing point‑cloud attacks either rely heavily on model‑specific gradients (white‑box attacks) or use heuristic geometric constraints, which limits their generalization to other architectures. Recent transferable methods—augmentation‑based, factorization‑based, and latent‑space‑based—still operate in model‑dependent feature spaces and fail to capture a shared semantic structure across networks.

The authors propose a novel “compact subspace” perspective. They first embed each point cloud into a low‑dimensional latent space using a pretrained auto‑encoder (Point‑MAE). Within this latent space, class‑specific prototypes are obtained by k‑means clustering of training samples, forming a prototype dictionary D_y for each class y. Any input point cloud is then sparsely reconstructed over its class dictionary, yielding a compact representation α* that captures the essential semantic content of the object.
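The prototype and sparse-coding steps described above can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the latent codes are random stand-ins for encoder outputs, and the helper names (`kmeans_prototypes`, `sparse_code`), the L1-regularized objective, the ISTA solver, and the value of `lam` are all assumptions, since the summary does not say how the sparse reconstruction is solved.

```python
import numpy as np

def kmeans_prototypes(Z, m, iters=20, seed=0):
    """Plain Lloyd's k-means on latent codes Z (n x d).

    Returns a prototype dictionary of shape (d, m), one column per prototype.
    """
    rng = np.random.default_rng(seed)
    centers = Z[rng.choice(len(Z), size=m, replace=False)].copy()
    for _ in range(iters):
        # Squared distance from every sample to every center: (n, m).
        d2 = ((Z[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        for k in range(m):
            members = Z[labels == k]
            if len(members) > 0:  # keep the old center if a cluster empties
                centers[k] = members.mean(axis=0)
    return centers.T

def sparse_code(z, D, lam=0.05, iters=500):
    """ISTA for min_a 0.5 * ||z - D a||^2 + lam * ||a||_1 (assumed objective)."""
    step = 1.0 / (np.linalg.norm(D, 2) ** 2)  # 1 / Lipschitz constant of the gradient
    a = np.zeros(D.shape[1])
    for _ in range(iters):
        a = a - step * (D.T @ (D @ a - z))                        # gradient step
        a = np.sign(a) * np.maximum(np.abs(a) - step * lam, 0.0)  # soft threshold
    return a

# Stand-in for the latent codes of one class (hypothetical data, d = 16).
rng = np.random.default_rng(0)
Z_class = rng.normal(size=(200, 16))

D_y = kmeans_prototypes(Z_class, m=8)   # prototype dictionary for class y
z = Z_class[0]                          # latent code of one input
alpha_star = sparse_code(z, D_y)        # compact representation over D_y
```

Here `D_y @ alpha_star` approximates `z`, and a larger `lam` trades reconstruction accuracy for a sparser code.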

To generate transferable perturbations, the method introduces a low‑rank perturbation subspace S. This subspace is parameterized by an orthonormal basis U (size d × r) and a coefficient matrix Γ (size r × m_y). The prototype matrix is perturbed as ΔD_y = U Γ, and the perturbed latent code becomes z′ = (D_y + U Γ) α*. By constraining Γ with a nuclear‑norm regularizer and enforcing orthogonality of U, the perturbations are forced to lie in a few shared directions that are largely independent of any particular network’s internal representations.
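The low-rank parameterization can be checked numerically with a small sketch. The dimensions, the random initialization, and the QR step used to obtain an orthonormal U are illustrative assumptions; in the method itself, U and Γ are optimized against the attack objective rather than sampled.

```python
import numpy as np

rng = np.random.default_rng(0)
d, m_y, r = 16, 8, 2                      # latent dim, prototypes per class, rank (assumed sizes)

D_y = rng.normal(size=(d, m_y))           # stand-in prototype dictionary
alpha_star = rng.normal(size=m_y)         # stand-in compact code

# Orthonormal basis U (d x r) from a reduced QR factorization.
U, _ = np.linalg.qr(rng.normal(size=(d, r)))
Gamma = 0.1 * rng.normal(size=(r, m_y))   # coefficient matrix (r x m_y)

delta_D = U @ Gamma                       # dictionary perturbation, rank at most r
z_clean = D_y @ alpha_star
z_adv = (D_y + delta_D) @ alpha_star      # z' = (D_y + U Γ) α*

# The two regularizers named in the text.
nuclear_norm = np.linalg.norm(Gamma, ord="nuc")
orth_penalty = np.linalg.norm(U.T @ U - np.eye(r), ord="fro") ** 2
```

Because z′ − z = U(Γα*), the latent shift always lies in the r-dimensional column space of U, however many prototypes the class dictionary contains.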

The attack objective jointly minimizes (i) a misclassification loss on the surrogate classifier f_s, (ii) a geometric fidelity loss (Chamfer‑like) between the original and adversarial point clouds, (iii) the nuclear norm of Γ to promote low‑rank structure, and (iv) an orthogonality penalty on U. A reconstruction constraint D(P, Dec(z′)) ≤ ε bounds the overall distortion, ensuring imperceptibility. After optimizing U and Γ, the adversarial point cloud is obtained by decoding the perturbed latent code.
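Written out, the four terms and the constraint suggest an objective of roughly the following form. The weights λ₁, λ₂, λ₃ and the loss symbols are notation assumed here for illustration; the summary does not give the exact weighting or the specific misclassification loss.

$$
\min_{U,\,\Gamma}\;
\mathcal{L}_{\text{mis}}\big(f_s(P'),\, y\big)
+ \lambda_1\, \mathcal{L}_{\text{geo}}(P, P')
+ \lambda_2\, \lVert \Gamma \rVert_*
+ \lambda_3\, \lVert U^\top U - I_r \rVert_F^2
\quad \text{s.t.} \quad
D(P, P') \le \epsilon,
$$

where $P' = \mathrm{Dec}(z')$ and $z' = (D_y + U\Gamma)\,\alpha^*$. Note that $D(\cdot,\cdot)$ in the constraint denotes the distortion measure, not the prototype dictionary $D_y$.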

Extensive experiments are conducted on ModelNet40 and ShapeNet using six representative point‑cloud networks: PointNet, PointNet++, DGCNN, PointTransformer, PCT, and PointMLP. When the surrogate is PointNet, CoSA achieves transfer success rates 12‑18 % higher than state‑of‑the‑art transferable attacks such as FGSM‑Transfer, factorization‑based methods, and latent‑space attacks. Moreover, the Chamfer and Hausdorff distances of the generated adversarial examples are 30‑40 % lower, indicating superior visual stealth. Under common defenses (SOR, DUP‑Net, PointGuard, AdvPC), CoSA maintains attack success rates of 55‑62 %, substantially outperforming baselines whose success drops below 30 %.
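For reference, the two imperceptibility metrics mentioned above can be computed as in the sketch below. Conventions differ across papers (squared versus unsquared distances, sums versus means, one-sided versus symmetric), so the variants chosen here are assumptions and may not match the paper's exact definitions.

```python
import numpy as np

def pairwise_dists(P, Q):
    """Euclidean distances between all points of P (n x 3) and Q (m x 3)."""
    return np.linalg.norm(P[:, None, :] - Q[None, :, :], axis=2)

def chamfer_distance(P, Q):
    """Symmetric Chamfer distance: mean squared nearest-neighbor distance, both directions."""
    d = pairwise_dists(P, Q)
    return (d.min(axis=1) ** 2).mean() + (d.min(axis=0) ** 2).mean()

def hausdorff_distance(P, Q):
    """Symmetric Hausdorff distance: worst-case nearest-neighbor distance."""
    d = pairwise_dists(P, Q)
    return max(d.min(axis=1).max(), d.min(axis=0).max())

# Toy clouds: Q equals P plus one outlier at distance 2 from the nearest point of P.
P = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
Q = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [1.0, 2.0, 0.0]])
cd = chamfer_distance(P, Q)
hd = hausdorff_distance(P, Q)
```

Chamfer distance averages over all points and so reflects overall deviation, while Hausdorff distance is driven entirely by the single worst outlier.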

Ablation studies reveal that (a) removing the prototype‑guided base subspace dramatically reduces transferability, confirming the importance of semantic compression, and (b) omitting the low‑rank regularization leads to larger geometric distortions, highlighting the role of compact perturbation directions.

Limitations include the additional computational cost of pre‑training the auto‑encoder and constructing class prototypes, sensitivity to class imbalance (which can affect prototype quality), and the current focus on untargeted attacks. Future work is suggested on dynamic prototype updates, unsupervised subspace learning to reduce label dependence, and lightweight encoder‑decoder designs for real‑time robotic applications.

In summary, CoSA introduces a principled framework that leverages shared semantic subspaces and low‑rank perturbations to produce adversarial point clouds that are both highly transferable across diverse architectures and visually imperceptible, advancing the state of the art in 3‑D adversarial robustness research.
