Omni-fMRI: A Universal Atlas-Free fMRI Foundation Model

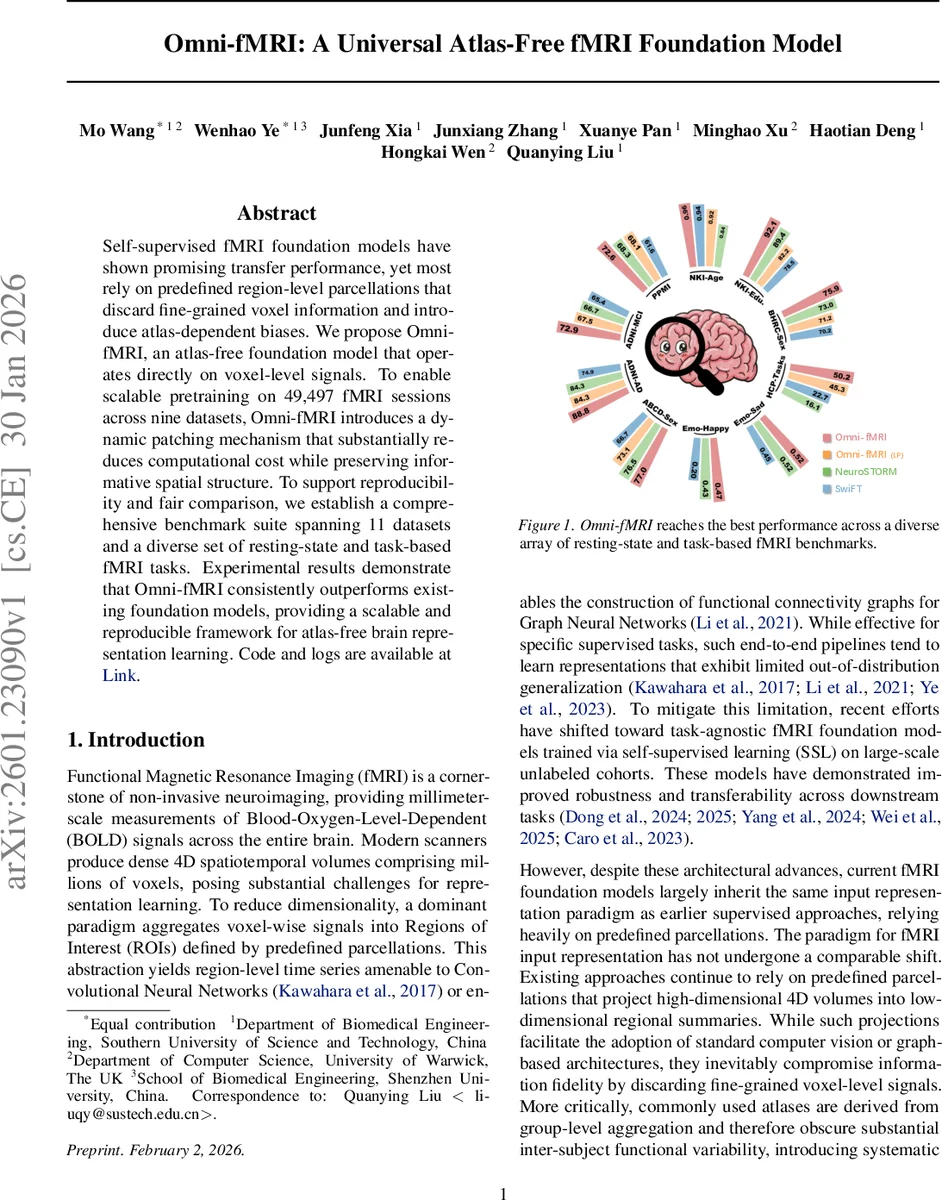

Self-supervised fMRI foundation models have shown promising transfer performance, yet most rely on predefined region-level parcellations that discard fine-grained voxel information and introduce atlas-dependent biases. We propose Omni-fMRI, an atlas-free foundation model that operates directly on voxel-level signals. To enable scalable pretraining on 49,497 fMRI sessions across nine datasets, Omni-fMRI introduces a dynamic patching mechanism that substantially reduces computational cost while preserving informative spatial structure. To support reproducibility and fair comparison, we establish a comprehensive benchmark suite spanning 11 datasets and a diverse set of resting-state and task-based fMRI tasks. Experimental results demonstrate that Omni-fMRI consistently outperforms existing foundation models, providing a scalable and reproducible framework for atlas-free brain representation learning. Code and logs are available.

💡 Research Summary

Omni‑fMRI introduces a truly atlas‑free foundation model for functional MRI that operates directly on voxel‑level BOLD signals. The authors identify three major shortcomings of existing fMRI foundation models: (1) reliance on predefined region‑of‑interest (ROI) parcellations that discard fine‑grained spatial information, (2) systematic bias introduced by group‑level atlases that obscure inter‑subject variability, and (3) computational inefficiency when attempting to model the full 4‑D volume with standard Vision Transformers (ViTs). To overcome these issues, Omni‑fMRI proposes a novel pipeline consisting of (i) a dynamic patch tokenization strategy, (ii) a dual‑path multi‑scale embedding module, and (iii) a scale‑aware masked autoencoding (MAE) objective.

Dynamic patch tokenization first estimates a spatiotemporal complexity score for each local 3‑D block using the variance of voxel intensities across time. Blocks with mean intensity below a background threshold are discarded entirely. Remaining blocks are classified as “coarse” if their variance falls below a complexity threshold τ, otherwise they are recursively subdivided into finer patches. This adaptive scheme reduces the average token count from roughly 14 000 per time frame to about 4.3 K, dramatically lowering memory and compute demands while preserving detail in information‑rich brain regions.

Because tokens now have heterogeneous spatial resolutions, the dual‑path embedding projects them into a common latent space. Base‑resolution patches are embedded directly with a 3‑D convolutional tokenizer. Larger patches are represented by a low‑frequency embedding of a down‑sampled version of the patch plus a residual branch that aggregates high‑frequency details from sub‑patches via a strided convolution. The residual branch is modulated by a Zero‑initialized MLP (ZeroMLP), which forces the model to initially rely on low‑frequency information and gradually incorporate fine details, effectively providing a curriculum‑like training dynamic.

The self‑supervised pretraining follows the MAE paradigm, but standard MAE assumes uniform token size, which would bias the loss toward large patches. Omni‑fMRI therefore injects a learnable scale embedding into each decoder token and employs a bank of scale‑specific reconstruction heads. The reconstruction loss is normalized by both the number of masked tokens at each scale and the voxel volume of the corresponding patch, ensuring scale‑invariant gradients.

The model is pretrained on 49 497 fMRI sessions spanning nine large public datasets (including UK Biobank, HCP, ABCD, etc.), covering a wide age range and diverse acquisition protocols. To evaluate transferability, the authors construct a benchmark suite of 11 datasets and 16 downstream tasks, ranging from resting‑state network classification to task‑based cognitive state decoding, demographic prediction, and clinical diagnosis. Both linear probing and full fine‑tuning are performed. Omni‑fMRI consistently outperforms prior state‑of‑the‑art foundation models such as NeuroStorm, SLIM‑Brain, and other ROI‑based approaches, achieving average improvements of 3–7 % in accuracy or AUC. Notably, linear probes on Omni‑fMRI often surpass the fine‑tuned performance of competing models, highlighting the quality of the learned representations.

Efficiency analyses show that the dynamic patching reduces GPU memory consumption by roughly 45 % and cuts pretraining wall‑clock time by more than half compared to hierarchical Transformer baselines, while still enabling global self‑attention that captures long‑range functional connectivity.

Beyond performance, the authors emphasize reproducibility: all code, pretrained weights, experiment logs, and exact test‑subject identifiers are released publicly, providing a standardized platform for future research.

In summary, Omni‑fMRI demonstrates that atlas‑free voxel‑level modeling of fMRI is both feasible and advantageous. By coupling adaptive tokenization, multi‑scale embedding, and scale‑aware self‑supervision, the framework delivers superior representation learning, computational efficiency, and reproducibility, setting a new benchmark for large‑scale brain imaging foundation models.

Comments & Academic Discussion

Loading comments...

Leave a Comment