Autonomous Chain-of-Thought Distillation for Graph-Based Fraud Detection

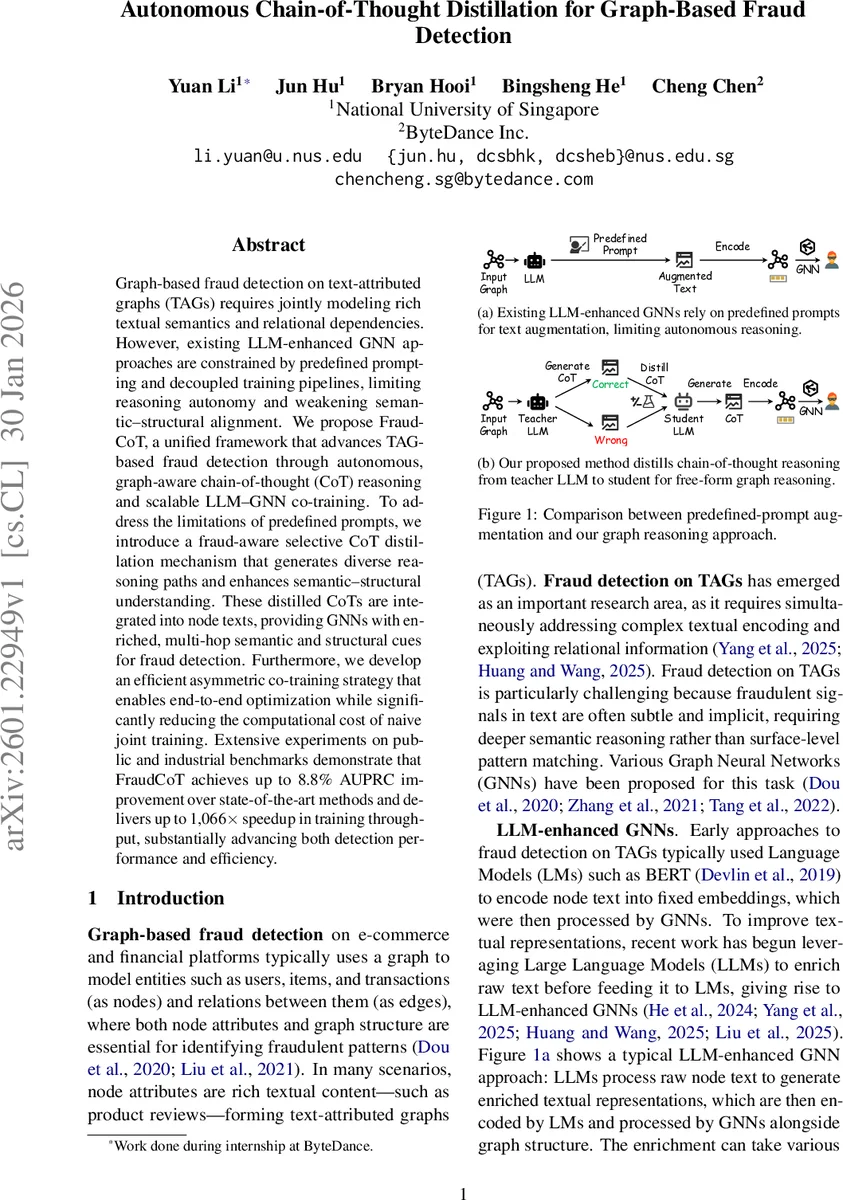

Graph-based fraud detection on text-attributed graphs (TAGs) requires jointly modeling rich textual semantics and relational dependencies. However, existing LLM-enhanced GNN approaches are constrained by predefined prompting and decoupled training pipelines, limiting reasoning autonomy and weakening semantic-structural alignment. We propose FraudCoT, a unified framework that advances TAG-based fraud detection through autonomous, graph-aware chain-of-thought (CoT) reasoning and scalable LLM-GNN co-training. To address the limitations of predefined prompts, we introduce a fraud-aware selective CoT distillation mechanism that generates diverse reasoning paths and enhances semantic-structural understanding. These distilled CoTs are integrated into node texts, providing GNNs with enriched, multi-hop semantic and structural cues for fraud detection. Furthermore, we develop an efficient asymmetric co-training strategy that enables end-to-end optimization while significantly reducing the computational cost of naive joint training. Extensive experiments on public and industrial benchmarks demonstrate that FraudCoT achieves up to 8.8% AUPRC improvement over state-of-the-art methods and delivers up to 1,066x speedup in training throughput, substantially advancing both detection performance and efficiency.

💡 Research Summary

FraudCoT introduces a unified framework that tackles two fundamental shortcomings of existing large‑language‑model‑enhanced graph neural networks (LLM‑GNNs) for fraud detection on text‑attributed graphs (TAGs). The first shortcoming is the reliance on predefined prompts, which forces the language model to perform shallow pattern matching rather than deep, multi‑step reasoning. To overcome this, FraudCoT employs a teacher LLM (e.g., DeepSeek‑R1) that, given a node’s raw text and its neighborhood, autonomously generates multiple chain‑of‑thought (CoT) reasoning paths. Each generated CoT is labeled as positive if the teacher’s decision matches the ground‑truth label, or negative otherwise. This creates a rich set of both correct and incorrect reasoning trajectories.

The second shortcoming is the decoupled training of semantic (LLM) and structural (GNN) components, which weakens the alignment between textual semantics and graph topology. FraudCoT addresses this with an efficient asymmetric co‑training strategy. During each training batch, the LLM encoder processes only the target nodes’ CoT‑augmented texts, while neighbor nodes reuse cached embeddings computed once at the beginning of training. This reduces the number of LLM forward passes from O(|N(v)|) per target node to O(1), dramatically lowering computational cost while preserving the ability to propagate structural information through the GNN.

The learning pipeline consists of two stages. In the Fraud‑aware Selective CoT Distillation stage, the student LLM is fine‑tuned using a combined loss: a cross‑entropy term that encourages reproducing positive CoTs and an unlikelihood term that penalizes the generation of negative CoTs. Low‑rank adaptation (LoRA) adapters are employed to keep parameter updates lightweight. After distillation, each node’s final text is formed by concatenating its original textual attribute with the distilled CoT, producing a “CoT‑augmented” representation that explicitly encodes reasoning steps.

In the Efficient Asymmetric Co‑training stage, these CoT‑augmented texts are encoded by the LLM to obtain target node embeddings. Neighbor embeddings are fetched from a cache, and the GNN performs standard message passing on the mixed set of fresh and cached vectors. The whole system remains end‑to‑end differentiable, allowing gradients to flow from the fraud classification loss back to both the GNN and the LLM encoder, thereby aligning semantic and structural learning.

Experiments were conducted on several public fraud detection benchmarks (e.g., Yelp, Amazon) and a large‑scale industrial dataset from ByteDance, encompassing millions of nodes and tens of millions of edges. FraudCoT consistently outperformed strong baselines—including PC‑GNN, H2‑FDetector, ConsisGAD, and recent LLM‑GNN hybrids such as TAPE, STAR, and FLAG—by up to 8.8 % absolute improvement in area under the precision‑recall curve (AUPRC). Moreover, the asymmetric co‑training yielded a training‑throughput speedup of up to 1,066× compared with naïve joint training, reducing per‑epoch time from several minutes to a few seconds on the same hardware.

Beyond raw performance, the approach offers interpretability: the distilled CoTs are human‑readable rationales attached to each node, facilitating auditability and compliance in regulated domains. The authors acknowledge that teacher LLM inference remains costly, and that CoT generation is performed on a sampled subset of nodes, leaving room for future work on more scalable teacher models and full‑graph distillation.

In summary, FraudCoT advances fraud detection on text‑rich graphs by (1) enabling autonomous, multi‑hop reasoning through selective CoT distillation, and (2) preserving semantic‑structural alignment while dramatically cutting computational overhead via asymmetric co‑training. The framework sets a new state‑of‑the‑art in both detection accuracy and efficiency, and its core ideas are readily transferable to other domains involving text‑attributed graphs.

Comments & Academic Discussion

Loading comments...

Leave a Comment