Farewell to Item IDs: Unlocking the Scaling Potential of Large Ranking Models via Semantic Tokens

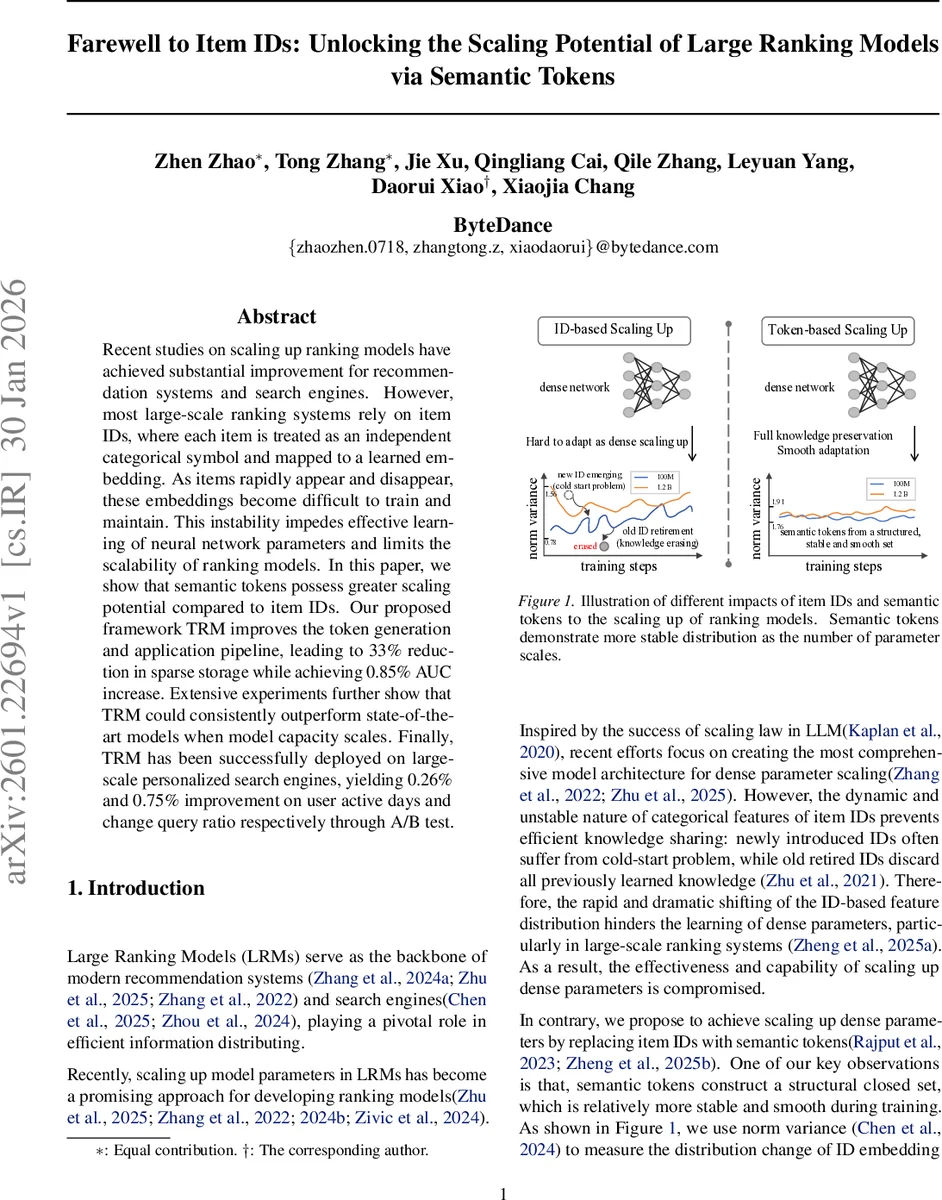

Recent studies on scaling up ranking models have achieved substantial improvement for recommendation systems and search engines. However, most large-scale ranking systems rely on item IDs, where each item is treated as an independent categorical symbol and mapped to a learned embedding. As items rapidly appear and disappear, these embeddings become difficult to train and maintain. This instability impedes effective learning of neural network parameters and limits the scalability of ranking models. In this paper, we show that semantic tokens possess greater scaling potential compared to item IDs. Our proposed framework TRM improves the token generation and application pipeline, leading to 33% reduction in sparse storage while achieving 0.85% AUC increase. Extensive experiments further show that TRM could consistently outperform state-of-the-art models when model capacity scales. Finally, TRM has been successfully deployed on large-scale personalized search engines, yielding 0.26% and 0.75% improvement on user active days and change query ratio respectively through A/B test.

💡 Research Summary

The paper addresses a fundamental bottleneck in large‑scale ranking models (LRMs) that rely on item IDs. In traditional systems, each item is represented by a categorical ID that is mapped to a learned embedding in a massive sparse table. Because new items constantly appear and old items retire, the distribution of ID embeddings drifts over time, leading to cold‑start problems, knowledge loss, and unstable training dynamics for the dense part of the model (e.g., MLPs or Transformers). This instability limits the benefits of scaling up dense parameters, which recent work has shown to be a key driver of performance gains in recommendation and search.

To overcome these issues, the authors propose a token‑based framework called TRM (Token‑based Recommendation Model). The core idea is to replace volatile IDs with semantic tokens that are derived from a collaborative‑aware multimodal representation of each item. The pipeline consists of three main stages:

-

Collaborative‑aware multimodal item representation – A large multimodal language model (e.g., Qwen2.5‑VL) is first fine‑tuned on a captioning task using visual inputs (frames, OCR, ASR) and textual metadata, yielding a caption loss L_cap. The model’s final‑layer token embeddings are then mean‑pooled to obtain a dense vector h for each item. Using interaction logs, the authors construct two types of positive pairs: query‑item pairs and item‑item pairs with high co‑click similarity. A contrastive alignment loss L_align is applied to pull together representations of behaviorally similar items while pushing apart unrelated ones. The combined objective L_rep = L_cap + λ·L_align produces embeddings that encode both content semantics and collaborative signals.

-

Hybrid tokenization with a generalization‑memorization trade‑off – The collaborative‑aware embeddings are quantized with residual‑quantization K‑means (RQ‑Kmeans) to generate coarse‑grained “generalization tokens” (gen‑tokens). To preserve fine‑grained, item‑specific knowledge, the authors extract high‑frequency k‑grams from the token sequences and apply Byte‑Pair Encoding (BPE) to create “memorization tokens” (mem‑tokens). Gen‑tokens capture shared semantic structure across items, while mem‑tokens retain unique combinatorial cues (e.g., “cake” + “candle” → “birthday”). Both token types are fed through separate hash‑based embedding modules and combined in a Wide & Deep architecture: the wide side consumes mem‑tokens, the deep side consumes gen‑tokens, with random dropout on the deep side to mitigate over‑fitting. This design directly addresses the observed failure of pure residual‑quantization methods, which lose combinatorial knowledge and degrade performance on older, high‑exposure items.

-

Joint optimization of discriminative and generative objectives – Existing token‑based approaches typically use either a discriminative loss (click‑through prediction) or a generative loss (next‑token prediction). TRM jointly optimizes both: the discriminative head predicts ranking scores from the full token sequence, while a generative head predicts the next token in the sequence, encouraging the model to learn internal token dependencies. This dual objective leverages the structural information inherent in token sequences, improving both accuracy and representation richness.

Empirical evaluation is conducted on real user logs from a large personalized search engine. Offline experiments show that swapping item IDs for TRM’s hybrid tokens yields a 0.65 %–0.85 % increase in AUC and a 33 % reduction in sparse storage. When the dense part of the model is scaled from 100 M to 1.2 B parameters, ID‑based baselines quickly saturate, whereas TRM continues to follow the scaling law L ∝ N^‑β, delivering consistent gains as model size grows. Online A/B testing confirms business impact: active user days rise by 0.26 % and the query‑change ratio drops by 0.75 %, demonstrating that the improvements translate to real‑world user experience.

The paper’s contributions are threefold: (1) a theoretical and empirical demonstration that token‑based representations scale more favorably than ID‑based ones; (2) the TRM framework that resolves three key deficiencies of prior token methods—misalignment with user behavior, loss of memorization, and neglect of token‑sequence structure; and (3) a successful production deployment that validates storage savings and metric improvements at industrial scale.

Overall, the work provides a compelling blueprint for moving beyond fragile ID embeddings toward stable, content‑driven semantic tokens, unlocking the full scaling potential of large ranking models in recommendation and search systems. Future directions include reducing token generation cost, enabling real‑time token updates, and extending the approach to other domains such as advertising and news feeds.

Comments & Academic Discussion

Loading comments...

Leave a Comment