Bonnet: Ultra-fast whole-body bone segmentation from CT scans

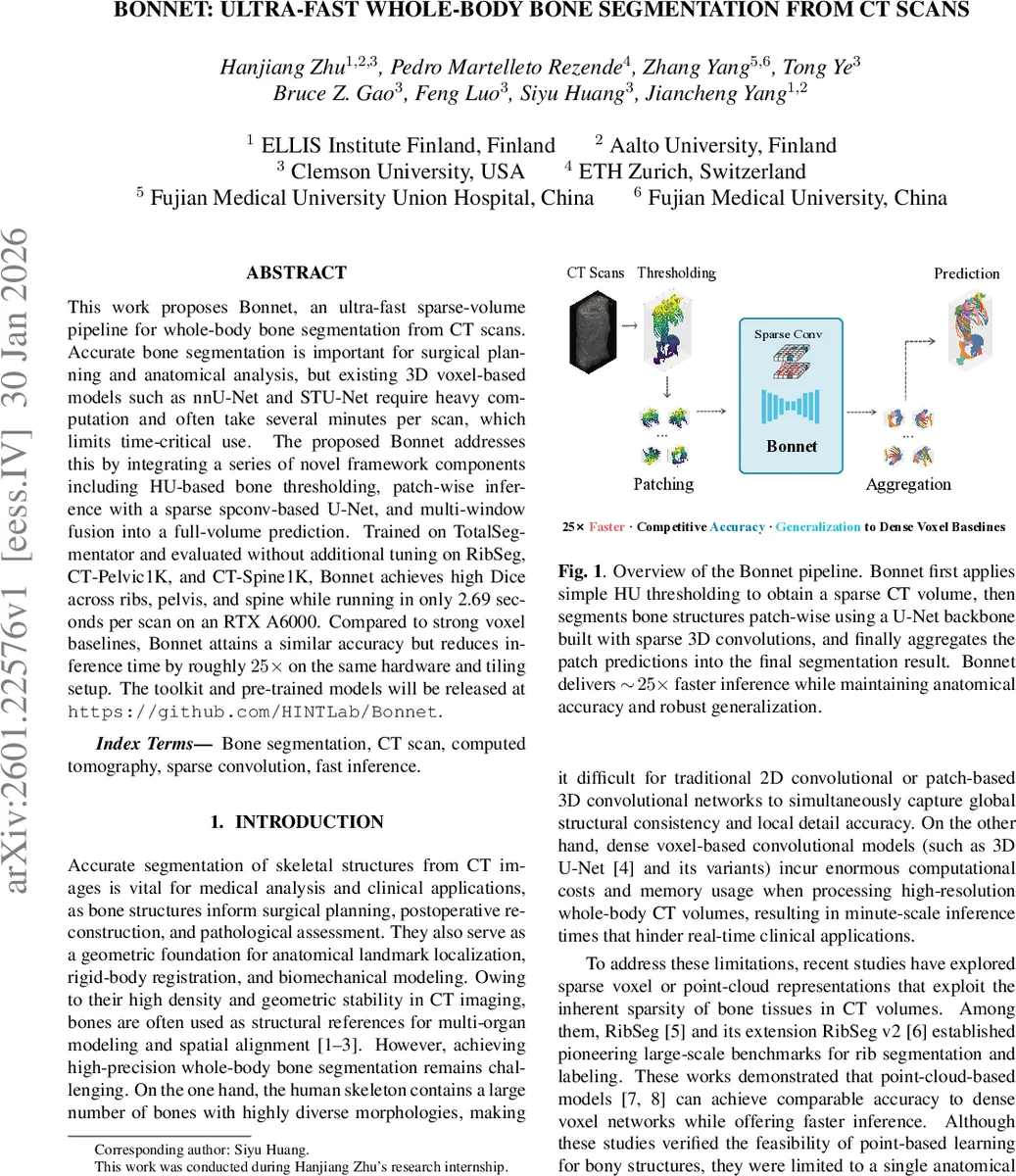

This work proposes Bonnet, an ultra-fast sparse-volume pipeline for whole-body bone segmentation from CT scans. Accurate bone segmentation is important for surgical planning and anatomical analysis, but existing 3D voxel-based models such as nnU-Net and STU-Net require heavy computation and often take several minutes per scan, which limits time-critical use. The proposed Bonnet addresses this by integrating a series of novel framework components including HU-based bone thresholding, patch-wise inference with a sparse spconv-based U-Net, and multi-window fusion into a full-volume prediction. Trained on TotalSegmentator and evaluated without additional tuning on RibSeg, CT-Pelvic1K, and CT-Spine1K, Bonnet achieves high Dice across ribs, pelvis, and spine while running in only 2.69 seconds per scan on an RTX A6000. Compared to strong voxel baselines, Bonnet attains a similar accuracy but reduces inference time by roughly 25x on the same hardware and tiling setup. The toolkit and pre-trained models will be released at https://github.com/HINTLab/Bonnet.

💡 Research Summary

The paper introduces Bonnet, a novel ultra‑fast pipeline for whole‑body bone segmentation from CT scans. Conventional 3‑D voxel‑based networks such as nnU‑Net and STU‑Net achieve high accuracy but suffer from prohibitive memory consumption and inference times of one minute or more when processing full‑body CT volumes. Bonnet overcomes these limitations by exploiting the intrinsic sparsity of bone tissue in CT images and by redesigning the inference workflow around sparse convolutions.

Key components:

- HU‑based thresholding – Voxels with Hounsfield Units between 200 and 3000 are retained, discarding the majority of soft‑tissue background. The remaining voxels are stored as a sparse tensor (coordinates, features, labels).

- Patch‑wise sparse inference – A lightweight U‑Net built on the spconv library processes 128³ sparse patches. The encoder alternates Sub‑Manifold Sparse Convolutions (which keep the original sparse support) and stride‑2 sparse convolutions for down‑sampling; the decoder uses inverse sparse convolutions for up‑sampling and symmetric skip connections. Each block is followed by SparseInstanceNorm and a LeakyReLU(0.01). The channel width is scaled by a factor of 4.0. A small MLP head produces logits for the K‑class bone label set.

- Multi‑window fusion – During testing, overlapping sliding windows (50 % overlap) are run independently. For each voxel, softmax scores from all windows covering it are combined using a Gaussian decay weight centered on each window (σ = 0.5). The final class is the arg‑max of the fused probability map. This strategy reduces boundary uncertainty and mitigates the effect of repeated coverage.

Training objective combines cross‑entropy with label smoothing (ε = 0.1) and a Soft Dice loss, summed with equal weight. This balances class‑imbalance and directly optimizes the Dice metric, which is crucial for thin structures such as ribs and vertebrae.

Experimental setup: Bonnet is trained from scratch on the public TotalSegmentator dataset (911 training, 228 validation, 89 test scans) using the described sparse pipeline. No external pre‑training or region‑specific fine‑tuning is performed. The same checkpoint is evaluated on three out‑of‑domain datasets: RibSeg (rib cage), CT‑Pelvic1K (pelvic region), and CT‑Spine1K (spine).

Results: On the internal TotalSegmentator test set, Bonnet achieves Dice scores of 94.25 % (ribs), 99.64 % (pelvis), 94.32 % (spine) and 94.91 % overall across 62 bone classes, while requiring only 2.69 seconds per scan on a single RTX A6000. Strong voxel baselines (nnU‑Net, STU‑Net variants) reach comparable Dice (up to 95.5 %) but need 66–78 seconds per scan, i.e., a ≈25× speed‑up.

Cross‑dataset evaluation shows robust generalization: Bonnet attains 85.91 % Dice on RibSeg, 96.80 % on CT‑Pelvic1K, and 93.63 % on CT‑Spine1K, despite never seeing these domains during training. Point‑based baselines (PointNet, PVCNN, SPVCNN) are either far slower or considerably less accurate (Dice ranging from 56 % to 85 %).

Qualitative visualizations confirm that Bonnet produces smooth, contiguous bone surfaces and preserves fine details such as thin ribs and vertebral arches, even on datasets with different acquisition parameters or patient populations.

Conclusions and impact: Bonnet demonstrates that a sparsity‑aware architecture can match dense voxel models in segmentation quality while delivering second‑scale inference. This makes whole‑body bone masks feasible for large‑scale retrospective studies, real‑time trauma triage, intra‑operative navigation, and any workflow where waiting a minute per scan is impractical. The authors plan to release the code and pretrained models, and to explore using the fast bone masks as structural priors for downstream tasks like organ localization, surgical planning, and biomechanical simulation.

Comments & Academic Discussion

Loading comments...

Leave a Comment