DELNet: Continuous All-in-One Weather Removal via Dynamic Expert Library

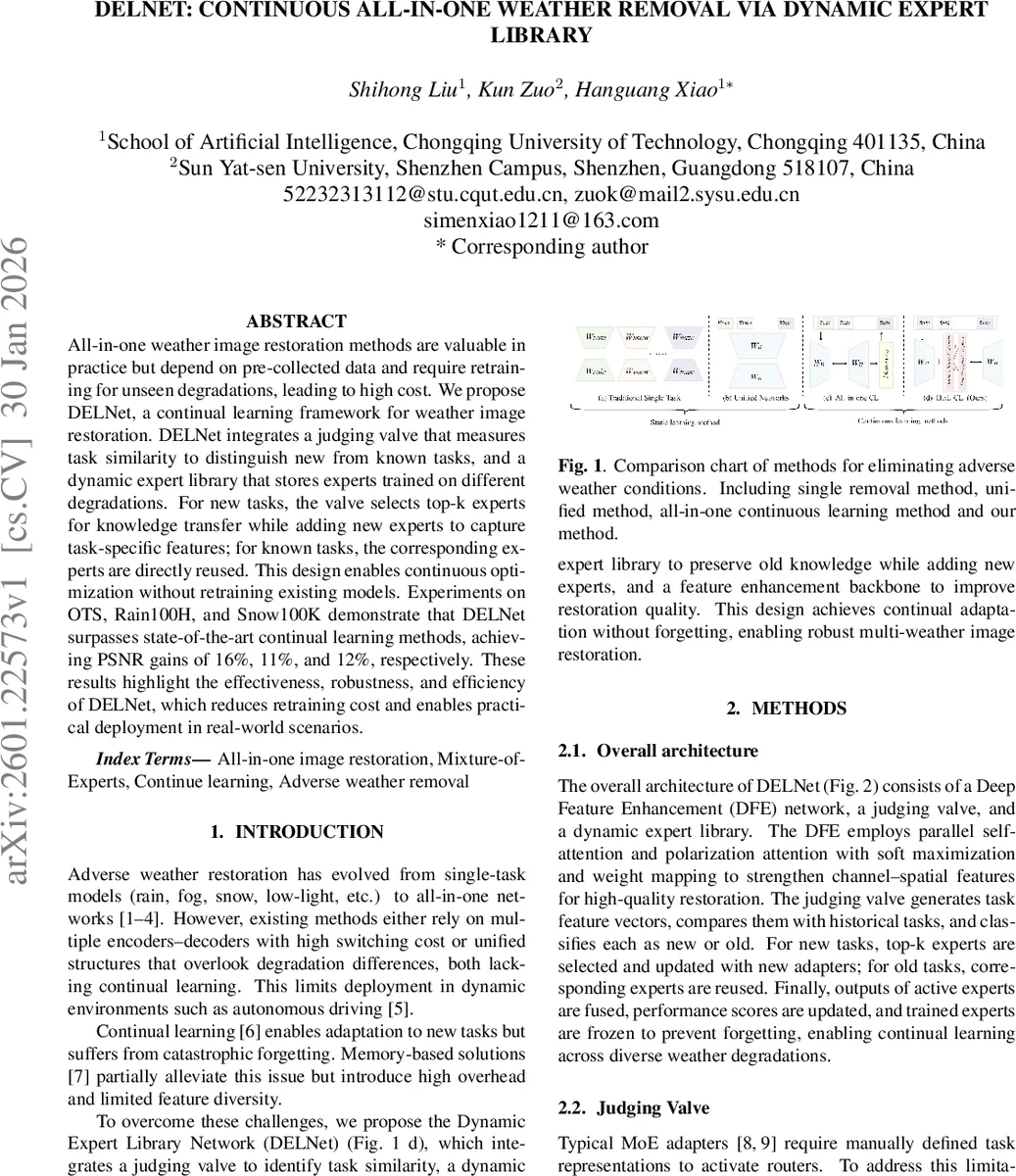

All-in-one weather image restoration methods are valuable in practice but depend on pre-collected data and require retraining for unseen degradations, leading to high cost. We propose DELNet, a continual learning framework for weather image restoration. DELNet integrates a judging valve that measures task similarity to distinguish new from known tasks, and a dynamic expert library that stores experts trained on different degradations. For new tasks, the valve selects top-k experts for knowledge transfer while adding new experts to capture task-specific features; for known tasks, the corresponding experts are directly reused. This design enables continuous optimization without retraining existing models. Experiments on OTS, Rain100H, and Snow100K demonstrate that DELNet surpasses state-of-the-art continual learning methods, achieving PSNR gains of 16%, 11%, and 12%, respectively. These results highlight the effectiveness, robustness, and efficiency of DELNet, which reduces retraining cost and enables practical deployment in real-world scenarios.

💡 Research Summary

The paper introduces DELNet, a continual‑learning framework designed for all‑in‑one adverse‑weather image restoration. Traditional all‑in‑one models either employ multiple encoder‑decoder branches with high switching cost or use a single unified architecture that ignores the intrinsic differences among weather degradations. Both approaches require retraining when a new degradation appears, which is costly and impractical for dynamic environments such as autonomous driving.

DELNet addresses these limitations through three tightly coupled components: (1) a Deep Feature Enhancement (DFE) module that fuses parallel self‑attention and polarization‑attention streams, applies soft‑maximization and weight mapping, and thereby strengthens channel‑spatial representations; (2) a Judging Valve (JV) that automatically extracts five statistical descriptors (mean, standard deviation, max, min, L2 norm) from the backbone features to form a task vector, computes a composite similarity score S_sum by weighted combination of cosine similarity, Euclidean similarity, and Pearson correlation (weights 0.5, 0.3, 0.2), and dynamically adjusts a similarity threshold based on the median and standard deviation of past scores; (3) a Dynamic Expert Library (DEL) that stores a set of lightweight adapters, each acting as an expert for a specific weather condition. Experts are evaluated by a joint performance‑usage score S_c = P_i × U_i, where P_i is an exponential moving average of recent loss‑based performance and U_i is the inverse of usage frequency. For a new task, the top‑k experts with highest S_c are selected, their outputs are fused using loss‑based temperature scaling (τ = 0.1), and a new adapter is trained and added to the library. For known tasks, the matching expert(s) are directly reused and all other experts are frozen, preventing catastrophic forgetting.

The loss function is a multi‑level objective: (i) L_sw combines L1 reconstruction with a contrastive loss for pixel‑level fidelity and semantic consistency; (ii) L_kd performs output‑level knowledge distillation between a frozen teacher (old model) and the current student, also using contrastive regularization; (iii) L_p projects encoder features into a shared latent space via an auto‑encoder and penalizes L1 distance between old and new projections; (iv) L_reg applies L2 regularization to adapter weights with a dynamic coefficient that grows with training steps; (v) L_div encourages expert specialization by penalizing low variance among the losses of active experts. The total loss is L_total = L_sw + αL_kd + λL_p + βL_reg + L_div, with α = 0.8, λ = 0.3, and β dynamically tuned.

Experiments are conducted on three benchmark datasets representing haze (RESIDE OTS), rain (Rain100H), and snow (Snow100K). Two training regimes are evaluated: a sequential task order (haze → rain → snow) and a multi‑task joint training setup. DELNet is compared against six continual‑learning baselines (EWC, MAS, LwF, POD, PIGWM, AFC) and five static all‑in‑one models (TransWeather, WGWS, WeatherDiff, ADSM, CLAIO). Results show that DELNet consistently outperforms all baselines, achieving PSNR improvements of 2.1–3.5 dB (≈11–16 % relative gain) and SSIM gains of 0.02–0.04 over the best continual‑learning methods, while also surpassing static models by up to 1.27 dB. Notably, DELNet requires only 5.6 M parameters, far fewer than the largest competitor (WeatherDiff, 83 M), demonstrating superior efficiency.

Ablation studies confirm the contribution of each component: removing the judging valve, the DFE module, or the expert library degrades performance substantially; the best trade‑off is achieved with 30 experts, balancing knowledge capacity and computational cost. Further analysis of task order sensitivity reveals that earlier tasks tend to retain higher quality, indicating that the dynamic threshold mechanism, while effective, could be refined for better order‑invariance.

In summary, DELNet introduces a novel combination of similarity‑driven task routing, a dynamically expandable mixture‑of‑experts, and a multi‑level loss hierarchy to enable continual, high‑quality weather image restoration without retraining existing models. The approach delivers state‑of‑the‑art performance, parameter efficiency, and practical applicability for real‑world systems that must adapt to ever‑changing adverse weather conditions.

Comments & Academic Discussion

Loading comments...

Leave a Comment