Keep Rehearsing and Refining: Lifelong Learning Vehicle Routing under Continually Drifting Tasks

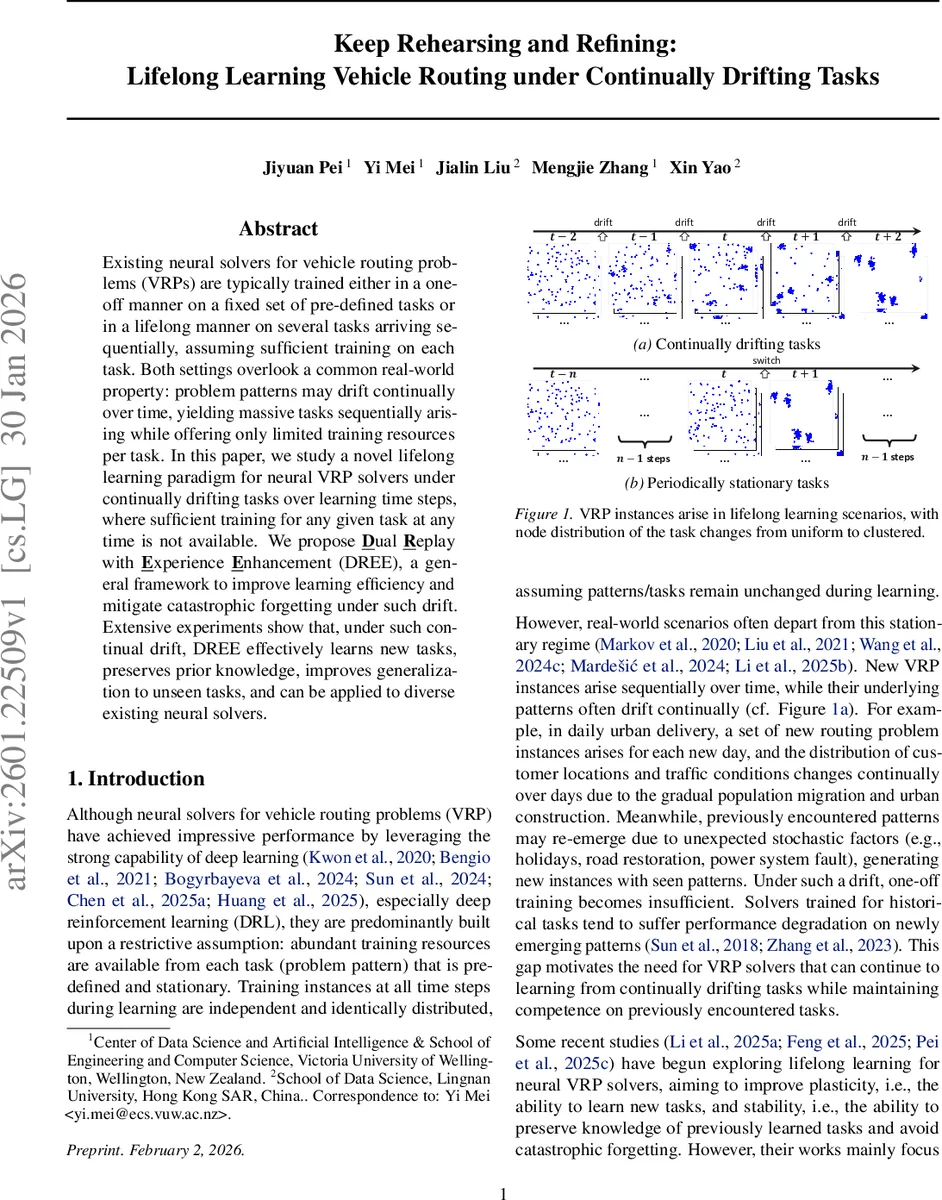

Existing neural solvers for vehicle routing problems (VRPs) are typically trained either in a one-off manner on a fixed set of pre-defined tasks or in a lifelong manner on several tasks arriving sequentially, assuming sufficient training on each task. Both settings overlook a common real-world property: problem patterns may drift continually over time, yielding massive tasks sequentially arising while offering only limited training resources per task. In this paper, we study a novel lifelong learning paradigm for neural VRP solvers under continually drifting tasks over learning time steps, where sufficient training for any given task at any time is not available. We propose Dual Replay with Experience Enhancement (DREE), a general framework to improve learning efficiency and mitigate catastrophic forgetting under such drift. Extensive experiments show that, under such continual drift, DREE effectively learns new tasks, preserves prior knowledge, improves generalization to unseen tasks, and can be applied to diverse existing neural solvers.

💡 Research Summary

The paper tackles a practical gap in neural vehicle routing problem (VRP) solvers: existing methods assume either a one‑off training on a fixed set of tasks or a lifelong learning setting where each task remains stationary long enough to receive ample training. In real‑world logistics, however, problem patterns (e.g., customer locations, traffic conditions, constraints) drift continuously over time, and only a few training steps are available for each emerging task. The authors formalize this “continually drifting scenario” as a sequence of tasks {P₁,…,P_T} where at every time step t a new task Pₜ is sampled, but the per‑task training budget is insufficient for conventional deep reinforcement learning (DRL) to converge.

To address this, they propose Dual Replay with Experience Enhancement (DREE), a general lifelong‑learning framework that simultaneously replays problem instances and the solver’s behaviors on those instances, while actively improving the quality of the stored experiences. DREE consists of three mechanisms:

- Problem Instance Replay (PIR) – stores raw VRP instances in a fixed‑size buffer (using reservoir sampling) and periodically re‑feeds them to the current model, reinforcing knowledge of past tasks.

- Behavior Replay (BR) – alongside each instance, the buffer keeps the solution construction trajectory (state‑action distributions). During training, a behavior‑imitation loss forces the model to reproduce these high‑quality behaviors, thus preserving procedural knowledge.

- Experience Enhancement (EE) – when the model discovers a better solution for a buffered instance while performing PIR, the stored behavior is updated on‑the‑fly. This continual refinement mitigates the “low‑quality experience” problem that arises when tasks receive only limited training.

The total loss combines the standard DRL objective L_DRL(θ, p) with weighted BR and EE terms (λ₁, λ₂ control their influence). By jointly optimizing these losses, DREE achieves a balance between plasticity (learning new drifting tasks) and stability (preventing catastrophic forgetting).

Experiments are conducted on two classic VRP variants: Capacitated VRP (CVRP) and the Traveling Salesman Problem (TSP). The authors compare DREE against recent lifelong‑learning baselines (Li et al., 2025a; Feng et al., 2025; Pei et al., 2025c) under the continually drifting regime. Evaluation metrics include: (i) adaptation speed to newly arriving tasks, (ii) retention of performance on previously seen tasks, and (iii) generalization to unseen tasks. Results show that DREE consistently outperforms baselines, delivering 10–15 % higher solution quality when training resources per task are scarce, and dramatically reducing forgetting. Moreover, DREE is architecture‑agnostic: it can be plugged into both construction‑based and improvement‑based neural solvers, demonstrating broad applicability.

The paper also discusses limitations. Maintaining two replay streams and performing EE updates incurs extra computational overhead, especially as buffer size grows. Extending the approach to highly constrained VRPs (e.g., time windows, heterogeneous fleets) may require more sophisticated behavior representations and loss designs. Future work is suggested on memory compression, multi‑modal experience integration (e.g., real‑time traffic forecasts), and asynchronous buffer updates to further improve efficiency.

In summary, DREE offers the first systematic solution for lifelong neural VRP learning under continuous task drift, effectively marrying dual replay with dynamic experience refinement to achieve robust, adaptable, and generalizable routing performance in realistic, resource‑constrained environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment