Dual-Phase Federated Deep Unlearning via Weight-Aware Rollback and Reconstruction

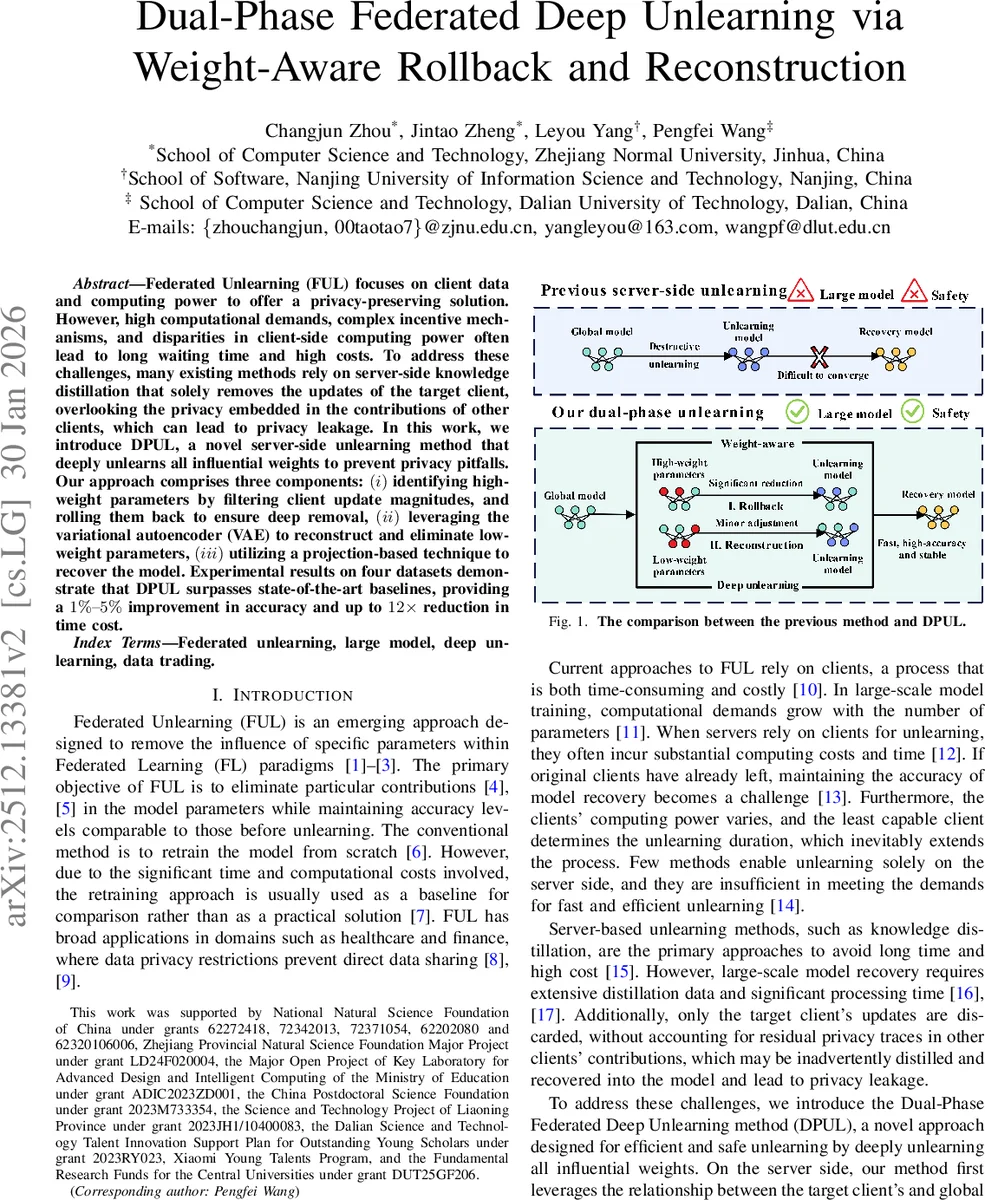

Federated Unlearning (FUL) focuses on client data and computing power to offer a privacy-preserving solution. However, high computational demands, complex incentive mechanisms, and disparities in client-side computing power often lead to long times and higher costs. To address these challenges, many existing methods rely on server-side knowledge distillation that solely removes the updates of the target client, overlooking the privacy embedded in the contributions of other clients, which can lead to privacy leakage. In this work, we introduce DPUL, a novel server-side unlearning method that deeply unlearns all influential weights to prevent privacy pitfalls. Our approach comprises three components: (i) identifying high-weight parameters by filtering client update magnitudes, and rolling them back to ensure deep removal. (ii) leveraging the variational autoencoder (VAE) to reconstruct and eliminate low-weight parameters. (iii) utilizing a projection-based technique to recover the model. Experimental results on four datasets demonstrate that DPUL surpasses state-of-the-art baselines, providing a 1%-5% improvement in accuracy and up to 12x reduction in time cost.

💡 Research Summary

The paper tackles the problem of federated unlearning (FUL), i.e., removing the influence of a specific client’s data from a globally trained model while preserving overall performance. Existing approaches either retrain from scratch, which is prohibitively expensive, or rely on server‑side knowledge distillation that discards only the target client’s updates. The latter leaves residual traces in the contributions of other clients, creating a privacy leakage risk. To overcome these limitations, the authors propose DPUL (Dual‑Phase Federated Deep Unlearning), a fully server‑centric framework that separates the removal of high‑impact (“high‑weight”) parameters from low‑impact (“low‑weight”) ones, and then restores model accuracy through a lightweight projection step.

Method Overview

-

Weight‑Aware Rollback (High‑Weight Removal).

The server stores the cumulative global updates ΔM and the cumulative updates contributed by the target client ΔU. For each parameter i, if |ΔU_i|·λ exceeds |ΔM_u_i| (where ΔM_u = ΔM \ ΔU and λ is a hyper‑parameter), the parameter is flagged as high‑weight. All subsequent updates to that parameter are rolled back to the most recent low‑weight state, effectively erasing the strong signal contributed by the leaving client. This process is formalized in Algorithm 1 and constitutes the “memory rollback” phase. -

Reconstruction Unlearning (Low‑Weight Removal).

After high‑weight parameters are eliminated, the remaining low‑weight parameters may still encode subtle private information. The authors address this by training a set of β‑Variational Autoencoders (β‑VAEs). Both the original global model M and the rolled‑back model M′ are split into I slices; each slice i is fed to a dedicated VAE V_i. Training data consist of paired snapshots (M_t(i), M′_t(i)) across all FL rounds, and the loss combines reconstruction error with a KL‑divergence term weighted by β. A multi‑head (parallel) training scheme accelerates convergence. Once trained, each V_i receives the final global parameters M_T and outputs a reconstructed slice M_u(i). Concatenating all slices yields the unlearned model M_u. -

Projected Boost Recovery (Accuracy Restoration).

To regain any accuracy loss, the server records per‑round accuracies L_acc. It selects the round t whose accuracy is closest to that of M_u and uses a small auxiliary dataset D_b to perform a projection‑based fine‑tuning. The optimization minimizes a loss L_b over D_b, computes a gradient‑scaled update ΔM_u*, and applies it to M_u, producing the recovered model M_r. This step requires far fewer data and epochs than full knowledge‑distillation pipelines.

Experimental Validation

DPUL is evaluated on four datasets (CIFAR‑10, FEMNIST, CelebA, and a large‑scale image set) using multiple network architectures (ResNet, MobileNet, etc.). Compared to state‑of‑the‑art baselines—including retraining, server‑side distillation, gradient‑ascent unlearning, and pruning‑based methods—DPUL achieves 1 %–5 % higher test accuracy while reducing unlearning time by up to 12×. Ablation studies confirm that both the rollback and VAE reconstruction components contribute significantly to privacy removal and efficiency.

Strengths

- Fully Server‑Side: Eliminates the need for client participation, cutting communication overhead and avoiding the “slowest client” bottleneck.

- Two‑Tier Parameter Treatment: Distinguishes between high‑impact and low‑impact updates, allowing aggressive rollback for the former and learned reconstruction for the latter.

- Scalable Multi‑Head VAE: Parallel slice‑wise training reduces VAE training time, making the approach viable for large models.

- Lightweight Recovery: Projection‑based fine‑tuning uses a tiny dataset, sidestepping the massive data requirements of traditional knowledge distillation.

Limitations and Open Questions

- Server Storage Overhead: Maintaining full histories of ΔM and ΔU for all rounds can be memory‑intensive, especially for very deep models or long training horizons.

- Hyper‑Parameter Sensitivity: The performance hinges on λ (high‑weight threshold), β (VAE KL weight), slice count I, and the size of D_b. The paper provides empirical settings but lacks a systematic sensitivity analysis.

- Privacy Guarantees: While the method intuitively removes client influence, formal privacy metrics (e.g., differential privacy bounds) are not derived, leaving the exact leakage risk unquantified.

- VAE Reconstruction Quality: The ability of β‑VAEs to “unlearn” information depends on the capacity of the encoder‑decoder pair; overly expressive VAEs might inadvertently preserve traces, whereas overly constrained ones could degrade model utility.

- Recovery Dataset Representativeness: The small auxiliary dataset must be sufficiently representative of the global distribution; otherwise, the projection step may introduce bias or insufficiently restore performance.

Conclusion

DPUL introduces a novel, fully server‑centric pipeline for federated unlearning that combines weight‑aware rollback, variational auto‑encoder reconstruction, and projection‑based recovery. Empirical results demonstrate substantial gains in both accuracy preservation and computational efficiency over existing methods. By addressing storage overhead, providing formal privacy analyses, and further automating hyper‑parameter selection, future work can solidify DPUL as a practical standard for privacy‑compliant model maintenance in federated learning ecosystems.

Comments & Academic Discussion

Loading comments...

Leave a Comment